Benoît Frénay

Towards Photonic Band Diagram Generation with Transformer-Latent Diffusion Models

Oct 02, 2025

Abstract: Photonic crystals enable fine control over light propagation at the nanoscale and thus play a central role in the development of photonic and quantum technologies. Photonic band diagrams (BDs) are a key tool for investigating light propagation in such inhomogeneous structured materials. However, computing BDs requires solving Maxwell's equations across many configurations, which is computationally expensive, especially when embedded in optimization loops, for example in inverse design techniques. To address this challenge, we introduce the first approach for BD generation based on diffusion models, with the potential to later generalize and scale to arbitrary three-dimensional structures. Our method couples a transformer encoder, which extracts contextual embeddings from the input structure, with a latent diffusion model that generates the corresponding BD. In addition, we provide insights into why transformers and diffusion models are well suited to capture the complex interference and scattering phenomena inherent to photonics, paving the way for new surrogate modeling strategies in this domain.
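
For readers unfamiliar with latent diffusion, the sketch below shows the standard DDPM forward (noising) process and noise-prediction loss applied to a latent vector `z0`, conditioned on an embedding `c` that, in the described method, would come from the transformer encoder. The linear beta schedule, the toy sizes, and the names `q_sample`, `diffusion_loss`, and `zero_denoiser` are assumptions for illustration, not the authors' implementation.

```python
import numpy as np

def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule (an assumed, commonly used DDPM choice)."""
    return np.linspace(beta_start, beta_end, T)

def q_sample(z0, t, alpha_bar, eps):
    """Closed-form forward noising: z_t = sqrt(abar_t) * z0 + sqrt(1 - abar_t) * eps."""
    return np.sqrt(alpha_bar[t]) * z0 + np.sqrt(1.0 - alpha_bar[t]) * eps

def diffusion_loss(denoiser, z0, c, t, alpha_bar, rng):
    """Noise-prediction MSE for one latent z0 conditioned on embedding c.

    `denoiser(z_t, t, c)` is a hypothetical network that predicts the added noise.
    """
    eps = rng.standard_normal(z0.shape)
    z_t = q_sample(z0, t, alpha_bar, eps)
    eps_hat = denoiser(z_t, t, c)
    return float(np.mean((eps - eps_hat) ** 2))

def zero_denoiser(z_t, t, c):
    """Placeholder network that always predicts zero noise."""
    return np.zeros_like(z_t)

T = 1000
betas = linear_beta_schedule(T)
alpha_bar = np.cumprod(1.0 - betas)   # abar_t = prod_{s <= t} (1 - beta_s)

rng = np.random.default_rng(0)
z0 = rng.standard_normal(16)          # toy latent code of a band diagram
c = rng.standard_normal(8)            # toy structure embedding
print(diffusion_loss(zero_denoiser, z0, c, t=500, alpha_bar=alpha_bar, rng=rng))
```

Generation runs this process in reverse, starting from pure noise and denoising step by step under the conditioning `c`; that sampling loop is omitted here.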

Towards a Trustworthy Anomaly Detection for Critical Applications through Approximated Partial AUC Loss

Feb 17, 2025

Abstract: Anomaly Detection is a crucial step for critical applications such as those in the industrial, medical, or cybersecurity domains. These sectors share a common requirement: different types of classification errors must be handled differently. Indeed, while false positives are acceptable, false negatives are not, because each one reflects a missed detection of a quality issue, a disease, or a cyber threat. To fulfill this requirement, we propose a method that dynamically applies a trustworthy approximated partial AUC ROC loss (tapAUC). A binary classifier is trained to optimize the specific range of the ROC curve in which the True Positive Rate (TPR) reaches 100%, while minimizing the False Positive Rate (FPR). The optimal threshold that does not trigger any false negative is then kept and used at test time. Averaged across 6 datasets, the results show a TPR of 92.52% at an FPR of 20.43%, representing a TPR improvement of 4.3% for an FPR cost of 12.2% compared with other state-of-the-art methods. The code is available at https://github.com/ArnaudBougaham/tapAUC.
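
The threshold-selection step described above can be sketched as follows, assuming the classifier outputs higher scores for anomalies and flags a sample when its score is at or above the threshold. Under that convention, the largest threshold with no false negatives is the lowest score among the anomalies, and the resulting FPR is the share of normal samples scoring at or above it. The function name `zfn_threshold` and the toy scores are illustrative; the partial-AUC training loss itself is not reproduced here.

```python
import numpy as np

def zfn_threshold(scores, labels):
    """Return (threshold, tpr, fpr) for the largest threshold with zero false negatives.

    scores: anomaly scores (higher = more anomalous); labels: 1 = anomaly, 0 = normal.
    A sample is flagged as an anomaly when score >= threshold.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=int)
    threshold = scores[labels == 1].min()   # lowest-scored anomaly must still be flagged
    flagged = scores >= threshold
    tpr = flagged[labels == 1].mean()       # 1.0 by construction
    fpr = flagged[labels == 0].mean()       # cost paid for zero false negatives
    return float(threshold), float(tpr), float(fpr)

scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9, 0.5]
labels = [0, 0, 1, 1, 0, 1, 0]
print(zfn_threshold(scores, labels))  # -> (0.35, 1.0, 0.5)
```

A better-trained classifier pushes normal scores below the lowest anomaly score, which lowers the FPR at this fixed 100% TPR operating point; that is the part of the ROC curve a partial-AUC objective targets.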

On the effectiveness of Rotation-Equivariance in U-Net: A Benchmark for Image Segmentation

Dec 12, 2024

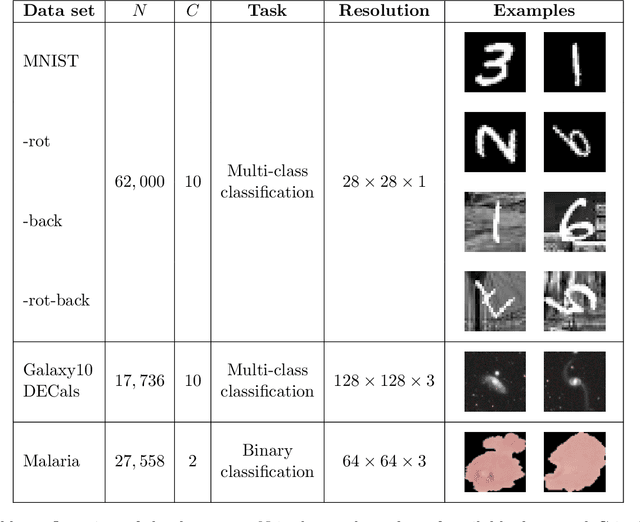

Abstract: Numerous studies have recently focused on incorporating different variations of equivariance into Convolutional Neural Networks (CNNs). In particular, rotation equivariance has gathered significant attention due to its relevance in many applications related to medical imaging, microscopy imaging, satellite imaging, industrial tasks, etc. While prior research has primarily focused on enhancing classification tasks with rotation-equivariant CNNs, their impact on more complex architectures, such as U-Net for image segmentation, remains scarcely explored. Indeed, previous works integrating rotation equivariance into the U-Net architecture have focused on specific applications with a limited scope. In contrast, this paper aims to provide a more exhaustive evaluation of rotation-equivariant U-Nets for image segmentation across a broader range of tasks. We benchmark them against standard U-Net architectures, assessing improvements in terms of performance and sustainability (i.e., computational cost). Our evaluation focuses on datasets in which the orientation of the objects of interest in the image is arbitrary (e.g., Kvasir-SEG), but also on more standard segmentation datasets (such as COCO-Stuff), so as to explore the wider applicability of rotation equivariance beyond tasks that clearly call for it. The main contribution of this work is to provide insights into the trade-offs and advantages of integrating rotation equivariance into segmentation tasks.
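
Rotation equivariance means that rotating the input and then applying a layer gives the same result as applying the layer and then rotating its output, f(R x) = R f(x). The NumPy check below illustrates this for the discrete case of 90° rotations, assuming a single-channel convolution whose kernel is itself invariant to 90° rotations. This is a deliberately simple construction chosen for illustration, not one of the equivariant layers benchmarked in the paper; the helper names are hypothetical.

```python
import numpy as np

def conv2d_same(x, k):
    """2D cross-correlation with zero padding; output has the same shape as x."""
    kh, kw = k.shape
    ph, pw = kh // 2, kw // 2
    xp = np.pad(x, ((ph, ph), (pw, pw)))
    out = np.zeros(x.shape, dtype=float)
    for i in range(x.shape[0]):
        for j in range(x.shape[1]):
            out[i, j] = np.sum(xp[i:i + kh, j:j + kw] * k)
    return out

def is_rot90_equivariant(layer, x):
    """True if layer(rot90(x, r)) == rot90(layer(x), r) for r = 0..3."""
    return all(
        np.allclose(layer(np.rot90(x, r)), np.rot90(layer(x), r)) for r in range(4)
    )

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 8))                                      # square toy image
iso_kernel = np.array([[1., 2., 1.], [2., 4., 2.], [1., 2., 1.]])    # rot90-invariant
shift_kernel = np.array([[0., 1., 0.], [0., 0., 0.], [0., 0., 0.]])  # not invariant

print(is_rot90_equivariant(lambda z: conv2d_same(z, iso_kernel), x))    # True
print(is_rot90_equivariant(lambda z: conv2d_same(z, shift_kernel), x))  # False
```

Restricting every kernel to be rotation-invariant is very limiting, which is why equivariant architectures typically use richer constructions (e.g., group convolutions) that keep expressive, orientation-aware filters while preserving the f(R x) = R f(x) property.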

SO(2) and O(2) Equivariance in Image Recognition with Bessel-Convolutional Neural Networks

Apr 18, 2023

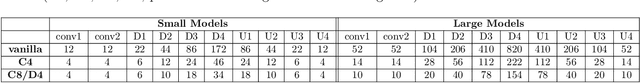

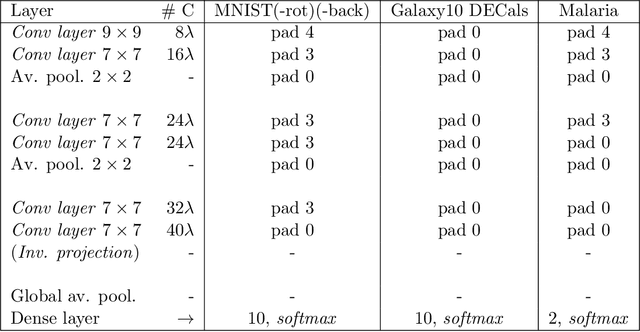

Abstract: It has long been shown that exploiting equivariances can be highly beneficial when solving image analysis tasks. For example, the superiority of convolutional neural networks (CNNs) over dense networks mainly comes from an elegant exploitation of translation equivariance. Patterns can appear at arbitrary positions, and convolutions take this into account to achieve translation-invariant operations through weight sharing. Nevertheless, images often involve other symmetries that can also be exploited. This is the case for rotations and reflections, which have drawn particular attention and led to the development of multiple equivariant CNN architectures. Among these methods, Bessel-convolutional neural networks (B-CNNs) exploit a particular decomposition based on Bessel functions to modify the key operation between images and filters and make it, by design, equivariant to the entire continuous set of planar rotations. In this work, the mathematical developments of B-CNNs are presented along with several improvements, including the incorporation of reflection and multi-scale equivariances. An extensive study is carried out to assess the performance of B-CNNs compared to other methods. Finally, we emphasize the theoretical advantages of B-CNNs by giving more insights and in-depth mathematical details.
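
Why a Bessel-based decomposition suits continuous rotations can be seen from a standard Fourier-Bessel expansion of an image patch in polar coordinates $(r, \theta)$ on a disk of radius $R$. The notation ($a_{\nu,k}$ for the coefficients, $j_{\nu,k}$ for the $k$-th positive root of $J_\nu$) is ours, and this is only a sketch of the general idea, not the exact formulation used in B-CNNs:

```latex
\begin{aligned}
f(r,\theta) &= \sum_{\nu\in\mathbb{Z}} \sum_{k\ge 1} a_{\nu,k}\,
  J_\nu\!\left(\frac{j_{\nu,k}\, r}{R}\right) e^{i\nu\theta} \\
% rotating the patch by an arbitrary angle \alpha
f_\alpha(r,\theta) = f(r,\theta-\alpha) &= \sum_{\nu\in\mathbb{Z}} \sum_{k\ge 1}
  \left(a_{\nu,k}\, e^{-i\nu\alpha}\right)
  J_\nu\!\left(\frac{j_{\nu,k}\, r}{R}\right) e^{i\nu\theta} \\
% a rotation therefore only multiplies each coefficient by a phase
a_{\nu,k} &\;\mapsto\; a_{\nu,k}\, e^{-i\nu\alpha},
  \qquad \left|a_{\nu,k}\, e^{-i\nu\alpha}\right| = \left|a_{\nu,k}\right|
\end{aligned}
```

Because the moduli $|a_{\nu,k}|$ do not depend on $\alpha$, quantities built from them are unchanged by any planar rotation, not only by multiples of 90°; this is the kind of property a Bessel-based operation can exploit by design.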

Industrial and Medical Anomaly Detection Through Cycle-Consistent Adversarial Networks

Feb 10, 2023

Abstract: In this study, a new Anomaly Detection (AD) approach for real-world images is proposed. This method leverages the theoretical strengths of unsupervised learning and the data availability of both the normal and abnormal classes. AD is often formulated as an unsupervised task, motivated by the frequently imbalanced nature of the datasets and by the challenge of capturing the entirety of the abnormal class. Such methods rely only on normal images during training, which are then reconstructed, for instance, through an autoencoder architecture. However, the information contained in the abnormal data is also valuable for this reconstruction. Indeed, the model would be able to identify its weaknesses by better learning how to transform an abnormal (or normal) image into a normal (or abnormal) image. Each of these tasks could help the entire model learn with higher precision than a single normal-to-normal reconstruction. To address this challenge, the proposed method uses Cycle-Generative Adversarial Networks (Cycle-GANs) for abnormal-to-normal translation. To the best of our knowledge, this is the first time that Cycle-GANs have been studied for this purpose. After an input image has been reconstructed by the normal generator, an anomaly score describes the differences between the input and reconstructed images. Based on a threshold set with a business quality constraint, the input image is then flagged as normal or not. The proposed method is evaluated on industrial and medical images, including cases with balanced datasets and others with as few as 30 abnormal images. The results demonstrate accurate performance and good generalization for all kinds of anomalies, specifically for texture-shaped images, where the method reaches an average accuracy of 97.2% (85.4% with an additional zero-false-negative constraint).
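
The scoring pipeline described above can be sketched as follows. It assumes that trained generators are available as callables `to_normal` and `to_abnormal` (hypothetical names, replaced here by toy functions), that the anomaly score is the mean absolute pixel difference between an image and its normal reconstruction (one possible choice), and that the business quality constraint is modelled as "no known abnormal image may be missed". The cycle-consistency term shown is the standard Cycle-GAN L1 loss, not necessarily the exact training objective of the paper.

```python
import numpy as np

def cycle_consistency_loss(x_normal, x_abnormal, to_normal, to_abnormal):
    """Standard Cycle-GAN L1 cycle loss for one normal and one abnormal image."""
    loss_n = np.mean(np.abs(to_normal(to_abnormal(x_normal)) - x_normal))
    loss_a = np.mean(np.abs(to_abnormal(to_normal(x_abnormal)) - x_abnormal))
    return float(loss_n + loss_a)

def anomaly_score(x, to_normal):
    """Mean absolute difference between an image and its 'normal' reconstruction."""
    return float(np.mean(np.abs(x - to_normal(x))))

def zfn_threshold(abnormal_scores):
    """Largest threshold that still flags every known abnormal image (score >= threshold)."""
    return min(abnormal_scores)

# Toy stand-ins for trained generators (hypothetical): anomalies are pixels above 0.5
def to_normal(img):
    return np.clip(img, 0.0, 0.5)

def to_abnormal(img):
    return img  # identity placeholder

normal_imgs = [np.full((4, 4), 0.3), np.full((4, 4), 0.4)]
abnormal_imgs = [np.pad(np.full((2, 2), 0.9), 1, constant_values=0.3)]

print(cycle_consistency_loss(normal_imgs[0], abnormal_imgs[0], to_normal, to_abnormal))
t = zfn_threshold([anomaly_score(x, to_normal) for x in abnormal_imgs])
flags = [anomaly_score(x, to_normal) >= t for x in normal_imgs + abnormal_imgs]
print(t, flags)  # normal images score 0.0; only the abnormal one is flagged
```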

Composite Score for Anomaly Detection in Imbalanced Real-World Industrial Dataset

Nov 25, 2022

Abstract: In recent years, the industrial sector has evolved towards its fourth revolution. The quality control domain is particularly interested in advanced machine learning for computer vision anomaly detection. Nevertheless, several challenges have to be faced, including imbalanced datasets, image complexity, and the zero-false-negative (ZFN) constraint needed to guarantee the high-quality requirement. This paper illustrates a use case for an industrial partner, where Printed Circuit Board Assembly (PCBA) images are first reconstructed with a Vector Quantized Generative Adversarial Network (VQGAN) trained on normal products. Then, several multi-level metrics are extracted from a few normal and abnormal images, highlighting anomalies through reconstruction differences. Finally, a classifier is trained to build a composite anomaly score from the extracted metrics. This three-step approach is applied to the public MVTec-AD datasets and to the partner PCBA dataset, where it achieves a regular accuracy of 95.69%, and of 87.93% under the ZFN constraint.
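
The second and third steps (metrics computed from reconstruction differences, then a classifier that combines them into a composite score) can be sketched as below, taking the VQGAN reconstruction as given. The three metrics, the 2x2 average pooling used as a second "level", the plain logistic regression fitted by gradient descent, and the helper names are all illustrative assumptions rather than the paper's exact choices; the final line applies a ZFN threshold on the training scores.

```python
import numpy as np

def extract_metrics(x, x_rec):
    """Illustrative reconstruction-difference metrics at two levels of resolution."""
    diff = np.abs(x - x_rec)
    h, w = diff.shape                                   # assumes even height and width
    pooled = diff.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))  # 2x2 average pooling
    return np.array([diff.mean(), diff.max(), pooled.max()])

def fit_logistic(X, y, lr=0.5, steps=2000):
    """Plain logistic regression fitted by batch gradient descent."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        w -= lr * X.T @ (p - y) / len(y)
        b -= lr * np.mean(p - y)
    return w, b

def composite_score(X, w, b):
    """Probability-like composite anomaly score in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

rng = np.random.default_rng(0)
features, labels = [], []
for label in [0] * 10 + [1] * 10:
    clean = rng.random((8, 8))
    x_rec = clean + rng.normal(0.0, 0.02, (8, 8))      # near-perfect reconstruction
    x = clean.copy()
    if label == 1:
        x[2:4, 2:4] += 0.8                               # defect the model does not reproduce
    features.append(extract_metrics(x, x_rec))
    labels.append(label)
X, y = np.array(features), np.array(labels)

w, b = fit_logistic(X, y)
scores = composite_score(X, w, b)
threshold = scores[y == 1].min()                         # zero-false-negative (ZFN) threshold
accuracy = float(np.mean((scores >= threshold) == (y == 1)))
print(threshold, accuracy)
```

In practice the ZFN threshold would be set on held-out data; choosing it on the training scores, as here, only shows the mechanics.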

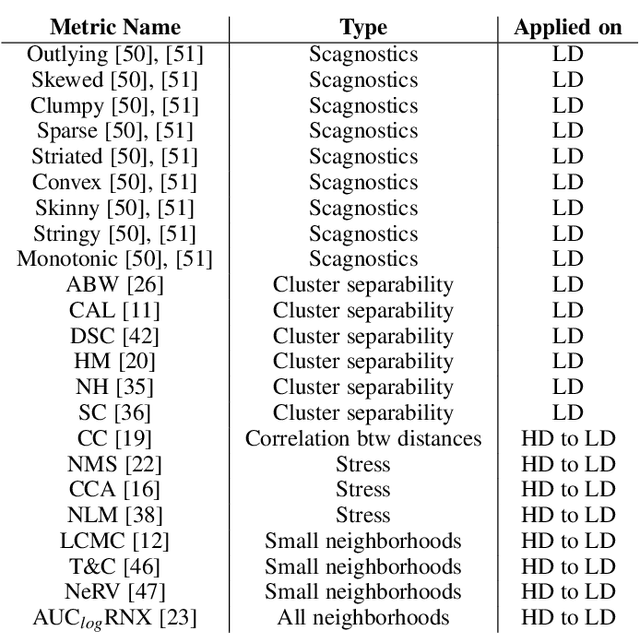

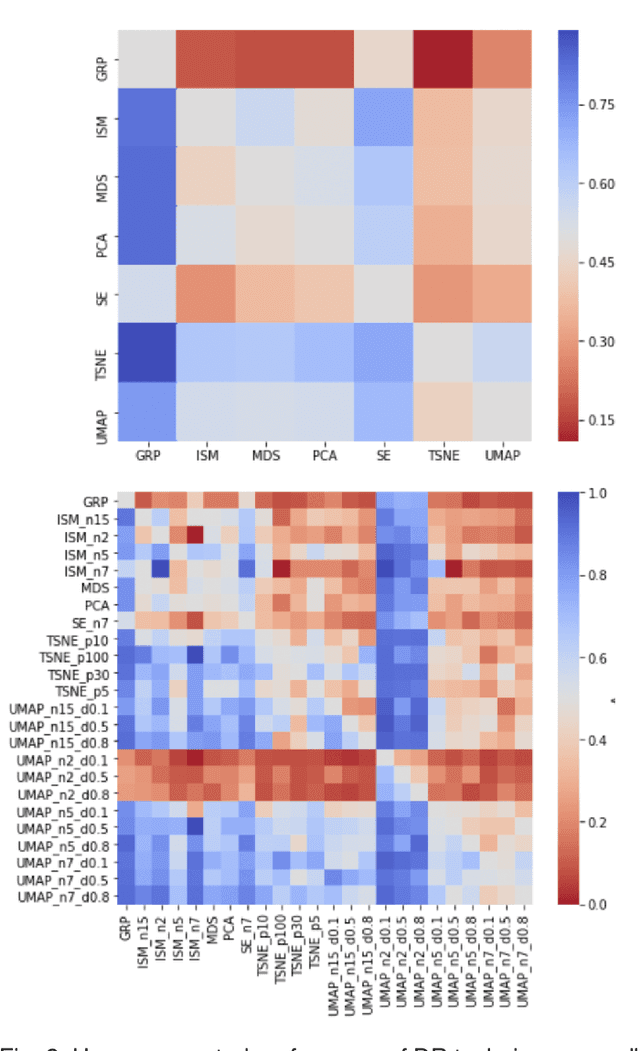

DumbleDR: Predicting User Preferences of Dimensionality Reduction Projection Quality

May 19, 2021

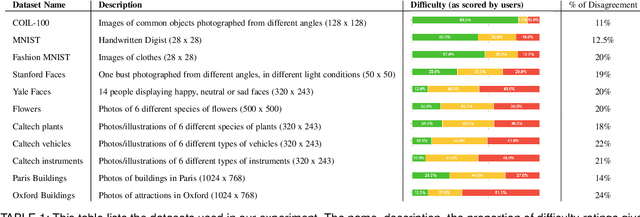

Abstract: A plethora of dimensionality reduction techniques have emerged over the past decades, leaving researchers and analysts with a wide variety of choices for reducing their data, all the more so given that some techniques come with additional parametrization (e.g., t-SNE, UMAP). Recent studies show that people often use dimensionality reduction as a black box, regardless of the specific properties the method itself preserves. Hence, 2D projections are usually evaluated and compared qualitatively, by setting them side by side and letting human judgment decide which one is best. In this work, we propose a quantitative way of evaluating projections that nonetheless places human perception at the center. We run a comparative study in which we ask people to select 'good' and 'misleading' views among scatterplots of low-level projections of image datasets, simulating the way people usually select projections. We use the study data as labels for a set of quality metrics whose purpose is to discover and quantify what exactly people are looking for when deciding between projections. We then use this proxy for human judgment to rank projections on new datasets, explain why they are relevant, and quantify the degree of subjectivity in the projections selected.
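
As an example of the kind of projection quality metric such a study can relate to human choices, the sketch below computes a k-nearest-neighbour preservation score: the average fraction of each point's k nearest neighbours in the original space that remain among its k nearest neighbours in the 2D projection. This metric, the helper names, and the use of PCA as the example projection are our illustrative choices, not necessarily the metrics used in DumbleDR.

```python
import numpy as np

def knn_indices(X, k):
    """Indices of the k nearest neighbours of each row, excluding the row itself."""
    d = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)  # squared distances
    np.fill_diagonal(d, np.inf)
    return np.argsort(d, axis=1)[:, :k]

def neighborhood_preservation(X_high, X_2d, k=5):
    """Mean fraction of each point's high-dimensional k-NN kept in the 2D projection."""
    nn_high = knn_indices(X_high, k)
    nn_low = knn_indices(X_2d, k)
    overlaps = [len(set(a) & set(b)) / k for a, b in zip(nn_high, nn_low)]
    return float(np.mean(overlaps))

rng = np.random.default_rng(0)
Z = rng.standard_normal((100, 2))                      # hidden 2D structure
X = Z @ rng.standard_normal((2, 10)) + 0.01 * rng.standard_normal((100, 10))

Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
proj_pca = Xc @ Vt[:2].T                               # PCA projection to 2D
proj_random = rng.standard_normal((100, 2))            # unrelated scatterplot

print(neighborhood_preservation(X, proj_pca), neighborhood_preservation(X, proj_random))
```

A score near 1 means local structure is kept; human preferences may, however, depend on other visual cues (clusters, outliers, spread), which is precisely what a study of user choices can help quantify.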

Impact of Legal Requirements on Explainability in Machine Learning

Jul 10, 2020

Abstract: The requirements on explainability imposed by European laws, and their implications for machine learning (ML) models, are not always clear. From that perspective, our research analyzes the explanation obligations imposed on private and public decision-making, and how they can be implemented by machine learning techniques.

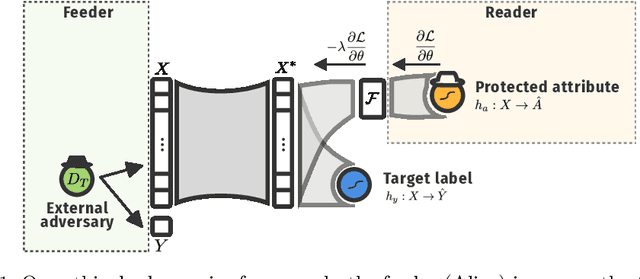

Ethical Adversaries: Towards Mitigating Unfairness with Adversarial Machine Learning

May 14, 2020

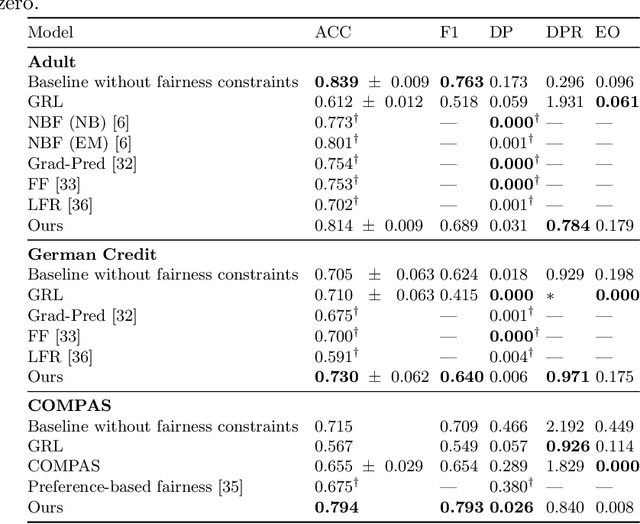

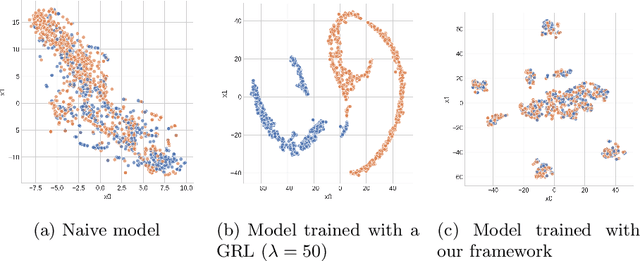

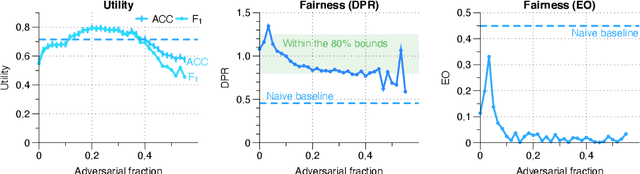

Abstract: Machine learning is being integrated into a growing number of critical systems with far-reaching impacts on society. Unexpected behaviour and unfair decision processes are coming under increasing scrutiny due to this widespread use and also due to theoretical considerations. Individuals, as well as organisations, notice, test, and criticize unfair results to hold model designers and deployers accountable. This requires transparency and the possibility to describe, measure and, ideally, prove the 'fairness' of a system. This involves concepts such as fairness, transparency and accountability that will hopefully make machine learning more amenable to criticism and improvement proposals towards the fulfilment of societal goals. We concentrate on fairness, taking into account that both the transparency of neural networks and the accountability of actors and systems will require further methods. We offer a new framework that assists in mitigating unfair representations in the dataset used for training. Our framework relies on adversaries to improve fairness. First, it evaluates a model for unfairness w.r.t. protected attributes and ensures that an adversary cannot guess such attributes for a given outcome, by optimizing the model's parameters for fairness while limiting utility losses. Second, the framework leverages evasion attacks from adversarial machine learning to perform adversarial retraining with new examples unseen by the model. These two steps are iteratively applied until a significant improvement in fairness is obtained. We evaluated our framework on well-studied datasets in the fairness literature, including COMPAS, where it can surpass other approaches in terms of demographic parity, equality of opportunity, and the model's utility. We also illustrate the subtle difficulties that arise when mitigating unfairness and highlight how our framework can help model designers.
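
The two fairness criteria mentioned above can be computed from binary predictions, true labels, and a binary protected attribute as sketched below. We use the common difference formulation, where 0 means parity between groups; the function names and toy arrays are illustrative, and the paper may report these criteria in a different form.

```python
import numpy as np

def demographic_parity_diff(y_pred, group):
    """|P(y_hat = 1 | A = 0) - P(y_hat = 1 | A = 1)|."""
    y_pred, group = np.asarray(y_pred), np.asarray(group)
    return float(abs(y_pred[group == 0].mean() - y_pred[group == 1].mean()))

def equal_opportunity_diff(y_true, y_pred, group):
    """|TPR(A = 0) - TPR(A = 1)|, where TPR(a) = P(y_hat = 1 | y = 1, A = a)."""
    y_true, y_pred, group = map(np.asarray, (y_true, y_pred, group))
    tpr = [y_pred[(group == a) & (y_true == 1)].mean() for a in (0, 1)]
    return float(abs(tpr[0] - tpr[1]))

y_true = [1, 0, 1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]
print(demographic_parity_diff(y_pred, group))          # -> 0.5
print(equal_opportunity_diff(y_true, y_pred, group))   # about 2/3
```

Demographic parity ignores the true labels, whereas equality of opportunity only compares true positive rates, so a model can improve one criterion while worsening the other; this is one source of the subtle trade-offs involved in mitigating unfairness.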
