Bala Murali Manoghar

EWareNet: Emotion Aware Human Intent Prediction and Adaptive Spatial Profile Fusion for Social Robot Navigation

Dec 01, 2020

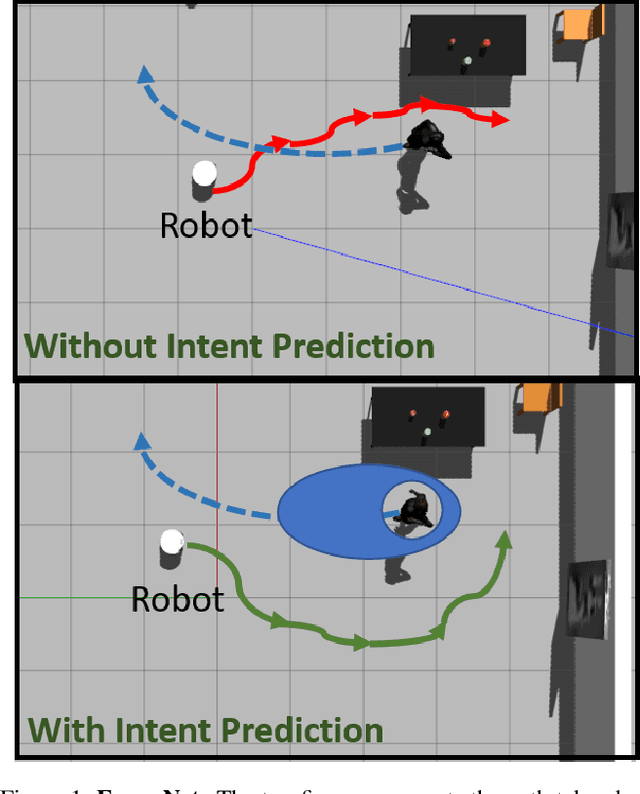

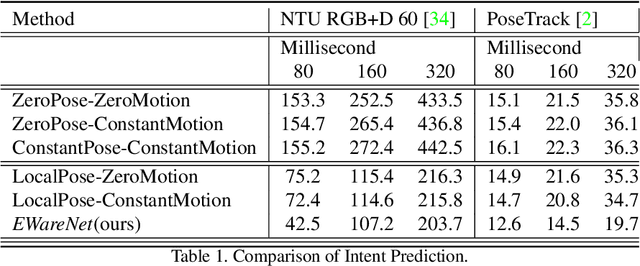

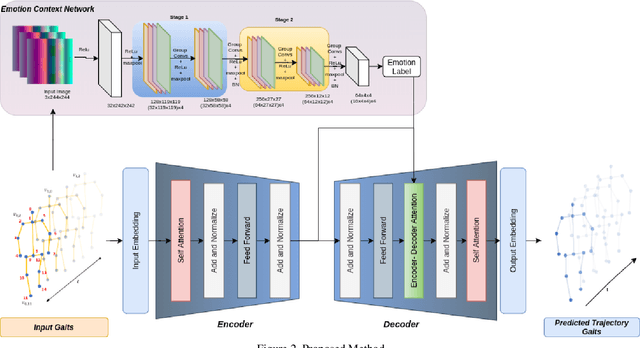

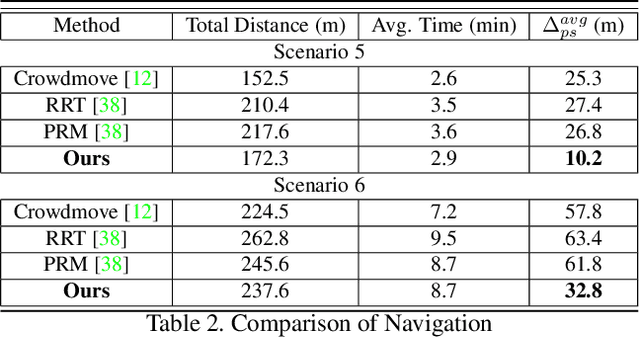

Abstract: We present EWareNet, a novel intent-aware social robot navigation algorithm among pedestrians. Our approach predicts the trajectory-based pedestrian intent from historical gaits, which is then used for intent-guided navigation taking into account social and proxemic constraints. To predict pedestrian intent, we propose a transformer-based model that works on a commodity RGB-D camera mounted onto a moving robot. Our intent prediction routine is integrated into a mapless navigation scheme and makes no assumptions about the environment of pedestrian motion. Our navigation scheme includes a novel obstacle profile representation that is dynamically adjusted based on the pedestrian pose, intent, and emotion. The navigation scheme is based on a reinforcement learning algorithm that takes into consideration human intent and the robot's impact on human intent, in addition to the environmental configuration. We outperform current state-of-the-art algorithms for intent prediction from 3D gaits.

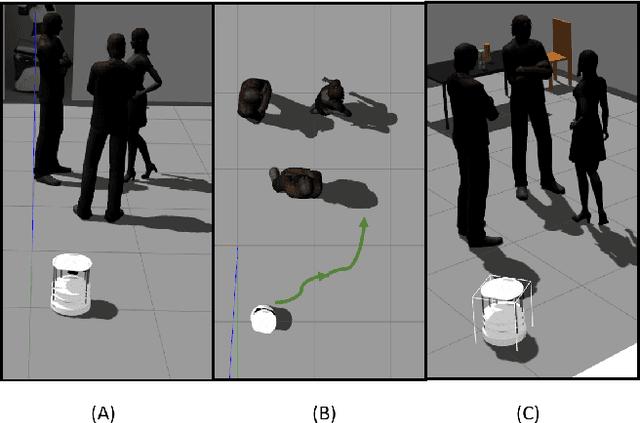

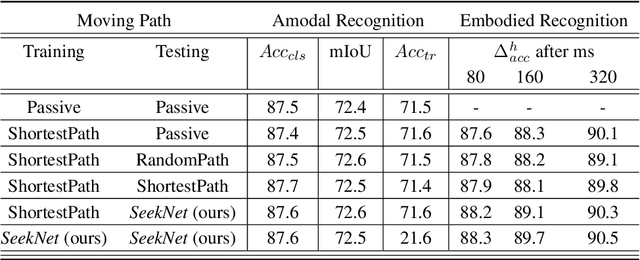

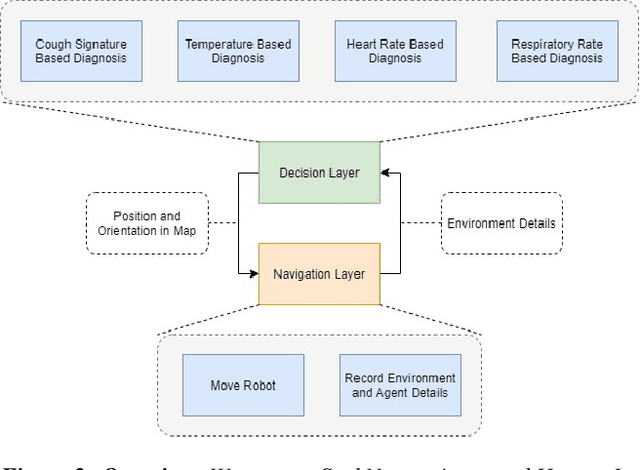

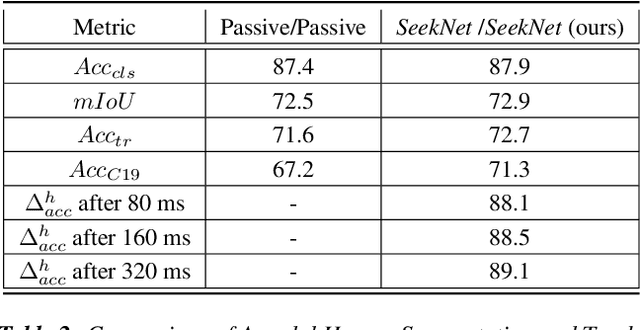

SeekNet: Improved Human Instance Segmentation via Reinforcement Learning Based Optimized Robot Relocation

Nov 17, 2020

Abstract: Amodal recognition is the ability of a system to detect occluded objects. Most state-of-the-art visual recognition systems lack the ability to perform amodal recognition. A few studies have achieved amodal recognition through passive prediction or embodied recognition approaches. However, these approaches suffer from challenges in real-world applications, such as dynamic objects. We propose SeekNet, an improved optimization method for amodal recognition through embodied visual recognition. Additionally, we implement SeekNet for social robots, where there are multiple interactions with humans in crowded settings. Hence, we focus on occluded human detection and tracking and showcase the superiority of our algorithm over other baselines. We also experiment with SeekNet to improve the confidence of COVID-19 symptom pre-screening algorithms using our efficient embodied recognition system.

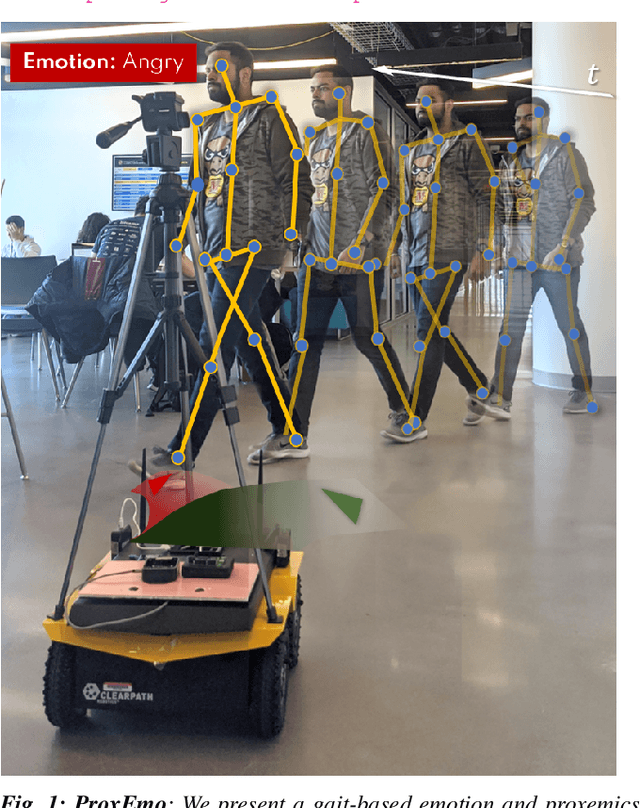

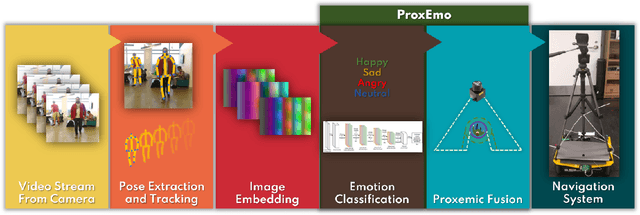

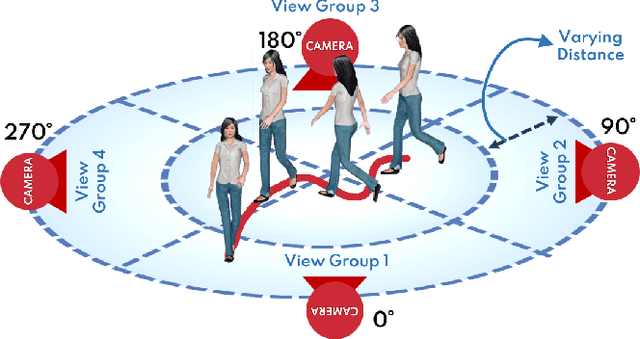

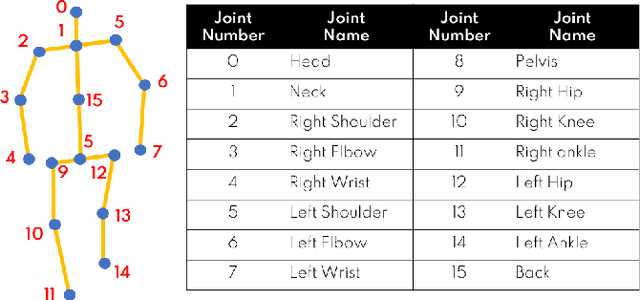

ProxEmo: Gait-based Emotion Learning and Multi-view Proxemic Fusion for Socially-Aware Robot Navigation

Mar 02, 2020

Abstract: We present ProxEmo, a novel end-to-end emotion prediction algorithm for socially aware robot navigation among pedestrians. Our approach predicts the perceived emotions of a pedestrian from walking gaits, which are then used for emotion-guided navigation taking into account social and proxemic constraints. To classify emotions, we propose a multi-view skeleton graph convolution-based model that works on a commodity camera mounted onto a moving robot. Our emotion recognition is integrated into a mapless navigation scheme and makes no assumptions about the environment of pedestrian motion. It achieves a mean average emotion prediction precision of 82.47% on the Emotion-Gait benchmark dataset. We outperform current state-of-the-art algorithms for emotion recognition from 3D gaits. We highlight its benefits in terms of navigation in indoor scenes using a Clearpath Jackal robot.
