Bahram Jalali

Standardizing Medical Images at Scale for AI

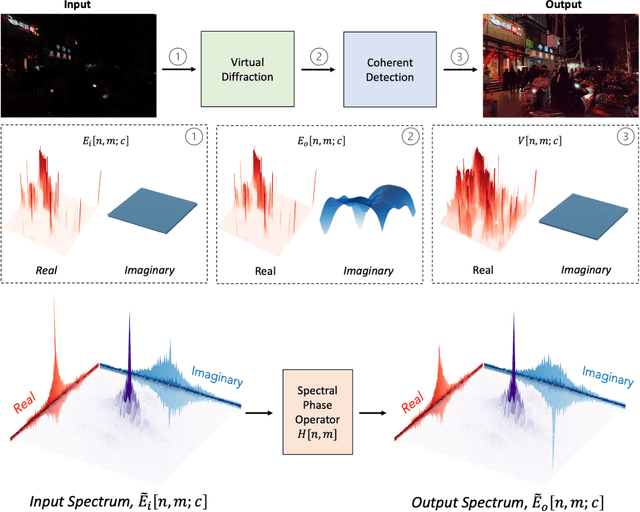

Mar 16, 2026

Abstract:Deep learning has achieved remarkable success in medical image analysis, yet its performance remains highly sensitive to the heterogeneity of clinical data. Differences in imaging hardware, staining protocols, and acquisition conditions produce substantial domain shifts that degrade model generalization across institutions. Here we present a physics-based data preprocessing framework built on the PhyCV (Physics-Inspired Computer Vision) family of algorithms, which standardizes medical images through deterministic transformations derived from optical physics. The framework models images as spatially varying optical fields that undergo a virtual diffractive propagation followed by coherent phase detection. This process suppresses non-semantic variability such as color and illumination differences while preserving diagnostically relevant texture and structural features. When applied to histopathological images from the Camelyon17-WILDS benchmark, PhyCV preprocessing improves out-of-distribution breast-cancer classification accuracy from 70.8% (Empirical Risk Minimization baseline) to 90.9%, matching or exceeding data-augmentation and domain-generalization approaches at negligible computational cost. Because the transform is physically interpretable, parameterizable, and differentiable, it can be deployed as a fixed preprocessing stage or integrated into end-to-end learning. These results establish PhyCV as a generalizable data refinery for medical imaging, one that harmonizes heterogeneous datasets through first-principles physics, improving robustness, interpretability, and reproducibility in clinical AI systems.

Nonlinear Schrödinger Network

Jul 19, 2024

Abstract:Deep neural networks (DNNs) have achieved exceptional performance across various fields by learning complex nonlinear mappings from large-scale datasets. However, they encounter challenges such as high computational costs and limited interpretability. To address these issues, hybrid approaches that integrate physics with AI are gaining interest. This paper introduces a novel physics-based AI model called the "Nonlinear Schrödinger Network", which treats the Nonlinear Schrödinger Equation (NLSE) as a general-purpose trainable model for learning complex patterns including nonlinear mappings and memory effects from data. Existing physics-informed machine learning methods use neural networks to approximate the solutions of partial differential equations (PDEs). In contrast, our approach directly treats the PDE as a trainable model to obtain general nonlinear mappings that would otherwise require neural networks. As a physics-inspired approach, it offers a more interpretable and parameter-efficient alternative to traditional black-box neural networks, achieving comparable or better accuracy in time series classification tasks while significantly reducing the number of required parameters. Notably, the trained Nonlinear Schrödinger Network is interpretable, with all parameters having physical meanings as properties of a virtual physical system that transforms the data to a more separable space. This interpretability allows for insight into the underlying dynamics of the data transformation process. Applications to time series forecasting have also been explored. While our current implementation utilizes the NLSE, the proposed method of using physics equations as trainable models to learn nonlinear mappings from data is not limited to the NLSE and may be extended to other master equations of physics.
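
To make the idea of "the PDE as the model" concrete, here is a minimal sketch of a forward pass through an NLSE using the standard split-step Fourier method. It is an illustration under stated assumptions, not the paper's implementation: the function name `nlse_propagate`, the parameter names `beta2` (dispersion) and `gamma` (nonlinearity), the sign convention, and the fixed per-step values are all hypothetical. In a trainable version these coefficients would presumably be learned, possibly per step.

```python
import numpy as np

def nlse_propagate(a0, beta2, gamma, dz, n_steps, dt=1.0):
    """Propagate a 1D field through n_steps split-step NLSE segments.

    Hypothetical parameters (not taken from the paper):
      beta2 : dispersion coefficient applied in the frequency domain
      gamma : Kerr-type nonlinear coefficient applied in the time domain
    """
    a = np.asarray(a0, dtype=complex)
    omega = 2 * np.pi * np.fft.fftfreq(a.size, d=dt)
    # Linear (dispersive) step: a pure phase in the frequency domain.
    linear = np.exp(0.5j * beta2 * omega**2 * dz)
    for _ in range(n_steps):
        a = np.fft.ifft(np.fft.fft(a) * linear)
        # Nonlinear step: an intensity-dependent phase in the time domain.
        a = a * np.exp(1j * gamma * np.abs(a) ** 2 * dz)
    return a

# Example: transform a Gaussian pulse; |out|^2 could feed a simple classifier.
t = np.linspace(-10, 10, 256)
signal = np.exp(-t**2)
out = nlse_propagate(signal, beta2=-1.0, gamma=1.0, dz=0.01,
                     n_steps=100, dt=t[1] - t[0])
features = np.abs(out) ** 2
```

Both sub-steps only multiply by unit-modulus phases, so the total energy of the field is preserved; the transformation reshapes the data rather than amplifying it.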

Time Stretch with Continuous-Wave Lasers for Practical Fast Realtime Measurements

Sep 19, 2023

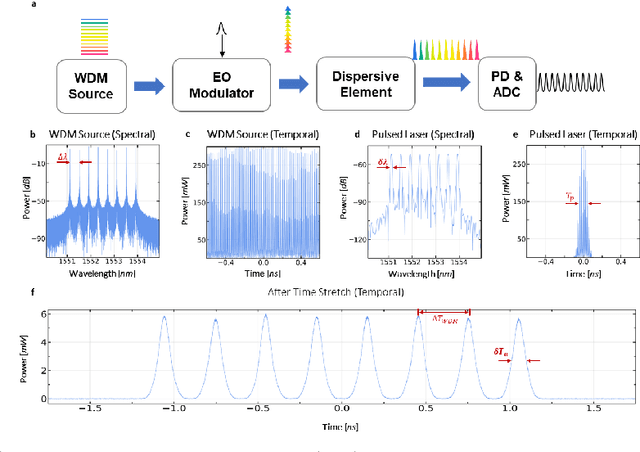

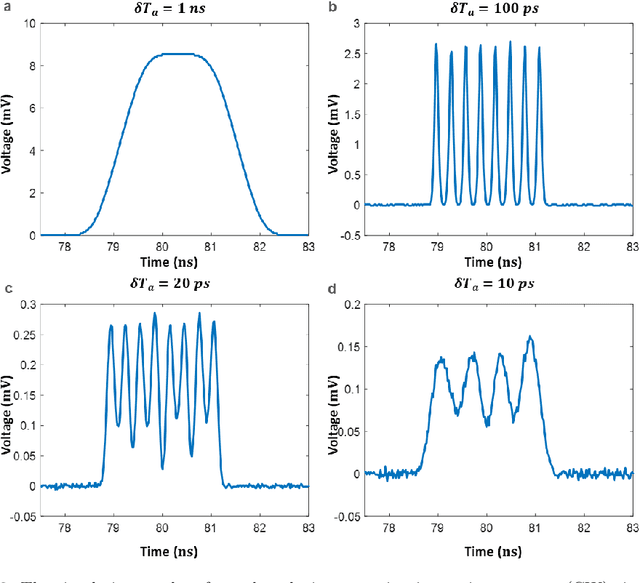

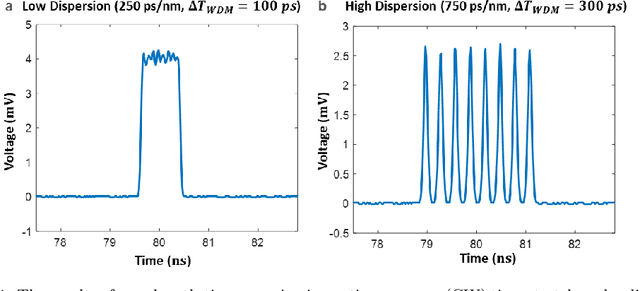

Abstract:Realtime high-throughput sensing and detection enables the capture of rare events on sub-picosecond time scales, making it possible for scientists to uncover the mysteries of ultrafast physical processes. Photonic time stretch is one of the most successful approaches, utilizing the ultra-wide bandwidth of a mode-locked laser to detect ultrafast signals. Though powerful, it relies on a supercontinuum mode-locked laser source, which is expensive and difficult to integrate. This greatly limits the application of the technology. Here we propose a novel Continuous Wave (CW) implementation of the photonic time stretch. Instead of a supercontinuum mode-locked laser, a wavelength division multiplexed (WDM) CW laser, pulsed by electro-optic (EO) modulation, is adopted as the laser source. This opens up the possibility of low-cost integrated time stretch systems. This new approach is validated via both simulation and experiment. Two scenarios for potential application are also described.

Low Latency Computing for Time Stretch Instruments

Apr 05, 2023

Abstract:Time stretch instruments have been exceptionally successful in discovering single-shot ultrafast phenomena such as optical rogue waves and have led to record-speed microscopy, spectroscopy, lidar, etc. These instruments encode the ultrafast events into the spectrum of a femtosecond pulse and then dilate the time scale of the data using group velocity dispersion. Generating as much as a Tbit per second of data, they are ideal partners for deep learning networks, which by their inherent complexity require large datasets for training. However, the inference time scale of neural networks in the millisecond regime is orders of magnitude longer than the data acquisition rate of time stretch instruments. This underscores the need to explore means where some of the lower-level computational tasks can be done while the data is still in the optical domain. Nonlinear Schrödinger Kernel computing addresses this predicament. It utilizes optical nonlinearities to map the data onto a new domain in which classification accuracy is enhanced, without increasing the data dimensions. One limitation of this technique is the fixed optical transfer function, which prevents training and generalizability. Here we show that the optical kernel can be effectively tuned and trained by utilizing digital phase encoding of the femtosecond laser pulse, leading to a reduction of the error rate in data classification.

PhyCV: The First Physics-inspired Computer Vision Library

Jan 29, 2023

Abstract:PhyCV is the first computer vision library which utilizes algorithms directly derived from the equations of physics governing physical phenomena. The algorithms appearing in the current release emulate, in a metaphoric sense, the propagation of light through a physical medium with natural and engineered diffractive properties followed by coherent detection. Unlike traditional algorithms that are a sequence of hand-crafted empirical rules, physics-inspired algorithms leverage physical laws of nature as blueprints for inventing algorithms. In addition, these algorithms have the potential to be implemented in real physical devices for fast and efficient computation in the form of analog computing. This manuscript is prepared to support the open-sourced PhyCV code which is available in the GitHub repository: https://github.com/JalaliLabUCLA/phycv

VEViD: Vision Enhancement via Virtual diffraction and coherent Detection

Aug 25, 2022

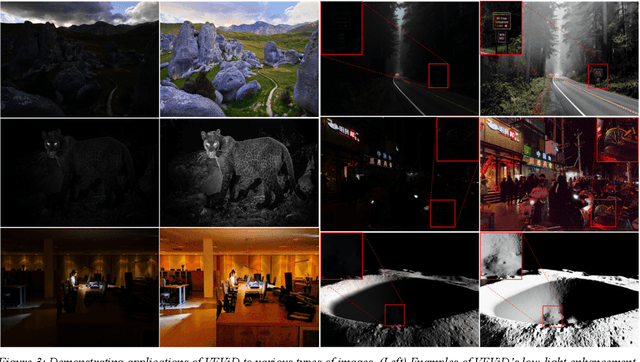

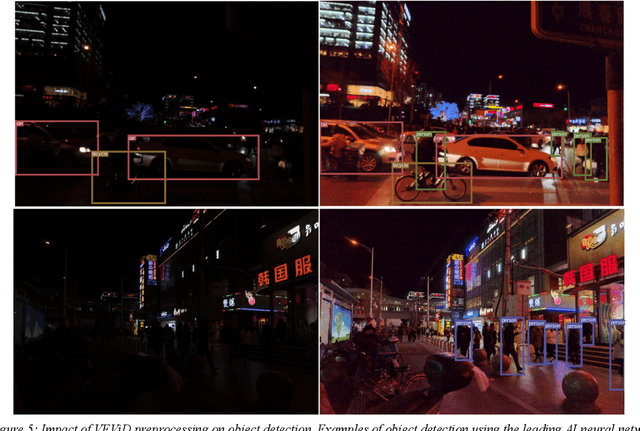

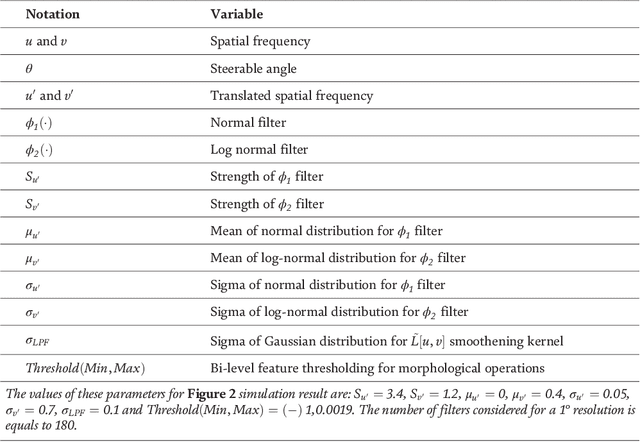

Abstract:The history of computing started with analog computers consisting of physical devices performing specialized functions such as predicting the trajectory of cannon balls. In modern times, this idea has been extended, for example, to ultrafast nonlinear optics serving as a surrogate analog computer to probe the behavior of complex phenomena such as rogue waves. Here we discuss a new paradigm where physical phenomena coded as an algorithm perform computational imaging tasks. Specifically, diffraction followed by coherent detection, not in its analog realization but when coded as an algorithm, becomes an image enhancement tool. Vision Enhancement via Virtual diffraction and coherent Detection (VEViD) introduced here reimagines a digital image as a spatially varying metaphoric light field and then subjects the field to the physical processes akin to diffraction and coherent detection. The term "Virtual" captures the deviation from the physical world. The light field is pixelated and the propagation imparts a phase with an arbitrary dependence on frequency which can be different from the quadratic behavior of physical diffraction. Temporal frequencies exist in three bands corresponding to the RGB color channels of a digital image. The phase of the output, not the intensity, represents the output image. VEViD is a high-performance low-light-level and color enhancement tool that emerges from this paradigm. The algorithm is interpretable and computationally efficient. We demonstrate image enhancement of 4k video at 200 frames per second and show the utility of this physical algorithm in improving the accuracy of object detection by neural networks without having to retrain the model for low-light conditions. The application of VEViD to color enhancement is also demonstrated.
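
The pipeline described in the abstract above (spectral phase, then coherent detection of the output phase) can be sketched in a few lines of NumPy. This is a simplified single-channel illustration, not the reference implementation: the function name `vevid_gray`, the Gaussian shape of the phase kernel, and the parameter names and defaults (`S` phase strength, `T` kernel variance, `b` bias, `G` gain) are assumptions made for the example. Color handling is omitted.

```python
import numpy as np

def vevid_gray(img, S=0.2, T=0.01, b=0.16, G=1.4):
    """Sketch of virtual diffraction + coherent detection on one channel.

    img is a 2D float array scaled to [0, 1]. All parameter names and
    defaults are illustrative assumptions.
    """
    img = np.asarray(img, dtype=float)
    h, w = img.shape
    # Spatial-frequency grid in FFT order (cycles per pixel).
    u = np.fft.fftfreq(h)[:, None]
    v = np.fft.fftfreq(w)[None, :]
    # Assumed low-pass Gaussian phase profile, scaled to peak value S.
    kernel = np.exp(-(u**2 + v**2) / T)
    kernel = S * kernel / kernel.max()
    # "Virtual diffraction": apply the phase in the frequency domain.
    field = np.fft.ifft2(np.fft.fft2(img + b) * np.exp(-1j * kernel))
    # "Coherent detection": the output image is a phase, not an intensity.
    phase = np.arctan2(G * field.imag, img)
    span = phase.max() - phase.min()
    if span == 0:
        return np.zeros_like(phase)
    return (phase - phase.min()) / span  # rescale to [0, 1]

# Example: a synthetic dark image (values in [0, 0.2]).
rng = np.random.default_rng(0)
dark = 0.2 * rng.random((32, 32))
enhanced = vevid_gray(dark)
```

Because the detected phase depends on the ratio of the diffracted field to the original pixel value, dark pixels receive a proportionally larger lift than bright ones, which is the low-light enhancement behavior the abstract describes.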

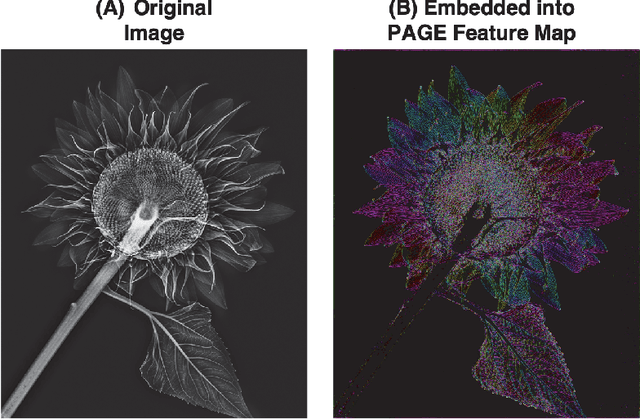

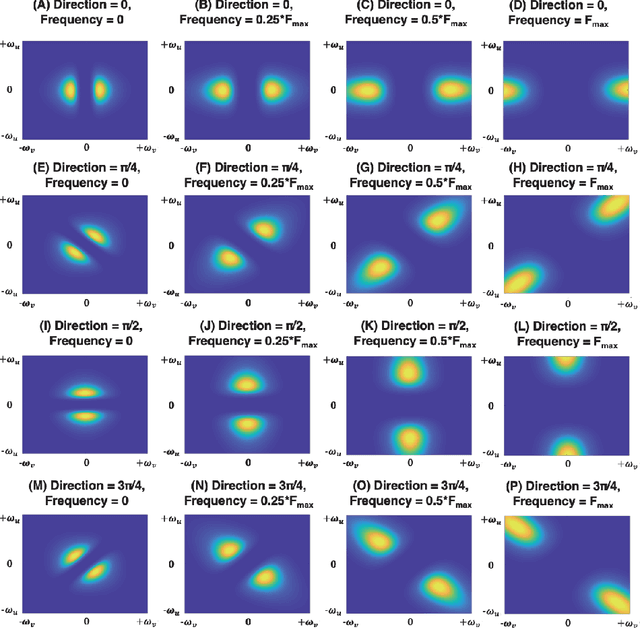

Phase-Stretch Adaptive Gradient-Field Extractor (PAGE)

Feb 12, 2022

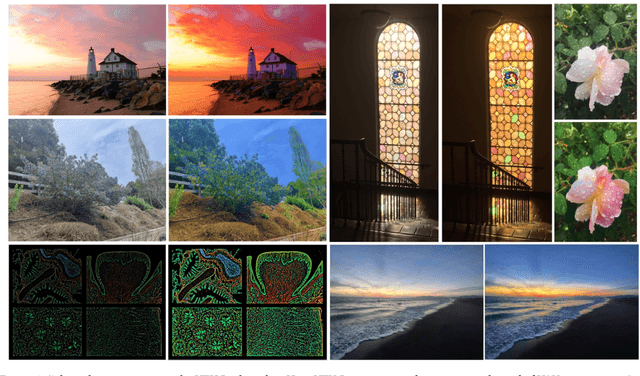

Abstract:Phase-Stretch Adaptive Gradient-Field Extractor (PAGE) is an edge detection algorithm inspired by the physics of electromagnetic diffraction and dispersion. A computational imaging algorithm, it identifies edges, along with their orientations and sharpness, at locations in a digital image where the brightness changes abruptly. Edge detection is a basic operation performed by the eye and is crucial to visual perception. PAGE embeds an original image into a set of feature maps that can be used for object representation and classification. The algorithm performs exceptionally well as an edge and texture extractor in low-light-level and low-contrast images. This manuscript is prepared to support the open-source code which is being simultaneously made available within the GitHub repository https://github.com/JalaliLabUCLA/Phase-Stretch-Adaptive-Gradient-field-Extractor/.

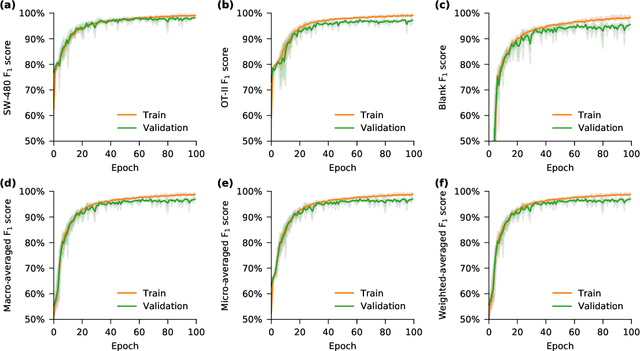

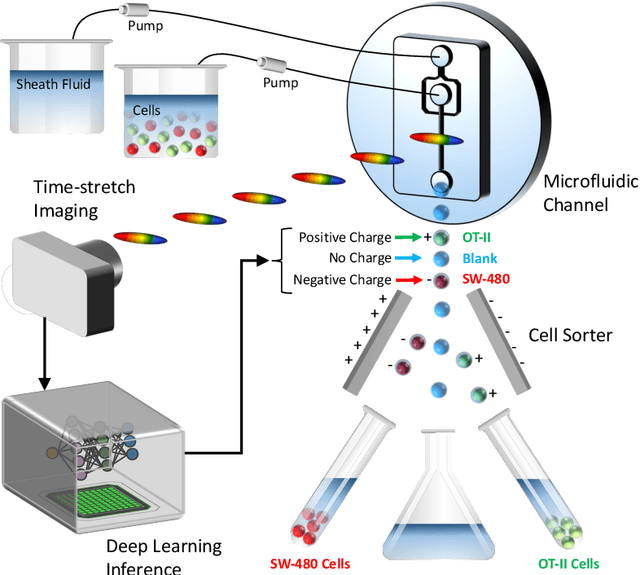

Deep Cytometry

Apr 09, 2019

Abstract:Deep learning has achieved spectacular performance in image and speech recognition and synthesis. It outperforms other machine learning algorithms in problems where large amounts of data are available. In the area of measurement technology, instruments based on the Photonic Time Stretch have established record real-time measurement throughput in spectroscopy, optical coherence tomography, and imaging flow cytometry. These extreme-throughput instruments generate approximately 1 Tbit/s of continuous measurement data and have led to the discovery of rare phenomena in nonlinear and complex systems as well as new types of biomedical instruments. Owing to the abundance of data they generate, time stretch instruments are a natural fit to deep learning classification. Previously we had shown that high-throughput label-free cell classification with high accuracy can be achieved through a combination of time stretch microscopy, image processing and feature extraction, followed by deep learning for finding cancer cells in the blood. Such a technology holds promise for early detection of primary cancer or metastasis. Here we describe a new implementation of deep learning which entirely avoids the computationally costly image processing and feature extraction pipeline. The improvement in computational efficiency makes this new technology suitable for cell sorting via deep learning. Our neural network takes less than a millisecond to classify the cells, fast enough to provide a decision to a cell sorter. We demonstrate the applicability of our new method in the classification of OT-II white blood cells and SW-480 epithelial cancer cells with more than 95% accuracy in a label-free fashion.

Brain MRI Image Super Resolution using Phase Stretch Transform and Transfer Learning

Jul 31, 2018

Abstract:A hallucination-free and computationally efficient algorithm for enhancing the resolution of brain MRI images is demonstrated.

Feature Enhancement in Visually Impaired Images

Jun 14, 2017

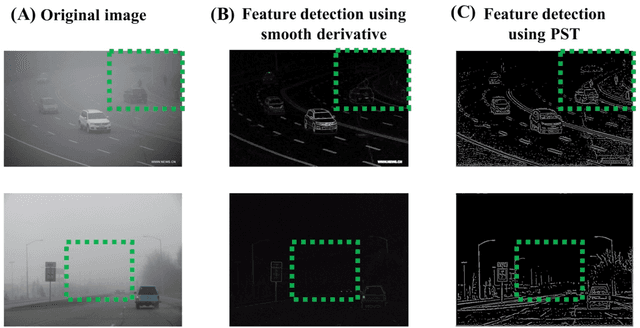

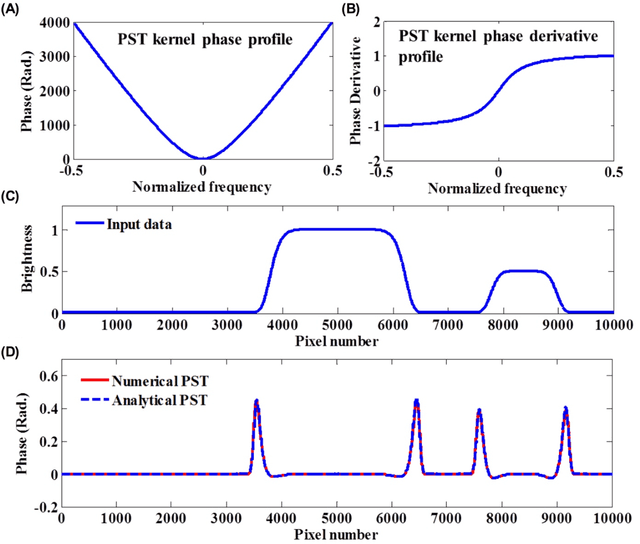

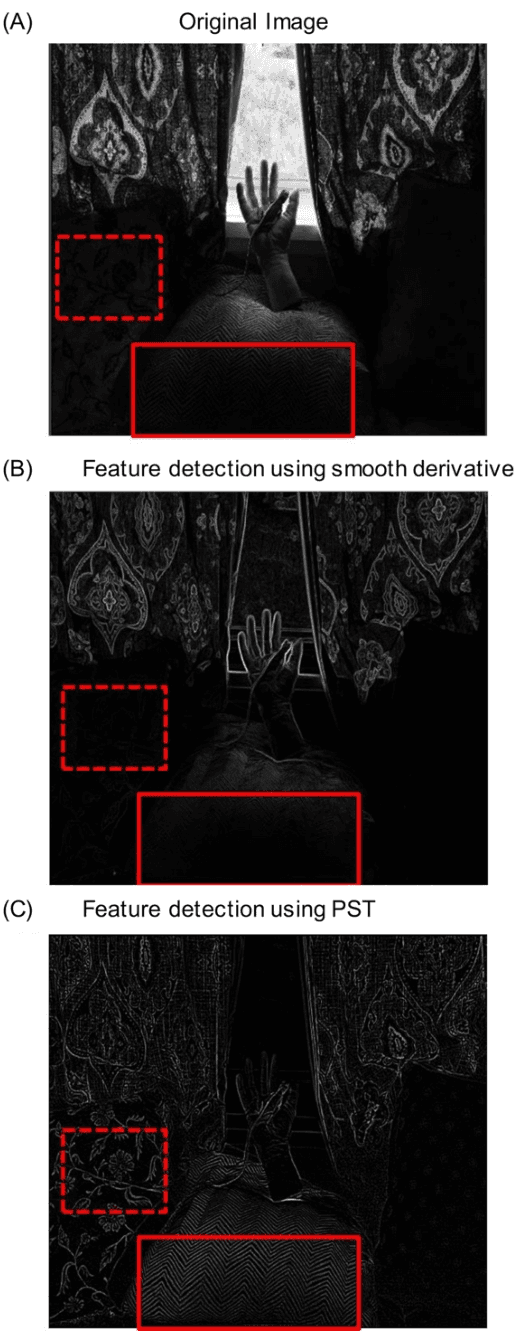

Abstract:One of the major open problems in computer vision is the detection of features in visually impaired images. In this paper, we describe a potential solution using the Phase Stretch Transform, a new computational approach for image analysis, edge detection and resolution enhancement that is inspired by the physics of the photonic time stretch technique. We mathematically derive the intrinsic nonlinear transfer function and demonstrate how it leads to (1) superior performance at low contrast levels and (2) a reconfigurable operator for hyper-dimensional classification. We prove that the Phase Stretch Transform equalizes the input image brightness across the range of intensities, resulting in a high dynamic range in visually impaired images. We also show further improvement in the dynamic range by combining our method with conventional techniques. Finally, our results show a method for computation of mathematical derivatives via group delay dispersion operations.
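
As a rough illustration of how a phase-stretch operation can act as an edge detector, the sketch below applies a frequency-dependent phase to a smoothed image and reads out the phase of the result. It is a hedged example, not the paper's derivation: the function name `phase_stretch`, the arctan-based kernel shape, the Gaussian pre-filter, and the parameters `S` (phase strength), `W` (warp), and `sigma_lpf` (smoothing width) are assumptions chosen for the example, and the thresholding and morphological clean-up that an edge detector would normally add are left out.

```python
import numpy as np

def phase_stretch(img, S=0.5, W=12.0, sigma_lpf=0.1):
    """Sketch of a phase-stretch feature map for a 2D float image.

    Kernel shape and parameter values are illustrative assumptions.
    """
    img = np.asarray(img, dtype=float)
    h, w = img.shape
    u = np.fft.fftfreq(h)[:, None]
    v = np.fft.fftfreq(w)[None, :]
    rho = np.sqrt(u**2 + v**2)  # radial spatial frequency
    # Phase kernel: zero at DC and growing with frequency, so only
    # intensity variations (edges, texture) pick up phase.
    x = W * rho
    kernel = x * np.arctan(x) - 0.5 * np.log1p(x**2)
    kernel = S * kernel / kernel.max()
    # Gaussian low-pass pre-filter to suppress pixel-level noise.
    lpf = np.exp(-0.5 * (rho / sigma_lpf) ** 2)
    field = np.fft.ifft2(np.fft.fft2(img) * lpf * np.exp(-1j * kernel))
    return np.angle(field)  # the output phase is the feature map

# Example: a vertical step edge between two flat regions.
step = np.full((64, 64), 0.2)
step[:, 32:] = 0.8
feat = phase_stretch(step)
edge_response = np.abs(feat[:, 30:34]).max()
flat_response = np.abs(feat[:, 16]).max()
```

In this sketch the output phase is roughly the high-pass filtered image divided by the local brightness, so the same intensity step produces a larger phase in a dark region than in a bright one. This gives an intuition for the brightness-equalizing behavior described in the abstract.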
