Axiu Mao

CKSP: Cross-species Knowledge Sharing and Preserving for Universal Animal Activity Recognition

Oct 22, 2024

Abstract: Deep learning techniques are dominating automated animal activity recognition (AAR) tasks with wearable sensors due to their high performance on large-scale labelled data. However, current deep learning-based AAR models are trained solely on datasets of individual animal species, which constrains their applicability in practice and leads to poor performance when training data are limited. In this study, we propose a one-for-many framework, dubbed Cross-species Knowledge Sharing and Preserving (CKSP), based on sensor data of diverse animal species. Given the coexistence of generic and species-specific behavioural patterns among different species, we design a Shared-Preserved Convolution (SPConv) module. This module assigns an individual low-rank convolutional layer to each species for extracting species-specific features and employs a shared full-rank convolutional layer to learn generic features, enabling the CKSP framework to learn inter-species complementarity and alleviating data limitations by increasing data diversity. Considering the training conflict arising from discrepancies in data distributions among species, we devise a Species-specific Batch Normalization (SBN) module that uses multiple BN layers to separately fit the distributions of different species. To validate CKSP's effectiveness, experiments are performed on three public datasets from horses, sheep, and cattle, respectively. The results show that our approach remarkably boosts the classification performance compared to the baseline method (one-for-one framework) solely trained on individual-species data, with increments of 6.04%, 2.06%, and 3.66% in accuracy, and 10.33%, 3.67%, and 7.90% in F1-score for the horse, sheep, and cattle datasets, respectively. These results demonstrate the promising capabilities of our method in leveraging multi-species data to augment classification performance.
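The shared-plus-species-specific idea in this abstract can be illustrated with a small toy, which is a sketch under stated assumptions and not the authors' implementation. Here a 1x1 convolution is reduced to a matrix-vector product, the species branch is a rank-`rank` product `U @ V`, and the two branches are simply added (the abstract does not say how they are combined). The class name `SPConvToy`, its parameters, and the fixed normalization statistics are all hypothetical.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


class SPConvToy:
    """Toy shared + species-specific layer (hypothetical, for illustration only)."""

    def __init__(self, species, c_in, c_out, rank, seed=0):
        rng = random.Random(seed)
        # Shared full-rank weights, used for every species (generic features).
        self.shared = rand_matrix(c_out, c_in, rng)
        # One low-rank pair (U: c_out x rank, V: rank x c_in) per species.
        self.low_rank = {
            s: (rand_matrix(c_out, rank, rng), rand_matrix(rank, c_in, rng))
            for s in species
        }
        # Species-specific normalization statistics (mean, variance) per channel.
        # Assumption: fixed values stand in for statistics learned during training.
        self.bn_stats = {s: ([0.0] * c_out, [1.0] * c_out) for s in species}

    def forward(self, x, species):
        generic = matvec(self.shared, x)
        u, v = self.low_rank[species]
        specific = matvec(u, matvec(v, x))
        # Assumption: the two branches are combined by addition.
        y = [g + s for g, s in zip(generic, specific)]
        mean, var = self.bn_stats[species]
        return [(yi - m) / (vr + 1e-5) ** 0.5 for yi, m, vr in zip(y, mean, var)]

    def extra_params_per_species(self, c_in, c_out, rank):
        """Weights added per species by the low-rank branch."""
        return rank * (c_in + c_out)
```

In this toy, each additional species costs `rank * (c_in + c_out)` weights rather than a full `c_in * c_out` matrix, which is the usual motivation for a low-rank branch.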

DEeR: Deviation Eliminating and Noise Regulating for Privacy-preserving Federated Low-rank Adaptation

Oct 16, 2024

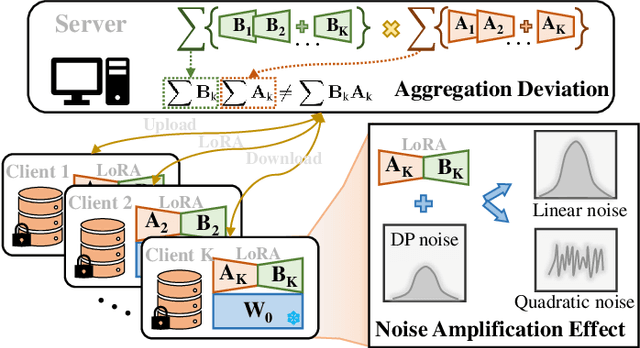

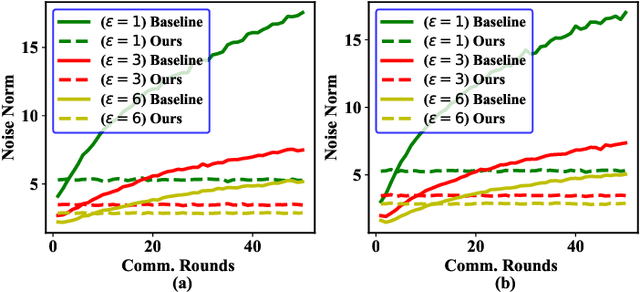

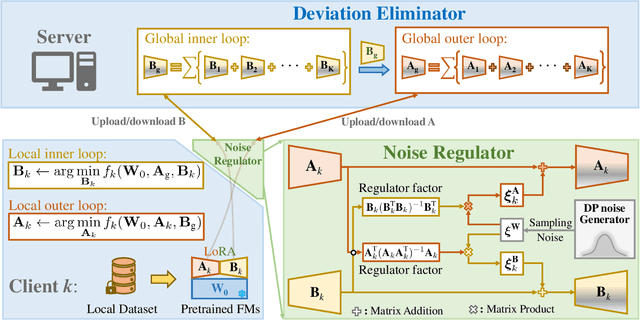

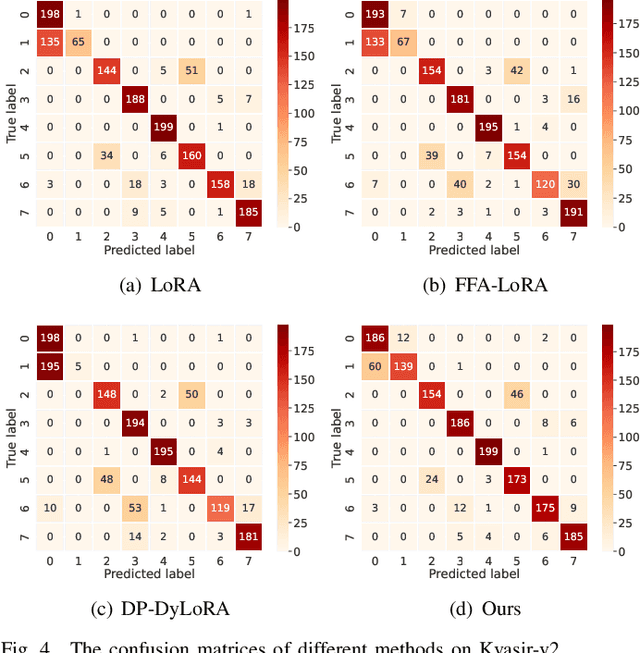

Abstract: Integrating low-rank adaptation (LoRA) with federated learning (FL) has received widespread attention recently, aiming to adapt pretrained foundation models (FMs) to downstream medical tasks via privacy-preserving decentralized training. However, owing to the direct combination of LoRA and FL, current methods generally suffer from two problems, i.e., aggregation deviation and a differential privacy (DP) noise amplification effect. To address these problems, we propose a novel privacy-preserving federated finetuning framework called Deviation Eliminating and Noise Regulating (DEeR). Specifically, we first prove theoretically that the necessary condition to eliminate aggregation deviation is guaranteeing the equivalence between LoRA parameters of clients. Based on this theoretical insight, a deviation eliminator is designed that uses an alternating minimization algorithm to iteratively optimize the zero-initialized and non-zero-initialized parameter matrices of LoRA, ensuring that the aggregation deviation is always zero during training. Furthermore, we conduct an in-depth analysis of the noise amplification effect and find that this problem is mainly caused by the "linear relationship" between DP noise and LoRA parameters. To suppress the noise amplification effect, we propose a noise regulator that exploits two regulator factors to decouple the relationship between DP and LoRA, thereby achieving robust privacy protection and excellent finetuning performance. Additionally, we perform comprehensive ablation experiments to verify the effectiveness of the deviation eliminator and noise regulator. DEeR shows better performance on public medical datasets in comparison with state-of-the-art approaches. The code is available at https://github.com/CUHK-AIM-Group/DEeR.
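One common reading of "aggregation deviation" is the gap between averaging the clients' full LoRA updates `B_i A_i` and multiplying the separately averaged factors `mean(B_i) mean(A_i)`. The sketch below illustrates that gap, under that assumption, on tiny hand-written matrices, and shows that it vanishes when one factor is identical across clients. It is not the DEeR algorithm, and the helper names are hypothetical.

```python
def matmul(a, b):
    """Plain matrix product of two lists-of-rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def mean_matrices(matrices):
    """Element-wise mean of equally shaped matrices."""
    n = len(matrices)
    rows, cols = len(matrices[0]), len(matrices[0][0])
    return [
        [sum(m[i][j] for m in matrices) / n for j in range(cols)]
        for i in range(rows)
    ]


def aggregation_deviation(bs, as_):
    """Largest absolute gap between mean(B_i A_i) and mean(B_i) mean(A_i)."""
    ideal = mean_matrices([matmul(b, a) for b, a in zip(bs, as_)])
    naive = matmul(mean_matrices(bs), mean_matrices(as_))
    return max(
        abs(x - y)
        for row_ideal, row_naive in zip(ideal, naive)
        for x, y in zip(row_ideal, row_naive)
    )


# Two clients with rank-1 factors: B is 2x1, A is 1x2.
client_bs = [[[1.0], [0.0]], [[0.0], [1.0]]]
different_as = [[[1.0, 0.0]], [[0.0, 1.0]]]
shared_as = [[[1.0, 2.0]], [[1.0, 2.0]]]

dev_different = aggregation_deviation(client_bs, different_as)
dev_shared = aggregation_deviation(client_bs, shared_as)
```

With differing `A_i` the two aggregates disagree, while with a shared `A` the product is linear in `B_i` and the gap is zero, which is consistent with the equivalence condition stated in the abstract.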

Occlusion-Resistant Instance Segmentation of Piglets in Farrowing Pens Using Center Clustering Network

Jun 04, 2022

Abstract: Computer vision enables the development of new approaches to monitor the behavior, health, and welfare of animals. Instance segmentation is a high-precision method in computer vision for detecting individual animals of interest. This method can be used for in-depth analysis of animals, such as examining their subtle interactive behaviors, from videos and images. However, existing deep-learning-based instance segmentation methods have been mostly developed based on public datasets, which largely omit heavy occlusion problems; therefore, these methods have limitations in real-world applications involving object occlusions, such as farrowing pen systems used on pig farms in which the farrowing crates often impede the sow and piglets. In this paper, we propose a novel occlusion-resistant Center Clustering Network for instance segmentation, dubbed CClusnet-Inseg. Specifically, CClusnet-Inseg uses each pixel to predict object centers and traces these centers to form masks based on clustering results. It consists of a network for segmentation and center offset vector maps, the Density-Based Spatial Clustering of Applications with Noise (DBSCAN) algorithm, the Centers-to-Mask (C2M) and Remain-Centers-to-Mask (RC2M) algorithms, and a pseudo-occlusion generator (POG). In all, 4,600 images were extracted from six videos collected from six farrowing pens to train and validate our method. CClusnet-Inseg achieves a mean average precision (mAP) of 83.6, outperforming YOLACT++ and Mask R-CNN, which had mAP values of 81.2 and 74.7, respectively. We conduct comprehensive ablation studies to demonstrate the advantages and effectiveness of the core modules of our method. In addition, we apply CClusnet-Inseg to multi-object tracking for animal monitoring, and the predicted object center, which is a conjunct output, could serve as an occlusion-resistant representation of the location of an object.
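The center-voting and clustering step described in this abstract can be sketched roughly as follows. This is a simplified illustration and not the CClusnet-Inseg pipeline: each foreground pixel adds its predicted offset to its own coordinates to vote for a center, a minimal hand-written DBSCAN groups the votes, and every pixel inherits the cluster label of its vote. The C2M and RC2M algorithms and the pseudo-occlusion generator are not reproduced, and the function names and the default `eps` and `min_pts` values are hypothetical.

```python
def dbscan(points, eps, min_pts):
    """Minimal DBSCAN on 2D points; returns one label per point, -1 for noise."""

    def neighbours(i):
        xi, yi = points[i]
        return [
            j
            for j, (xj, yj) in enumerate(points)
            if (xi - xj) ** 2 + (yi - yj) ** 2 <= eps ** 2
        ]

    labels = [None] * len(points)
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # provisional noise; may become a border point later
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise reached from a core point: border
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reachable = neighbours(j)
            if len(reachable) >= min_pts:
                queue.extend(reachable)  # j is a core point, keep expanding
    return labels


def masks_from_offsets(pixels, offsets, eps=1.0, min_pts=3):
    """Group pixels into instance masks by clustering their voted centers."""
    votes = [(x + dx, y + dy) for (x, y), (dx, dy) in zip(pixels, offsets)]
    labels = dbscan(votes, eps, min_pts)
    masks = {}
    for pixel, label in zip(pixels, labels):
        if label != -1:
            masks.setdefault(label, []).append(pixel)
    return masks
```

Because pixels are grouped by where they point rather than by where they are, pixels of one animal that are split apart by an occluder can still land in the same cluster, provided their predicted centers agree.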
