Aurelien De Truchis

DUNIA: Pixel-Sized Embeddings via Cross-Modal Alignment for Earth Observation Applications

Feb 24, 2025

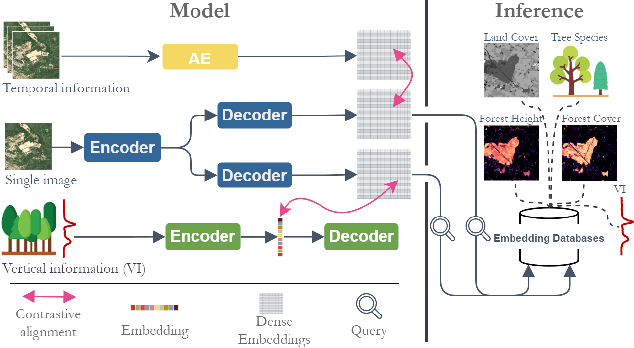

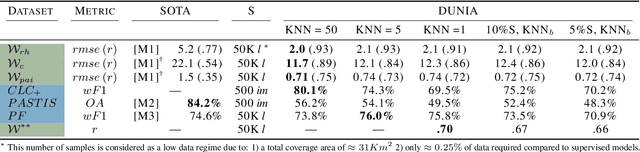

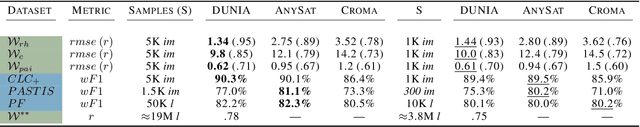

Abstract:Significant efforts have been directed towards adapting self-supervised multimodal learning for Earth observation applications. However, existing methods produce coarse patch-sized embeddings, limiting their effectiveness and integration with other modalities like LiDAR. To close this gap, we present DUNIA, an approach to learn pixel-sized embeddings through cross-modal alignment between images and full-waveform LiDAR data. As the model is trained in a contrastive manner, the embeddings can be directly leveraged in the context of a variety of environmental monitoring tasks in a zero-shot setting. In our experiments, we demonstrate the effectiveness of the embeddings for seven such tasks (canopy height mapping, fractional canopy cover, land cover mapping, tree species identification, plant area index, crop type classification, and per-pixel waveform-based vertical structure mapping). The results show that the embeddings, along with zero-shot classifiers, often outperform specialized supervised models, even in low data regimes. In the fine-tuning setting, we show strong low-shot capabilities with performances near or better than state-of-the-art on five out of six tasks.

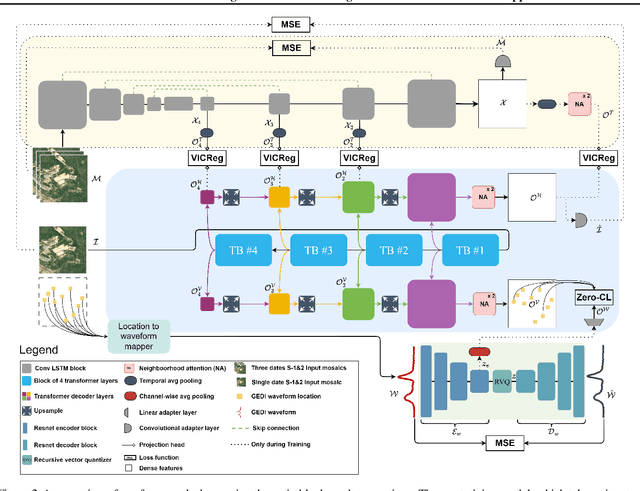

High-resolution canopy height map in the Landes forest based on GEDI, Sentinel-1, and Sentinel-2 data with a deep learning approach

Dec 20, 2022Abstract:In intensively managed forests in Europe, where forests are divided into stands of small size and may show heterogeneity within stands, a high spatial resolution (10 - 20 meters) is arguably needed to capture the differences in canopy height. In this work, we developed a deep learning model based on multi-stream remote sensing measurements to create a high-resolution canopy height map over the "Landes de Gascogne" forest in France, a large maritime pine plantation of 13,000 km$^2$ with flat terrain and intensive management. This area is characterized by even-aged and mono-specific stands, of a typical length of a few hundred meters, harvested every 35 to 50 years. Our deep learning U-Net model uses multi-band images from Sentinel-1 and Sentinel-2 with composite time averages as input to predict tree height derived from GEDI waveforms. The evaluation is performed with external validation data from forest inventory plots and a stereo 3D reconstruction model based on Skysat imagery available at specific locations. We trained seven different U-net models based on a combination of Sentinel-1 and Sentinel-2 bands to evaluate the importance of each instrument in the dominant height retrieval. The model outputs allow us to generate a 10 m resolution canopy height map of the whole "Landes de Gascogne" forest area for 2020 with a mean absolute error of 2.02 m on the Test dataset. The best predictions were obtained using all available satellite layers from Sentinel-1 and Sentinel-2 but using only one satellite source also provided good predictions. For all validation datasets in coniferous forests, our model showed better metrics than previous canopy height models available in the same region.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge