Aryeh Englander

Modeling Transformative AI Risks (MTAIR) Project -- Summary Report

Jun 19, 2022

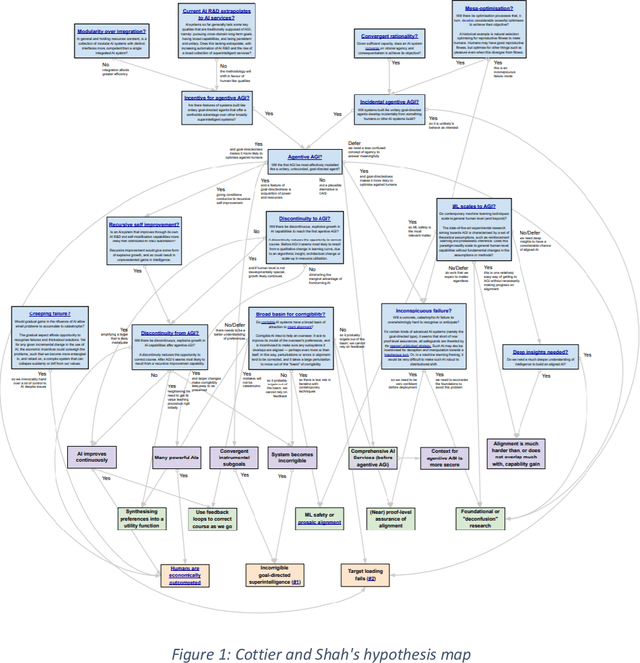

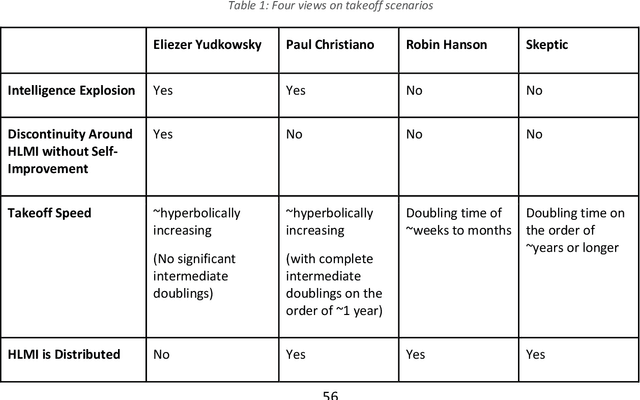

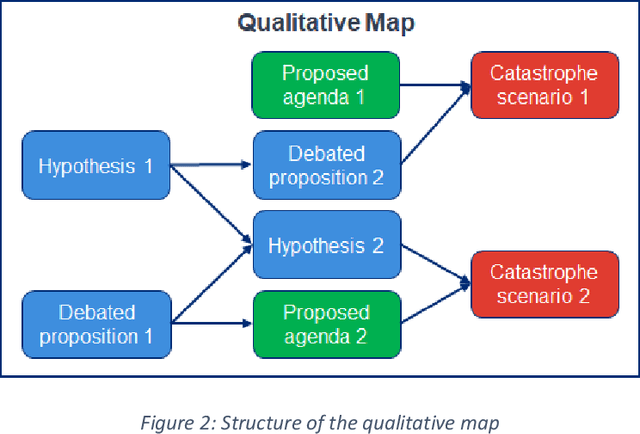

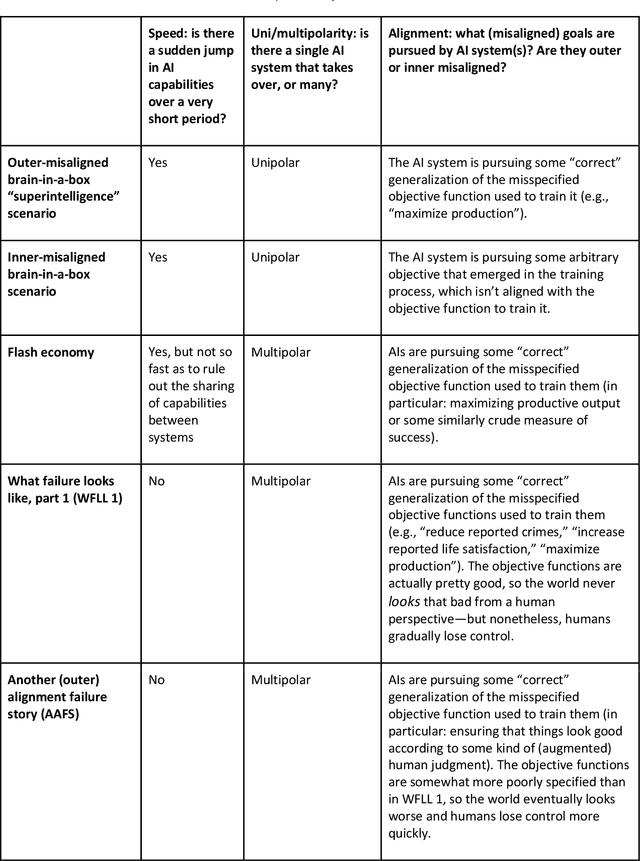

Abstract: This report outlines work by the Modeling Transformative AI Risk (MTAIR) project, an attempt to map out the key hypotheses, uncertainties, and disagreements in debates about catastrophic risks from advanced AI, and the relationships between them. This builds on an earlier diagram by Ben Cottier and Rohin Shah which laid out some of the crucial disagreements ("cruxes") visually, with some explanation. Based on an extensive literature review and engagement with experts, the report explains a model of the issues involved, and the initial software-based implementation that can incorporate probability estimates or other quantitative factors to enable exploration, planning, and/or decision support. By gathering information from various debates and discussions into a single more coherent presentation, we hope to enable better discussions and debates about the issues involved. The model starts with a discussion of reasoning via analogies and general prior beliefs about artificial intelligence. Following this, it lays out a model of different paths and enabling technologies for high-level machine intelligence, and a model of how advances in the capabilities of these systems might proceed, including debates about self-improvement, discontinuous improvements, and the possibility of distributed, non-agentic high-level intelligence or slower improvements. The model also looks specifically at the question of learned optimization, and whether machine learning systems will create mesa-optimizers. The impact of different safety research on the previous sets of questions is then examined, to understand whether and how research could be useful in enabling safer systems. Finally, we discuss a model of different failure modes and loss of control or takeover scenarios.

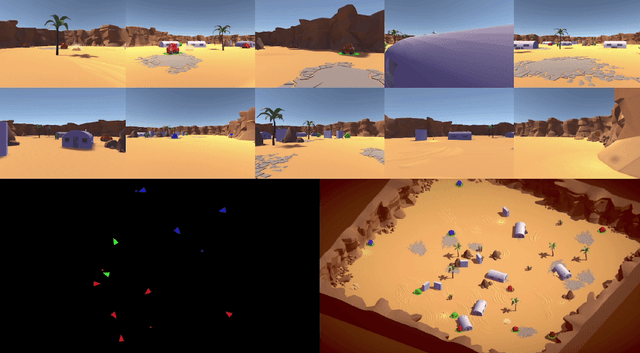

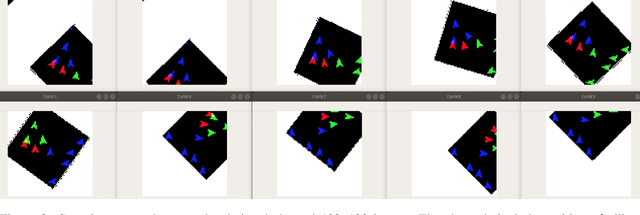

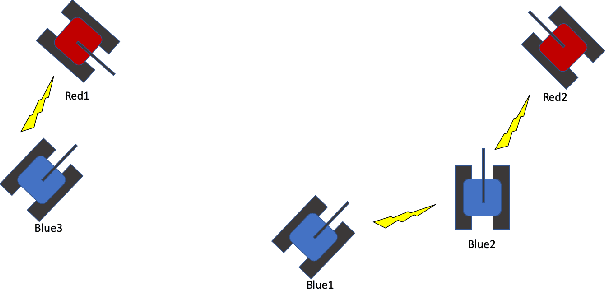

TanksWorld: A Multi-Agent Environment for AI Safety Research

Feb 25, 2020

Abstract: The ability to create artificial intelligence (AI) capable of performing complex tasks is rapidly outpacing our ability to ensure the safe and assured operation of AI-enabled systems. Fortunately, a landscape of AI safety research is emerging in response to this asymmetry, and yet there is a long way to go. In particular, recent simulation environments created to illustrate AI safety risks are relatively simple or narrowly focused on a particular issue. Hence, we see a critical need for AI safety research environments that abstract essential aspects of complex real-world applications. In this work, we introduce the AI safety TanksWorld as an environment for AI safety research with three essential aspects: competing performance objectives, human-machine teaming, and multi-agent competition. The AI safety TanksWorld aims to accelerate the advancement of safe multi-agent decision-making algorithms by providing a software framework to support competitions with both system performance and safety objectives. As a work in progress, this paper introduces our research objectives and learning environment with reference code and baseline performance metrics to follow in a future work.
