Anna Glazkova

Evaluating LLM Prompts for Data Augmentation in Multi-label Classification of Ecological Texts

Nov 22, 2024

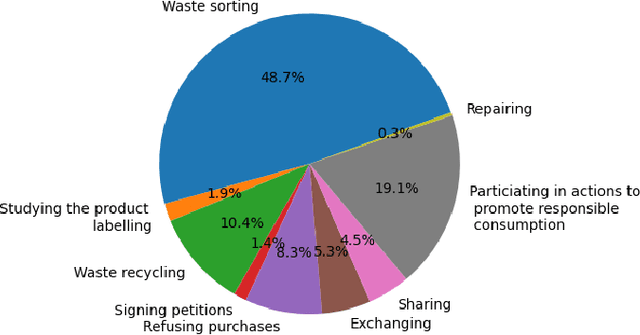

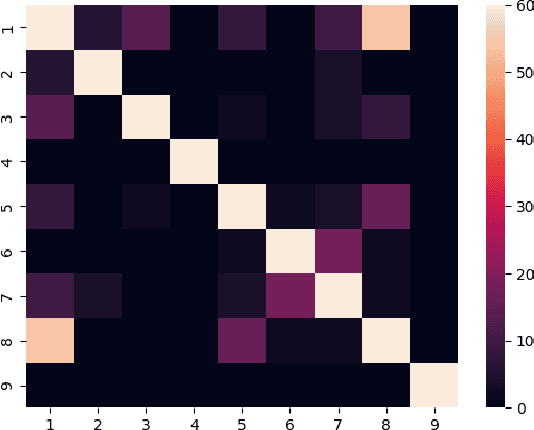

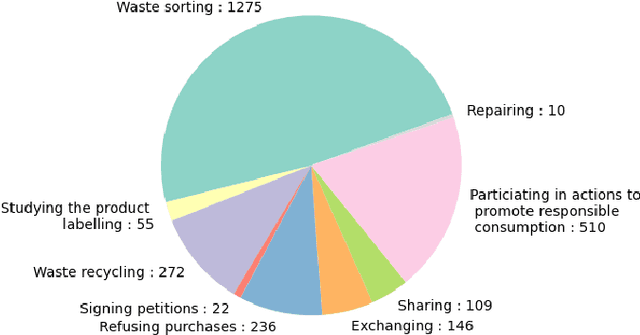

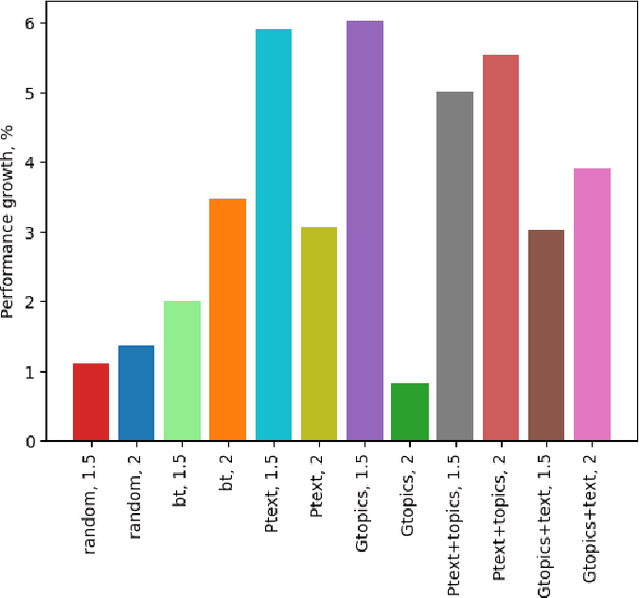

Abstract: Large language models (LLMs) play a crucial role in natural language processing (NLP), improving the understanding, generation, and manipulation of human language in tasks such as translation, summarization, and text classification. Previous studies have demonstrated that instruction-based LLMs can be effectively used for data augmentation to generate diverse and realistic text samples. This study applied prompt-based data augmentation to detect mentions of green practices in Russian social media. Detecting green practices in social media helps to understand their prevalence and to formulate recommendations for scaling eco-friendly actions that mitigate environmental issues. We evaluated several prompts for augmenting texts in a multi-label classification task, either by rewriting existing datasets using LLMs, generating new data, or combining both approaches. Our results revealed that all strategies improved classification performance compared to the models fine-tuned only on the original dataset, outperforming baselines in most cases. The best results were obtained with the prompt that paraphrased the original text while clearly indicating the relevant categories.
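
A minimal sketch of the kind of prompt the best-performing strategy describes, i.e. asking an LLM to paraphrase a post while naming its categories. The prompt wording, the English phrasing (the study works with Russian posts), and the example label name are hypothetical; the abstract does not give the actual prompts or label set. The paraphrase returned by the LLM would be added to the training data with the same labels as its source post.

```python
from typing import Sequence


def build_paraphrase_prompt(text: str, labels: Sequence[str]) -> str:
    """Ask an LLM to paraphrase `text` while stating its categories.

    The wording below is hypothetical, not the prompt used in the study.
    """
    if labels:
        hint = "The post mentions these green practices: " + ", ".join(labels) + "."
    else:
        # Multi-label data can contain posts with no relevant category.
        hint = "The post does not mention any green practices."
    return (
        "Paraphrase the following social media post. "
        + hint
        + " Keep this meaning in the paraphrase.\n\n"
        + "Post: "
        + text
    )


# Hypothetical example post and label; the LLM's paraphrase inherits the labels.
prompt = build_paraphrase_prompt(
    "I took my old batteries to a recycling point.", ["waste sorting"]
)
print(prompt)
```

Stating the labels in the prompt is meant to keep the paraphrase consistent with the labels it inherits, which is the property the abstract credits for the best results.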

Key Algorithms for Keyphrase Generation: Instruction-Based LLMs for Russian Scientific Keyphrases

Oct 23, 2024

Abstract: Keyphrase selection is a challenging task in natural language processing with a wide range of applications. Adapting existing supervised and unsupervised solutions to the Russian language faces several limitations due to the rich morphology of Russian and the limited number of available training datasets. Recent studies on English texts show that large language models (LLMs) successfully address the task of generating keyphrases. LLMs can achieve impressive results without task-specific fine-tuning, using text prompts instead. In this work, we assess the performance of prompt-based methods for generating keyphrases for Russian scientific abstracts. First, we compare the performance of zero-shot and few-shot prompt-based methods, fine-tuned models, and unsupervised methods. Then we assess strategies for selecting keyphrase examples in a few-shot setting. We present the outcomes of a human evaluation of the generated keyphrases and analyze the strengths and weaknesses of the models through expert assessment. Our results suggest that prompt-based methods can outperform common baselines even with simple text prompts.
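
One way to realize a similarity-based example-selection strategy for few-shot prompting is sketched below, assuming word-level Jaccard similarity as the selection criterion. The abstract does not say which strategies were compared, so the criterion, the helper names, and the prompt template are all hypothetical.

```python
import re
from typing import List, Set, Tuple

Example = Tuple[str, List[str]]  # (abstract, gold keyphrases)


def words(text: str) -> Set[str]:
    # Python's re is Unicode-aware, so \w+ also matches Cyrillic words.
    return set(re.findall(r"\w+", text.lower()))


def jaccard(a: str, b: str) -> float:
    wa, wb = words(a), words(b)
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def select_examples(target: str, pool: List[Example], k: int) -> List[Example]:
    # Most lexically similar examples first; ties keep pool order (stable sort).
    return sorted(pool, key=lambda ex: jaccard(target, ex[0]), reverse=True)[:k]


def build_few_shot_prompt(target: str, pool: List[Example], k: int = 2) -> str:
    # Hypothetical template: instruction, k solved examples, then the target.
    parts = ["Generate keyphrases for the scientific abstract."]
    for abstract, keyphrases in select_examples(target, pool, k):
        parts.append(f"Abstract: {abstract}\nKeyphrases: {'; '.join(keyphrases)}")
    parts.append(f"Abstract: {target}\nKeyphrases:")
    return "\n\n".join(parts)


# Toy example pool (hypothetical data).
pool = [
    ("graph neural networks for molecules", ["graph neural networks"]),
    ("history of medieval trade", ["trade"]),
    ("neural networks for text generation", ["text generation"]),
]
target = "neural networks for keyphrase generation"
print(build_few_shot_prompt(target, pool, k=2))
```

Swapping `select_examples` for random sampling gives the usual baseline against which a similarity-based strategy would be compared.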

Exploring Fine-tuned Generative Models for Keyphrase Selection: A Case Study for Russian

Sep 18, 2024

Abstract: Keyphrase selection plays a pivotal role in the domain of scholarly texts, facilitating efficient information retrieval, summarization, and indexing. In this work, we explored how to apply fine-tuned generative transformer-based models to keyphrase selection in Russian scientific texts. We experimented with four distinct generative models, namely ruT5, ruGPT, mT5, and mBART, and evaluated their performance in both in-domain and cross-domain settings. The experiments were conducted on Russian scientific abstracts from four domains: mathematics & computer science, history, medicine, and linguistics. The use of generative models, in particular mBART, led to gains in in-domain performance (up to 4.9% in BERTScore, 9.0% in ROUGE-1, and 12.2% in F1-score) over three keyphrase extraction baselines for the Russian language. Although the cross-domain results were significantly lower, they still surpassed the baselines in several cases, underscoring the potential for further exploration and refinement in this research field.
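
The F1-score for keyphrases is usually computed by exact matching of predicted and reference phrases. The sketch below assumes only lowercase and whitespace normalization; evaluations for Russian often add lemmatization or stemming, and the normalization used in the paper may differ.

```python
from typing import Iterable


def normalize(phrase: str) -> str:
    # Lowercase and collapse whitespace; lemmatization, often applied to
    # Russian keyphrases, is deliberately omitted in this sketch.
    return " ".join(phrase.lower().split())


def keyphrase_f1(predicted: Iterable[str], gold: Iterable[str]) -> float:
    """Exact-match F1 between the sets of predicted and gold keyphrases."""
    pred = {normalize(p) for p in predicted}
    ref = {normalize(g) for g in gold}
    matched = len(pred & ref)
    if matched == 0:
        return 0.0
    precision = matched / len(pred)
    recall = matched / len(ref)
    return 2 * precision * recall / (precision + recall)


# One of two predictions matches one of three gold phrases: P = 1/2, R = 1/3.
print(keyphrase_f1(["Machine  Learning", "graphs"],
                   ["machine learning", "keyphrases", "russian"]))  # 0.4 up to float rounding
```

Because exact matching ignores near-misses such as inflected forms, metrics like ROUGE-1 and BERTScore are typically reported alongside it, as in the abstract.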

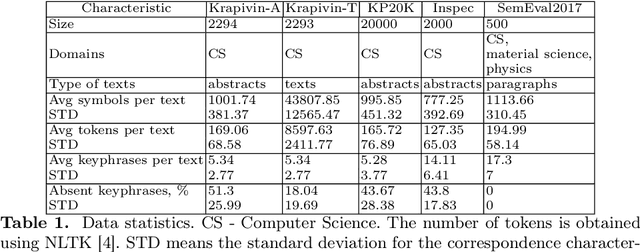

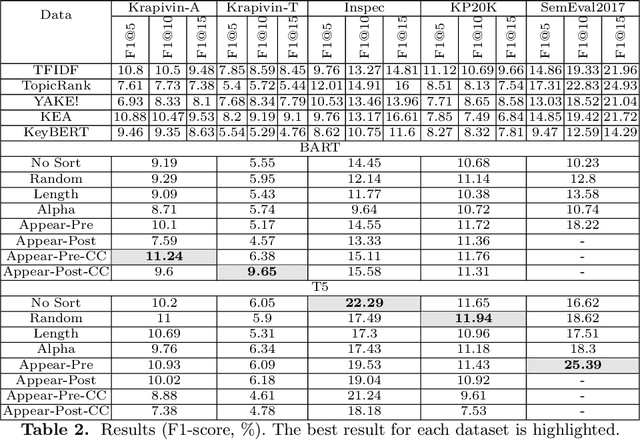

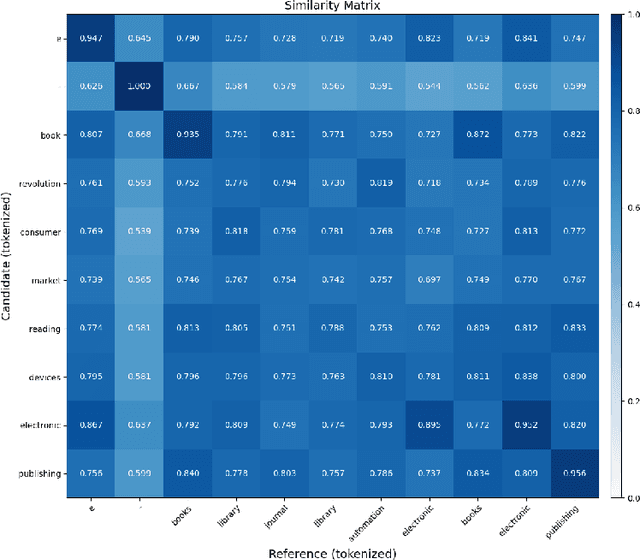

Cross-Domain Robustness of Transformer-based Keyphrase Generation

Dec 17, 2023

Abstract: Modern text generation models show state-of-the-art results in many natural language processing tasks. In this work, we explore the effectiveness of abstractive text summarization models for keyphrase selection. A list of keyphrases is an important element of a text in databases and repositories of electronic documents. In our experiments, abstractive text summarization models fine-tuned for keyphrase generation achieve fairly high scores on the target text corpus. However, in most cases, their zero-shot performance on other corpora and domains is significantly lower. We investigate the cross-domain limitations of abstractive text summarization models for keyphrase generation. We present an evaluation of fine-tuned BART models for the keyphrase selection task across six benchmark corpora for keyphrase extraction, including scientific texts from two domains and news texts. We explore the role of transfer learning between different domains in improving BART performance on small text corpora. Our experiments show that preliminary fine-tuning on out-of-domain corpora can be effective when only a limited number of samples is available.

tmn at #SMM4H 2023: Comparing Text Preprocessing Techniques for Detecting Tweets Self-reporting a COVID-19 Diagnosis

Nov 01, 2023

Abstract: The paper describes a system developed for Task 1 at SMM4H 2023. The goal of the task is to automatically distinguish tweets that self-report a COVID-19 diagnosis (for example, a positive test, clinical diagnosis, or hospitalization) from those that do not. We investigate the use of different techniques for preprocessing tweets using four transformer-based models. The ensemble of fine-tuned language models obtained an F1-score of 84.5%, which is 4.1% higher than the average value.
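
A sketch of one common tweet preprocessing step: masking user mentions and URLs with placeholder tokens. The `@user` and `http` placeholders follow the convention used with Twitter-adapted RoBERTa models; the specific techniques compared in the paper are not listed in the abstract, so the function and its flags are illustrative only.

```python
import re

MENTION_RE = re.compile(r"@\w+")                 # @handle (\w includes "_")
URL_RE = re.compile(r"https?://\S+|www\.\S+")    # http(s) links and bare www. links


def preprocess_tweet(text: str, mask_mentions: bool = True,
                     mask_urls: bool = True) -> str:
    """Return a normalized tweet; each flag toggles one preprocessing variant."""
    if mask_mentions:
        text = MENTION_RE.sub("@user", text)
    if mask_urls:
        text = URL_RE.sub("http", text)
    # Collapse runs of whitespace left behind by the substitutions.
    return " ".join(text.split())


print(preprocess_tweet("@john_doe tested positive today https://t.co/abc  ugh"))
# @user tested positive today http ugh
```

Exposing each step as a flag makes it straightforward to fine-tune the same model on several preprocessing variants and compare them.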

tmn at SemEval-2023 Task 9: Multilingual Tweet Intimacy Detection using XLM-T, Google Translate, and Ensemble Learning

Apr 08, 2023

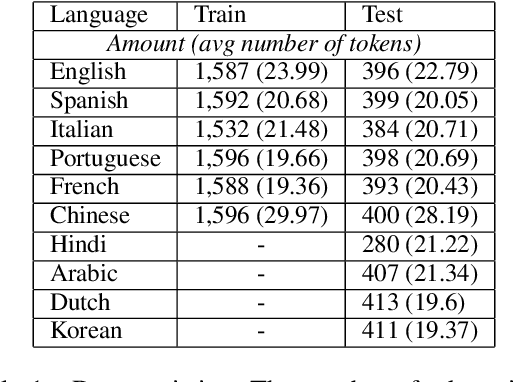

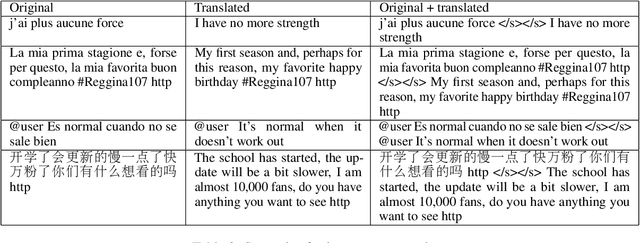

Abstract: The paper describes a transformer-based system designed for SemEval-2023 Task 9: Multilingual Tweet Intimacy Analysis. The purpose of the task was to predict the intimacy of tweets on a scale from 1 (not intimate at all) to 5 (very intimate). The official training set for the competition consisted of tweets in six languages (English, Spanish, Italian, Portuguese, French, and Chinese). The test set included these six languages as well as external data in four languages not present in the training set (Hindi, Arabic, Dutch, and Korean). We present a solution based on an ensemble of XLM-T, a multilingual RoBERTa model adapted to the Twitter domain. To improve performance on unseen languages, each tweet was supplemented with its English translation. We explored the effectiveness of translated data for the languages seen in fine-tuning compared to unseen languages and evaluated strategies for using translated data in transformer-based models. Our solution ranked 4th on the leaderboard, achieving an overall Pearson's r of 0.599 on the test set. The proposed system improves by up to 0.088 Pearson's r over the average score across all 45 submissions.
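
The task's evaluation metric, Pearson's correlation coefficient r between predicted and gold intimacy scores, is the covariance of the two score lists divided by the product of their standard deviations. A dependency-free sketch of that definition (the function name is illustrative):

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r: sum of co-deviations over the root of the summed squared deviations."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("Pearson's r is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Predictions that are a linear function of the gold scores give r = 1.
print(pearson_r([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))
```

Since r only measures linear association, a system can score well even if its predictions are shifted or scaled relative to the 1 to 5 gold range.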

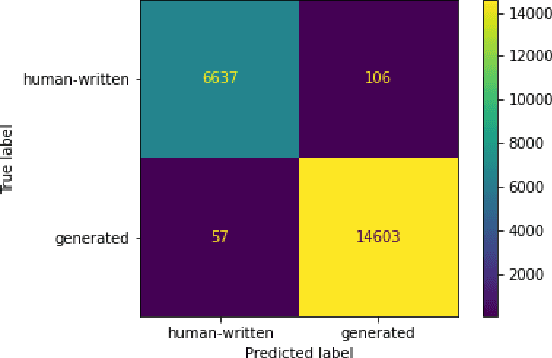

Detecting Generated Scientific Papers using an Ensemble of Transformer Models

Sep 17, 2022

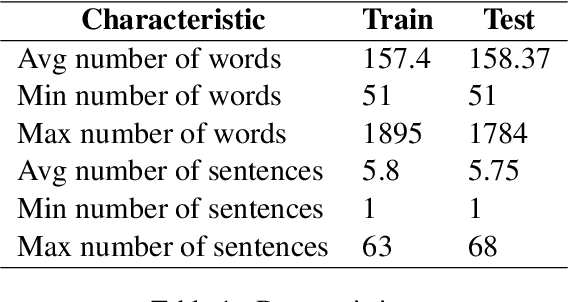

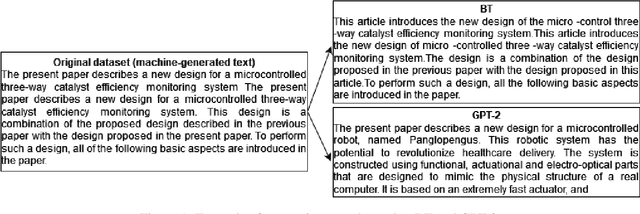

Abstract: The paper describes neural models developed for the DAGPap22 shared task hosted at the Third Workshop on Scholarly Document Processing. This shared task targets the automatic detection of generated scientific papers. Our work focuses on comparing different transformer-based models as well as using additional datasets and techniques to deal with imbalanced classes. As a final submission, we used an ensemble of SciBERT, RoBERTa, and DeBERTa fine-tuned with the random oversampling technique. Our model achieved an F1-score of 99.24%. The official evaluation results placed our system third.
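
Random oversampling balances the classes by duplicating randomly drawn examples of the minority classes until each class matches the largest one. A minimal sketch in plain Python (libraries such as imbalanced-learn offer `RandomOverSampler` for the same purpose); the function name and seed handling are illustrative, not taken from the paper.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def random_oversample(texts: Sequence[str], labels: Sequence[Hashable],
                      seed: int = 0) -> Tuple[List[str], List[Hashable]]:
    """Duplicate random examples of each smaller class up to the largest class size."""
    rng = random.Random(seed)  # seeded for reproducible resampling
    by_label: Dict[Hashable, List[str]] = defaultdict(list)
    for text, label in zip(texts, labels):
        by_label[label].append(text)
    if not by_label:
        return [], []
    target = max(len(items) for items in by_label.values())
    out_texts, out_labels = list(texts), list(labels)
    for label, items in by_label.items():
        # Sample with replacement from this class's own examples.
        extra = [rng.choice(items) for _ in range(target - len(items))]
        out_texts.extend(extra)
        out_labels.extend([label] * len(extra))
    return out_texts, out_labels


texts, labels = random_oversample(["a", "b", "c", "d", "e"], [0, 0, 0, 0, 1], seed=1)
print(labels)  # [0, 0, 0, 0, 1, 1, 1, 1]
```

Oversampling should be applied to the training split only, so that duplicated examples do not leak into the evaluation data.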

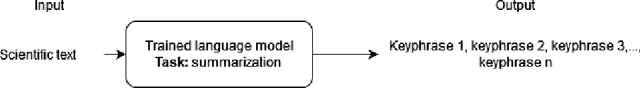

Applying Transformer-based Text Summarization for Keyphrase Generation

Sep 08, 2022

Abstract: Keyphrases are crucial for searching and systematizing scholarly documents. Most current methods for keyphrase extraction aim to extract the most significant words in the text. In practice, however, the list of keyphrases often includes words that do not appear in the text explicitly. In this case, the list of keyphrases represents an abstractive summary of the source text. In this paper, we experiment with popular transformer-based models for abstractive text summarization using four benchmark datasets for keyphrase extraction. We compare the results with those of common unsupervised and supervised methods for keyphrase extraction. Our evaluation shows that summarization models are quite effective at generating keyphrases in terms of the full-match F1-score and BERTScore. However, they produce many words that are absent from the author's list of keyphrases, which makes summarization models ineffective in terms of ROUGE-1. We also investigate several ordering strategies for concatenating target keyphrases. The results show that the choice of strategy affects keyphrase generation performance.
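
To fine-tune a summarization model for keyphrase generation, the gold keyphrases must be concatenated into a single target string, so their order becomes a design choice. The sketch below shows a few possible ordering strategies; the strategy names, the `"; "` separator, and the helpers are hypothetical, since the abstract does not name the strategies that were investigated.

```python
import random
from typing import List, Optional


def order_keyphrases(keyphrases: List[str], source: str,
                     strategy: str = "original",
                     seed: Optional[int] = None) -> List[str]:
    """Reorder gold keyphrases before building a seq2seq target (hypothetical strategies)."""
    if strategy == "original":      # author-given order
        return list(keyphrases)
    if strategy == "alphabetical":
        return sorted(keyphrases, key=str.lower)
    if strategy == "random":
        shuffled = list(keyphrases)
        random.Random(seed).shuffle(shuffled)
        return shuffled
    if strategy == "position":      # order of first occurrence in the source text
        text = source.lower()

        def key(kp: str):
            pos = text.find(kp.lower())
            # Absent keyphrases (pos == -1) go last, keeping their relative order.
            return (pos == -1, pos)

        return sorted(keyphrases, key=key)
    raise ValueError(f"unknown strategy: {strategy}")


def build_target(keyphrases: List[str], source: str, strategy: str = "original",
                 sep: str = "; ", seed: Optional[int] = None) -> str:
    return sep.join(order_keyphrases(keyphrases, source, strategy, seed))


source = "We study keyphrase generation with transformers and evaluate summarization."
kps = ["summarization", "transformers", "neural networks", "keyphrase generation"]
print(build_target(kps, source, "position"))
# keyphrase generation; transformers; summarization; neural networks
```

A fixed order such as "position" gives the model a consistent target to learn, while "random" tests how much the model relies on a particular order.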

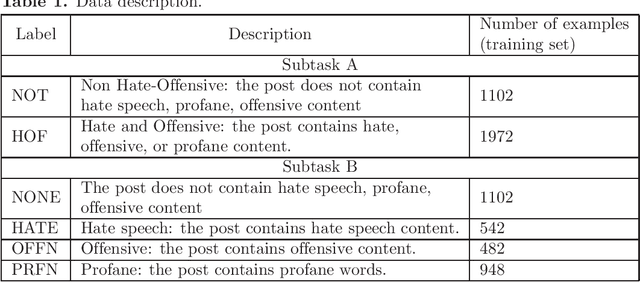

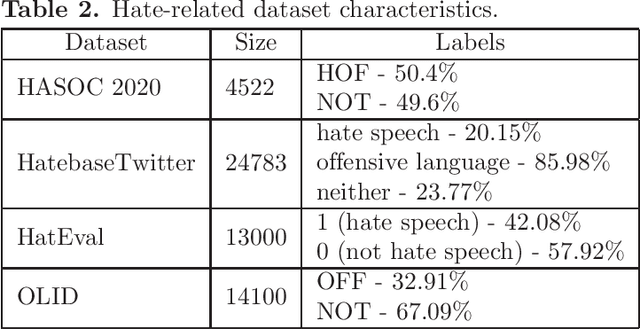

Fine-tuning of Pre-trained Transformers for Hate, Offensive, and Profane Content Detection in English and Marathi

Oct 25, 2021

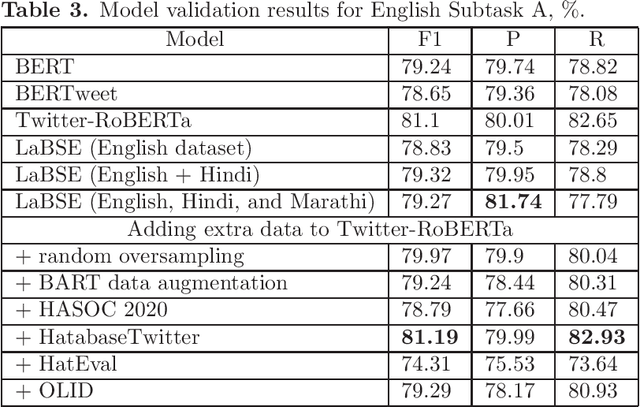

Abstract: This paper describes neural models developed for the Hate Speech and Offensive Content Identification in English and Indo-Aryan Languages Shared Task 2021. Our team, neuro-utmn-thales, participated in two tasks on binary and fine-grained classification of English tweets that contain hate, offensive, and profane content (English Subtasks A & B) and one task on identifying problematic content in Marathi (Marathi Subtask A). For the English subtasks, we investigate the impact of additional hate speech corpora on fine-tuning transformer models. We also apply a one-vs-rest approach based on Twitter-RoBERTa to discriminate between hate, profane, and offensive posts. Our models ranked third in English Subtask A with an F1-score of 81.99% and second in English Subtask B with an F1-score of 65.77%. For the Marathi subtask, we propose a system based on Language-Agnostic BERT Sentence Embedding (LaBSE). This model achieved the second-best result in Marathi Subtask A, with an F1-score of 88.08%.
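
In a one-vs-rest setup, one binary classifier is trained per class (for example, hate vs. the rest), and a post receives the label whose classifier is most confident. A sketch of that decision step, assuming each fine-tuned binary model is wrapped as a function returning a probability; the wrapper type, label names, and toy scorers are hypothetical.

```python
from typing import Callable, Dict

# Hypothetical wrapper: returns P(class | text) from a "this class vs. the rest" model.
BinaryScorer = Callable[[str], float]


def one_vs_rest_predict(text: str, scorers: Dict[str, BinaryScorer]) -> str:
    """Pick the label whose binary classifier assigns the highest probability."""
    if not scorers:
        raise ValueError("no scorers given")
    scores = {label: scorer(text) for label, scorer in scorers.items()}
    return max(scores, key=scores.get)


# Toy stand-ins for three fine-tuned binary classifiers.
scorers: Dict[str, BinaryScorer] = {
    "hate": lambda t: 0.2,
    "offensive": lambda t: 0.7 if "idiot" in t else 0.1,
    "profane": lambda t: 0.3,
}
print(one_vs_rest_predict("you idiot", scorers))  # offensive
```

Training separate binary models lets each one focus on a single distinction, at the cost of running several models at inference time.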

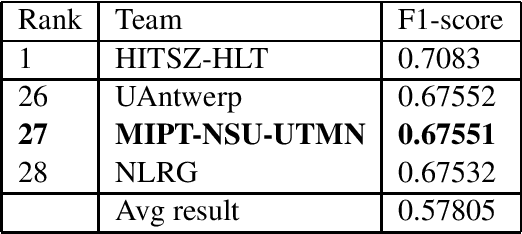

MIPT-NSU-UTMN at SemEval-2021 Task 5: Ensembling Learning with Pre-trained Language Models for Toxic Spans Detection

Apr 10, 2021

Abstract: This paper describes our system for SemEval-2021 Task 5 on Toxic Spans Detection. We developed ensemble models using BERT-based neural architectures and post-processing to combine tokens into spans. We evaluated several pre-trained language models with various ensemble techniques for toxic span identification and achieved sizable improvements over our baseline fine-tuned BERT models. Finally, our system obtained an F1-score of 67.55% on the test data.
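
A sketch of the two steps the abstract mentions, assuming token-level binary predictions with character offsets: hard majority voting across ensemble members, then merging consecutive toxic tokens into spans and expanding them into the character offsets used for scoring in this task. The voting rule and the merging rule (which also covers the whitespace between adjacent toxic tokens) are assumptions, not necessarily the system's exact post-processing.

```python
from typing import List, Sequence, Tuple

Offset = Tuple[int, int]  # (start, end) character offsets of a token, end exclusive


def majority_vote(predictions: Sequence[Sequence[int]]) -> List[int]:
    """Token-level hard voting: a token is toxic if more than half of the models say so."""
    n_models = len(predictions)
    return [int(sum(votes) * 2 > n_models) for votes in zip(*predictions)]


def tokens_to_spans(offsets: Sequence[Offset], labels: Sequence[int]) -> List[Offset]:
    """Merge runs of consecutive toxic tokens into single spans."""
    spans: List[Offset] = []
    prev_toxic = False
    for (start, end), label in zip(offsets, labels):
        if label:
            if prev_toxic:
                spans[-1] = (spans[-1][0], end)  # extend the open span
            else:
                spans.append((start, end))      # start a new span
        prev_toxic = bool(label)
    return spans


def spans_to_char_offsets(spans: Sequence[Offset]) -> List[int]:
    return [i for start, end in spans for i in range(start, end)]


text = "you are a stupid idiot ok"
offsets = [(0, 3), (4, 7), (8, 9), (10, 16), (17, 22), (23, 25)]
votes = majority_vote([[0, 0, 0, 1, 1, 0],
                       [0, 0, 0, 1, 0, 0],
                       [0, 1, 0, 1, 1, 0]])
spans = tokens_to_spans(offsets, votes)
print([text[s:e] for s, e in spans])  # ['stupid idiot']
```

With subword tokenizers, the offsets would come from the tokenizer's offset mapping, and adjacent subword pieces merge into one span because the gap between them is empty.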
