Ankita Paul

Learning Glioblastoma Tumor Heterogeneity Using Brain Inspired Topological Neural Networks

Feb 11, 2026

Abstract: Accurate prognosis for glioblastoma (GBM) using deep learning (DL) is hindered by extreme spatial and structural heterogeneity. Moreover, inconsistent MRI acquisition protocols across institutions limit the generalizability of models. Conventional transformer and DL pipelines often fail to capture multi-scale morphological diversity, such as fragmented necrotic cores, infiltrating margins, and disjoint enhancing components, which leads to scanner-specific artifacts and poor cross-site prognosis. We propose TopoGBM, a learning framework designed to learn heterogeneity-preserving, scanner-robust representations from multi-parametric 3D MRI. Central to our approach is a 3D convolutional autoencoder with a topological regularization term that preserves the complex, non-Euclidean invariants of the tumor's manifold within a compressed latent space. By enforcing these topological priors, TopoGBM explicitly models the high-variance structural signatures characteristic of aggressive GBM. Evaluated across heterogeneous cohorts (UPENN, UCSF, RHUH) with external validation on TCGA, TopoGBM achieves better performance (C-index 0.67 on test, 0.58 on validation), outperforming baselines that degrade under domain shift. Mechanistic interpretability analysis reveals that reconstruction residuals are highly localized to pathologically heterogeneous zones, with significantly low tumor-restricted and healthy-tissue error (test: 0.03, validation: 0.09). Furthermore, occlusion-based attribution localizes approximately 50% of the prognostic signal to the tumor and the diverse peritumoral microenvironment, supporting the clinical reliability of the unsupervised learning method. Our findings demonstrate that incorporating topological priors enables the learning of morphology-faithful embeddings that capture tumor heterogeneity while maintaining cross-institutional robustness.
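
The abstract names a topological regularization but does not give its form. One widely used choice is the topological autoencoder loss of Moor et al. (2020): compute 0-dimensional persistence pairs (equivalently, minimum-spanning-tree edges) in input and latent space, and penalize distance mismatches on those edges. The sketch below assumes that choice; it is not TopoGBM's published regularizer, the function names are hypothetical, and it computes only the loss value in pure Python. A real training loop would express it in an autodiff framework so gradients reach the encoder.

```python
import math


def pairwise_distances(points):
    """Euclidean distance matrix for a list of equal-length vectors."""
    n = len(points)
    return [[math.dist(points[i], points[j]) for j in range(n)] for i in range(n)]


def persistence_pairs_0d(dist):
    """0-dimensional persistence pairs of a Vietoris-Rips filtration.

    In dimension 0 these are exactly the edges of a minimum spanning tree,
    found here with Prim's algorithm in O(n^2).
    """
    n = len(dist)
    in_tree = [False] * n
    best = [math.inf] * n
    parent = [-1] * n
    best[0] = 0.0
    edges = []
    for _ in range(n):
        # Pick the closest vertex not yet in the tree.
        u = min((i for i in range(n) if not in_tree[i]), key=lambda i: best[i])
        in_tree[u] = True
        if parent[u] >= 0:
            edges.append((parent[u], u))
        for v in range(n):
            if not in_tree[v] and dist[u][v] < best[v]:
                best[v] = dist[u][v]
                parent[v] = u
    return edges


def topological_loss(x_batch, z_batch):
    """Topological-autoencoder-style loss (after Moor et al., 2020).

    Penalizes the mismatch between input-space and latent-space distances
    on the edges that define 0-dimensional topology in either space.
    """
    dx, dz = pairwise_distances(x_batch), pairwise_distances(z_batch)
    pairs_x, pairs_z = persistence_pairs_0d(dx), persistence_pairs_0d(dz)
    loss_xz = sum((dx[i][j] - dz[i][j]) ** 2 for i, j in pairs_x)
    loss_zx = sum((dz[i][j] - dx[i][j]) ** 2 for i, j in pairs_z)
    return 0.5 * loss_xz + 0.5 * loss_zx
```

Calling `topological_loss(x, x)` returns 0.0 because identical spaces share every distance; in training, this term would be weighted and added to the reconstruction loss.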

Learning in Feedback-driven Recurrent Spiking Neural Networks using full-FORCE Training

May 26, 2022

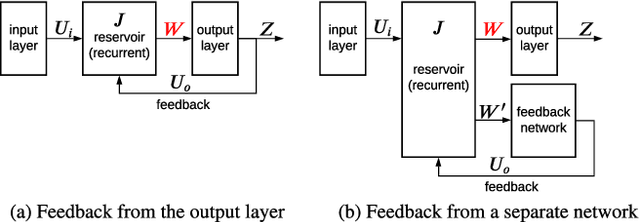

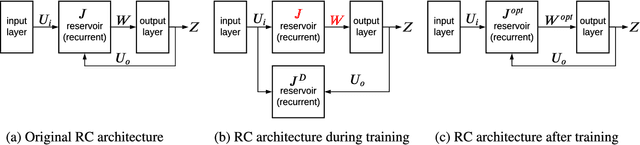

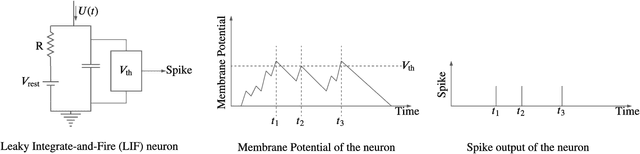

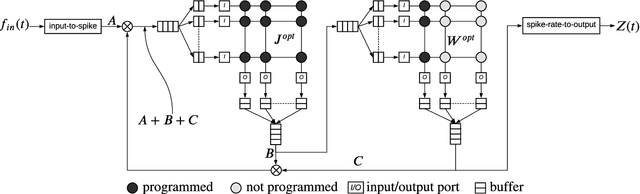

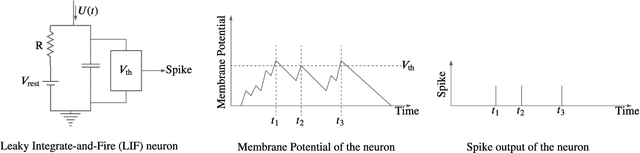

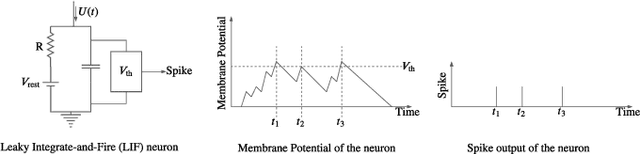

Abstract: Feedback-driven recurrent spiking neural networks (RSNNs) are powerful computational models that can mimic dynamical systems. However, the feedback loop from the readout to the recurrent layer destabilizes the learning mechanism and prevents it from converging. Here, we propose a supervised training procedure for RSNNs in which a second network is introduced only during training to provide hints about the target dynamics. The proposed training procedure generates targets for both the recurrent and readout layers (i.e., for the full RSNN system). It uses the recursive least squares (RLS)-based First-Order Reduced and Controlled Error (FORCE) algorithm to fit the activity of each layer to its target. The proposed full-FORCE training procedure reduces the number of modifications needed to keep the error between the output and target close to zero. These modifications control the feedback loop, which in turn allows training to converge. We demonstrate the improved performance and noise robustness of the proposed full-FORCE training procedure in modeling 8 dynamical systems using RSNNs with leaky integrate-and-fire (LIF) neurons and rate coding. For energy-efficient hardware implementation, an alternative time-to-first-spike (TTFS) coding is implemented for the full-FORCE training procedure. Compared to rate coding, full-FORCE with TTFS coding generates fewer spikes and facilitates faster convergence to the target dynamics.
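
At the core of FORCE is a recursive least squares (RLS) update: a running inverse-correlation matrix P scales each weight change so the readout error is driven toward zero after every step. The sketch below shows only that RLS update for a single linear readout on fixed features. It assumes P starts at I/alpha and omits the spiking network, the feedback loop, and full-FORCE's target-generating network; `rls_step` and `train_readout` are hypothetical names.

```python
import math


def rls_step(w, P, r, target):
    """One RLS update of readout weights w, as used in FORCE learning.

    w: n readout weights (updated in place)
    P: n x n running inverse-correlation estimate (updated in place)
    r: n presynaptic activities (e.g. filtered spike trains)
    Returns the readout error measured before the update.
    """
    n = len(r)
    k = [sum(P[i][j] * r[j] for j in range(n)) for i in range(n)]  # k = P r
    c = 1.0 / (1.0 + sum(r[i] * k[i] for i in range(n)))           # 1 / (1 + r^T P r)
    for i in range(n):                                               # P <- P - c k k^T
        for j in range(n):
            P[i][j] -= c * k[i] * k[j]
    error = sum(w[i] * r[i] for i in range(n)) - target             # output minus target
    for i in range(n):                                               # w <- w - error * P_new r,
        w[i] -= c * error * k[i]                                     # using P_new r = c k
    return error


def train_readout(samples, alpha=0.01):
    """Fit a linear readout to (r, target) pairs, starting from P = I / alpha."""
    n = len(samples[0][0])
    w = [0.0] * n
    P = [[(1.0 / alpha) if i == j else 0.0 for j in range(n)] for i in range(n)]
    for r, target in samples:
        rls_step(w, P, r, target)
    return w


# Toy check: features are a sine, a cosine and a bias; the target is a fixed
# linear combination of them, so RLS should recover weights near [2, -1, 0.5].
samples = [([math.sin(0.1 * s), math.cos(0.1 * s), 1.0],
            2.0 * math.sin(0.1 * s) - math.cos(0.1 * s) + 0.5) for s in range(500)]
print(train_readout(samples))
```

A smaller `alpha` makes early updates larger (faster but noisier learning); in FORCE the same update is applied online while the network runs, which is what keeps the output error small from the first steps.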

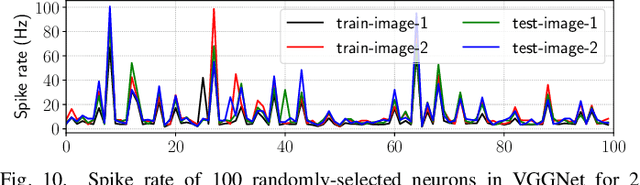

A Design Methodology for Fault-Tolerant Computing using Astrocyte Neural Networks

Apr 06, 2022

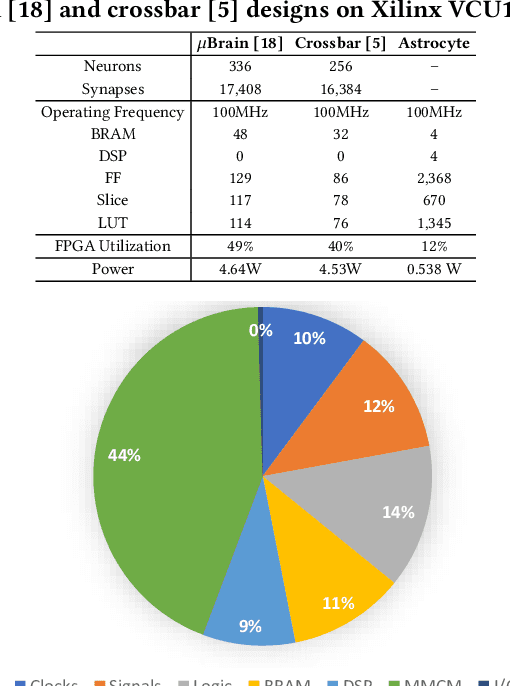

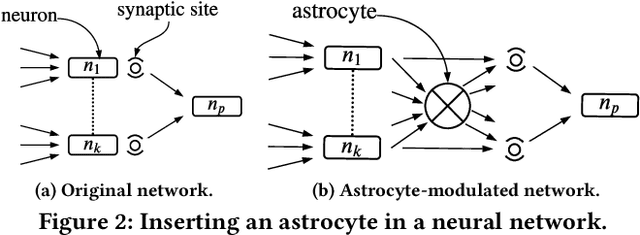

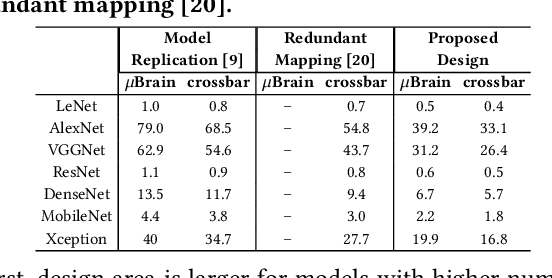

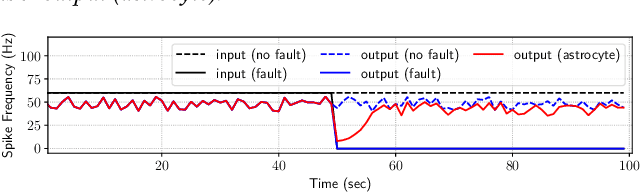

Abstract: We propose a design methodology to facilitate fault tolerance of deep learning models. First, we implement a many-core fault-tolerant neuromorphic hardware design in which the neuron and synapse circuitries in each neuromorphic core are enclosed with astrocyte circuitries. Astrocytes are the star-shaped glial cells of the brain that facilitate self-repair by restoring the spike firing frequency of a failed neuron using a closed-loop retrograde feedback signal. Next, we introduce astrocytes in a deep learning model to achieve the required degree of tolerance to hardware faults. Finally, we use system software to partition the astrocyte-enabled model into clusters and implement them on the proposed fault-tolerant neuromorphic design. We evaluate this design methodology using seven deep learning inference models and show that it is both area- and power-efficient.
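
The closed-loop repair idea can be illustrated with a deliberately simple toy model, which is an assumption and not the paper's astrocyte circuit: the neuron's output rate is taken as release probability times the number of healthy synapses times the input rate, and an "astrocyte" integrates the rate error and raises the release probability of the surviving synapses. All names and parameter values here are hypothetical.

```python
def simulate_astrocyte_repair(num_synapses=100, failed=40, input_rate=1.0,
                              target_rate=50.0, gain=0.5, fault_step=50, steps=200):
    """Toy closed-loop model of astrocyte-mediated self-repair (hypothetical).

    Output rate = release_prob * healthy_synapses * input_rate. After a fault,
    the feedback integrates the rate error and raises the release probability
    of the remaining synapses, clipped to the valid range [0, 1].
    """
    release_prob = target_rate / (num_synapses * input_rate)  # healthy operating point
    healthy = num_synapses
    rates = []
    for step in range(steps):
        if step == fault_step:
            healthy = num_synapses - failed          # inject a synaptic fault
        rate = release_prob * healthy * input_rate   # observed firing rate
        rates.append(rate)
        error = target_rate - rate                   # deviation sensed by the astrocyte
        # Retrograde feedback: integral update of the release probability.
        release_prob = min(1.0, max(0.0, release_prob + gain * error / num_synapses))
    return rates
```

With the defaults, the rate drops from 50 to 30 at the fault and then returns to 50. With `failed=60`, the release probability saturates at 1.0 and the rate settles at 40: in this toy, recovery is only possible while the surviving synapses can still supply the target rate.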

Energy-Efficient Respiratory Anomaly Detection in Premature Newborn Infants

Feb 21, 2022

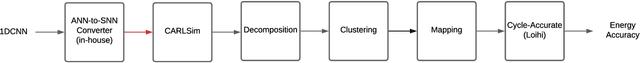

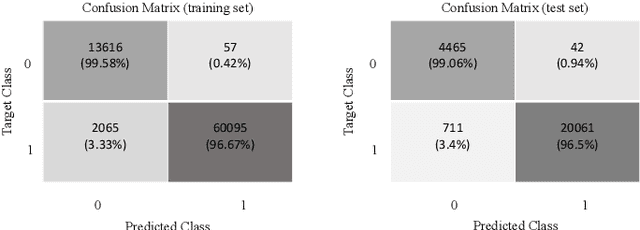

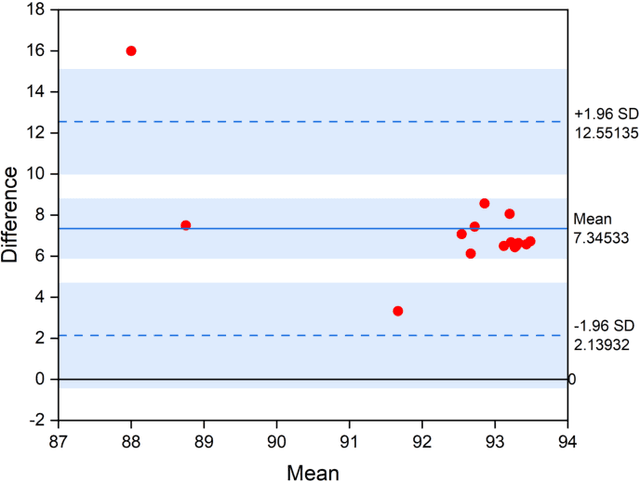

Abstract: Precise monitoring of respiratory rate in premature infants is essential to initiate medical interventions as required. Wired technologies can be invasive and obtrusive for patients. We propose a deep learning-enabled wearable monitoring system for premature newborn infants, where respiratory cessation is predicted using signals that are collected wirelessly from a non-invasive wearable Bellypatch placed on the infant's body. We propose a five-stage design pipeline: data collection and labeling, feature scaling, model selection with hyperparameter tuning, model training and validation, and model testing and deployment. The model is a 1-D Convolutional Neural Network (1DCNN) with one convolutional layer, one pooling layer, and three fully connected layers, achieving 97.15% accuracy. To address the energy limitations of wearable processing, several quantization techniques are explored and their performance and energy consumption are analyzed. We propose a novel Spiking Neural Network (SNN)-based respiratory classification solution, which can be implemented on event-driven neuromorphic hardware. We propose an approach to convert the analog operations of our baseline 1DCNN to their spiking equivalents. We perform a design-space exploration using the parameters of the converted SNN to generate inference solutions with different accuracy and energy footprints. We select a solution that achieves 93.33% accuracy with 18 times less energy than the baseline 1DCNN model. Additionally, the proposed SNN solution achieves similar accuracy with 4 times less energy.
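
A common way to convert a trained CNN to an SNN is rate-based conversion: each ReLU is replaced by an integrate-and-fire (IF) neuron whose firing rate tracks the activation. The abstract does not say which conversion the authors use, so the sketch below assumes this standard approach with reset-by-subtraction; `if_neuron_rate` and `relu_clipped` are hypothetical helper names.

```python
def if_neuron_rate(x, threshold=1.0, timesteps=100):
    """Spike rate of an integrate-and-fire neuron driven by a constant input x.

    Uses reset-by-subtraction and at most one spike per timestep, so the rate
    approximates clip(x / threshold, 0, 1), i.e. a scaled, clipped ReLU.
    """
    v = 0.0
    spikes = 0
    for _ in range(timesteps):
        v += x                   # integrate the analog input as membrane charge
        if v >= threshold:
            spikes += 1
            v -= threshold       # reset by subtraction keeps the residual charge
    return spikes / timesteps


def relu_clipped(x, threshold=1.0):
    """The activation the IF rate approximates."""
    return min(max(x / threshold, 0.0), 1.0)
```

For inputs in [0, threshold), the rate differs from `relu_clipped` by less than one spike, i.e. 1/timesteps. Raising `timesteps` tightens the approximation but costs more spikes and energy, which is the kind of accuracy/energy trade-off a design-space exploration over SNN parameters navigates.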

Implementing Spiking Neural Networks on Neuromorphic Architectures: A Review

Feb 17, 2022

Abstract: Recently, both industry and academia have proposed several different neuromorphic systems to execute machine learning applications that are designed using Spiking Neural Networks (SNNs). With growing complexity on the design and technology fronts, programming such systems to admit and execute a machine learning application is becoming increasingly challenging. Additionally, neuromorphic systems are required to guarantee real-time performance, consume less energy, and provide tolerance to logic and memory failures. Consequently, there is a clear need for system software frameworks that can implement machine learning applications on current and emerging neuromorphic systems while simultaneously addressing performance, energy, and reliability. Here, we provide a comprehensive overview of such frameworks proposed for both platform-based design and hardware-software co-design. We highlight the challenges and opportunities that the future holds in the area of system software technology for neuromorphic computing.

On the Mitigation of Read Disturbances in Neuromorphic Inference Hardware

Jan 27, 2022

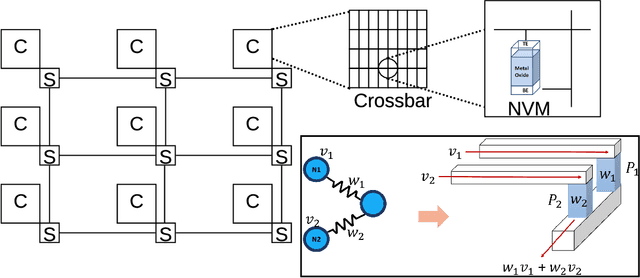

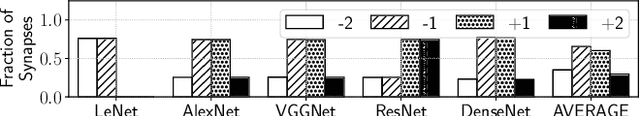

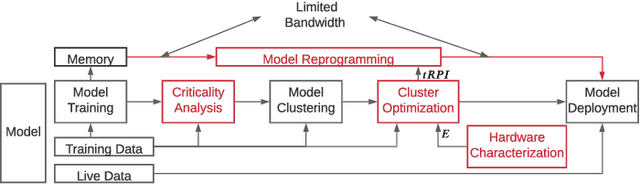

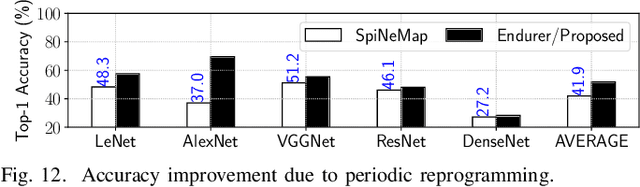

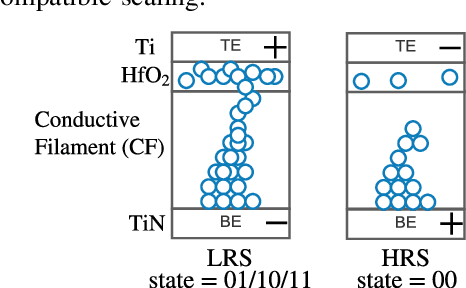

Abstract: Non-Volatile Memory (NVM) cells are used in neuromorphic hardware to store model parameters, which are programmed as resistance states. NVMs suffer from the read disturb issue, where the programmed resistance state drifts upon repeated access of a cell during inference. Resistance drifts can lower the inference accuracy. To address this, model parameters must be periodically reprogrammed (a high-overhead operation). We study read disturb failures of an NVM cell. Our analysis shows a strong dependency both on model characteristics, such as synaptic activation and criticality, and on the voltage used to read resistance states during inference. We propose a system software framework that incorporates these dependencies when programming model parameters on the NVM cells of neuromorphic hardware. Our framework consists of a convex optimization formulation that aims to place synaptic weights that are activated more often and are critical (i.e., have a high impact on accuracy) on NVM cells that are exposed to lower voltages during inference. In this way, we increase the time interval between two consecutive reprogramming operations. We evaluate our system software with many emerging inference models on a neuromorphic hardware simulator and show a significant reduction in the system overhead.
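
The abstract states the goal of the mapping but not the exact convex formulation. As a simplified stand-in (an assumption, not the authors' formulation), suppose each synapse has a nonnegative scalar importance (for example, activation frequency times criticality) and each cell a fixed read voltage, and the goal is to minimize the sum of importance times voltage under a one-to-one mapping. By the rearrangement inequality, sorting solves this exactly: the most important synapse goes to the lowest-voltage cell. The function names are hypothetical.

```python
def map_synapses_to_cells(importance, read_voltage):
    """Assign synapses to NVM cells so that important synapses see low read voltage.

    importance[i]: nonnegative importance of synapse i (hypothetical metric)
    read_voltage[j]: read voltage seen by cell j
    Returns mapping, where mapping[i] is the cell index for synapse i.
    """
    if len(importance) > len(read_voltage):
        raise ValueError("more synapses than cells")
    synapses = sorted(range(len(importance)), key=lambda i: importance[i], reverse=True)
    cells = sorted(range(len(read_voltage)), key=lambda j: read_voltage[j])
    mapping = [0] * len(importance)
    for s, c in zip(synapses, cells):  # most important synapse -> lowest-voltage cell
        mapping[s] = c
    return mapping


def disturb_cost(importance, read_voltage, mapping):
    """Importance-weighted read voltage, a proxy for read-disturb exposure."""
    return sum(imp * read_voltage[mapping[i]] for i, imp in enumerate(importance))
```

For importances `[5.0, 1.0, 3.0]` and voltages `[0.3, 0.1, 0.2, 0.4]`, the mapping is `[1, 0, 2]`, with a cost of 1.4. A real formulation would add constraints (crossbar geometry, per-core capacity) that break this simple sorting argument, which is why a general optimization solver is needed there.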

Design Technology Co-Optimization for Neuromorphic Computing

Oct 15, 2021

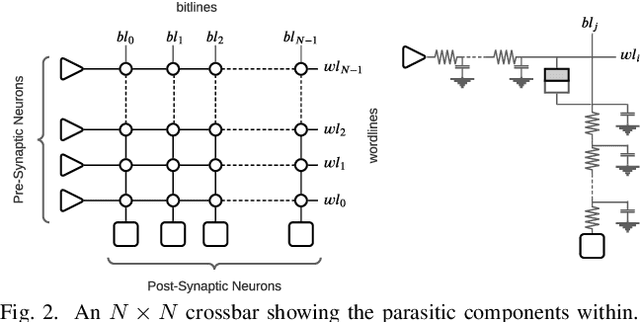

Abstract: We present a design-technology tradeoff analysis for implementing machine-learning inference on the processing cores of a Non-Volatile Memory (NVM)-based many-core neuromorphic hardware. Through detailed circuit-level simulations for scaled process technology nodes, we show the negative impact of design scaling on the read endurance of NVMs, which directly impacts their inference lifetime. At a finer granularity, the inference lifetime of a core depends on 1) the resistance state of the synaptic weights programmed on the core (design) and 2) the voltage variation inside the core introduced by the parasitic components on current paths (technology). We show that such design and technology characteristics can be incorporated into a design flow to significantly improve the inference lifetime.
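
The "technology" factor above is voltage variation caused by parasitic resistance on current paths. As a first-order illustration (a simplification assumed here, not the paper's circuit-level simulation), consider a single wordline with equal segment resistance where each cell draws a fixed current: every wire segment carries the current of all cells beyond it, so the voltage drops progressively with distance from the driver. `wordline_voltages` is a hypothetical name.

```python
def wordline_voltages(v_in, r_segment, cell_currents):
    """Node voltages along a wordline with parasitic wire resistance.

    First-order model (assumption): each cell draws a fixed current, and the
    wire segment feeding a cell carries the current of that cell and every
    cell beyond it.
    """
    voltages = []
    v = v_in
    downstream = sum(cell_currents)  # current entering the first segment
    for current in cell_currents:
        v -= r_segment * downstream  # IR drop across the segment before this cell
        voltages.append(v)
        downstream -= current        # this cell's current leaves the wire here
    return voltages
```

With `v_in=0.2` V, 2-ohm segments, and four cells drawing 10 µA each, the cell voltages fall from 0.19992 V to 0.1998 V. Cells nearest the driver see the highest read voltage, which, given the voltage dependence of read disturbance described in the read-disturb abstract above, would make them age fastest.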
