Anjali Mazumder

Accountability Capture: How Record-Keeping to Support AI Transparency and Accountability (Re)shapes Algorithmic Oversight

Oct 06, 2025

Abstract:Accountability regimes typically encourage record-keeping to enable the transparency that supports oversight, investigation, contestation, and redress. However, implementing such record-keeping can introduce considerations, risks, and consequences, which so far remain under-explored. This paper examines how record-keeping practices bring algorithmic systems within accountability regimes, providing a basis to observe and understand their effects. For this, we introduce, describe, and elaborate 'accountability capture' -- the re-configuration of socio-technical processes and the associated downstream effects relating to record-keeping for algorithmic accountability. Surveying 100 practitioners, we evidence and characterise record-keeping issues in practice, identifying their alignment with accountability capture. We further document widespread record-keeping practices, tensions between internal and external accountability requirements, and evidence of employee resistance to practices imposed through accountability capture. We discuss these and other effects for surveillance, privacy, and data protection, highlighting considerations for algorithmic accountability communities. In all, we show that implementing record-keeping to support transparency in algorithmic accountability regimes can itself bring wider implications -- an issue requiring greater attention from practitioners, researchers, and policymakers alike.

Advancing Data Justice Research and Practice: An Integrated Literature Review

Apr 06, 2022

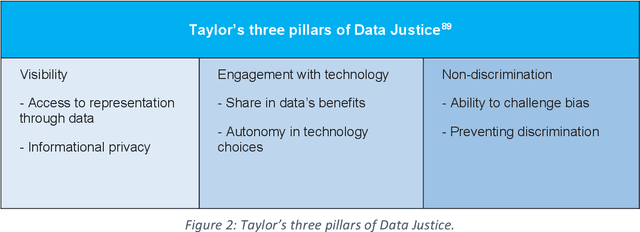

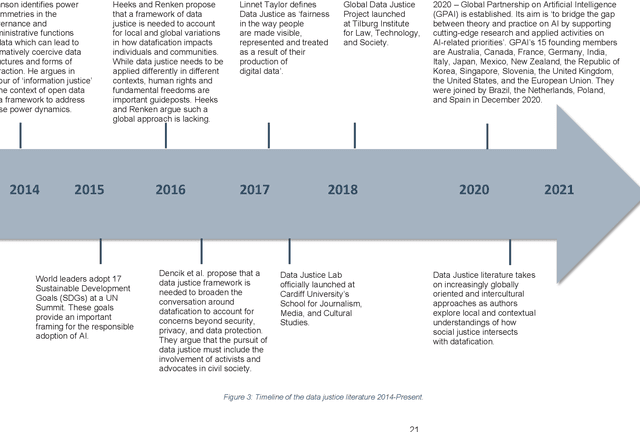

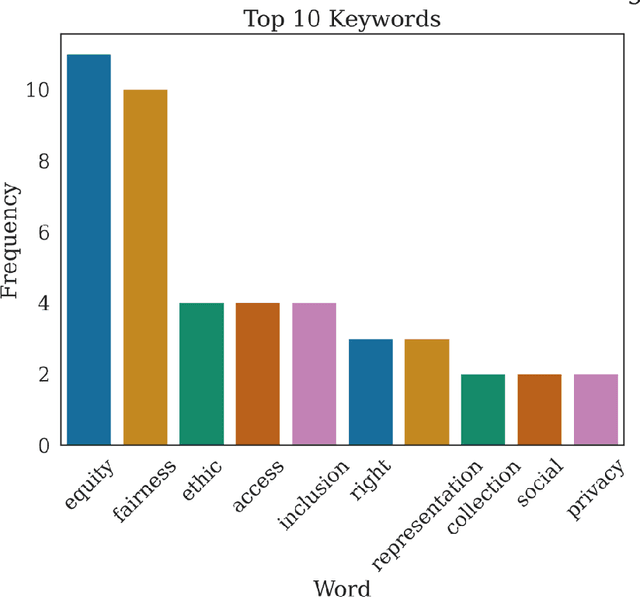

Abstract:The Advancing Data Justice Research and Practice (ADJRP) project aims to widen the lens of current thinking around data justice and to provide actionable resources that will help policymakers, practitioners, and impacted communities gain a broader understanding of what equitable, freedom-promoting, and rights-sustaining data collection, governance, and use should look like in increasingly dynamic and global data innovation ecosystems. In this integrated literature review we hope to lay the conceptual groundwork needed to support this aspiration. The introduction motivates the broadening of data justice that is undertaken by the literature review which follows. First, we address how certain limitations of the current study of data justice drive the need for a re-location of data justice research and practice. We map out the strengths and shortcomings of the contemporary state of the art and then elaborate on the challenges faced by our own effort to broaden the data justice perspective in the decolonial context. The body of the literature review covers seven thematic areas. For each theme, the ADJRP team has systematically collected and analysed key texts in order to tell the critical empirical story of how existing social structures and power dynamics present challenges to data justice and related justice fields. In each case, this critical empirical story is also supplemented by the transformational story of how activists, policymakers, and academics are challenging longstanding structures of inequity to advance social justice in data innovation ecosystems and adjacent areas of technological practice.

CCC/Code 8.7: Applying AI in the Fight Against Modern Slavery

Jun 24, 2021Abstract:On any given day, tens of millions of people find themselves trapped in instances of modern slavery. The terms "human trafficking," "trafficking in persons," and "modern slavery" are sometimes used interchangeably to refer to both sex trafficking and forced labor. Human trafficking occurs when a trafficker compels someone to provide labor or services through the use of force, fraud, and/or coercion. The wide range of stakeholders in human trafficking presents major challenges. Direct stakeholders are law enforcement, NGOs and INGOs, businesses, local/planning government authorities, and survivors. Viewed from a very high level, all stakeholders share in a rich network of interactions that produce and consume enormous amounts of information. The problems of making efficient use of such information for the purposes of fighting trafficking while at the same time adhering to community standards of privacy and ethics are formidable. At the same time they help us, technologies that increase surveillance of populations can also undermine basic human rights. In early March 2020, the Computing Community Consortium (CCC), in collaboration with the Code 8.7 Initiative, brought together over fifty members of the computing research community along with anti-slavery practitioners and survivors to lay out a research roadmap. The primary goal was to explore ways in which long-range research in artificial intelligence (AI) could be applied to the fight against human trafficking. Building on the kickoff Code 8.7 conference held at the headquarters of the United Nations in February 2019, the focus for this workshop was to link the ambitious goals outlined in the A 20-Year Community Roadmap for Artificial Intelligence Research in the US (AI Roadmap) to challenges vital in achieving the UN's Sustainable Development Goal Target 8.7, the elimination of modern slavery.

Does "AI" stand for augmenting inequality in the era of covid-19 healthcare?

Apr 30, 2021Abstract:Among the most damaging characteristics of the covid-19 pandemic has been its disproportionate effect on disadvantaged communities. As the outbreak has spread globally, factors such as systemic racism, marginalisation, and structural inequality have created path dependencies that have led to poor health outcomes. These social determinants of infectious disease and vulnerability to disaster have converged to affect already disadvantaged communities with higher levels of economic instability, disease exposure, infection severity, and death. Artificial intelligence (AI) technologies are an important part of the health informatics toolkit used to fight contagious disease. AI is well known, however, to be susceptible to algorithmic biases that can entrench and augment existing inequality. Uncritically deploying AI in the fight against covid-19 thus risks amplifying the pandemic's adverse effects on vulnerable groups, exacerbating health inequity. In this paper, we claim that AI systems can introduce or reflect bias and discrimination in three ways: in patterns of health discrimination that become entrenched in datasets, in data representativeness, and in human choices made during the design, development, and deployment of these systems. We highlight how the use of AI technologies threaten to exacerbate the disparate effect of covid-19 on marginalised, under-represented, and vulnerable groups, particularly black, Asian, and other minoritised ethnic people, older populations, and those of lower socioeconomic status. We conclude that, to mitigate the compounding effects of AI on inequalities associated with covid-19, decision makers, technology developers, and health officials must account for the potential biases and inequities at all stages of the AI process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge