Angela Jin

(Beyond) Reasonable Doubt: Challenges that Public Defenders Face in Scrutinizing AI in Court

Mar 13, 2024

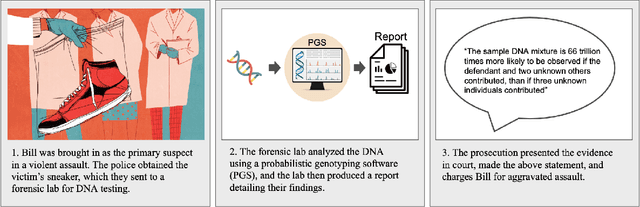

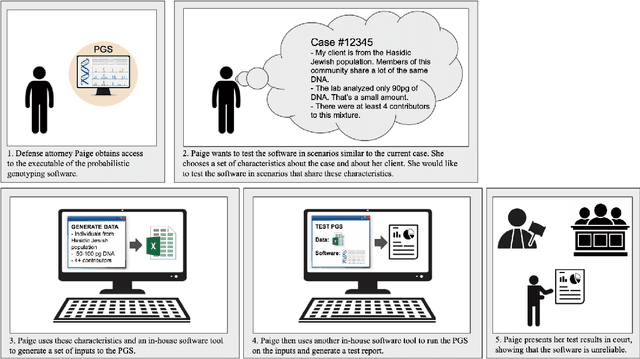

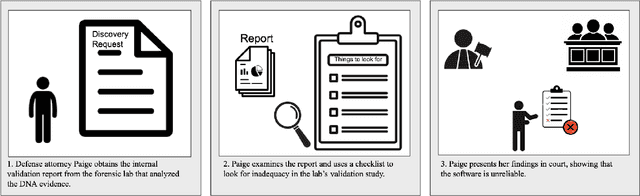

Abstract: Accountable use of AI systems in high-stakes settings relies on making systems contestable. In this paper, we examine efforts to contest AI systems in practice by studying how public defenders scrutinize AI in court. We present findings from interviews with 17 people in the U.S. public defense community to understand their perceptions of and experiences scrutinizing computational forensic software (CFS) -- automated decision systems that the government uses to convict and incarcerate, such as facial recognition, gunshot detection, and probabilistic genotyping tools. We find that our participants faced challenges assessing and contesting CFS reliability due to difficulties (a) navigating how CFS is developed and used, (b) overcoming judges' and jurors' non-critical perceptions of CFS, and (c) gathering CFS expertise. To conclude, we provide recommendations that center the technical, social, and institutional context to better position interventions such as performance evaluations to support contestability in practice.

Adversarial Scrutiny of Evidentiary Statistical Software

Jun 19, 2022

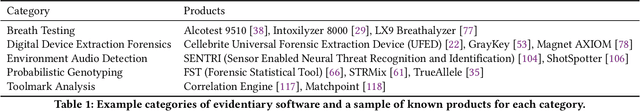

Abstract: The U.S. criminal legal system increasingly relies on software output to convict and incarcerate people. In a large number of cases each year, the government makes these consequential decisions based on evidence from statistical software -- such as probabilistic genotyping, environmental audio detection, and toolmark analysis tools -- that defense counsel cannot fully cross-examine or scrutinize. This undermines the commitments of the adversarial criminal legal system, which relies on the defense's ability to probe and test the prosecution's case to safeguard individual rights. Responding to this need to adversarially scrutinize output from such software, we propose robust adversarial testing as an audit framework to examine the validity of evidentiary statistical software. We define and operationalize this notion of robust adversarial testing for defense use by drawing on a large body of recent work in robust machine learning and algorithmic fairness. We demonstrate how this framework both standardizes the process for scrutinizing such tools and empowers defense lawyers to examine their validity for instances most relevant to the case at hand. We further discuss existing structural and institutional challenges within the U.S. criminal legal system that may create barriers for implementing this and other such audit frameworks and close with a discussion on policy changes that could help address these concerns.
