Andrew Kirby

Retuve: Automated Multi-Modality Analysis of Hip Dysplasia with Open Source AI

Apr 08, 2025

Abstract: Developmental dysplasia of the hip (DDH) poses significant diagnostic challenges, hindering timely intervention. Current screening methodologies lack standardization, and AI-driven studies suffer from reproducibility issues due to limited data and code availability. To address these limitations, we introduce Retuve, an open-source framework for multi-modality DDH analysis, encompassing both ultrasound (US) and X-ray imaging. Retuve provides a complete and reproducible workflow, offering open datasets comprising expert-annotated US and X-ray images, pre-trained models with training code and weights, and a user-friendly Python Application Programming Interface (API). The framework integrates segmentation and landmark detection models, enabling automated measurement of key diagnostic parameters such as the alpha angle and acetabular index. By adhering to open-source principles, Retuve promotes transparency, collaboration, and accessibility in DDH research. This initiative has the potential to democratize DDH screening, facilitate early diagnosis, and ultimately improve patient outcomes by enabling widespread screening and early intervention. The GitHub repository/code can be found here: https://github.com/radoss-org/retuve

Validated respiratory drug deposition predictions from 2D and 3D medical images with statistical shape models and convolutional neural networks

Mar 02, 2023

Abstract: For the one billion sufferers of respiratory disease, managing their disease with inhalers crucially influences their quality of life. Generic treatment plans could be improved with the aid of computational models that account for patient-specific features such as breathing pattern, lung pathology and morphology. Therefore, we aim to develop and validate an automated computational framework for patient-specific deposition modelling. To that end, an image processing approach is proposed that could produce 3D patient respiratory geometries from 2D chest X-rays and 3D CT images. We evaluated the airway and lung morphology produced by our image processing framework, and assessed deposition compared to in vivo data. The 2D-to-3D image processing reproduces airway diameter to 9% median error compared to ground truth segmentations, but is sensitive to outliers of up to 33% due to lung outline noise. Predicted regional deposition gave 5% median error compared to in vivo measurements. The proposed framework is capable of providing patient-specific deposition measurements for varying treatments, to determine which treatment would best satisfy the needs imposed by each patient (such as disease and lung/airway morphology). Integration of patient-specific modelling into clinical practice as an additional decision-making tool could optimise treatment plans and lower the burden of respiratory diseases.

Accuracy and Performance Comparison of Video Action Recognition Approaches

Aug 20, 2020

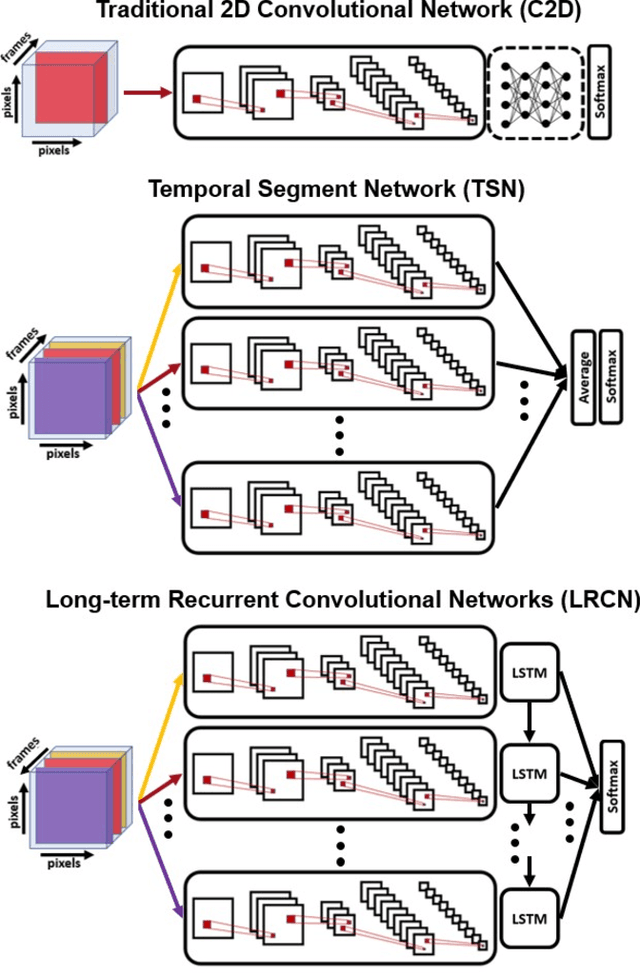

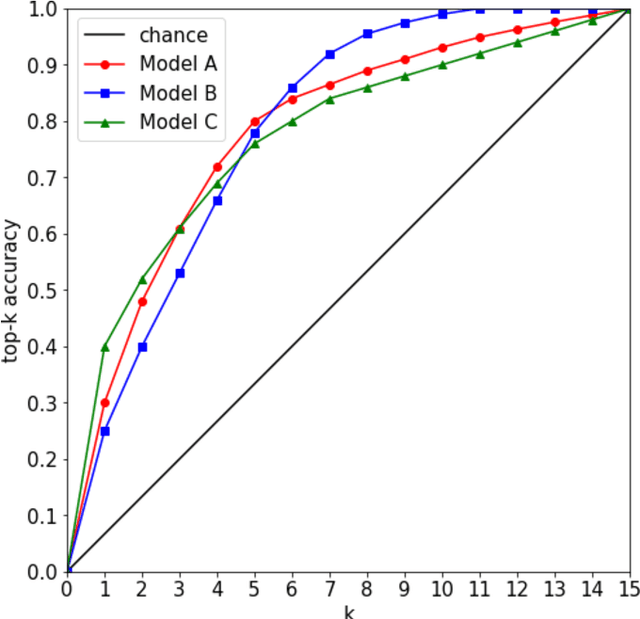

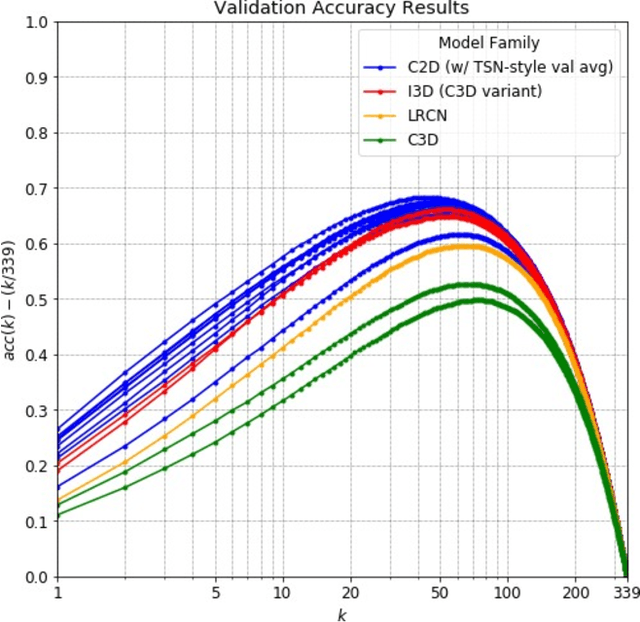

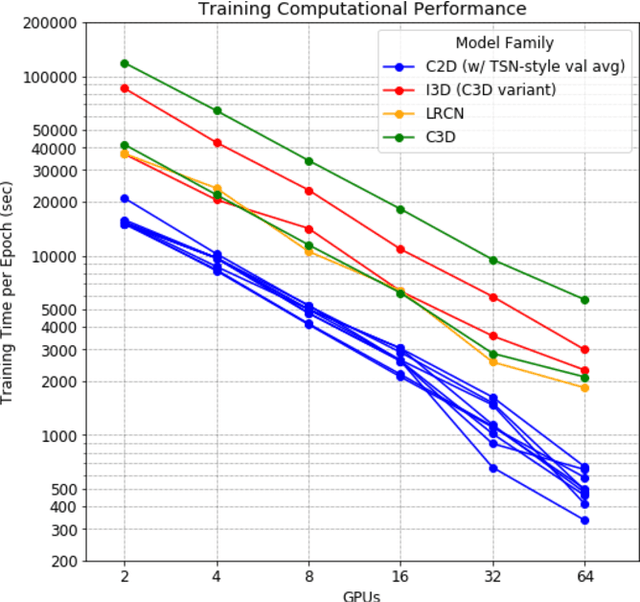

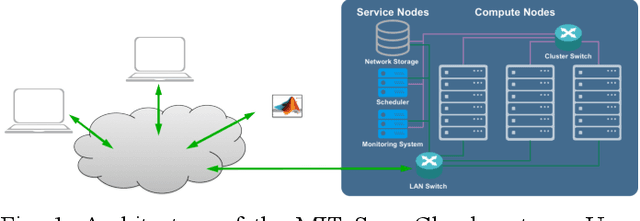

Abstract: Over the past few years, there has been significant interest in video action recognition systems and models. However, direct comparison of accuracy and computational performance results remains clouded by differing training environments, hardware specifications, hyperparameters, pipelines, and inference methods. This article provides a direct comparison between fourteen off-the-shelf and state-of-the-art models by ensuring consistency in these training characteristics in order to provide readers with a meaningful comparison across different types of video action recognition algorithms. Accuracy of the models is evaluated using standard Top-1 and Top-5 accuracy metrics in addition to a proposed new accuracy metric. Additionally, we compare computational performance of distributed training from two to sixty-four GPUs on a state-of-the-art HPC system.
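
For readers unfamiliar with the Top-1 and Top-5 metrics named in the abstract above, the following is a minimal sketch of Top-k accuracy in plain Python. It is not taken from the paper: the function name `top_k_accuracy` and the toy scores are assumptions made for illustration, and the paper's proposed new metric is not reproduced here because the abstract does not define it.

```python
from typing import Sequence


def top_k_accuracy(
    scores: Sequence[Sequence[float]], labels: Sequence[int], k: int
) -> float:
    """Fraction of samples whose true label is among the k highest-scoring classes.

    `scores[i][c]` is the model's score for class `c` on sample `i`
    (hypothetical layout chosen for this sketch).
    """
    if len(scores) != len(labels) or not labels:
        raise ValueError("scores and labels must be non-empty and the same length")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices ordered from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)


# Toy example: four clips, three action classes (made-up numbers).
scores = [
    [0.1, 0.7, 0.2],
    [0.5, 0.3, 0.2],
    [0.2, 0.3, 0.5],
    [0.6, 0.1, 0.3],
]
labels = [1, 1, 0, 0]

top1 = top_k_accuracy(scores, labels, k=1)
top2 = top_k_accuracy(scores, labels, k=2)
print(f"Top-1: {top1:.2f}, Top-2: {top2:.2f}")
```

With only three classes the toy example uses k=2 in place of k=5; the computation is otherwise the same.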

Benchmarking network fabrics for data distributed training of deep neural networks

Aug 18, 2020

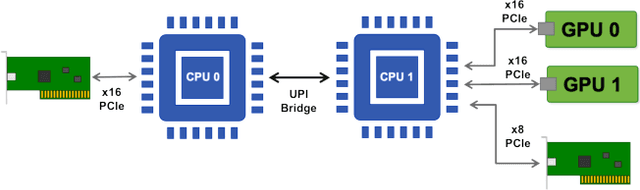

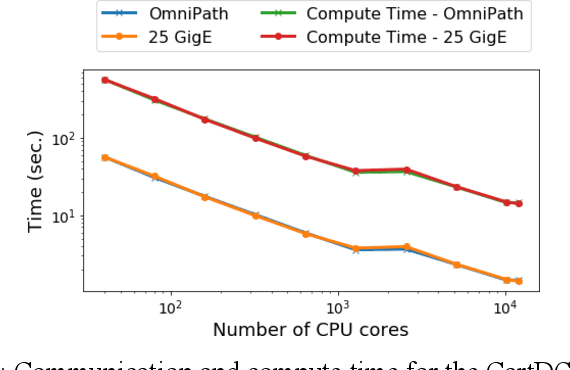

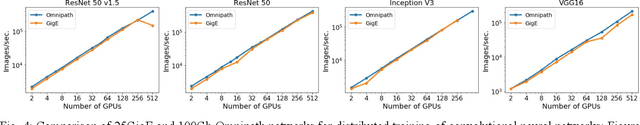

Abstract: Artificial Intelligence/Machine Learning applications require the training of complex models on large amounts of labelled data. The large computational requirements for training deep models have necessitated the development of new methods for faster training. One such approach is the data parallel approach, where the training data is distributed across multiple compute nodes. This approach is simple to implement and supported by most of the commonly used machine learning frameworks. The data parallel approach leverages MPI for communicating gradients across all nodes. In this paper, we examine the effects of using different physical hardware interconnects and network-related software primitives for enabling data distributed deep learning. We compare the effect of using GPUDirect and NCCL on Ethernet and OmniPath fabrics. Our results show that using Ethernet-based networking in shared HPC systems does not have a significant effect on the training times for commonly used deep neural network architectures or traditional HPC applications such as Computational Fluid Dynamics.
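
The data parallel approach described in the abstract above can be illustrated with a small single-process simulation. This is only a sketch under stated assumptions: the "workers" are plain Python lists rather than MPI ranks, `all_reduce_mean` is a hypothetical stand-in for the gradient all-reduce that MPI or NCCL would perform over the network, and the one-parameter linear model is chosen purely to keep the arithmetic visible. It shows why averaging per-worker gradients over equal-sized shards matches the gradient computed on the full batch.

```python
import math
from typing import List, Sequence, Tuple

Shard = Tuple[Sequence[float], Sequence[float]]


def gradient(w: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Gradient of the mean squared error of y = w * x with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def all_reduce_mean(values: List[float]) -> float:
    """Stand-in for a network all-reduce: every worker ends up with the mean."""
    return sum(values) / len(values)


def data_parallel_step(w: float, shards: List[Shard], lr: float) -> float:
    """One SGD step in which each worker sees only its own shard of the data."""
    local_grads = [gradient(w, xs, ys) for xs, ys in shards]  # per-worker compute
    global_grad = all_reduce_mean(local_grads)  # communication step
    return w - lr * global_grad


# Toy data for y = 2x, split evenly across two simulated workers.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
shards = [(xs[:2], ys[:2]), (xs[2:], ys[2:])]

full_grad = gradient(0.0, xs, ys)
averaged_grad = all_reduce_mean([gradient(0.0, sx, sy) for sx, sy in shards])
new_w = data_parallel_step(0.0, shards, lr=0.05)
print(full_grad, averaged_grad, new_w)
```

The equivalence holds here because both shards have the same size; with unequal shards the per-worker gradients would need to be weighted by shard size. In a real cluster the cost of the communication step depends on the interconnect, which is the aspect the paper benchmarks.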
