Andreas Kassler

Spatial PDE-aware Selective State-space with Nested Memory for Mobile Traffic Grid Forecasting

Mar 12, 2026

Abstract:Traffic forecasting in cellular networks is a challenging spatiotemporal prediction problem due to strong temporal dependencies, spatial heterogeneity across cells, and the need for scalability to large network deployments. Traditional cell-specific models incur prohibitive training and maintenance costs, while global models often fail to capture heterogeneous spatial dynamics. Recent spatiotemporal architectures based on attention or graph neural networks improve accuracy but introduce high computational overhead, limiting their applicability in large-scale or real-time settings. We study spatiotemporal grid forecasting, where each time step is a 2D lattice of traffic values, and predict the next grid patch using previous patches. We propose NeST-S6, a convolutional selective state-space model (SSM) with a spatial PDE-aware core, implemented in a nested learning paradigm: convolutional local spatial mixing feeds a spatial PDE-aware SSM core, while a nested-learning long-term memory is updated by a learned optimizer when one-step prediction errors indicate unmodeled dynamics. On the mobile-traffic grid (Milan dataset) at three resolutions (20², 50², 100²), NeST-S6 attains lower errors than a strong Mamba-family baseline in both single-step and 6-step autoregressive rollouts. Under drift stress tests, our model's nested memory lowers MAE by 48-65% over a no-memory ablation. NeST-S6 also speeds full-grid reconstruction by 32 times and reduces MACs by 4.3 times compared to competitive per-pixel scanning models, while achieving 61% lower per-pixel RMSE.

RNM-TD3: N:M Semi-structured Sparse Reinforcement Learning From Scratch

Feb 16, 2026

Abstract:Sparsity is a well-studied technique for compressing deep neural networks (DNNs) without compromising performance. In deep reinforcement learning (DRL), neural networks with up to 5% of their original weights can still be trained with minimal performance loss compared to their dense counterparts. However, most existing methods rely on unstructured fine-grained sparsity, which limits hardware acceleration opportunities due to irregular computation patterns. Structured coarse-grained sparsity enables hardware acceleration, yet typically degrades performance and increases pruning complexity. In this work, we present, to the best of our knowledge, the first study on N:M structured sparsity in RL, which balances compression, performance, and hardware efficiency. Our framework enforces row-wise N:M sparsity throughout training for all networks in off-policy RL (TD3), maintaining compatibility with accelerators that support N:M sparse matrix operations. Experiments on continuous-control benchmarks show that RNM-TD3, our N:M sparse agent, outperforms its dense counterpart at 50%-75% sparsity (e.g., 2:4 and 1:4), achieving up to a 14% increase in performance at 2:4 sparsity on the Ant environment. RNM-TD3 remains competitive even at 87.5% sparsity (1:8), while enabling potential training speedups.
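To make the row-wise N:M constraint concrete, here is a minimal sketch in plain Python: within every group of M consecutive weights in a row, at most N stay non-zero. The abstract does not say how RNM-TD3 chooses which weights to keep, so the magnitude-based selection below is an assumption, and the helper names `nm_mask_row` and `apply_nm_sparsity` are hypothetical.

```python
def nm_mask_row(row, n, m):
    """Return a 0/1 mask that keeps n entries in each group of m consecutive weights.

    Assumption: the kept entries are the n largest in absolute value.
    """
    if len(row) % m != 0:
        raise ValueError("row length must be a multiple of m")
    mask = []
    for start in range(0, len(row), m):
        group = row[start:start + m]
        # Positions of the n largest-magnitude weights inside this group.
        keep = set(sorted(range(m), key=lambda i: abs(group[i]), reverse=True)[:n])
        mask.extend(1 if i in keep else 0 for i in range(m))
    return mask


def apply_nm_sparsity(weights, n, m):
    """Zero out pruned weights row by row (weights is a list of rows)."""
    return [
        [w * k for w, k in zip(row, nm_mask_row(row, n, m))]
        for row in weights
    ]


# Illustrative values only: one row of 8 weights under 2:4 sparsity.
W = [[0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]]
sparse_W = apply_nm_sparsity(W, n=2, m=4)
nonzero_fraction = sum(w != 0 for w in sparse_W[0]) / len(sparse_W[0])
```

With 2:4 the non-zero fraction is one half, with 1:4 one quarter, and with 1:8 one eighth, which matches the 50%, 75%, and 87.5% sparsity levels quoted in the abstract.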

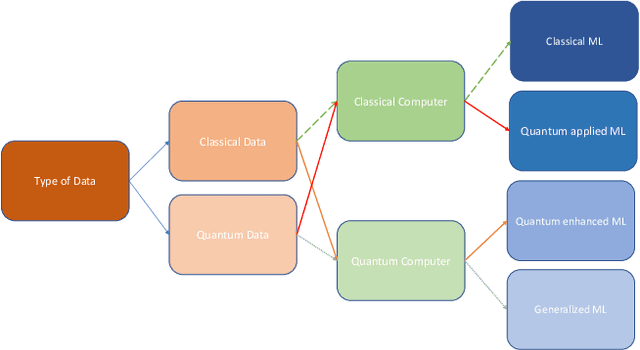

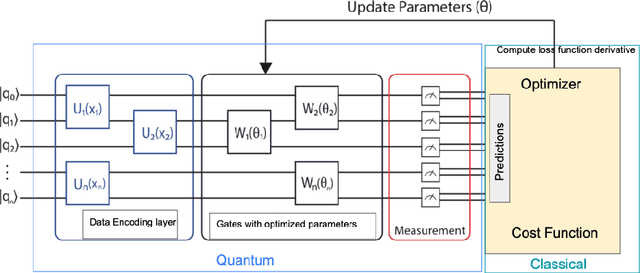

Quantum Machine Learning in Climate Change and Sustainability: a Review

Oct 13, 2023

Abstract:Climate change and its impact on global sustainability are critical challenges, demanding innovative solutions that combine cutting-edge technologies and scientific insights. Quantum machine learning (QML) has emerged as a promising paradigm that harnesses the power of quantum computing to address complex problems in various domains including climate change and sustainability. In this work, we survey existing literature that applies quantum machine learning to solve climate change and sustainability-related problems. We review promising QML methodologies that have the potential to accelerate decarbonization including energy systems, climate data forecasting, climate monitoring, and hazardous events predictions. We discuss the challenges and current limitations of quantum machine learning approaches and provide an overview of potential opportunities and future work to leverage QML-based methods in the important area of climate change research.

DA-LSTM: A Dynamic Drift-Adaptive Learning Framework for Interval Load Forecasting with LSTM Networks

May 15, 2023

Abstract:Load forecasting is a crucial topic in energy management systems (EMS) due to its vital role in optimizing energy scheduling and enabling more flexible and intelligent power grid systems. As a result, these systems allow power utility companies to respond promptly to demands in the electricity market. Deep learning (DL) models have been commonly employed in load forecasting problems supported by adaptation mechanisms to cope with the changing pattern of consumption by customers, known as concept drift. A drift magnitude threshold should be defined to design change detection methods to identify drifts. While the drift magnitude in load forecasting problems can vary significantly over time, existing literature often assumes a fixed drift magnitude threshold, which should be dynamically adjusted rather than fixed during system evolution. To address this gap, in this paper, we propose a dynamic drift-adaptive Long Short-Term Memory (DA-LSTM) framework that can improve the performance of load forecasting models without requiring a drift threshold setting. We integrate several strategies into the framework based on active and passive adaptation approaches. To evaluate DA-LSTM in real-life settings, we thoroughly analyze the proposed framework and deploy it in a real-world problem through a cloud-based environment. Efficiency is evaluated in terms of the prediction performance of each approach and computational cost. The experiments show performance improvements on multiple evaluation metrics achieved by our framework compared to baseline methods from the literature. Finally, we present a trade-off analysis between prediction performance and computational costs.

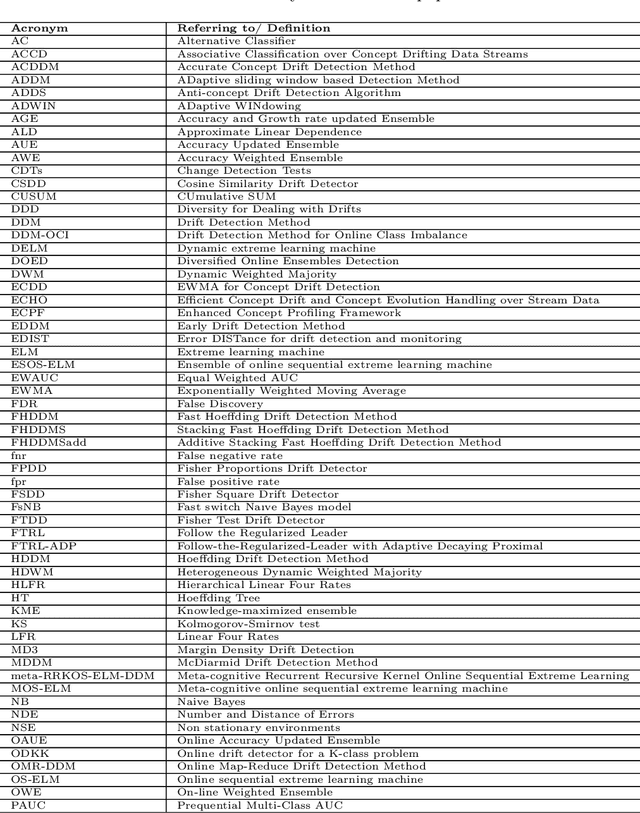

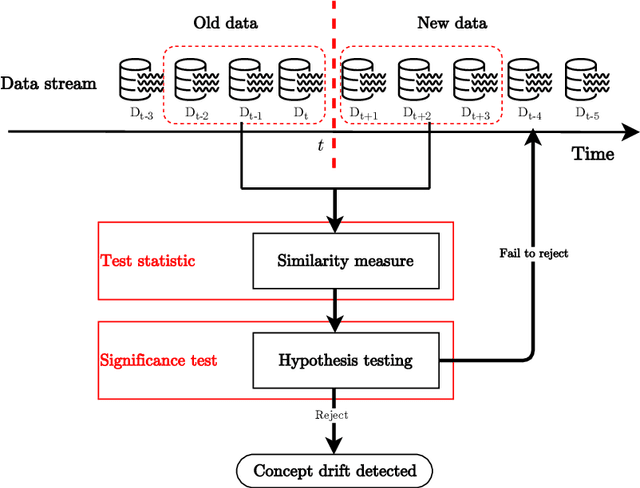

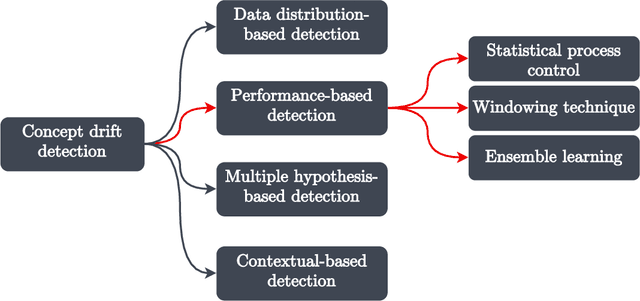

From Concept Drift to Model Degradation: An Overview on Performance-Aware Drift Detectors

Mar 21, 2022

Abstract:The dynamicity of real-world systems poses a significant challenge to deployed predictive machine learning (ML) models. Changes in the system on which the ML model has been trained may lead to performance degradation during the system's life cycle. Recent advances that study non-stationary environments have mainly focused on identifying and addressing such changes caused by a phenomenon called concept drift. Different terms have been used in the literature to refer to the same type of concept drift, and the same term has been used for various types. This lack of unified terminology creates confusion when distinguishing between different concept drift variants. In this paper, we start by grouping concept drift types by their mathematical definitions and survey the different terms used in the literature to build a consolidated taxonomy of the field. We also review and classify performance-based concept drift detection methods proposed in the last decade. These methods utilize the predictive model's performance degradation to signal substantial changes in the systems. The classification is outlined in a hierarchical diagram to provide an orderly navigation between the methods. We present a comprehensive analysis of the main attributes and strategies for tracking and evaluating the model's performance in the predictive system. The paper concludes by discussing open research challenges and possible research directions.
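As an illustration of what a performance-based detector does, the sketch below follows the general idea of the Drift Detection Method (DDM): track the model's running error rate and signal drift when it rises well above the best level seen so far. The abstract does not name DDM, so choosing it here is an assumption. The class name `SimpleDDM`, the helper `first_drift_index`, and the default thresholds are illustrative, and this simplified version omits the reset that a full detector performs after each detection.

```python
import math


class SimpleDDM:
    """Simplified DDM-style detector driven by per-sample prediction errors."""

    def __init__(self, min_samples=30, warn_level=2.0, drift_level=3.0):
        self.min_samples = min_samples
        self.warn_level = warn_level
        self.drift_level = drift_level
        self.n = 0
        self.errors = 0
        self.p_min = math.inf
        self.s_min = math.inf

    def update(self, is_error):
        """Consume one outcome (True = misprediction) and return the state."""
        self.n += 1
        self.errors += int(is_error)
        p = self.errors / self.n              # running error rate
        s = math.sqrt(p * (1 - p) / self.n)   # its binomial standard deviation
        # Wait for enough samples and at least one error before judging.
        if self.n < self.min_samples or self.errors == 0:
            return "stable"
        if p + s < self.p_min + self.s_min:
            self.p_min, self.s_min = p, s     # remember the best level so far
        if p + s >= self.p_min + self.drift_level * self.s_min:
            return "drift"
        if p + s >= self.p_min + self.warn_level * self.s_min:
            return "warning"
        return "stable"


def first_drift_index(outcomes):
    """Return the 0-based index of the first 'drift' signal, or None."""
    detector = SimpleDDM()
    for i, is_error in enumerate(outcomes):
        if detector.update(is_error) == "drift":
            return i
    return None


# Synthetic stream: roughly 10% errors for 200 samples, then every prediction fails.
stream = [i % 10 == 9 for i in range(200)] + [True] * 50
drift_at = first_drift_index(stream)
```

On this synthetic stream the detector stays quiet during the stable phase and raises the drift signal shortly after the change at index 200. In a deployed system that signal would typically trigger retraining or model replacement.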

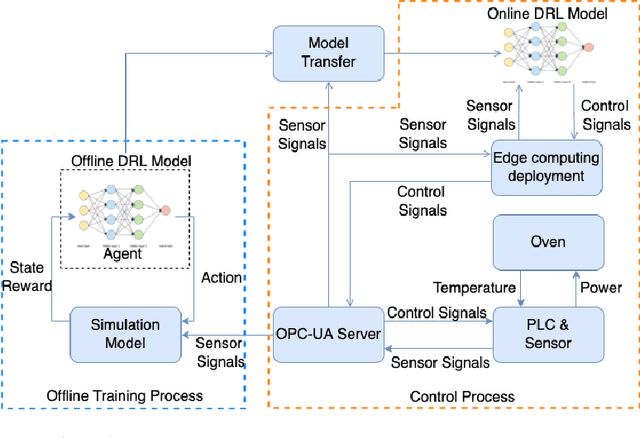

Using Deep Reinforcement Learning for Zero Defect Smart Forging

Jan 25, 2022

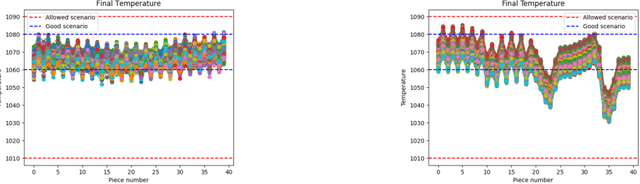

Abstract:Defects during production may lead to material waste, which is a significant challenge for many companies as it reduces revenue and negatively impacts sustainability and the environment. An essential reason for material waste is a low degree of automation, especially in industries that currently have a low degree of digitalization, such as steel forging. Those industries typically rely on heavy and old machinery such as large induction ovens that are mostly controlled manually or using well-known recipes created by experts. However, standard recipes may fail when unforeseen events happen, such as an unplanned stop in production, which may lead to overheating and thus material degradation during the forging process. In this paper, we develop a digital twin-based optimization strategy for the heating process for a forging line to automate the development of an optimal control policy that adjusts the power for the heating coils in an induction oven based on temperature data observed from pyrometers. We design a digital twin-based deep reinforcement learning (DTRL) framework and train two different deep reinforcement learning (DRL) models for the heating phase using a digital twin of the forging line. The twin is based on a simulator that contains a heating transfer and movement model, which is used as an environment for the DRL training. Our evaluation shows that both models significantly reduce the temperature unevenness and can help to automate the traditional heating process.

Trajectory Optimization for Cooperative Dual-band UAV Swarms

Jul 25, 2018

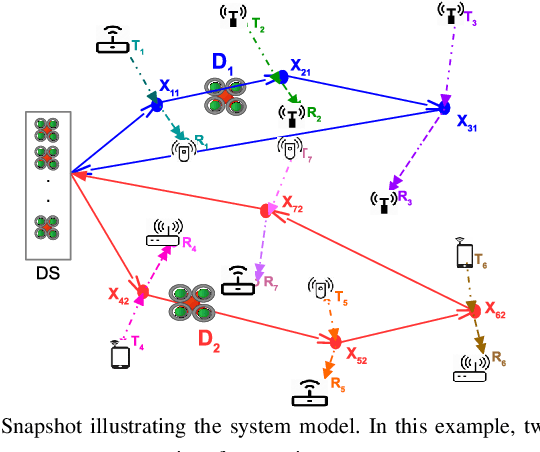

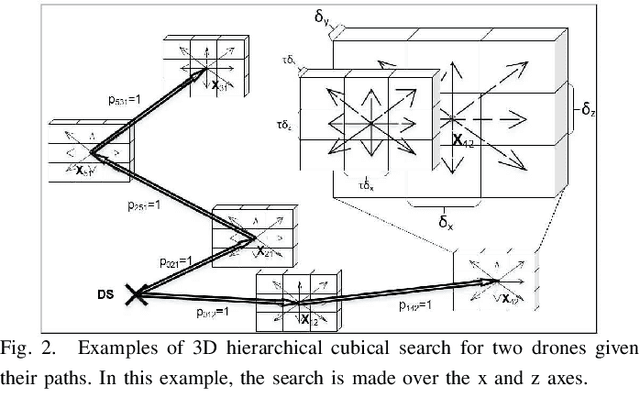

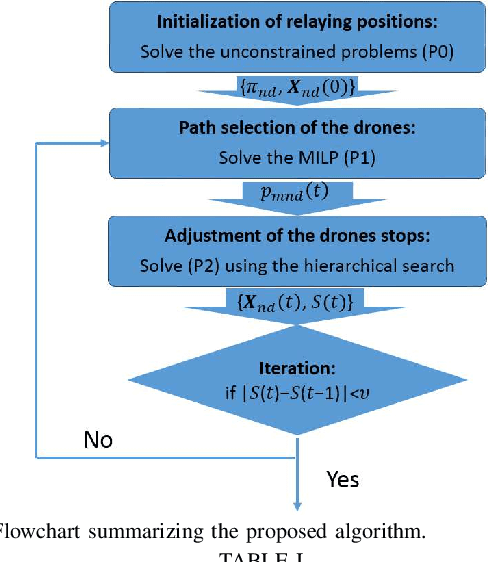

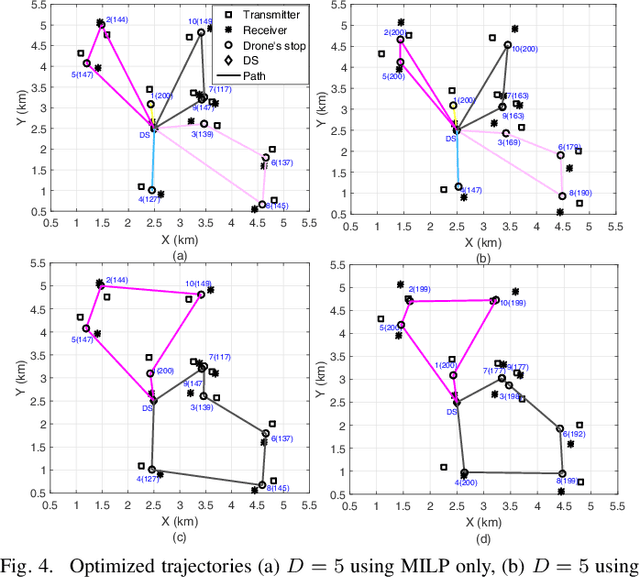

Abstract:Unmanned aerial vehicles (UAVs) have gained a lot of popularity in diverse wireless communication fields. They can act as high-altitude flying relays to support communications between ground nodes due to their ability to provide line-of-sight links. With the flourishing Internet of Things, several types of new applications are emerging. In this paper, we focus on bandwidth hungry and delay-tolerant applications where multiple pairs of transceivers require the support of UAVs to complete their transmissions. To do so, the UAVs have the possibility to employ two different bands namely the typical microwave and the high-rate millimeter wave bands. In this paper, we develop a generic framework to assign UAVs to supported transceivers and optimize their trajectories such that a weighted function of the total service time is minimized. Taking into account both the communication time needed to relay the message and the flying time of the UAVs, a mixed non-linear programming problem aiming at finding the stops at which the UAVs hover to forward the data to the receivers is formulated. An iterative approach is then developed to solve the problem. First, a mixed linear programming problem is optimally solved to determine the path of each available UAV. Then, a hierarchical iterative search is executed to enhance the UAV stops' locations and reduce the service time. The behavior of the UAVs and the benefits of the proposed framework are showcased for selected scenarios.
