Andrea Eichenseer

Motion Estimation for Fisheye Video With an Application to Temporal Resolution Enhancement

Mar 01, 2023

Abstract: Surveying wide areas with only one camera is a typical scenario in surveillance and automotive applications. Ultra wide-angle fisheye cameras employed to that end produce video data with characteristics that differ significantly from conventional rectilinear imagery as obtained by perspective pinhole cameras. Those characteristics are not considered in typical image and video processing algorithms such as motion estimation, where translation is assumed to be the predominant kind of motion. This contribution introduces an adapted technique for use in block-based motion estimation that takes into account the projection function of fisheye cameras and thus compensates for the non-perspective properties of fisheye videos. By including suitable projections, the translational motion model that would otherwise only hold for perspective material is exploited, leading to improved motion estimation results without altering the source material. In addition, we discuss extensions that allow for a better prediction of the peripheral image areas, where motion estimation falters due to spatial constraints, and further include calibration information to account for lens properties deviating from the theoretical function. Simulations and experiments are conducted on synthetic as well as real-world fisheye video sequences that are part of a data set created in the context of this paper. Average synthetic and real-world gains of 1.45 and 1.51 dB in luminance PSNR are achieved compared against conventional block matching. Furthermore, the proposed fisheye motion estimation method is successfully applied to motion compensated temporal resolution enhancement, where average gains amount to 0.79 and 0.76 dB.
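
The gains in this abstract are differences in luminance PSNR between two predictions of the same frame. As a reminder of how such a figure is obtained, here is a minimal sketch that assumes 8-bit luma samples (peak value 255) stored as lists of rows. The function name `psnr` and the data layout are illustrative assumptions, not taken from the paper's software.

```python
import math

def psnr(reference, prediction, peak=255.0):
    """PSNR in dB between two equally sized luminance frames (lists of rows)."""
    count = 0
    squared_error = 0.0
    for ref_row, pred_row in zip(reference, prediction):
        for a, b in zip(ref_row, pred_row):
            squared_error += (a - b) ** 2
            count += 1
    if count == 0:
        raise ValueError("empty frames")
    mse = squared_error / count
    if mse == 0.0:
        return math.inf  # identical frames: PSNR is unbounded
    return 10.0 * math.log10(peak * peak / mse)
```

A gain in dB is then simply `psnr(frame, proposed_prediction) - psnr(frame, baseline_prediction)`, averaged over the frames of a sequence.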

Disparity estimation for fisheye images with an application to intermediate view synthesis

Dec 02, 2022

Abstract: To obtain depth information from a stereo camera setup, a common way is to conduct disparity estimation between the two views; the disparity map thus generated may then also be used to synthesize arbitrary intermediate views. A straightforward approach to disparity estimation is block matching, which performs well with perspective data. When dealing with non-perspective imagery such as that obtained from ultra wide-angle fisheye cameras, however, block matching meets its limits. In this paper, an adapted disparity estimation approach for fisheye images is introduced. The proposed method exploits knowledge about the fisheye projection function to transform the fisheye coordinate grid to a corresponding perspective mesh. Offsets between views can thus be determined more accurately, resulting in more reliable disparity maps. By re-projecting the perspective mesh to the fisheye domain, the original fisheye field of view is retained. The benefit of the proposed method is demonstrated in the context of intermediate view synthesis, for which both objective evaluations and visually convincing results are provided.
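
For orientation, the conventional block matching that this abstract takes as its starting point can be sketched as follows. This is a generic sum-of-absolute-differences (SAD) search along image rows, not the adapted fisheye method of the paper. The function names, the list-of-rows image layout, and the search direction (the block is looked for `d` columns to the left in the right view) are assumptions made for illustration.

```python
def sad(left, right, row, col_left, col_right, size):
    """Sum of absolute differences between two size x size blocks."""
    return sum(
        abs(left[row + i][col_left + j] - right[row + i][col_right + j])
        for i in range(size)
        for j in range(size)
    )

def block_disparity(left, right, row, col, size, max_disp):
    """Return the offset d in [0, max_disp] that minimises the SAD between
    the block at (row, col) in `left` and the block at (row, col - d) in `right`."""
    best_d, best_cost = 0, float("inf")
    for d in range(max_disp + 1):
        if col - d < 0:
            break  # candidate block would leave the image
        cost = sad(left, right, row, col, col - d, size)
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d
```

The search assumes that a block merely shifts between views, which holds for perspective images but not for the radially distorted fisheye grid; that mismatch is what the perspective mesh described in the abstract is meant to address.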

Motion estimation for fisheye video sequences combining perspective projection with camera calibration information

Dec 02, 2022

Abstract: Fisheye cameras prove to be a convenient means in surveillance and automotive applications as they provide a very wide field of view for capturing their surroundings. Contrary to typical rectilinear imagery, however, fisheye video sequences follow a different mapping from the world coordinates to the image plane, which is not considered in standard video processing techniques. In this paper, we present a motion estimation method for real-world fisheye videos by combining perspective projection with knowledge about the underlying fisheye projection. The latter is obtained by camera calibration since actual lenses rarely follow exact models. Furthermore, we introduce a re-mapping for ultra-wide angles which would otherwise lead to wrong motion compensation results for the fisheye boundary. Both concepts extend an existing hybrid motion estimation method for equisolid fisheye video sequences that decides between traditional and fisheye block matching in a block-based manner. Compared to that method, the proposed calibration and re-mapping extensions yield gains of up to 0.58 dB in luminance PSNR for real-world fisheye video sequences. Overall gains reach up to 3.32 dB compared to traditional block matching.

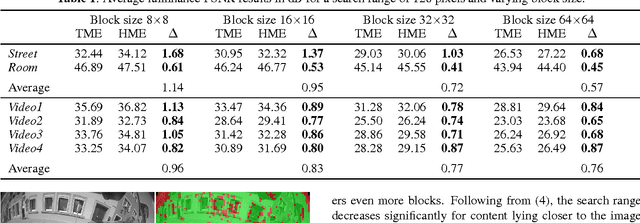

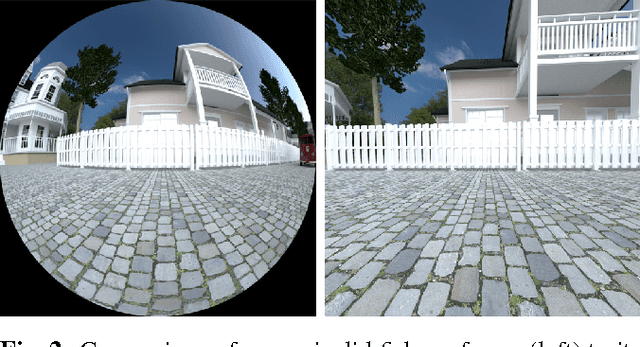

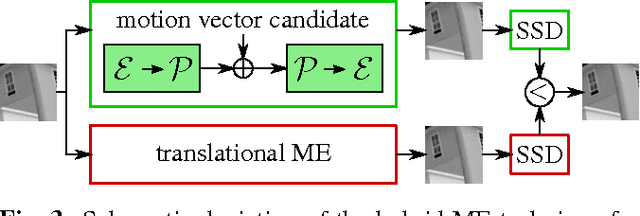

A hybrid motion estimation technique for fisheye video sequences based on equisolid re-projection

Nov 30, 2022

Abstract: Capturing large fields of view with only one camera is an important aspect in surveillance and automotive applications, but the wide-angle fisheye imagery thus obtained exhibits very special characteristics that may not be very well suited for typical image and video processing methods such as motion estimation. This paper introduces a motion estimation method that adapts to the typical radial characteristics of fisheye video sequences by making use of an equisolid re-projection after moving part of the motion vector search into the perspective domain via a corresponding back-projection. By combining this approach with conventional translational motion estimation and compensation, average gains in luminance PSNR of up to 1.14 dB are achieved for synthetic fisheye sequences and up to 0.96 dB for real-world data. Maximum gains for selected frame pairs amount to 2.40 dB and 1.39 dB for synthetic and real-world data, respectively.
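
The back-projection and re-projection mentioned in this abstract rest on two standard lens models: the equisolid model, r = 2 f sin(θ/2), and the perspective model, r = f tan(θ), where θ is the incident angle and f the focal length. The following is a minimal sketch of moving a single point with a translational motion vector in the perspective domain. It assumes an ideal equisolid lens, coordinates measured from the image centre in the same units as `f`, and hypothetical function names; it is not the authors' implementation.

```python
import math

def equisolid_to_perspective(x, y, f):
    """Back-project an equisolid fisheye point onto the perspective plane."""
    r_fish = math.hypot(x, y)
    if r_fish == 0.0:
        return 0.0, 0.0
    theta = 2.0 * math.asin(r_fish / (2.0 * f))  # invert r = 2 f sin(theta / 2)
    if theta >= math.pi / 2:
        raise ValueError("incident angle of 90 degrees or more has no perspective image")
    scale = f * math.tan(theta) / r_fish         # perspective radius: r = f tan(theta)
    return x * scale, y * scale

def perspective_to_equisolid(x, y, f):
    """Re-project a perspective-plane point into the equisolid fisheye image."""
    r_persp = math.hypot(x, y)
    if r_persp == 0.0:
        return 0.0, 0.0
    theta = math.atan(r_persp / f)
    scale = 2.0 * f * math.sin(theta / 2.0) / r_persp
    return x * scale, y * scale

def shift_fisheye_point(x, y, motion_vector, f):
    """Apply a translational motion vector in the perspective domain."""
    xp, yp = equisolid_to_perspective(x, y, f)
    return perspective_to_equisolid(xp + motion_vector[0], yp + motion_vector[1], f)
```

The `ValueError` branch marks the limitation that incident angles of 90 degrees and beyond cannot be represented on a perspective plane, so points near the fisheye boundary need separate handling.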

Coding of distortion-corrected fisheye video sequences using H.265/HEVC

Nov 30, 2022

Abstract: Images and videos captured by fisheye cameras exhibit strong radial distortions due to their large field of view. Conventional intra-frame as well as inter-frame prediction techniques as employed in hybrid video coding schemes are not designed to cope with such distortions, however. So far, captured fisheye data has been coded and stored without consideration of any loss in efficiency resulting from radial distortion. This paper investigates the effects on the coding efficiency when applying distortion correction as a pre-processing step as opposed to the state-of-the-art method of post-processing. Both methods make use of the latest video coding standard H.265/HEVC and are compared with regard to objective as well as subjective video quality. It is shown that a maximum PSNR gain of 1.91 dB for intra-frame and 1.37 dB for inter-frame coding is achieved when using the pre-processing method. Average gains amount to 1.16 dB and 0.95 dB for intra-frame and inter-frame coding, respectively.

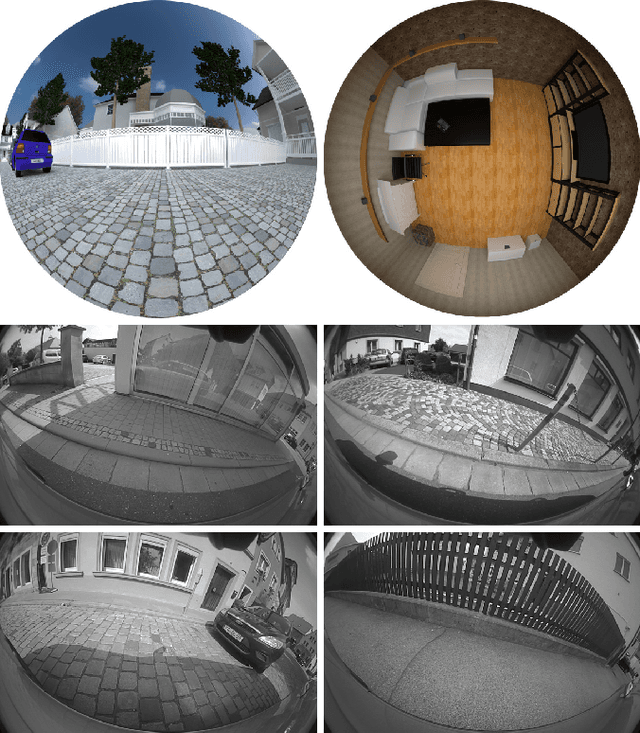

A data set providing synthetic and real-world fisheye video sequences

Nov 30, 2022

Abstract: In video surveillance as well as automotive applications, so-called fisheye cameras are often employed to capture a very wide angle of view. As such cameras depend on projections quite different from the classical perspective projection, the resulting fisheye image and video data correspondingly exhibit non-rectilinear image characteristics. Typical image and video processing algorithms, however, are not designed for these fisheye characteristics. To be able to develop and evaluate algorithms specifically adapted to fisheye images and videos, a corresponding test data set is therefore introduced in this paper. The first of those sequences were generated during the authors' own work on motion estimation for fisheye videos and further sequences have gradually been added to create a more extensive collection. The data set now comprises synthetically generated fisheye sequences, ranging from simple patterns to more complex scenes, as well as fisheye video sequences captured with an actual fisheye camera. For the synthetic sequences, exact information on the lens employed is available, thus facilitating both verification and evaluation of any adapted algorithms. For the real-world sequences, we provide calibration data as well as the settings used during acquisition. The sequences are freely available via www.lms.lnt.de/fisheyedataset/.

Temporal error concealment for fisheye video sequences based on equisolid re-projection

Nov 21, 2022

Abstract: Wide-angle video sequences obtained by fisheye cameras exhibit characteristics that may not comply well with standard image and video processing techniques such as error concealment. This paper introduces a temporal error concealment technique designed for the inherent characteristics of equisolid fisheye video sequences by applying a re-projection into the equisolid domain after conducting part of the error concealment in the perspective domain. Combining this technique with conventional decoder motion vector estimation achieves average gains of 0.71 dB compared against pure decoder motion vector estimation for the test sequences used. Maximum gains reach 2.04 dB for selected frames.

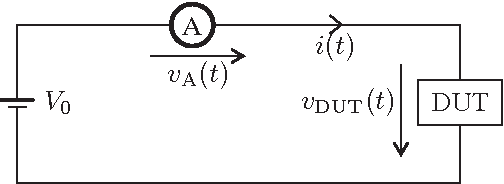

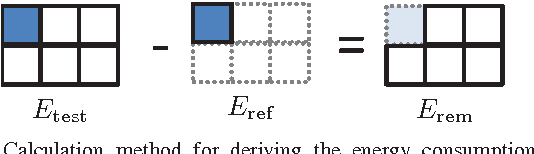

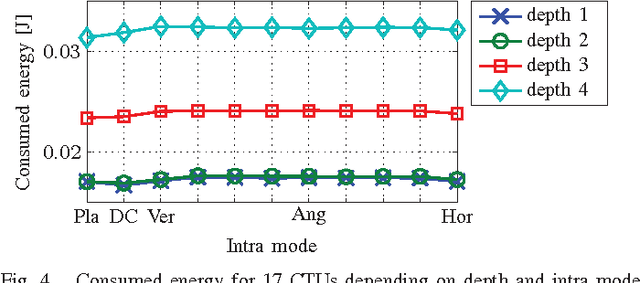

Modeling the Energy Consumption of HEVC Intra Decoding

Mar 03, 2022

Abstract: Battery life is one of the major limitations to mobile device use, which makes research on energy-efficient software and hardware an important task. This paper investigates the energy required by a CPU when decoding compressed bitstream videos on mobile platforms. A model is derived that describes the energy consumption of the new HEVC decoder for intra-coded videos. We show that the relative estimation error of the model is smaller than 3.2% and that the model can be used to build encoders aiming at minimizing decoding energy.

* 4 pages, 7 figures
