Amy Esler

Video Human Segmentation using Fuzzy Object Models and its Application to Body Pose Estimation of Toddlers for Behavior Studies

May 29, 2013

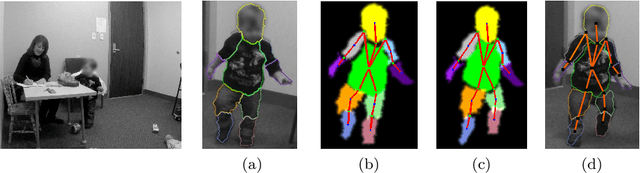

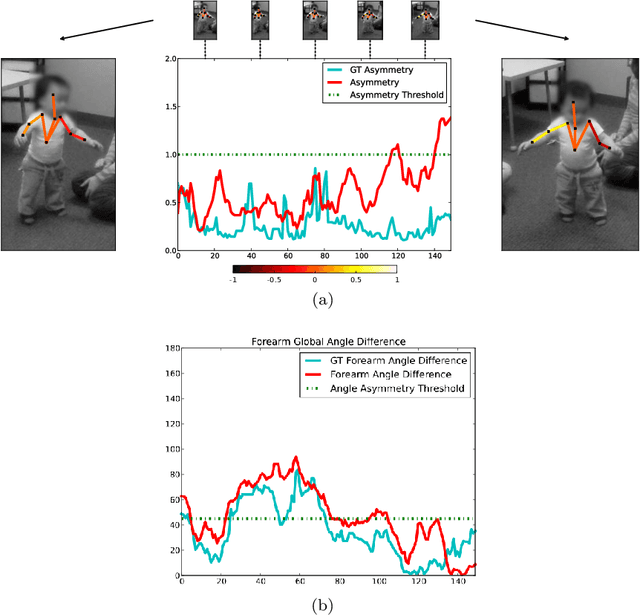

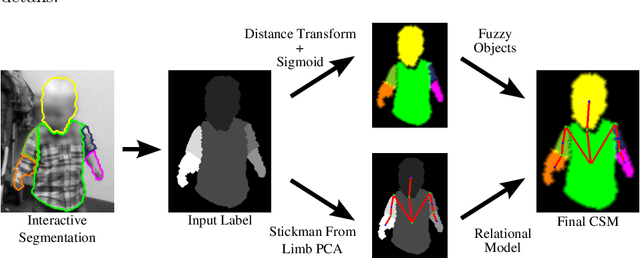

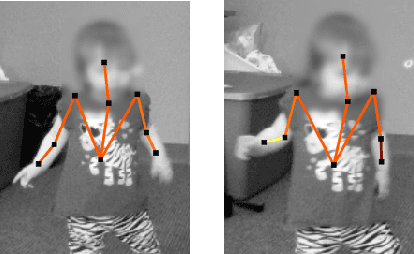

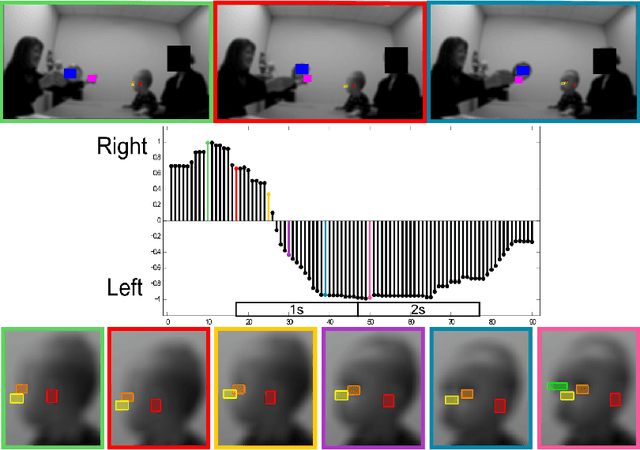

Abstract:Video object segmentation is a challenging problem due to the presence of deformable, connected, and articulated objects, intra- and inter-object occlusions, object motion, and poor lighting. Some of these challenges call for object models that can locate a desired object and separate it from its surrounding background, even when both share similar colors and textures. In this work, we extend a fuzzy object model, named cloud system model (CSM), to handle video segmentation, and evaluate it for body pose estimation of toddlers at risk of autism. CSM has been successfully used to model the parts of the brain (cerebrum, left and right brain hemispheres, and cerebellum) in order to automatically locate and separate them from each other, the connected brain stem, and the background in 3D MR-images. In our case, the objects are articulated parts (2D projections) of the human body, which can deform, cause self-occlusions, and move along the video. The proposed CSM extension handles articulation by connecting the individual clouds, body parts, of the system using a 2D stickman model. The stickman representation naturally allows us to extract 2D body pose measures of arm asymmetry patterns during unsupported gait of toddlers, a possible behavioral marker of autism. The results show that our method can provide insightful knowledge to assist the specialist's observations during real in-clinic assessments.

Computer vision tools for the non-invasive assessment of autism-related behavioral markers

Nov 08, 2012

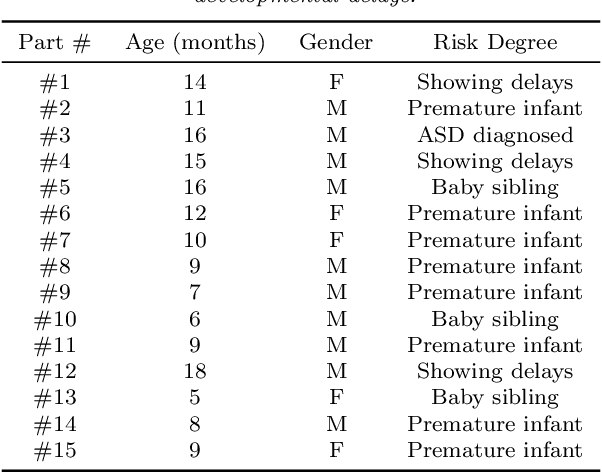

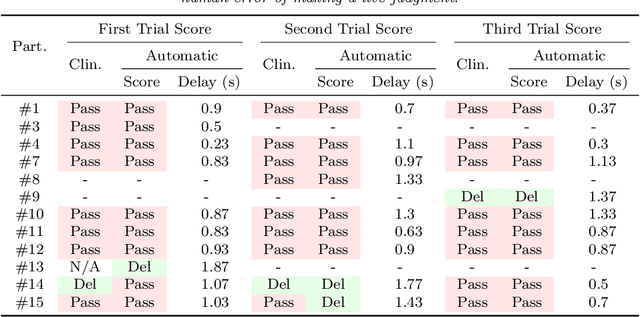

Abstract:The early detection of developmental disorders is key to child outcome, allowing interventions to be initiated that promote development and improve prognosis. Research on autism spectrum disorder (ASD) suggests behavioral markers can be observed late in the first year of life. Many of these studies involved extensive frame-by-frame video observation and analysis of a child's natural behavior. Although non-intrusive, these methods are extremely time-intensive and require a high level of observer training; thus, they are impractical for clinical and large population research purposes. Diagnostic measures for ASD are available for infants but are only accurate when used by specialists experienced in early diagnosis. This work is a first milestone in a long-term multidisciplinary project that aims at helping clinicians and general practitioners accomplish this early detection/measurement task automatically. We focus on providing computer vision tools to measure and identify ASD behavioral markers based on components of the Autism Observation Scale for Infants (AOSI). In particular, we develop algorithms to measure three critical AOSI activities that assess visual attention. We augment these AOSI activities with an additional test that analyzes asymmetrical patterns in unsupported gait. The first set of algorithms involves assessing head motion by tracking facial features, while the gait analysis relies on joint foreground segmentation and 2D body pose estimation in video. We show results that provide insightful knowledge to augment the clinician's behavioral observations obtained from real in-clinic assessments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge