Amadeus Aristo Winarto

Explaining Adversarial Vulnerability with a Data Sparsity Hypothesis

Mar 01, 2021

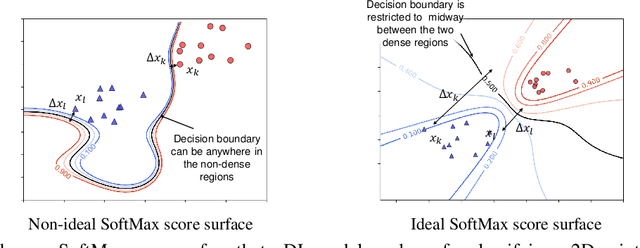

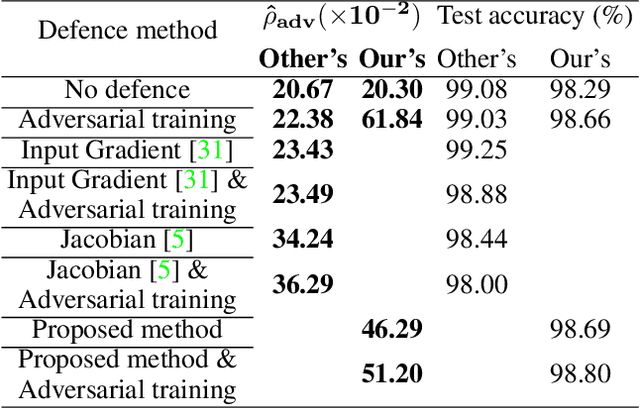

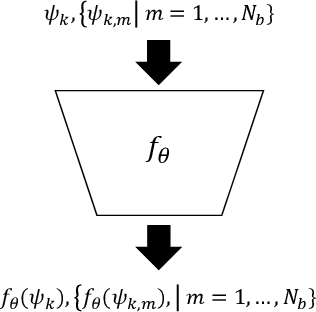

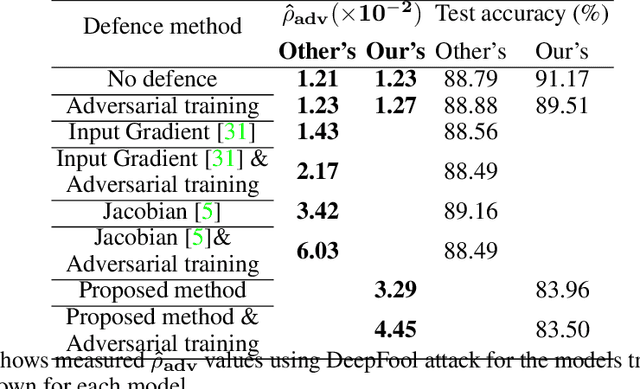

Abstract: Despite many proposed algorithms for making deep learning (DL) models robust, DL models remain susceptible to adversarial attacks. We hypothesize that the adversarial vulnerability of DL models stems from two factors. The first factor is data sparsity: in the high-dimensional data space, there are large regions outside the support of the data distribution. The second factor is the existence of many redundant parameters in DL models. Owing to these factors, different models are able to come up with different decision boundaries with comparably high prediction accuracy. The appearance of the decision boundaries in the space outside the support of the data distribution does not affect the prediction accuracy of the model. However, it makes an important difference to the adversarial robustness of the model. We propose that the ideal decision boundary should be as far as possible from the support of the data distribution. In this paper, we develop a training framework for DL models to learn such decision boundaries, spanning the space around the class distributions further from the data points themselves. Semi-supervised learning was deployed to achieve this objective by leveraging unlabeled data generated in the space outside the support of the data distribution. We measure the adversarial robustness of models trained using this framework against well-known adversarial attacks. We found that our results, as well as those of other regularization methods and adversarial training, support our data sparsity hypothesis. We show that the unlabeled data generated by noise using our framework is almost as effective for adversarial robustness as unlabeled data sourced from existing data sets or generated by synthesis algorithms. Our code is available at https://github.com/MahsaPaknezhad/AdversariallyRobustTraining.

Fence GAN: Towards Better Anomaly Detection

Apr 02, 2019

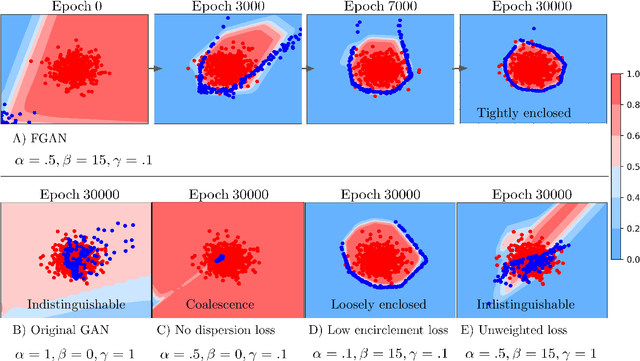

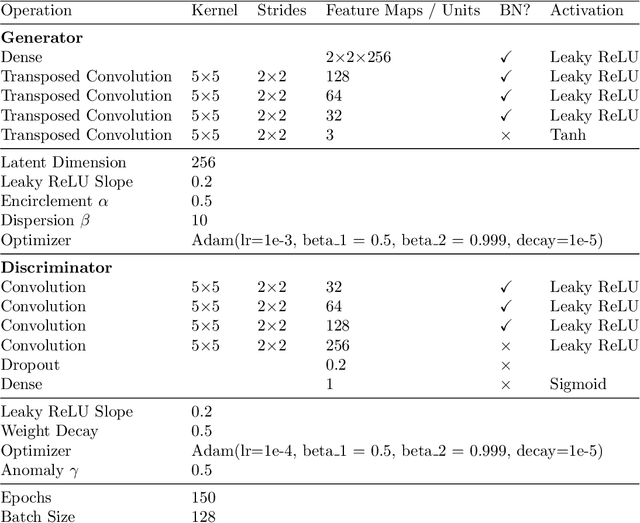

Abstract: Anomaly detection is a classical problem where the aim is to detect anomalous data that do not belong to the normal data distribution. Current state-of-the-art methods for anomaly detection on complex high-dimensional data are based on the generative adversarial network (GAN). However, the traditional GAN loss is not directly aligned with the anomaly detection objective: it encourages the distribution of the generated samples to overlap with the real data and so the resulting discriminator has been found to be ineffective as an anomaly detector. In this paper, we propose simple modifications to the GAN loss such that the generated samples lie at the boundary of the real data distribution. With our modified GAN loss, our anomaly detection method, called Fence GAN (FGAN), directly uses the discriminator score as an anomaly threshold. Our experimental results using the MNIST, CIFAR10 and KDD99 datasets show that Fence GAN yields the best anomaly classification accuracy compared to state-of-the-art methods.
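The abstract does not spell out the modified loss, so the following is only an illustrative sketch of the two ideas it names: a generator objective that pulls generated samples toward a boundary of the real data, and a discriminator score used directly as an anomaly threshold. The target score `alpha`, the function names, and the choice of an absolute-gap penalty are assumptions made for illustration, not details taken from the listing.

```python
import numpy as np

def boundary_generator_loss(d_scores_fake, alpha=0.5):
    """Hypothetical boundary-seeking generator loss.

    Mean absolute gap between the discriminator scores of generated
    samples and a target score `alpha` (an assumed hyperparameter).
    It is zero when every generated sample scores exactly `alpha`,
    i.e. sits on the level set D(x) = alpha rather than on the data.
    """
    d = np.asarray(d_scores_fake, dtype=float)
    return float(np.mean(np.abs(alpha - d)))

def flag_anomalies(d_scores, threshold=0.5):
    """Flag samples whose discriminator score is below `threshold`."""
    return np.asarray(d_scores, dtype=float) < threshold
```

Under these assumptions, a trained discriminator would score in-distribution inputs above the threshold and inputs beyond the learned boundary below it, so `flag_anomalies` reduces detection to a single comparison per sample.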
