Alexander Mock

Mesh-based Object Tracking for Dynamic Semantic 3D Scene Graphs via Ray Tracing

Aug 09, 2024

Abstract:In this paper, we present a novel method for 3D geometric scene graph generation using range sensors and RGB cameras. We initially detect instance-wise keypoints with a YOLOv8s model to compute 6D pose estimates of known objects by solving PnP. We use a ray tracing approach to track a geometric scene graph consisting of mesh models of object instances. In contrast to classical point-to-point matching, this leads to more robust results, especially under occlusions between objects instances. We show that using this hybrid strategy leads to robust self-localization, pre-segmentation of the range sensor data and accurate pose tracking of objects using the same environmental representation. All detected objects are integrated into a semantic scene graph. This scene graph then serves as a front end to a semantic mapping framework to allow spatial reasoning.

FeatSense -- A Feature-based Registration Algorithm with GPU-accelerated TSDF-Mapping Backend for NVIDIA Jetson Boards

Oct 09, 2023Abstract:This paper presents FeatSense, a feature-based GPU-accelerated SLAM system for high resolution LiDARs, combined with a map generation algorithm for real-time generation of large Truncated Signed Distance Fields (TSDFs) on embedded hardware. FeatSense uses LiDAR point cloud features for odometry estimation and point cloud registration. The registered point clouds are integrated into a global Truncated Signed Distance Field (TSDF) representation. FeatSense is intended to run on embedded systems with integrated GPU-accelerator like NVIDIA Jetson boards. In this paper, we present a real-time capable TSDF-SLAM system specially tailored for close coupled CPU/GPU systems. The implementation is evaluated in various structured and unstructured environments and benchmarked against existing reference datasets. The main contribution of this paper is the ability to register up to 128 scan lines of an Ouster OS1-128 LiDAR at 10Hz on a NVIDIA AGX Xavier while achieving a TSDF map generation speedup by a factor of 100 compared to previous work on the same power budget.

Towards 6D MCL for LiDARs in 3D TSDF Maps on Embedded Systems with GPUs

Oct 06, 2023Abstract:Monte Carlo Localization is a widely used approach in the field of mobile robotics. While this problem has been well studied in the 2D case, global localization in 3D maps with six degrees of freedom has so far been too computationally demanding. Hence, no mobile robot system has yet been presented in literature that is able to solve it in real-time. The computationally most intensive step is the evaluation of the sensor model, but it also offers high parallelization potential. This work investigates the massive parallelization of the evaluation of particles in truncated signed distance fields for three-dimensional laser scanners on embedded GPUs. The implementation on the GPU is 30 times as fast and more than 50 times more energy efficient compared to a CPU implementation.

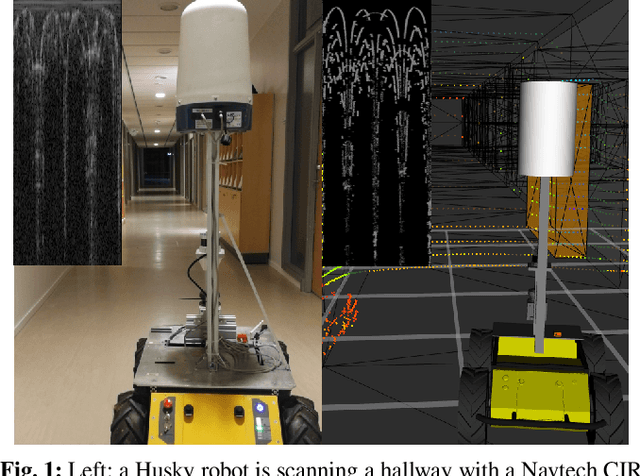

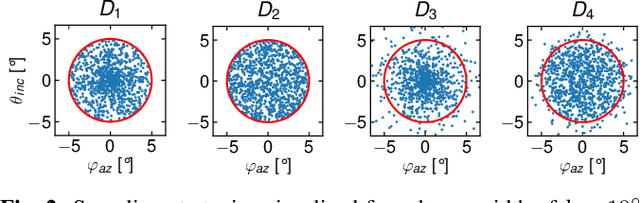

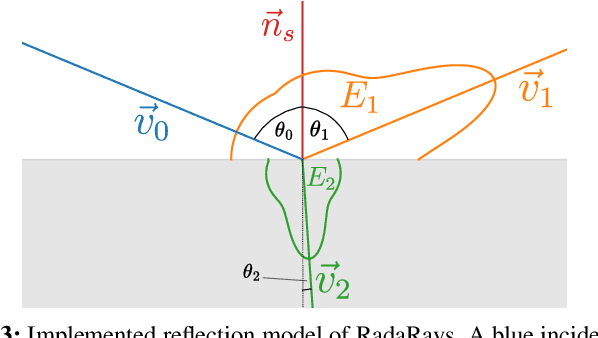

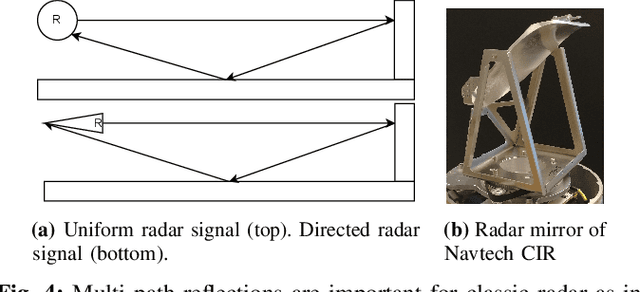

RadaRays: Real-time Simulation of Rotating FMCW Radar for Mobile Robotics via Hardware-accelerated Ray Tracing

Oct 05, 2023

Abstract:RadaRays allows for the accurate modeling and simulation of rotating FMCW radar sensors in complex environments, including the simulation of reflection, refraction, and scattering of radar waves. Our software is able to handle large numbers of objects and materials, making it suitable for use in a variety of mobile robotics applications. We demonstrate the effectiveness of RadaRays through a series of experiments and show that it can more accurately reproduce the behavior of FMCW radar sensors in a variety of environments, compared to the ray casting-based lidar-like simulations that are commonly used in simulators for autonomous driving such as CARLA. Our experiments additionally serve as valuable reference point for researchers to evaluate their own radar simulations. By using RadaRays, developers can significantly reduce the time and cost associated with prototyping and testing FMCW radar-based algorithms. We also provide a Gazebo plugin that makes our work accessible to the mobile robotics community.

MICP-L: Fast parallel simulative Range Sensor to Mesh registration for Robot Localization

Oct 25, 2022Abstract:Triangle mesh-based maps have proven to be a powerful 3D representation of the environment, allowing robots to navigate using universal methods, indoors as well as in challenging outdoor environments with tunnels, hills and varying slopes. However, any robot that navigates autonomously necessarily requires stable, accurate, and continuous localization in such a mesh map where it plans its paths and missions. We present MICP-L, a novel and very fast \textit{Mesh ICP Localization} method that can register one or more range sensors directly on a triangle mesh map to continuously localize a robot, determining its 6D pose in the map. Correspondences between a range sensor and the mesh are found through simulations accelerated with the latest RTX hardware. With MICP-L, a correction can be performed quickly and in parallel even with combined data from different range sensor models. With this work, we aim to significantly advance the development in the field of mesh-based environment representation for autonomous robotic applications. MICP-L is open source and fully integrated with ROS and tf.

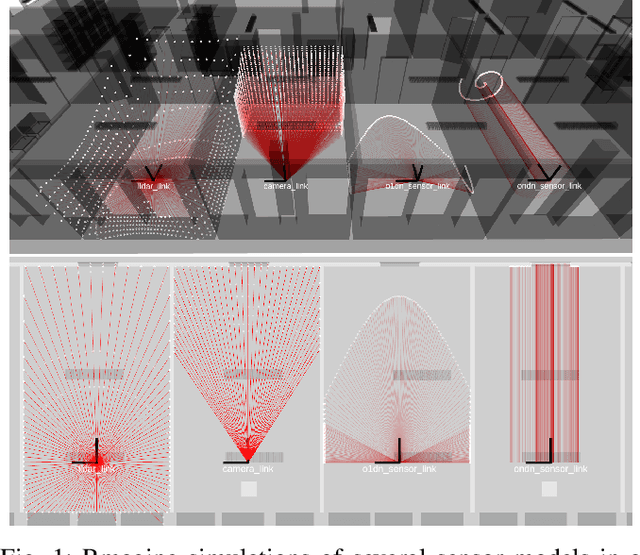

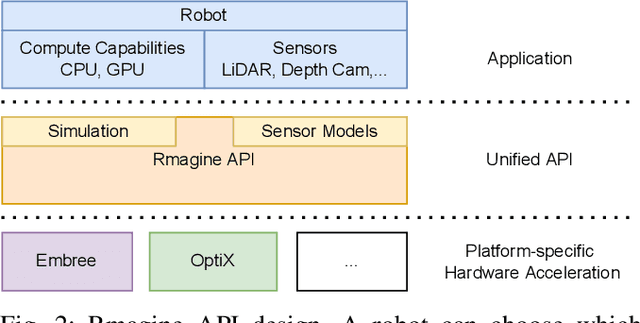

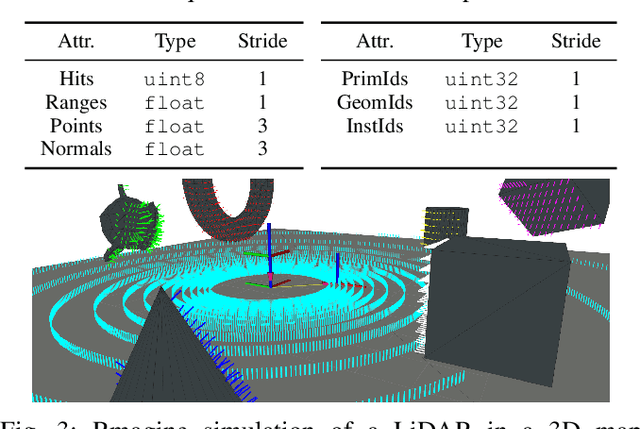

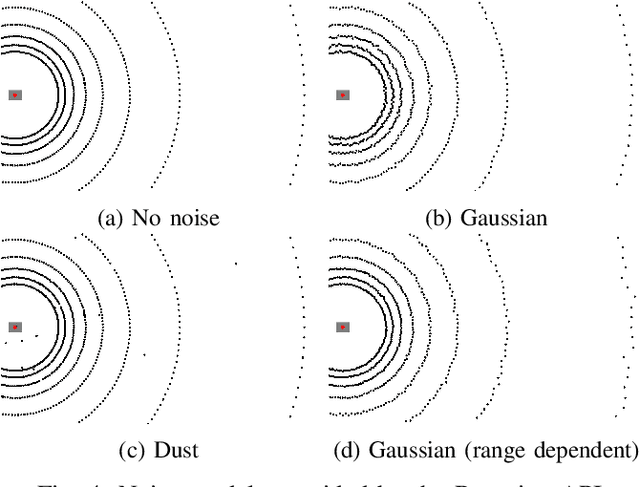

Rmagine: 3D Range Sensor Simulation in Polygonal Maps via Raytracing for Embedded Hardware on Mobile Robots

Sep 27, 2022

Abstract:Sensor simulation has emerged as a promising and powerful technique to find solutions to many real-world robotic tasks like localization and pose tracking.However, commonly used simulators have high hardware requirements and are therefore used mostly on high-end computers. In this paper, we present an approach to simulate range sensors directly on embedded hardware of mobile robots that use triangle meshes as environment map. This library called Rmagine allows a robot to simulate sensor data for arbitrary range sensors directly on board via raytracing. Since robots typically only have limited computational resources, the Rmagine aims at being flexible and lightweight, while scaling well even to large environment maps. It runs on several platforms like Laptops or embedded computing boards like Nvidia Jetson by putting an unified API over the specific proprietary libraries provided by the hardware manufacturers. This work is designed to support the future development of robotic applications depending on simulation of range data that could previously not be computed in reasonable time on mobile systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge