Alexander Gaskell

Logically Consistent Adversarial Attacks for Soft Theorem Provers

Apr 29, 2022

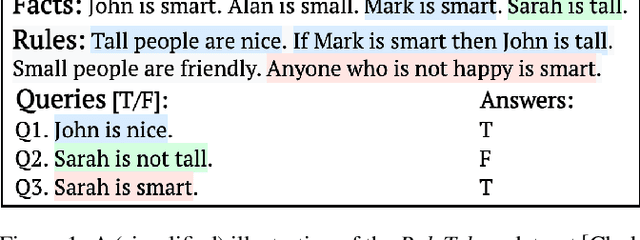

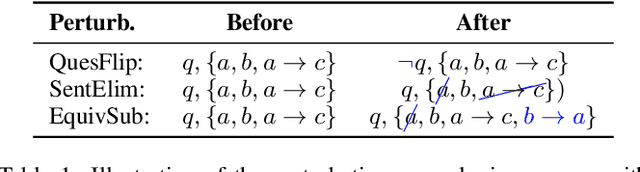

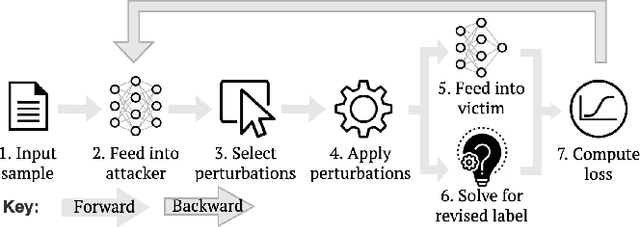

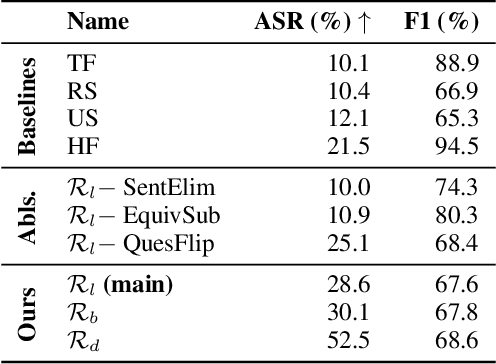

Abstract: Recent efforts within the AI community have yielded impressive results in "soft theorem proving" over natural language sentences using language models. We propose a novel generative adversarial framework for probing and improving these models' reasoning capabilities. Adversarial attacks in this domain suffer from the logical inconsistency problem, whereby perturbations to the input may alter the label. Our Logically consistent AdVersarial Attacker, LAVA, addresses this by combining a structured generative process with a symbolic solver, guaranteeing logical consistency. Our framework successfully generates adversarial attacks and identifies global weaknesses common across multiple target models. Our analyses reveal naive heuristics and vulnerabilities in these models' reasoning capabilities, exposing an incomplete grasp of logical deduction under logic programs. Finally, in addition to probing these models effectively, we show that training on the generated samples improves the target model's performance.
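Here is a concrete view of the logical inconsistency problem. When a theory is a set of facts plus if-then rules, editing a single fact can change whether the query is entailed. An attack that assumes the original label still holds may therefore silently mislabel its own sample.

The sketch below is a minimal, hedged illustration that recomputes the label symbolically after a perturbation. It assumes a simplified propositional logic program (no negation, closed-world assumption) and uses naive forward chaining. The names `forward_chain`, `entailed_label` and the toy `bob` theory are hypothetical. They are not LAVA's actual generator or solver.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

# Hypothetical representation: a rule is (body, head), meaning
# "if every atom in body holds, then head holds". Atoms are plain strings.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Set[str]:
    """Return every atom derivable from `facts` by repeatedly firing `rules`."""
    known = set(facts)
    changed = True
    while changed:  # iterate until no rule adds a new atom (a fixed point)
        changed = False
        for body, head in rules:
            if head not in known and body <= known:
                known.add(head)
                changed = True
    return known


def entailed_label(facts: Iterable[str], rules: List[Rule], query: str) -> bool:
    """Closed-world label: True iff `query` is derivable, otherwise False."""
    return query in forward_chain(facts, rules)


rules: List[Rule] = [
    (frozenset({"big(bob)"}), "strong(bob)"),
    (frozenset({"strong(bob)", "kind(bob)"}), "nice(bob)"),
]
facts = {"big(bob)"}
query = "nice(bob)"

original_label = entailed_label(facts, rules, query)  # False: kind(bob) is missing
perturbed_facts = facts | {"kind(bob)"}  # a hypothetical adversarial edit adds one fact
perturbed_label = entailed_label(perturbed_facts, rules, query)  # True: the label flips
```

Re-deriving the label from the perturbed theory, rather than copying the original one, is what keeps a generated sample logically consistent in this toy setting.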

Evaluating the COVID-19 Identification ResNet (CIdeR) on the INTERSPEECH COVID-19 from Audio Challenges

Jul 30, 2021

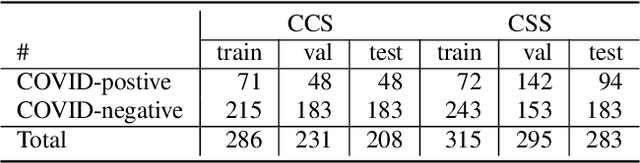

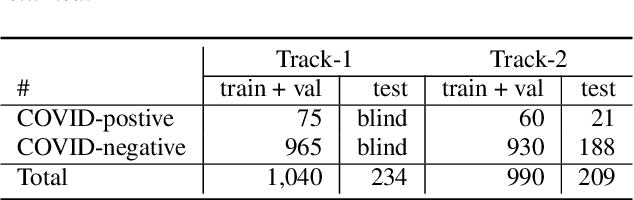

Abstract: We report on cross-running the recent COVID-19 Identification ResNet (CIdeR) on the two INTERSPEECH 2021 challenges for COVID-19 diagnosis from cough and speech audio: ComParE and DiCOVA. CIdeR is an end-to-end deep neural network originally designed to classify whether an individual is COVID-positive or COVID-negative from recordings of coughing and breathing in a published crowdsourced dataset. In the current study, we demonstrate CIdeR's potential for binary COVID-19 diagnosis on both the COVID-19 Cough and Speech Sub-Challenges of INTERSPEECH 2021 (ComParE and DiCOVA). CIdeR achieves significant improvements over several baselines.

Detecting COVID-19 from Breathing and Coughing Sounds using Deep Neural Networks

Dec 29, 2020

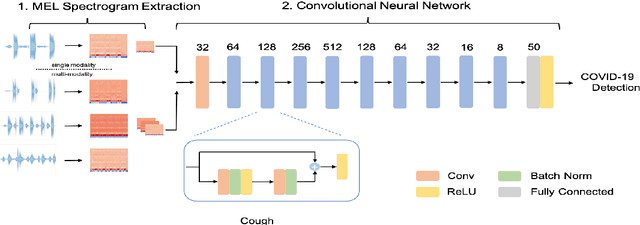

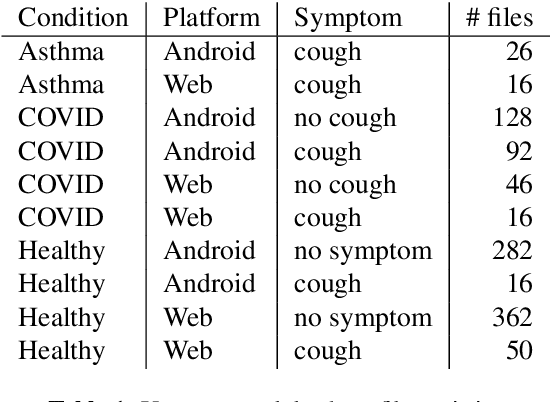

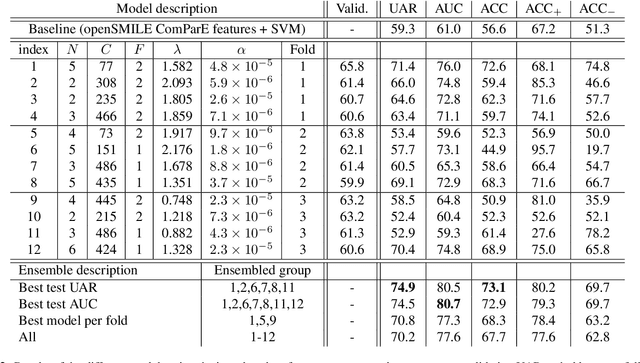

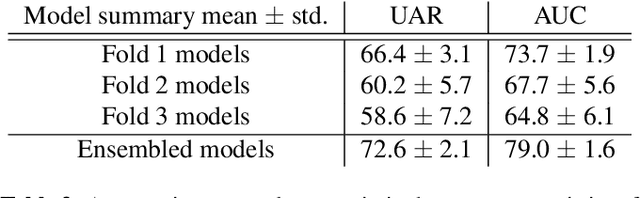

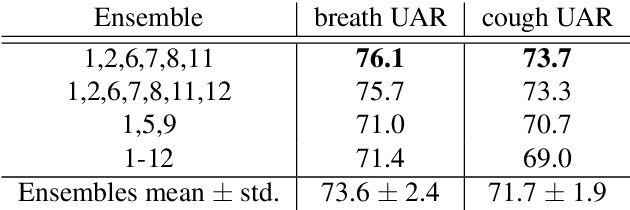

Abstract: The COVID-19 pandemic has affected the world unevenly. Industrial economies have been able to produce the tests necessary to track the spread of the virus and have mostly avoided complete lockdowns, while developing countries have faced issues with testing capacity. In this paper, we explore the use of deep learning models as a ubiquitous, low-cost pre-testing method for detecting COVID-19 from audio recordings of breathing or coughing taken with mobile devices or via the web. We adapt an ensemble of Convolutional Neural Networks that use raw breathing and coughing audio and spectrograms to classify whether a speaker is infected with COVID-19. The different models are obtained via automatic hyperparameter tuning using Bayesian Optimisation combined with HyperBand. The proposed method outperforms a traditional baseline approach by a large margin. Ultimately, by ensembling neural networks it achieves an Unweighted Average Recall (UAR) of 74.9%, or an Area Under the ROC Curve (AUC) of 80.7%, considering the best test set result across breathing and coughing in a strictly subject-independent manner. In isolation, breathing sounds appear slightly better suited than coughing sounds (76.1% vs. 73.7% UAR).
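UAR, the headline metric above, is the unweighted mean of the per-class recalls. For the binary COVID-positive/negative task it is the average of sensitivity and specificity. A classifier that always predicts one class therefore scores 50% however imbalanced the data are, whereas plain accuracy can look deceptively high.

A minimal Python sketch follows. The function name and the toy labels are illustrative assumptions and are not taken from the paper's data or code.

```python
from typing import Hashable, Sequence


def unweighted_average_recall(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Mean of per-class recalls; every class counts equally regardless of its size."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    recalls = []
    for cls in set(y_true):
        # Indices of the samples whose true label is `cls`.
        members = [i for i, t in enumerate(y_true) if t == cls]
        correct = sum(1 for i in members if y_pred[i] == cls)
        recalls.append(correct / len(members))
    return sum(recalls) / len(recalls)


# Toy, made-up labels: 1 = COVID-positive, 0 = COVID-negative (4 positives, 6 negatives).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
always_negative = [0] * 10
some_model = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]

accuracy = sum(t == p for t, p in zip(y_true, always_negative)) / len(y_true)  # 0.6
uar_trivial = unweighted_average_recall(y_true, always_negative)  # (0/4 + 6/6) / 2 = 0.5
uar_model = unweighted_average_recall(y_true, some_model)  # (3/4 + 4/6) / 2, about 0.708
```

The trivial "always negative" predictor reaches 60% accuracy but only chance-level UAR, which is why UAR suits imbalanced diagnosis data. The same quantity is what scikit-learn's `recall_score(y_true, y_pred, average="macro")` computes.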
