Alex Kolmus

Generative Poisoning Using Random Discriminators

Nov 02, 2022

Abstract: We introduce ShortcutGen, a new data poisoning attack that generates sample-dependent, error-minimizing perturbations by learning a generator. The key novelty of ShortcutGen is the use of a randomly initialized discriminator, which provides the spurious shortcuts needed for generating poisons. Unlike recent iterative methods, ShortcutGen can generate perturbations with only one forward pass in a label-free manner, and compared to the only existing generative method, DeepConfuse, ShortcutGen is faster and simpler to train while remaining competitive. We also demonstrate that integrating a simple augmentation strategy can further boost the robustness of ShortcutGen against early stopping, and that combining augmentation and non-augmentation leads to new state-of-the-art results in terms of final validation accuracy, especially in the challenging transfer scenario. Lastly, by uncovering its working mechanism, we speculate that learning a more general representation space could allow ShortcutGen to work for unseen data.

Going Grayscale: The Road to Understanding and Improving Unlearnable Examples

Nov 25, 2021

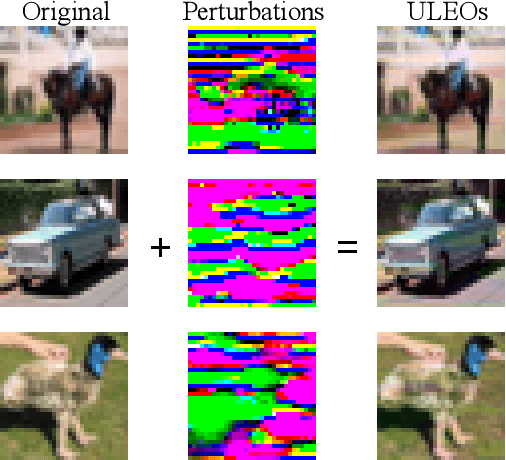

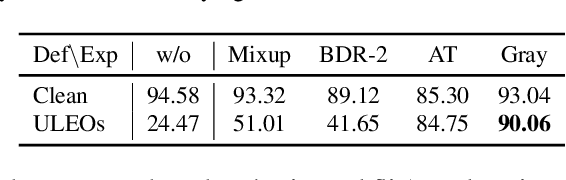

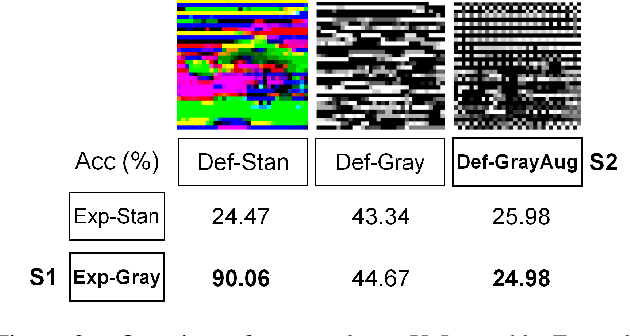

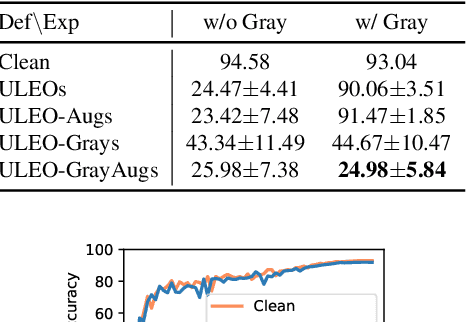

Abstract: Recent work has shown that imperceptible perturbations can be applied to craft unlearnable examples (ULEs), i.e. images whose content cannot be used to improve a classifier during training. In this paper, we reveal the road that researchers should follow for understanding ULEs and improving ULEs as they were originally formulated (ULEOs). The paper makes four contributions. First, we show that ULEOs exploit color and, consequently, their effects can be mitigated by simple grayscale pre-filtering, without resorting to adversarial training. Second, we propose an extension to ULEOs, called ULEO-GrayAugs, that forces the generated ULEs away from channel-wise color perturbations by making use of grayscale knowledge and data augmentations during optimization. Third, we show that ULEOs generated using Multi-Layer Perceptrons (MLPs) are effective in the case of complex Convolutional Neural Network (CNN) classifiers, suggesting that CNNs suffer from a specific vulnerability to ULEs. Fourth, we demonstrate that when a classifier is trained on ULEOs, adversarial training will prevent a drop in accuracy measured both on clean images and on adversarial images. Taken together, our contributions represent a substantial advance in the state of the art of unlearnable examples, but also reveal important characteristics of their behavior that must be better understood in order to achieve further improvements.
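To make the grayscale pre-filtering idea concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's pipeline: the function name `to_grayscale`, the toy image, the perturbation size `delta`, and the choice of ITU-R BT.601 luma weights are all assumptions introduced here. It shows how a perturbation that pushes color channels in opposite directions is largely attenuated once each pixel is collapsed to a single luma value.

```python
def to_grayscale(image):
    """Replace each RGB pixel with its luma, replicated over three channels.

    `image` is a list of rows, each row a list of (r, g, b) floats in [0, 1].
    The BT.601 weights below are an assumed choice of grayscale conversion.
    """
    out = []
    for row in image:
        new_row = []
        for r, g, b in row:
            y = 0.299 * r + 0.587 * g + 0.114 * b
            new_row.append((y, y, y))
        out.append(new_row)
    return out


# Toy 1x2 "image" and a hypothetical channel-wise color perturbation:
# red is raised and blue is lowered by the same small amount.
clean = [[(0.5, 0.5, 0.5), (0.2, 0.4, 0.6)]]
delta = 0.03
perturbed = [[(r + delta, g, b - delta) for r, g, b in row] for row in clean]

gray_clean = to_grayscale(clean)
gray_pert = to_grayscale(perturbed)

# What is left of the perturbation after grayscale filtering, per pixel.
residual = [
    abs(p[0] - c[0]) for p, c in zip(gray_pert[0], gray_clean[0])
]
print(residual)
```

In this toy case the residual is the weighted difference `(0.299 - 0.114) * delta`, which is well below `delta` itself. A perturbation that shifts all channels equally would pass through the filter unchanged, so the sketch only illustrates the channel-wise case the abstract describes.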

Swift sky localization of gravitational waves using deep learning seeded importance sampling

Nov 01, 2021

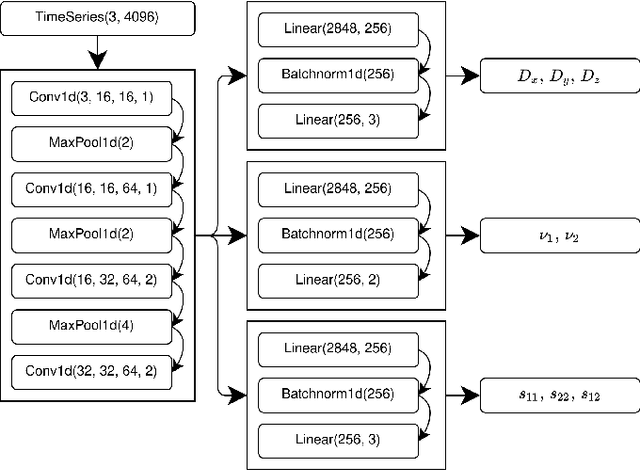

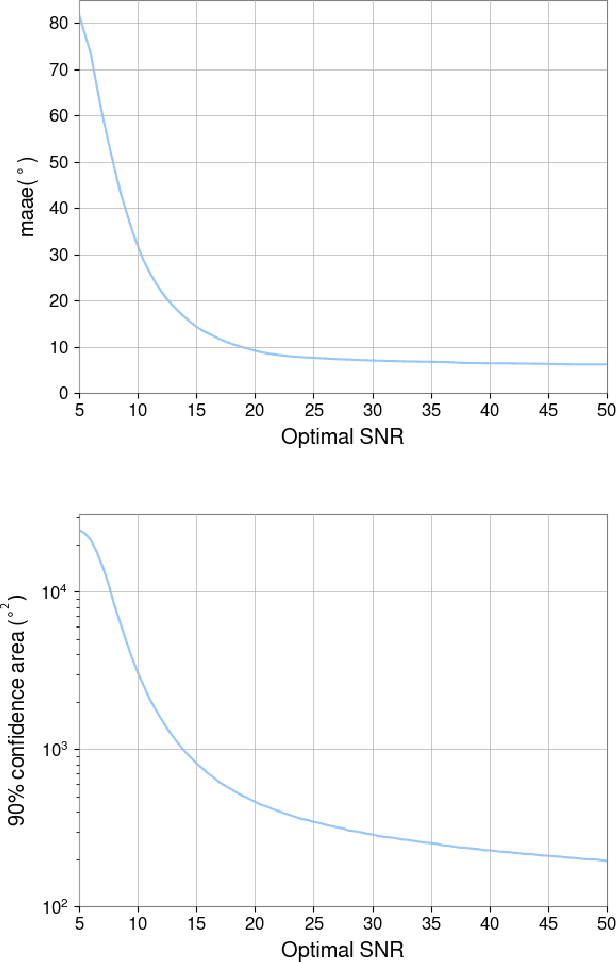

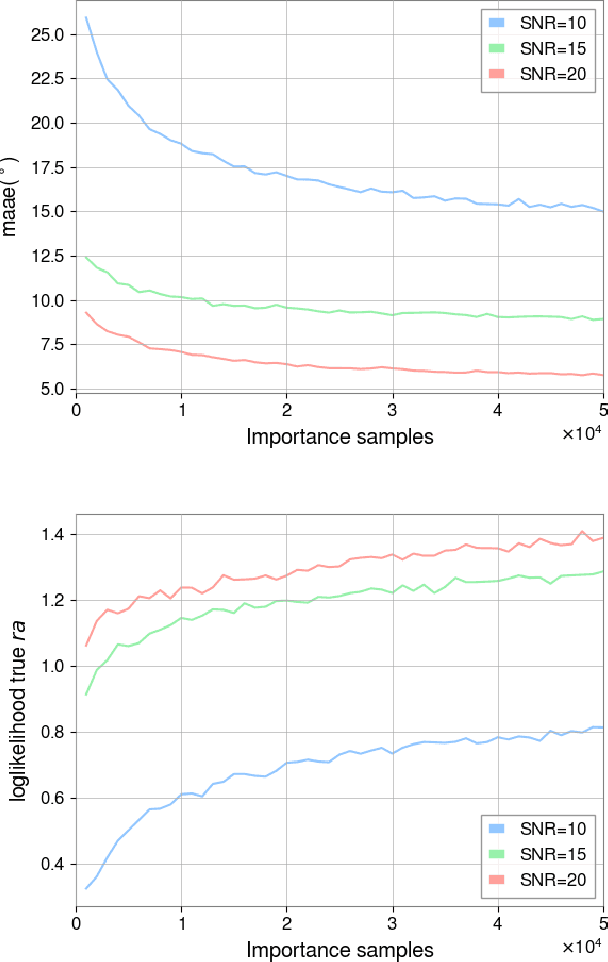

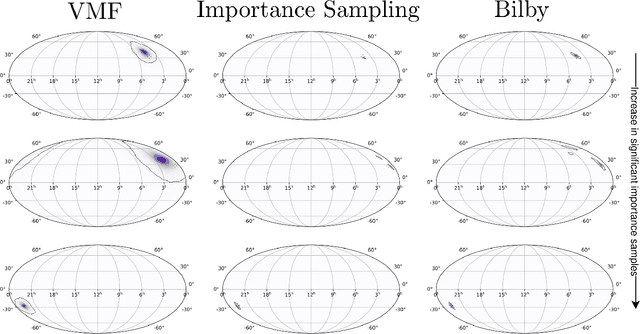

Abstract: Fast, highly accurate, and reliable inference of the sky origin of gravitational waves would enable real-time multi-messenger astronomy. Current Bayesian inference methodologies, although highly accurate and reliable, are slow. Deep learning models have shown themselves to be accurate and extremely fast for inference tasks on gravitational waves, but their output is inherently questionable due to the black-box nature of neural networks. In this work, we join Bayesian inference and deep learning by applying importance sampling on an approximate posterior generated by a multi-headed convolutional neural network. The neural network parametrizes von Mises-Fisher and Gaussian distributions for the sky coordinates and two masses for given simulated gravitational wave injections in the LIGO and Virgo detectors. In a few minutes, we generate skymaps for unseen gravitational-wave events that closely resemble predictions generated using Bayesian inference. Furthermore, we can detect poor predictions from the neural network and quickly flag them.
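The idea of seeding importance sampling with an approximate posterior can be sketched in one dimension. Everything in the block below is a toy assumption rather than the paper's setup: the target is a standard normal standing in for the true posterior, a Gaussian with parameters `mu` and `sigma` stands in for the distribution a network would output, and the effective sample size (ESS) is used here as one plausible diagnostic for flagging a poor proposal.

```python
import math
import random


def log_target(x):
    # Unnormalized log-density of the toy "true" posterior (standard normal).
    return -0.5 * x * x


def log_gauss(x, mu, sigma):
    # Log-density of the Gaussian proposal N(mu, sigma^2).
    return -0.5 * ((x - mu) / sigma) ** 2 - math.log(sigma * math.sqrt(2 * math.pi))


def importance_sample(mu, sigma, n, rng):
    """Self-normalized importance sampling with a Gaussian proposal.

    Returns the weighted posterior-mean estimate and the effective sample size.
    """
    xs = [rng.gauss(mu, sigma) for _ in range(n)]
    log_w = [log_target(x) - log_gauss(x, mu, sigma) for x in xs]
    shift = max(log_w)  # subtract the max for numerical stability
    w = [math.exp(lw - shift) for lw in log_w]
    total = sum(w)
    w = [wi / total for wi in w]
    mean = sum(wi * xi for wi, xi in zip(w, xs))
    ess = 1.0 / sum(wi * wi for wi in w)
    return mean, ess


rng = random.Random(0)
n = 5000
# A proposal close to the target versus one that misses it badly.
good_mean, good_ess = importance_sample(0.3, 1.5, n, rng)
bad_mean, bad_ess = importance_sample(6.0, 0.5, n, rng)
print(good_mean, good_ess)
print(bad_mean, bad_ess)
```

When the proposal overlaps the target well, the weights are nearly uniform and the ESS stays close to `n`. When the proposal misses the target, a handful of samples carry almost all the weight and the ESS collapses, which gives a cheap signal that the proposal should not be trusted.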
