
Ajinkya Mulay

Towards Quantifying the Carbon Emissions of Differentially Private Machine Learning

Jul 14, 2021

Figures and Tables:

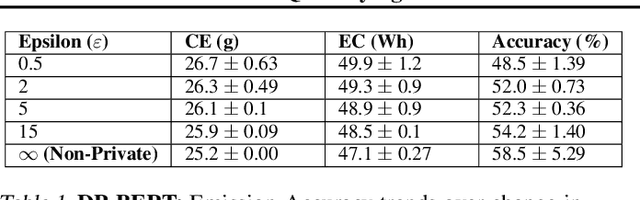

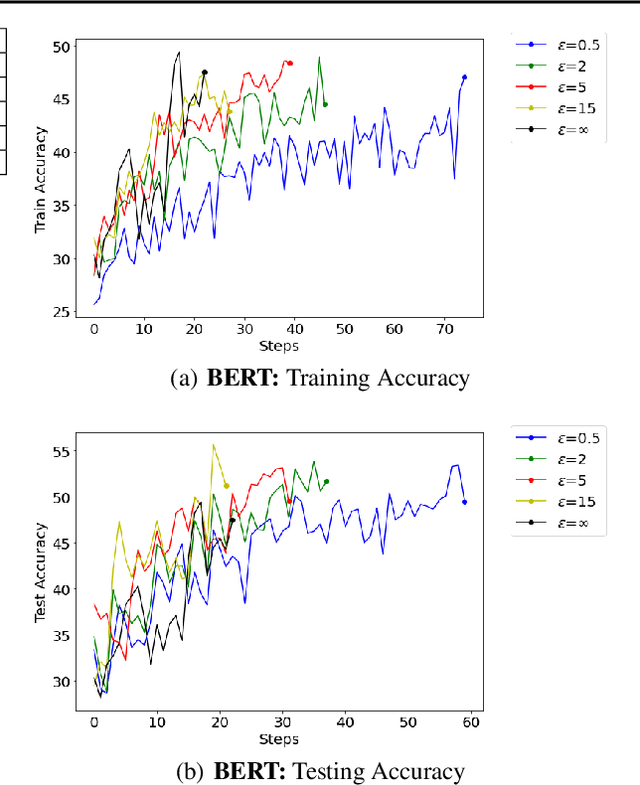

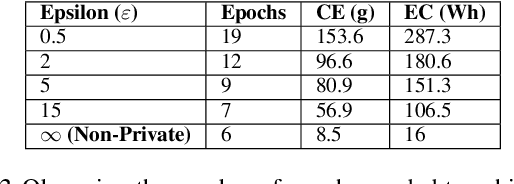

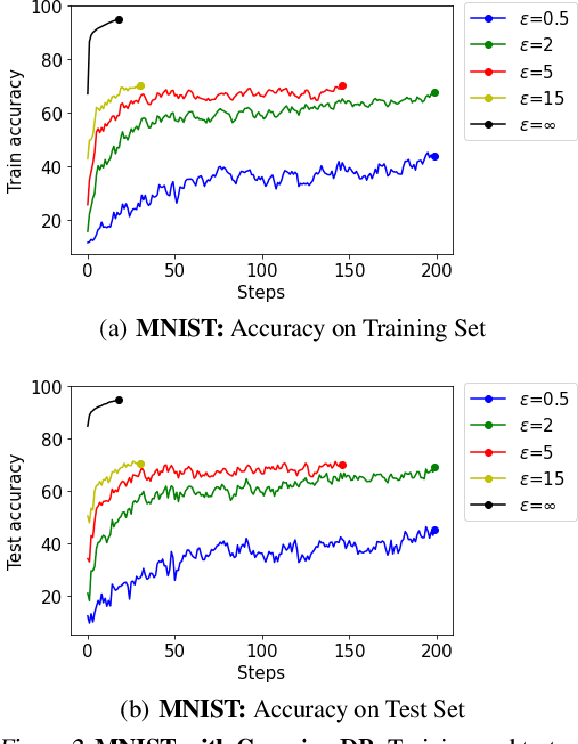

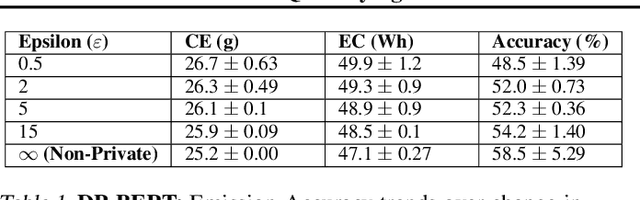

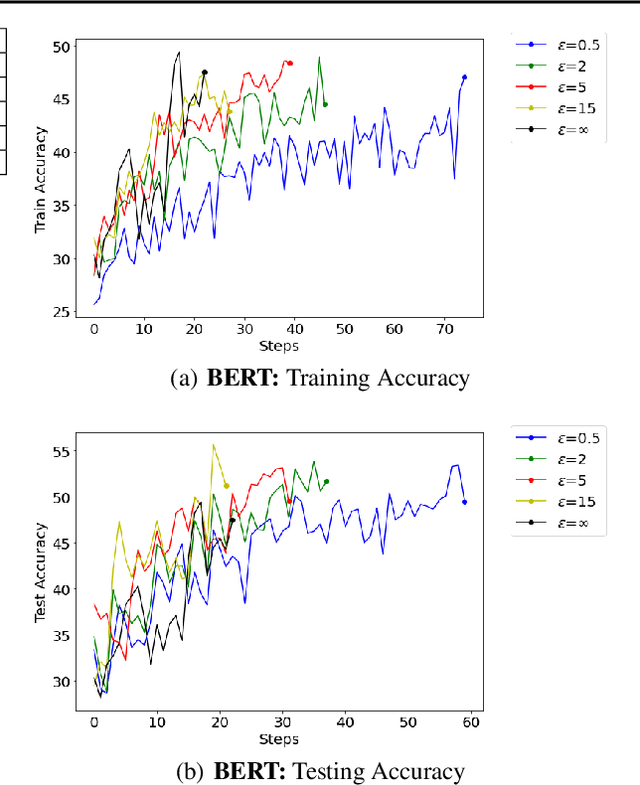

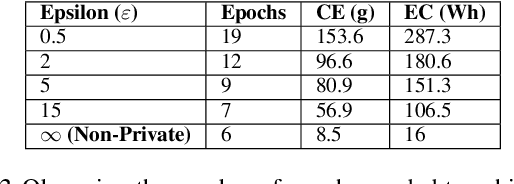

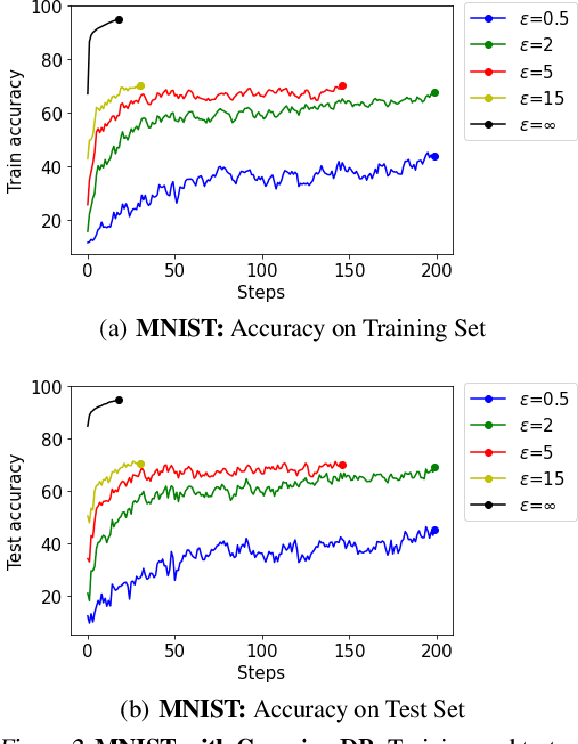

Abstract: In recent years, machine learning techniques trained on large-scale datasets have achieved remarkable performance. Differential privacy, which works by adding noise during learning, provides strong privacy guarantees for such algorithms. Its cost is often reduced model accuracy and slower convergence. This paper investigates how differential privacy affects the carbon footprint of learning algorithms, whether through longer run-times or through failed experiments. Based on extensive experiments, it also offers guidance on choosing noise levels that balance the desired level of privacy against reduced carbon emissions.
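The abstract does not state which private training algorithm or emissions-measurement method is used. The sketch below is therefore only an illustration under stated assumptions: it assumes a DP-SGD-style update (clip each per-example gradient, add Gaussian noise scaled by a noise multiplier, then average) and a simple emissions estimate of energy times grid carbon intensity. All function names, parameters, and numbers are hypothetical and are not taken from the paper.

```python
import math
import random


def clip(grad, clip_norm):
    """Scale one per-example gradient so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def noisy_mean_gradient(per_example_grads, clip_norm, noise_multiplier, rng):
    """Hypothetical DP-SGD-style aggregate: clip, sum, add Gaussian noise, average.

    The noise standard deviation is noise_multiplier * clip_norm; a larger
    noise_multiplier gives stronger privacy but a noisier update.
    """
    n = len(per_example_grads)
    dim = len(per_example_grads[0])
    clipped = [clip(g, clip_norm) for g in per_example_grads]
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    sigma = noise_multiplier * clip_norm
    return [(s + rng.gauss(0.0, sigma)) / n for s in summed]


def estimated_emissions_kg(avg_power_kw, runtime_hours, intensity_kg_per_kwh):
    """Rough estimate: energy used (kWh) times carbon intensity (kg CO2eq per kWh)."""
    return avg_power_kw * runtime_hours * intensity_kg_per_kwh


if __name__ == "__main__":
    rng = random.Random(0)
    grads = [[3.0, 4.0], [0.3, 0.4]]  # made-up per-example gradients
    print(noisy_mean_gradient(grads, clip_norm=1.0, noise_multiplier=1.0, rng=rng))
    # Same hypothetical hardware and grid; a run that needs twice as long
    # to converge produces roughly twice the estimated emissions.
    print(estimated_emissions_kg(0.3, 10.0, 0.4))
    print(estimated_emissions_kg(0.3, 20.0, 0.4))
```

The connection to the abstract is the runtime term: if added noise slows convergence so that a model needs more training steps, the estimate grows linearly with the extra hours, and every failed run adds its full cost on top.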

* 4+3 pages; 6 figures; 8 tables. Accepted at the SRML workshop at ICML'21.
