Ahmad Lotfi

ArtBrain: An Explainable end-to-end Toolkit for Classification and Attribution of AI-Generated Art and Style

Dec 02, 2024Abstract:Recently, the quality of artworks generated using Artificial Intelligence (AI) has increased significantly, resulting in growing difficulties in detecting synthetic artworks. However, limited studies have been conducted on identifying the authenticity of synthetic artworks and their source. This paper introduces AI-ArtBench, a dataset featuring 185,015 artistic images across 10 art styles. It includes 125,015 AI-generated images and 60,000 pieces of human-created artwork. This paper also outlines a method to accurately detect AI-generated images and trace them to their source model. This work proposes a novel Convolutional Neural Network model based on the ConvNeXt model called AttentionConvNeXt. AttentionConvNeXt was implemented and trained to differentiate between the source of the artwork and its style with an F1-Score of 0.869. The accuracy of attribution to the generative model reaches 0.999. To combine the scientific contributions arising from this study, a web-based application named ArtBrain was developed to enable both technical and non-technical users to interact with the model. Finally, this study presents the results of an Artistic Turing Test conducted with 50 participants. The findings reveal that humans could identify AI-generated images with an accuracy of approximately 58%, while the model itself achieved a significantly higher accuracy of around 99%.

Enhanced Online Grooming Detection Employing Context Determination and Message-Level Analysis

Sep 12, 2024

Abstract:Online Grooming (OG) is a prevalent threat facing predominately children online, with groomers using deceptive methods to prey on the vulnerability of children on social media/messaging platforms. These attacks can have severe psychological and physical impacts, including a tendency towards revictimization. Current technical measures are inadequate, especially with the advent of end-to-end encryption which hampers message monitoring. Existing solutions focus on the signature analysis of child abuse media, which does not effectively address real-time OG detection. This paper proposes that OG attacks are complex, requiring the identification of specific communication patterns between adults and children. It introduces a novel approach leveraging advanced models such as BERT and RoBERTa for Message-Level Analysis and a Context Determination approach for classifying actor interactions, including the introduction of Actor Significance Thresholds and Message Significance Thresholds. The proposed method aims to enhance accuracy and robustness in detecting OG by considering the dynamic and multi-faceted nature of these attacks. Cross-dataset experiments evaluate the robustness and versatility of our approach. This paper's contributions include improved detection methodologies and the potential for application in various scenarios, addressing gaps in current literature and practices.

A Deep Learning Method for Classification of Biophilic Artworks

Mar 08, 2024

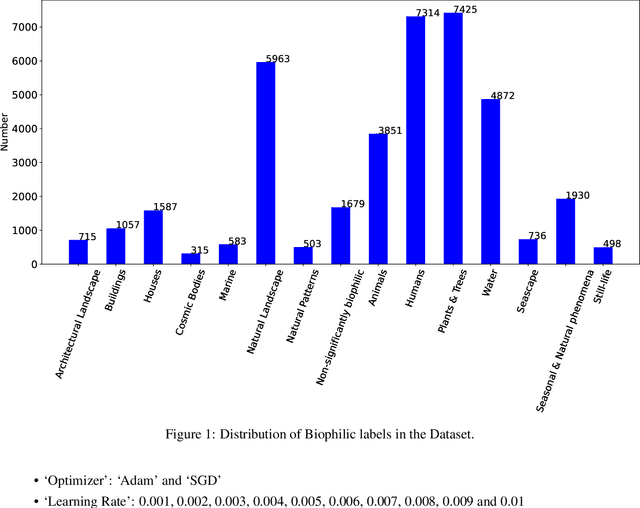

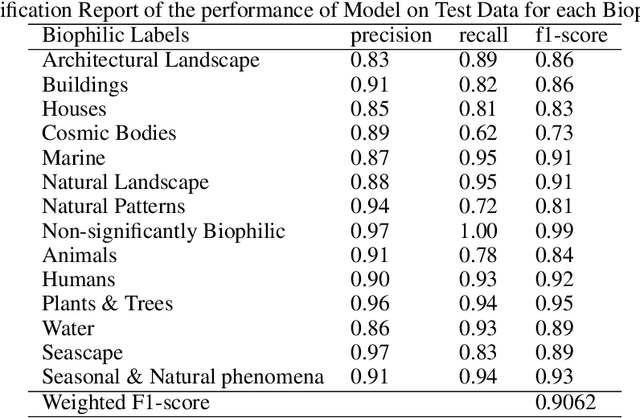

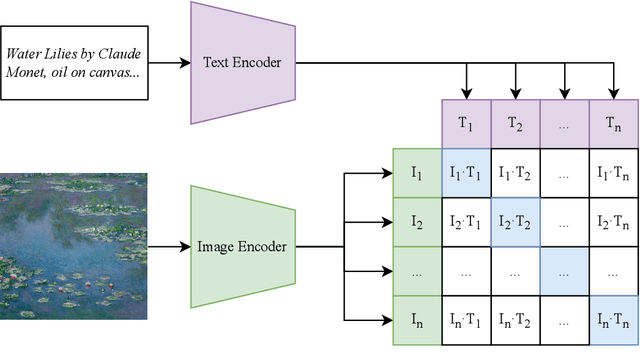

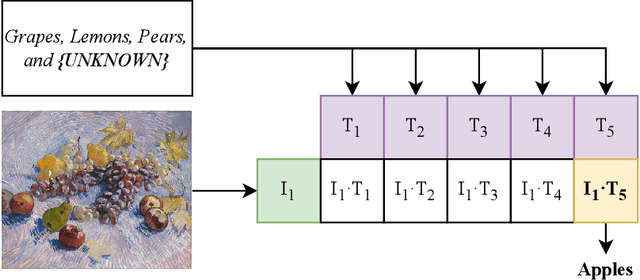

Abstract:Biophilia is an innate love for living things and nature itself that has been associated with a positive impact on mental health and well-being. This study explores the application of deep learning methods for the classification of Biophilic artwork, in order to learn and explain the different Biophilic characteristics present in a visual representation of a painting. Using the concept of Biophilia that postulates the deep connection of human beings with nature, we use an artificially intelligent algorithm to recognise the different patterns underlying the Biophilic features in an artwork. Our proposed method uses a lower-dimensional representation of an image and a decoder model to extract salient features of the image of each Biophilic trait, such as plants, water bodies, seasons, animals, etc., based on learnt factors such as shape, texture, and illumination. The proposed classification model is capable of extracting Biophilic artwork that not only helps artists, collectors, and researchers studying to interpret and exploit the effects of mental well-being on exposure to nature-inspired visual aesthetics but also enables a methodical exploration of the study of Biophilia and Biophilic artwork for aesthetic preferences. Using the proposed algorithms, we have also created a gallery of Biophilic collections comprising famous artworks from different European and American art galleries, which will soon be published on the Vieunite@ online community.

UDEEP: Edge-based Computer Vision for In-Situ Underwater Crayfish and Plastic Detection

Dec 21, 2023Abstract:Invasive signal crayfish have a detrimental impact on ecosystems. They spread the fungal-type crayfish plague disease (Aphanomyces astaci) that is lethal to the native white clawed crayfish, the only native crayfish species in Britain. Invasive signal crayfish extensively burrow, causing habitat destruction, erosion of river banks and adverse changes in water quality, while also competing with native species for resources and leading to declines in native populations. Moreover, pollution exacerbates the vulnerability of White-clawed crayfish, with their populations declining by over 90% in certain English counties, making them highly susceptible to extinction. To safeguard aquatic ecosystems, it is imperative to address the challenges posed by invasive species and discarded plastics in the United Kingdom's river ecosystem's. The UDEEP platform can play a crucial role in environmental monitoring by performing on-the-fly classification of Signal crayfish and plastic debris while leveraging the efficacy of AI, IoT devices and the power of edge computing (i.e., NJN). By providing accurate data on the presence, spread and abundance of these species, the UDEEP platform can contribute to monitoring efforts and aid in mitigating the spread of invasive species.

Real-time Detection of AI-Generated Speech for DeepFake Voice Conversion

Aug 24, 2023

Abstract:There are growing implications surrounding generative AI in the speech domain that enable voice cloning and real-time voice conversion from one individual to another. This technology poses a significant ethical threat and could lead to breaches of privacy and misrepresentation, thus there is an urgent need for real-time detection of AI-generated speech for DeepFake Voice Conversion. To address the above emerging issues, the DEEP-VOICE dataset is generated in this study, comprised of real human speech from eight well-known figures and their speech converted to one another using Retrieval-based Voice Conversion. Presenting as a binary classification problem of whether the speech is real or AI-generated, statistical analysis of temporal audio features through t-testing reveals that there are significantly different distributions. Hyperparameter optimisation is implemented for machine learning models to identify the source of speech. Following the training of 208 individual machine learning models over 10-fold cross validation, it is found that the Extreme Gradient Boosting model can achieve an average classification accuracy of 99.3% and can classify speech in real-time, at around 0.004 milliseconds given one second of speech. All data generated for this study is released publicly for future research on AI speech detection.

CIFAKE: Image Classification and Explainable Identification of AI-Generated Synthetic Images

Mar 24, 2023

Abstract:Recent technological advances in synthetic data have enabled the generation of images with such high quality that human beings cannot tell the difference between real-life photographs and Artificial Intelligence (AI) generated images. Given the critical necessity of data reliability and authentication, this article proposes to enhance our ability to recognise AI-generated images through computer vision. Initially, a synthetic dataset is generated that mirrors the ten classes of the already available CIFAR-10 dataset with latent diffusion which provides a contrasting set of images for comparison to real photographs. The model is capable of generating complex visual attributes, such as photorealistic reflections in water. The two sets of data present as a binary classification problem with regard to whether the photograph is real or generated by AI. This study then proposes the use of a Convolutional Neural Network (CNN) to classify the images into two categories; Real or Fake. Following hyperparameter tuning and the training of 36 individual network topologies, the optimal approach could correctly classify the images with 92.98% accuracy. Finally, this study implements explainable AI via Gradient Class Activation Mapping to explore which features within the images are useful for classification. Interpretation reveals interesting concepts within the image, in particular, noting that the actual entity itself does not hold useful information for classification; instead, the model focuses on small visual imperfections in the background of the images. The complete dataset engineered for this study, referred to as the CIFAKE dataset, is made publicly available to the research community for future work.

Dynamic Training of Liquid State Machines

Feb 06, 2023

Abstract:Spiking Neural Networks (SNNs) emerged as a promising solution in the field of Artificial Neural Networks (ANNs), attracting the attention of researchers due to their ability to mimic the human brain and process complex information with remarkable speed and accuracy. This research aimed to optimise the training process of Liquid State Machines (LSMs), a recurrent architecture of SNNs, by identifying the most effective weight range to be assigned in SNN to achieve the least difference between desired and actual output. The experimental results showed that by using spike metrics and a range of weights, the desired output and the actual output of spiking neurons could be effectively optimised, leading to improved performance of SNNs. The results were tested and confirmed using three different weight initialisation approaches, with the best results obtained using the Barabasi-Albert random graph method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge