Advaith V. Sethuraman

SLIM-VDB: A Real-Time 3D Probabilistic Semantic Mapping Framework

Dec 15, 2025Abstract:This paper introduces SLIM-VDB, a new lightweight semantic mapping system with probabilistic semantic fusion for closed-set or open-set dictionaries. Advances in data structures from the computer graphics community, such as OpenVDB, have demonstrated significantly improved computational and memory efficiency in volumetric scene representation. Although OpenVDB has been used for geometric mapping in robotics applications, semantic mapping for scene understanding with OpenVDB remains unexplored. In addition, existing semantic mapping systems lack support for integrating both fixed-category and open-language label predictions within a single framework. In this paper, we propose a novel 3D semantic mapping system that leverages the OpenVDB data structure and integrates a unified Bayesian update framework for both closed- and open-set semantic fusion. Our proposed framework, SLIM-VDB, achieves significant reduction in both memory and integration times compared to current state-of-the-art semantic mapping approaches, while maintaining comparable mapping accuracy. An open-source C++ codebase with a Python interface is available at https://github.com/umfieldrobotics/slim-vdb.

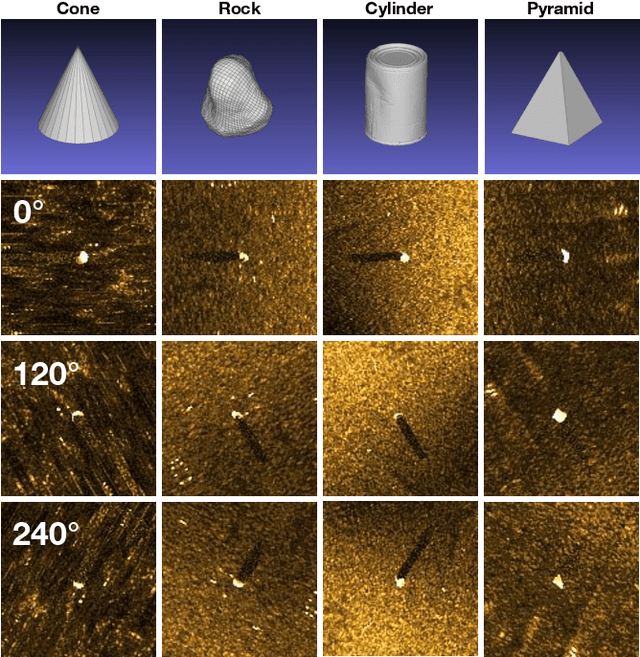

SonarSplat: Novel View Synthesis of Imaging Sonar via Gaussian Splatting

Mar 31, 2025

Abstract:In this paper, we present SonarSplat, a novel Gaussian splatting framework for imaging sonar that demonstrates realistic novel view synthesis and models acoustic streaking phenomena. Our method represents the scene as a set of 3D Gaussians with acoustic reflectance and saturation properties. We develop a novel method to efficiently rasterize learned Gaussians to produce a range/azimuth image that is faithful to the acoustic image formation model of imaging sonar. In particular, we develop a novel approach to model azimuth streaking in a Gaussian splatting framework. We evaluate SonarSplat using real-world datasets of sonar images collected from an underwater robotic platform in a controlled test tank and in a real-world river environment. Compared to the state-of-the-art, SonarSplat offers improved image synthesis capabilities (+2.5 dB PSNR). We also demonstrate that SonarSplat can be leveraged for azimuth streak removal and 3D scene reconstruction.

OceanSim: A GPU-Accelerated Underwater Robot Perception Simulation Framework

Mar 03, 2025Abstract:Underwater simulators offer support for building robust underwater perception solutions. Significant work has recently been done to develop new simulators and to advance the performance of existing underwater simulators. Still, there remains room for improvement on physics-based underwater sensor modeling and rendering efficiency. In this paper, we propose OceanSim, a high-fidelity GPU-accelerated underwater simulator to address this research gap. We propose advanced physics-based rendering techniques to reduce the sim-to-real gap for underwater image simulation. We develop OceanSim to fully leverage the computing advantages of GPUs and achieve real-time imaging sonar rendering and fast synthetic data generation. We evaluate the capabilities and realism of OceanSim using real-world data to provide qualitative and quantitative results. The project page for OceanSim is https://umfieldrobotics.github.io/OceanSim.

VAIR: Visuo-Acoustic Implicit Representations for Low-Cost, Multi-Modal Transparent Surface Reconstruction in Indoor Scenes

Nov 07, 2024

Abstract:Mobile robots operating indoors must be prepared to navigate challenging scenes that contain transparent surfaces. This paper proposes a novel method for the fusion of acoustic and visual sensing modalities through implicit neural representations to enable dense reconstruction of transparent surfaces in indoor scenes. We propose a novel model that leverages generative latent optimization to learn an implicit representation of indoor scenes consisting of transparent surfaces. We demonstrate that we can query the implicit representation to enable volumetric rendering in image space or 3D geometry reconstruction (point clouds or mesh) with transparent surface prediction. We evaluate our method's effectiveness qualitatively and quantitatively on a new dataset collected using a custom, low-cost sensing platform featuring RGB-D cameras and ultrasonic sensors. Our method exhibits significant improvement over state-of-the-art for transparent surface reconstruction.

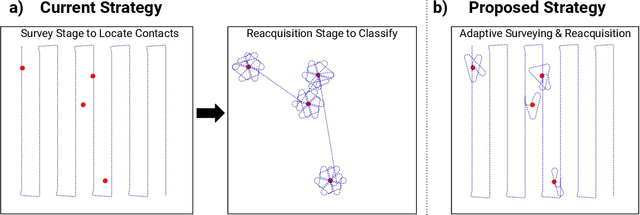

Learning Which Side to Scan: Multi-View Informed Active Perception with Side Scan Sonar for Autonomous Underwater Vehicles

Feb 02, 2024

Abstract:Autonomous underwater vehicles often perform surveys that capture multiple views of targets in order to provide more information for human operators or automatic target recognition algorithms. In this work, we address the problem of choosing the most informative views that minimize survey time while maximizing classifier accuracy. We introduce a novel active perception framework for multi-view adaptive surveying and reacquisition using side scan sonar imagery. Our framework addresses this challenge by using a graph formulation for the adaptive survey task. We then use Graph Neural Networks (GNNs) to both classify acquired sonar views and to choose the next best view based on the collected data. We evaluate our method using simulated surveys in a high-fidelity side scan sonar simulator. Our results demonstrate that our approach is able to surpass the state-of-the-art in classification accuracy and survey efficiency. This framework is a promising approach for more efficient autonomous missions involving side scan sonar, such as underwater exploration, marine archaeology, and environmental monitoring.

Machine Learning for Shipwreck Segmentation from Side Scan Sonar Imagery: Dataset and Benchmark

Jan 25, 2024Abstract:Open-source benchmark datasets have been a critical component for advancing machine learning for robot perception in terrestrial applications. Benchmark datasets enable the widespread development of state-of-the-art machine learning methods, which require large datasets for training, validation, and thorough comparison to competing approaches. Underwater environments impose several operational challenges that hinder efforts to collect large benchmark datasets for marine robot perception. Furthermore, a low abundance of targets of interest relative to the size of the search space leads to increased time and cost required to collect useful datasets for a specific task. As a result, there is limited availability of labeled benchmark datasets for underwater applications. We present the AI4Shipwrecks dataset, which consists of 24 distinct shipwreck sites totaling 286 high-resolution labeled side scan sonar images to advance the state-of-the-art in autonomous sonar image understanding. We leverage the unique abundance of targets in Thunder Bay National Marine Sanctuary in Lake Huron, MI, to collect and compile a sonar imagery benchmark dataset through surveys with an autonomous underwater vehicle (AUV). We consulted with expert marine archaeologists for the labeling of robotically gathered data. We then leverage this dataset to perform benchmark experiments for comparison of state-of-the-art supervised segmentation methods, and we present insights on opportunities and open challenges for the field. The dataset and benchmarking tools will be released as an open-source benchmark dataset to spur innovation in machine learning for Great Lakes and ocean exploration. The dataset and accompanying software are available at https://umfieldrobotics.github.io/ai4shipwrecks/.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge