Adrien Kaiser

Φeat: Physically-Grounded Feature Representation

Nov 14, 2025

Abstract: Foundation models have emerged as effective backbones for many vision tasks. However, current self-supervised features entangle high-level semantics with low-level physical factors, such as geometry and illumination, hindering their use in tasks requiring explicit physical reasoning. In this paper, we introduce Φeat, a novel physically-grounded visual backbone that encourages a representation sensitive to material identity, including reflectance cues and geometric mesostructure. Our key idea is to employ a pretraining strategy that contrasts spatial crops and physical augmentations of the same material under varying shapes and lighting conditions. While similar data have been used in high-end supervised tasks such as intrinsic decomposition or material estimation, we demonstrate that a purely self-supervised training strategy, without explicit labels, already provides a strong prior for tasks requiring robust features invariant to external physical factors. We evaluate the learned representations through feature similarity analysis and material selection, showing that Φeat captures physically-grounded structure beyond semantic grouping. These findings highlight the promise of unsupervised physical feature learning as a foundation for physics-aware perception in vision and graphics.
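The abstract does not specify the contrastive objective. As an illustration only, the sketch below assumes a standard InfoNCE loss in Python with NumPy: row i of two embedding batches holds two views (for example, different crops, shapes, or lighting) of the same material, and every other row in the batch serves as a negative. The function names, temperature, and toy data are hypothetical and are not Φeat's implementation.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    # Project each embedding onto the unit sphere so dot products become cosine similarities.
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def info_nce(view_a: np.ndarray, view_b: np.ndarray, temperature: float = 0.1) -> float:
    """One-directional InfoNCE loss: row i of view_a should be most similar to row i
    of view_b (same material) and dissimilar to all other rows (other materials)."""
    logits = l2_normalize(view_a) @ l2_normalize(view_b).T / temperature  # (B, B)
    # Numerically stable row-wise log-softmax.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positive pairs lie on the diagonal.
    return float(-np.mean(np.diag(log_probs)))


# Toy usage: two noisy "views" of 8 hypothetical material embeddings.
rng = np.random.default_rng(0)
materials = rng.normal(size=(8, 16))
view_a = materials + 0.05 * rng.normal(size=materials.shape)
view_b = materials + 0.05 * rng.normal(size=materials.shape)
matched_loss = info_nce(view_a, view_b)
mismatched_loss = info_nce(view_a, view_b[::-1])  # pairs each material with a different one
```

Minimizing such a loss pulls views of the same material together regardless of the nuisance factors that differ between them, which is the kind of invariance the abstract targets.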

MultiMat: Multimodal Program Synthesis for Procedural Materials using Large Multimodal Models

Sep 26, 2025

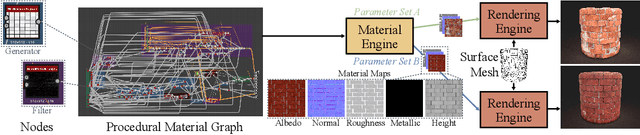

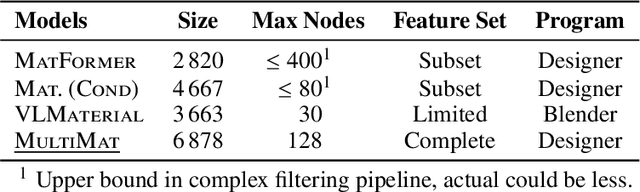

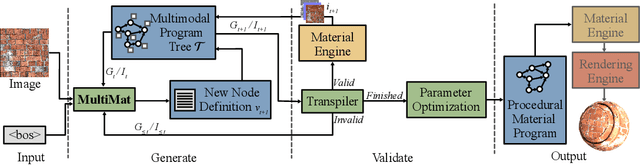

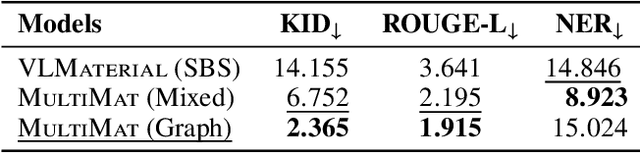

Abstract: Material node graphs are programs that generate the 2D channels of procedural materials, including geometry such as roughness and displacement maps, and reflectance such as albedo and conductivity maps. They are essential in computer graphics for representing the appearance of virtual 3D objects parametrically and at arbitrary resolution. In particular, their directed acyclic graph structures and intermediate states provide an intuitive understanding and workflow for interactive appearance modeling. Creating such graphs is a challenging task and typically requires professional training. While recent neural program synthesis approaches attempt to simplify this process, they represent graphs solely as textual programs, failing to capture the inherently visual-spatial nature of node graphs that makes them accessible to humans. To address this gap, we present MultiMat, a multimodal program synthesis framework that leverages large multimodal models to process both visual and textual graph representations for improved generation of procedural material graphs. We train our models on a new dataset of production-quality procedural materials and combine them with a constrained tree search inference algorithm that ensures syntactic validity while efficiently navigating the program space. Our experimental results show that our multimodal program synthesis method is more efficient than text-only baselines in both unconditional and conditional graph synthesis, with higher visual quality and fidelity, establishing new state-of-the-art performance.
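To make the "graphs as programs" view concrete, here is a toy Python sketch (NumPy plus the standard-library `graphlib`, Python 3.9+) that evaluates a material node graph in topological order and keeps every intermediate state. The node types (`stripes`, `checker`, `blend`) and channel names are hypothetical; the sketch does not model MultiMat's dataset format, its multimodal models, or its constrained tree search.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Tuple

import numpy as np

# A node is (operation, names of its input nodes); each operation returns a 2D float map.
Node = Tuple[Callable[..., np.ndarray], List[str]]


def evaluate_graph(nodes: Dict[str, Node]) -> Dict[str, np.ndarray]:
    """Evaluate every node after its inputs and keep all intermediate results."""
    deps = {name: set(inputs) for name, (_, inputs) in nodes.items()}
    results: Dict[str, np.ndarray] = {}
    for name in TopologicalSorter(deps).static_order():  # raises CycleError on cycles
        op, inputs = nodes[name]
        results[name] = op(*(results[i] for i in inputs))
    return results


RES = 64
ys, xs = np.mgrid[0:RES, 0:RES] / RES  # normalized pixel coordinates in [0, 1)


def stripes() -> np.ndarray:  # hypothetical generator node with no inputs
    return 0.5 + 0.5 * np.sin(2 * np.pi * 8 * xs)


def checker() -> np.ndarray:  # hypothetical 4x4 checkerboard generator
    return ((np.floor(xs * 4) + np.floor(ys * 4)) % 2).astype(float)


def blend(a: np.ndarray, b: np.ndarray, t: float = 0.5) -> np.ndarray:
    return (1 - t) * a + t * b


graph: Dict[str, Node] = {
    "stripes": (stripes, []),
    "checker": (checker, []),
    "height": (blend, ["stripes", "checker"]),
    "roughness": (lambda h: np.clip(1.0 - h, 0.0, 1.0), ["height"]),
}

channels = evaluate_graph(graph)
```

Because nodes run in dependency order, any intermediate output such as `channels["height"]` can be inspected directly, which mirrors the interactive workflow the abstract describes.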

RRM: Relightable assets using Radiance guided Material extraction

Jul 08, 2024

Abstract: Synthesizing NeRFs under arbitrary lighting has become a central problem in the last few years. Recent efforts tackle the problem by extracting physically-based parameters that can then be rendered under arbitrary lighting, but they are limited in the range of scenes they can handle, usually mishandling glossy scenes. We propose RRM, a method that can extract the materials, geometry, and environment lighting of a scene even in the presence of highly reflective objects. Our method consists of a physically-aware radiance field representation that informs physically-based parameters, and an expressive environment light structure based on a Laplacian Pyramid. We demonstrate that our contributions outperform the state of the art on parameter retrieval tasks, leading to high-fidelity relighting and novel view synthesis on surfacic scenes.
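The abstract only states that RRM's environment light is "based on a Laplacian Pyramid". The Python/NumPy sketch below shows a generic Laplacian pyramid decomposition and exact reconstruction of an equirectangular environment map. The intuition, which is our assumption rather than a detail from the abstract, is that coarse levels carry low-frequency illumination while the fine bands hold the sharp detail glossy reflections need. The 2x2 box filter and nearest-neighbour upsampling simplify the usual Gaussian filtering, and none of the names come from RRM.

```python
import numpy as np


def downsample(img: np.ndarray) -> np.ndarray:
    # 2x2 box filter followed by decimation; assumes even height and width.
    h, w = img.shape[:2]
    return img.reshape(h // 2, 2, w // 2, 2, *img.shape[2:]).mean(axis=(1, 3))


def upsample(img: np.ndarray) -> np.ndarray:
    # Nearest-neighbour upsampling by 2 along both image axes.
    return img.repeat(2, axis=0).repeat(2, axis=1)


def build_pyramid(img: np.ndarray, levels: int) -> list:
    """Return [finest detail band, ..., coarsest detail band, low-pass residual]."""
    bands = []
    current = img
    for _ in range(levels - 1):
        coarser = downsample(current)
        bands.append(current - upsample(coarser))  # detail lost by downsampling
        current = coarser
    bands.append(current)  # low-frequency residual
    return bands


def reconstruct(bands: list) -> np.ndarray:
    # Add the detail bands back, from coarsest to finest.
    img = bands[-1]
    for band in reversed(bands[:-1]):
        img = upsample(img) + band
    return img


# Toy 32x64 RGB equirectangular environment map with random radiance values.
rng = np.random.default_rng(0)
env = rng.random((32, 64, 3))
bands = build_pyramid(env, levels=4)
```

Since each band stores exactly what downsampling discarded, `reconstruct(bands)` recovers `env` up to floating-point error, so an optimizer could adjust coarse and fine levels independently without losing expressiveness.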

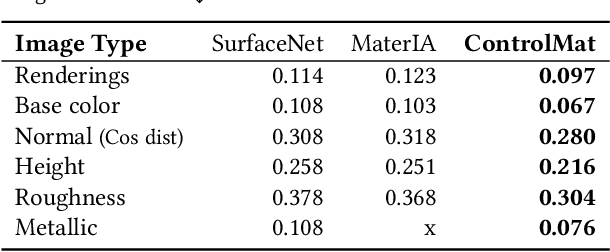

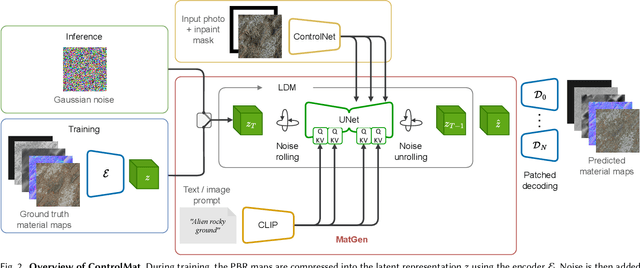

ControlMat: A Controlled Generative Approach to Material Capture

Sep 04, 2023

Abstract: Material reconstruction from a photograph is a key component of democratizing 3D content creation. We propose to formulate this ill-posed problem as a controlled synthesis one, leveraging the recent progress in generative deep networks. We present ControlMat, a method that, given a single photograph with uncontrolled illumination as input, conditions a diffusion model to generate plausible, tileable, high-resolution physically-based digital materials. We carefully analyze the behavior of diffusion models for multi-channel outputs, adapt the sampling process to fuse multi-scale information, and introduce rolled diffusion to enable both tileability and patched diffusion for high-resolution outputs. Our generative approach further permits exploration of a variety of materials that could correspond to the input image, mitigating the ambiguity caused by unknown lighting conditions. We show that our approach outperforms recent inference and latent-space-optimization methods, and carefully validate our diffusion process design choices. Supplemental materials and additional details are available at: https://gvecchio.com/controlmat/.
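One reading of "rolled diffusion" for tileability, stated here as an assumption rather than ControlMat's exact procedure, is to circularly shift (roll) the latent by a random offset before each denoising step and shift it back afterwards. The wrap-around junction then lands at a different interior position at every step, which pushes the model to keep opposite borders continuous. In the Python/NumPy sketch below, `toy_denoiser` is a hypothetical placeholder for a real conditioned diffusion model step.

```python
import numpy as np


def rolled_denoise_step(latent: np.ndarray, denoise_step, rng: np.random.Generator) -> np.ndarray:
    """Run one denoising step on an (H, W, C) latent under a random circular shift."""
    h, w = latent.shape[:2]
    dy, dx = int(rng.integers(0, h)), int(rng.integers(0, w))
    shifted = np.roll(latent, shift=(dy, dx), axis=(0, 1))  # move the wrap-around seam inside
    denoised = denoise_step(shifted)
    return np.roll(denoised, shift=(-dy, -dx), axis=(0, 1))  # undo the shift


def toy_denoiser(z: np.ndarray) -> np.ndarray:
    # Hypothetical stand-in for one reverse-diffusion step: simply shrink toward zero.
    return 0.9 * z


rng = np.random.default_rng(0)
latent = rng.normal(size=(32, 32, 4))
for _ in range(10):
    latent = rolled_denoise_step(latent, toy_denoiser, rng)
```

Because rolling only permutes pixels, a purely pointwise denoiser gives the same result with or without it; the shifts matter only for a real network whose receptive field spans the seam.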

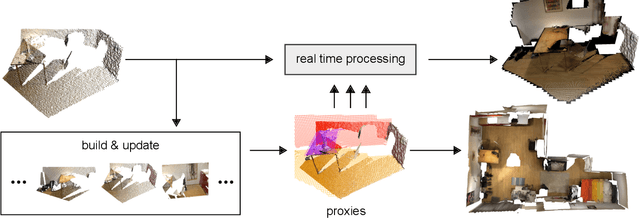

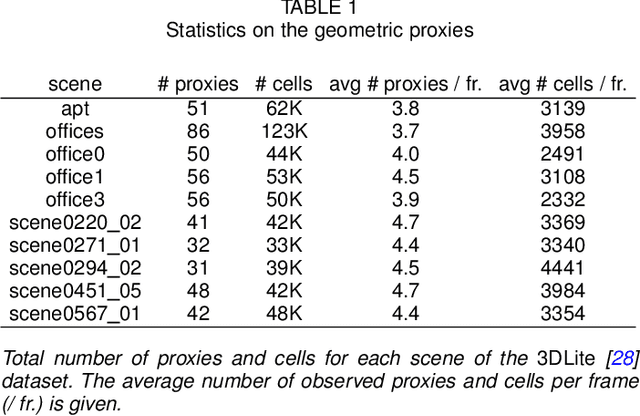

Geometric Proxies for Live RGB-D Stream Enhancement and Consolidation

Jan 21, 2020

Abstract: We propose a geometric superstructure for unified real-time processing of RGB-D data. Modern RGB-D sensors are widely used for indoor 3D capture, with applications ranging from modeling to robotics, through augmented reality. Nevertheless, their use is limited by their low resolution, with frames often corrupted by noise, missing data, and temporal inconsistencies. Our approach consists of generating, and updating over time, a single set of compact local statistics parameterized over detected geometric proxies and fed by raw RGB-D data. Our proxies provide several processing primitives, which improve the quality of the RGB-D stream on the fly or lighten further operations. Experimental results confirm that, compared to state-of-the-art methods, our lightweight analysis framework is well suited to embedded execution on platforms with moderate memory and computational capabilities. Processing RGB-D data with our proxies enables noise and temporal flicker removal, hole filling, and resampling. As a substitute for the observed scene, our proxies can also be used for compression and scene reconstruction. We present experiments performed with our framework on indoor scenes of various kinds from a recent open RGB-D dataset.
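The abstract does not detail which local statistics are stored on each proxy. Assuming, purely for illustration, a planar proxy discretized into a 2D grid, the Python/NumPy sketch below keeps a per-cell running mean and variance of the point-to-plane offset using Welford's online algorithm. This gives temporal denoising and hole filling from a constant-size state per proxy. The class and method names are hypothetical.

```python
import numpy as np


class PlaneCellStats:
    """Running statistics of point-to-plane offsets for each cell of a planar proxy."""

    def __init__(self, rows: int, cols: int):
        self.count = np.zeros((rows, cols))
        self.mean = np.zeros((rows, cols))
        self.m2 = np.zeros((rows, cols))  # sum of squared deviations from the mean

    def update(self, offsets: np.ndarray) -> None:
        """Fuse one frame of offsets with Welford's update; NaN marks cells without valid depth."""
        valid = ~np.isnan(offsets)
        x = np.where(valid, offsets, 0.0)
        self.count += valid
        n = np.maximum(self.count, 1.0)  # avoid dividing by zero in never-observed cells
        delta = (x - self.mean) * valid  # zero for cells missing in this frame
        self.mean += delta / n
        self.m2 += delta * (x - self.mean)

    def variance(self) -> np.ndarray:
        # Unbiased per-cell variance; NaN where fewer than two samples were seen.
        return np.where(self.count > 1, self.m2 / np.maximum(self.count - 1, 1.0), np.nan)

    def fill(self, offsets: np.ndarray) -> np.ndarray:
        """Replace missing samples in a frame with the accumulated per-cell mean."""
        return np.where(np.isnan(offsets) & (self.count > 0), self.mean, offsets)


# Toy stream: 5 frames of offsets (in metres) on a 4x4 grid, with one missing sample.
rng = np.random.default_rng(0)
frames = rng.normal(loc=0.01, scale=0.002, size=(5, 4, 4))
frames[0, 0, 0] = np.nan  # simulated missing depth
stats = PlaneCellStats(4, 4)
for frame in frames:
    stats.update(frame)
```

The state stays the same size however many frames arrive, which fits the abstract's emphasis on lightweight, embedded-friendly processing.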

Plane Pair Matching for Efficient 3D View Registration

Jan 20, 2020

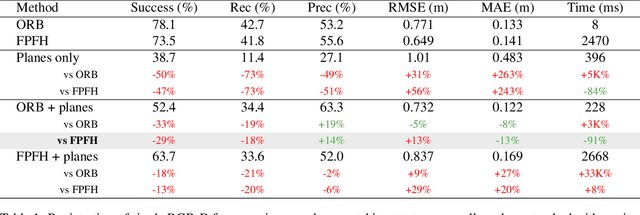

Abstract: We present a novel method to estimate the motion matrix between overlapping pairs of 3D views in the context of indoor scenes. We use the Manhattan world assumption to introduce lightweight geometric constraints in the form of planes into the problem, which reduces complexity by taking into account the structure of the scene. In particular, we define a stochastic framework to categorize planes as vertical or horizontal and parallel or non-parallel. We leverage this classification to match pairs of planes in overlapping views with point-of-view agnostic structural metrics. Using this classification, we split the motion computation and estimate the rotation and translation of the sensor separately with a quadric minimizer. We validate our approach on a toy example and present quantitative experiments on a public RGB-D dataset, comparing against recent state-of-the-art methods. Our evaluation shows that planar constraints add only low computational overhead while improving precision when applied after a coarse initial estimate. We conclude by outlining possible extensions and improvements of the current results.
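The split between rotation and translation can be illustrated with matched planes written as n · x = d. Under the motion x′ = R x + t, a plane maps to normal R n and offset d + (R n) · t, so the rotation depends only on the normals, and each matched pair then gives one linear equation for t. The Python/NumPy sketch below swaps in substitutes for the paper's components: a deterministic angle threshold instead of its stochastic classification, and an SVD (Kabsch) fit plus linear least squares instead of its quadric minimizer. It assumes the up axis is z and that plane matches are already known; all function names are hypothetical.

```python
import numpy as np


def classify_plane(normal, up=(0.0, 0.0, 1.0), tol_deg: float = 10.0) -> str:
    """Label a plane 'horizontal', 'vertical' or 'other' from its normal's angle to the up axis."""
    normal, up = np.asarray(normal, dtype=float), np.asarray(up, dtype=float)
    c = abs(normal @ up) / (np.linalg.norm(normal) * np.linalg.norm(up))
    if c > np.cos(np.radians(tol_deg)):
        return "horizontal"  # normal nearly parallel to up
    if c < np.sin(np.radians(tol_deg)):
        return "vertical"  # normal nearly orthogonal to up
    return "other"


def estimate_rotation(n_src: np.ndarray, n_dst: np.ndarray) -> np.ndarray:
    """Rotation R minimizing sum ||R n_src[i] - n_dst[i]||^2 (Kabsch / SVD); rows are unit normals."""
    u, _, vt = np.linalg.svd(n_src.T @ n_dst)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # flip one axis if the SVD yields a reflection
    return vt.T @ np.diag([1.0, 1.0, d]) @ u.T


def estimate_translation(n_dst: np.ndarray, d_src: np.ndarray, d_dst: np.ndarray) -> np.ndarray:
    """Solve n_dst[i] . t = d_dst[i] - d_src[i] by least squares (needs 3 independent normals)."""
    t, *_ = np.linalg.lstsq(n_dst, d_dst - d_src, rcond=None)
    return t


# Synthetic check: a floor, two walls and a diagonal wall seen from two sensor poses.
theta = 0.3
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta), np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([0.5, -0.2, 0.1])
n_src = np.array([[0.0, 0.0, 1.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
                  [np.sqrt(0.5), np.sqrt(0.5), 0.0]])
d_src = np.array([0.0, 2.0, 3.0, 1.5])
n_dst = n_src @ R_true.T  # rotated normals, one per row
d_dst = d_src + n_dst @ t_true

R_est = estimate_rotation(n_src, n_dst)
t_est = estimate_translation(n_dst, d_src, d_dst)
```

Solving the rotation first turns the translation into a small linear system, which is one way to see why planar constraints add little computational overhead.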
