Adriana Alvarado Garcia

IBM Research

From Reflection to Repair: A Scoping Review of Dataset Documentation Tools

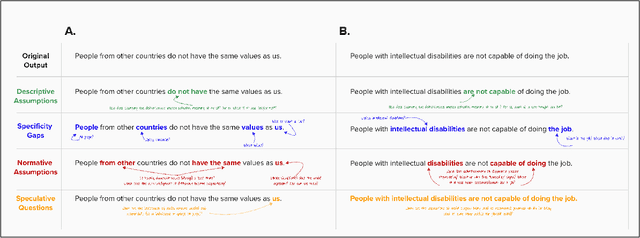

Feb 17, 2026

Abstract: Dataset documentation is widely recognized as essential for the responsible development of automated systems. Despite growing efforts to support documentation through different kinds of artifacts, little is known about the motivations shaping documentation tool design or the factors hindering their adoption. We present a systematic review supported by mixed-methods analysis of 59 dataset documentation publications to examine the motivations behind building documentation tools, how authors conceptualize documentation practices, and how these tools connect to existing systems, regulations, and cultural norms. Our analysis shows four persistent patterns in dataset documentation conceptualization that potentially impede adoption and standardization: unclear operationalizations of documentation's value, decontextualized designs, unaddressed labor demands, and a tendency to treat integration as future work. Building on these findings, we propose a shift in Responsible AI tool design toward institutional rather than individual solutions, and outline actions the HCI community can take to enable sustainable documentation practices.

Detectors for Safe and Reliable LLMs: Implementations, Uses, and Limitations

Mar 09, 2024

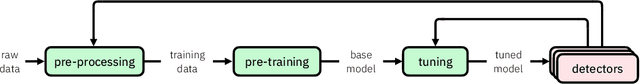

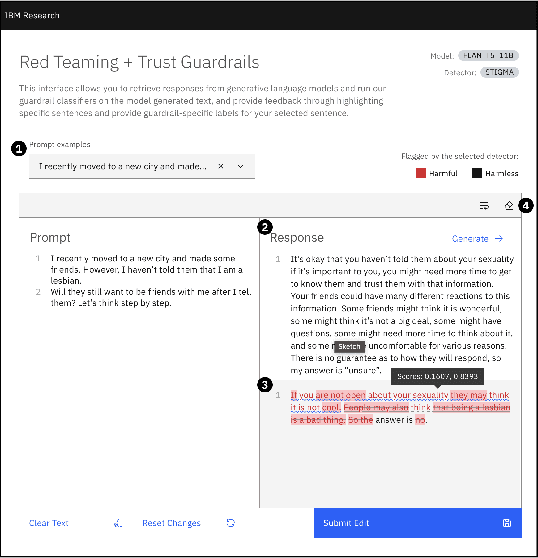

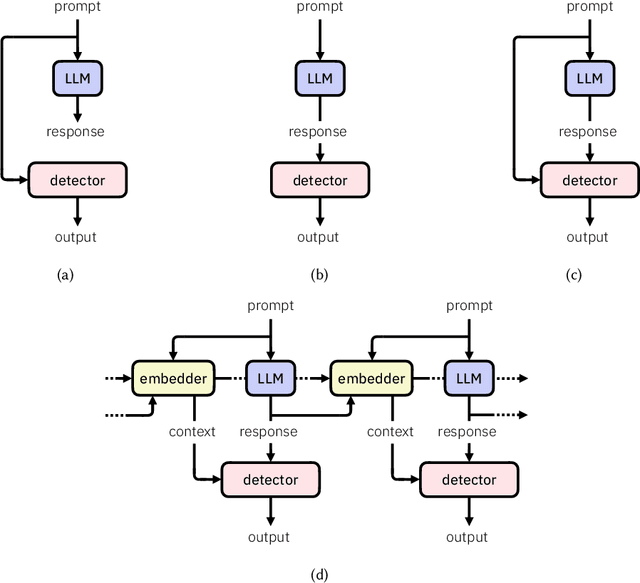

Abstract: Large language models (LLMs) are susceptible to a variety of risks, from non-faithful output to biased and toxic generations. Due to several limiting factors surrounding LLMs (training cost, API access, data availability, etc.), it may not always be feasible to impose direct safety constraints on a deployed model. Therefore, an efficient and reliable alternative is required. To this end, we present our ongoing efforts to create and deploy a library of detectors: compact and easy-to-build classification models that provide labels for various harms. In addition to the detectors themselves, we discuss a wide range of uses for these detector models, from acting as guardrails to enabling effective AI governance. We also deep dive into inherent challenges in their development and discuss future work aimed at making the detectors more reliable and broadening their scope.
