Achille Globo

Enhancing Modern Supervised Word Sense Disambiguation Models by Semantic Lexical Resources

Feb 20, 2024

Abstract:Supervised models for Word Sense Disambiguation (WSD) currently yield to state-of-the-art results in the most popular benchmarks. Despite the recent introduction of Word Embeddings and Recurrent Neural Networks to design powerful context-related features, the interest in improving WSD models using Semantic Lexical Resources (SLRs) is mostly restricted to knowledge-based approaches. In this paper, we enhance "modern" supervised WSD models exploiting two popular SLRs: WordNet and WordNet Domains. We propose an effective way to introduce semantic features into the classifiers, and we consider using the SLR structure to augment the training data. We study the effect of different types of semantic features, investigating their interaction with local contexts encoded by means of mixtures of Word Embeddings or Recurrent Neural Networks, and we extend the proposed model into a novel multi-layer architecture for WSD. A detailed experimental comparison in the recent Unified Evaluation Framework (Raganato et al., 2017) shows that the proposed approach leads to supervised models that compare favourably with the state-of-the art.

* The 11th International Conference on Language Resources and Evaluation (LREC 2018)

Neural paraphrasing by automatically crawled and aligned sentence pairs

Feb 16, 2024

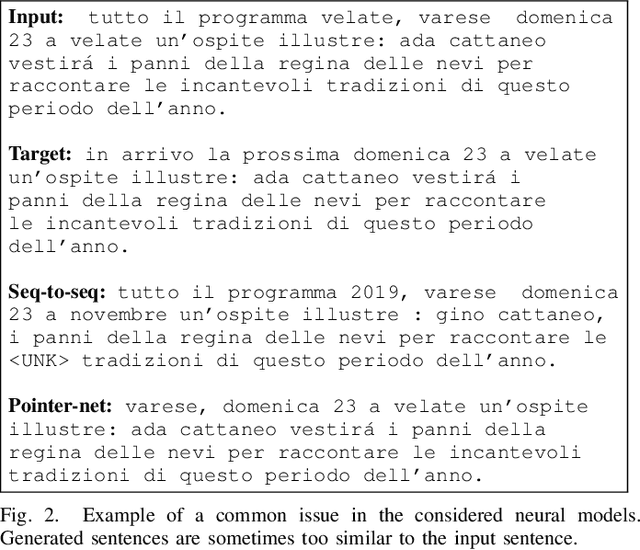

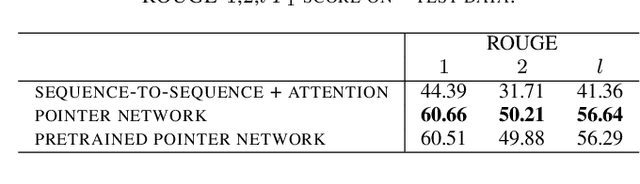

Abstract:Paraphrasing is the task of re-writing an input text using other words, without altering the meaning of the original content. Conversational systems can exploit automatic paraphrasing to make the conversation more natural, e.g., talking about a certain topic using different paraphrases in different time instants. Recently, the task of automatically generating paraphrases has been approached in the context of Natural Language Generation (NLG). While many existing systems simply consist in rule-based models, the recent success of the Deep Neural Networks in several NLG tasks naturally suggests the possibility of exploiting such networks for generating paraphrases. However, the main obstacle toward neural-network-based paraphrasing is the lack of large datasets with aligned pairs of sentences and paraphrases, that are needed to efficiently train the neural models. In this paper we present a method for the automatic generation of large aligned corpora, that is based on the assumption that news and blog websites talk about the same events using different narrative styles. We propose a similarity search procedure with linguistic constraints that, given a reference sentence, is able to locate the most similar candidate paraphrases out from millions of indexed sentences. The data generation process is evaluated in the case of the Italian language, performing experiments using pointer-based deep neural architectures.

* The 6th International Conference on Social Networks Analysis, Management and Security (SNAMS 2019)

A novel integrated industrial approach with cobots in the age of industry 4.0 through conversational interaction and computer vision

Feb 16, 2024Abstract:From robots that replace workers to robots that serve as helpful colleagues, the field of robotic automation is experiencing a new trend that represents a huge challenge for component manufacturers. The contribution starts from an innovative vision that sees an ever closer collaboration between Cobot, able to do a specific physical job with precision, the AI world, able to analyze information and support the decision-making process, and the man able to have a strategic vision of the future.

An energy-based comparative analysis of common approaches to text classification in the Legal domain

Nov 02, 2023

Abstract:Most Machine Learning research evaluates the best solutions in terms of performance. However, in the race for the best performing model, many important aspects are often overlooked when, on the contrary, they should be carefully considered. In fact, sometimes the gaps in performance between different approaches are neglectable, whereas factors such as production costs, energy consumption, and carbon footprint must take into consideration. Large Language Models (LLMs) are extensively adopted to address NLP problems in academia and industry. In this work, we present a detailed quantitative comparison of LLM and traditional approaches (e.g. SVM) on the LexGLUE benchmark, which takes into account both performance (standard indices) and alternative metrics such as timing, power consumption and cost, in a word: the carbon-footprint. In our analysis, we considered the prototyping phase (model selection by training-validation-test iterations) and in-production phases separately, since they follow different implementation procedures and also require different resources. The results indicate that very often, the simplest algorithms achieve performance very close to that of large LLMs but with very low power consumption and lower resource demands. The results obtained could suggest companies to include additional evaluations in the choice of Machine Learning (ML) solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge