Vertical Federated Learning: Challenges, Methodologies and Experiments

Paper and Code

Feb 09, 2022

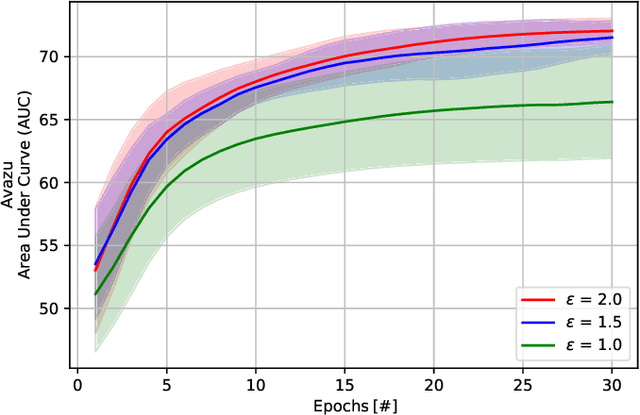

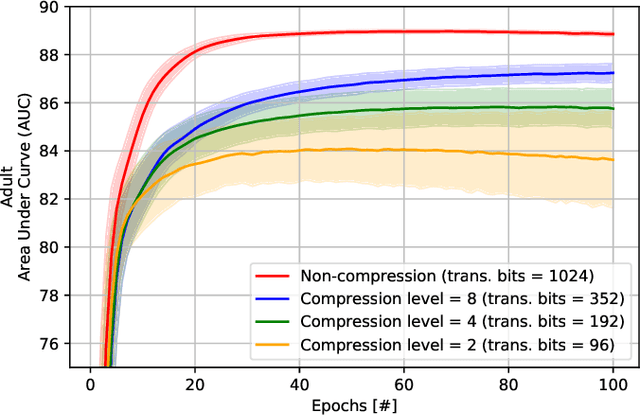

Recently, federated learning (FL) has emerged as a promising distributed machine learning (ML) technology, owing to the advancing computational and sensing capacities of end-user devices, however with the increasing concerns on users' privacy. As a special architecture in FL, vertical FL (VFL) is capable of constructing a hyper ML model by embracing sub-models from different clients. These sub-models are trained locally by vertically partitioned data with distinct attributes. Therefore, the design of VFL is fundamentally different from that of conventional FL, raising new and unique research issues. In this paper, we aim to discuss key challenges in VFL with effective solutions, and conduct experiments on real-life datasets to shed light on these issues. Specifically, we first propose a general framework on VFL, and highlight the key differences between VFL and conventional FL. Then, we discuss research challenges rooted in VFL systems under four aspects, i.e., security and privacy risks, expensive computation and communication costs, possible structural damage caused by model splitting, and system heterogeneity. Afterwards, we develop solutions to addressing the aforementioned challenges, and conduct extensive experiments to showcase the effectiveness of our proposed solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge