UPNeRF: A Unified Framework for Monocular 3D Object Reconstruction and Pose Estimation

Paper and Code

Mar 23, 2024

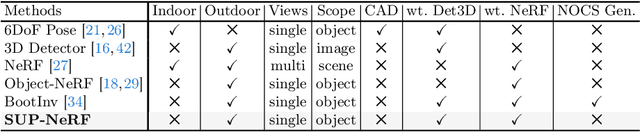

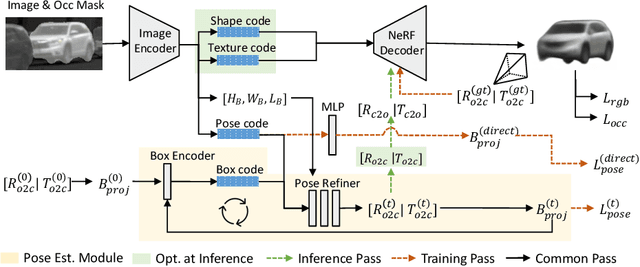

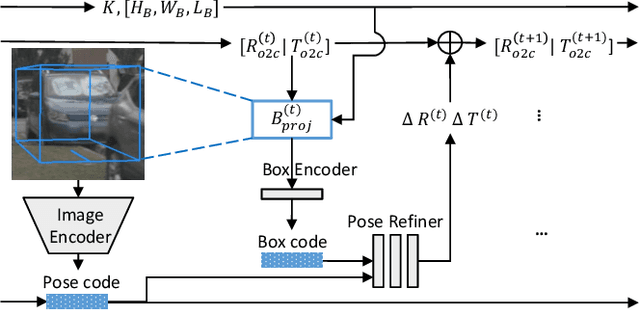

Monocular 3D reconstruction for categorical objects heavily relies on accurately perceiving each object's pose. While gradient-based optimization within a NeRF framework updates initially given poses, this paper highlights that such a scheme fails when the initial pose even moderately deviates from the true pose. Consequently, existing methods often depend on a third-party 3D object to provide an initial object pose, leading to increased complexity and generalization issues. To address these challenges, we present UPNeRF, a Unified framework integrating Pose estimation and NeRF-based reconstruction, bringing us closer to real-time monocular 3D object reconstruction. UPNeRF decouples the object's dimension estimation and pose refinement to resolve the scale-depth ambiguity, and introduces an effective projected-box representation that generalizes well cross different domains. While using a dedicated pose estimator that smoothly integrates into an object-centric NeRF, UPNeRF is free from external 3D detectors. UPNeRF achieves state-of-the-art results in both reconstruction and pose estimation tasks on the nuScenes dataset. Furthermore, UPNeRF exhibits exceptional Cross-dataset generalization on the KITTI and Waymo datasets, surpassing prior methods with up to 50% reduction in rotation and translation error.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge