Unsupervised Domain Adaptation for Vestibular Schwannoma and Cochlea Segmentation via Semi-supervised Learning and Label Fusion


Jan 25, 2022

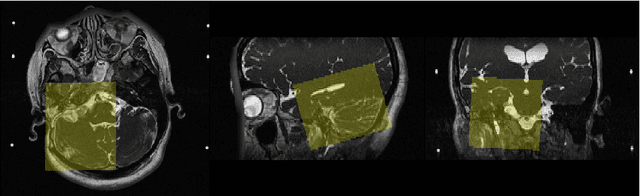

Automatic methods for segmenting vestibular schwannoma (VS) tumors and the cochlea from magnetic resonance imaging (MRI) are critical to VS treatment planning. Although supervised methods have achieved satisfactory performance in VS segmentation, they require full annotations by experts, which are laborious and time-consuming to produce. In this work, we tackle VS and cochlea segmentation in an unsupervised domain adaptation setting. Our method combines image-level domain alignment, which minimizes the divergence between domains, with semi-supervised training, which further boosts performance. We also fuse the labels predicted by multiple models through noisy label correction. On the final evaluation leaderboard of the MICCAI 2021 crossMoDA challenge, our method achieved mean Dice scores of 79.9% and 82.5% and average symmetric surface distances (ASSD) of 1.29 mm and 0.18 mm for the VS tumor and the cochlea, respectively. Our cochlea ASSD outperformed that of all other competing methods as well as the supervised nnU-Net.
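The two metrics reported above, the Dice score and ASSD, can be computed as in the following minimal sketch, assuming binary NumPy masks and a known voxel spacing in mm. The function names (`dice_score`, `surface_points`, `assd`) are hypothetical, the boundary definition (a foreground voxel with at least one background face-neighbour) is one common convention among several, and the brute-force distance computation only suits small volumes. This is not the crossMoDA challenge's official evaluation code, so its numbers may differ slightly from the leaderboard's.

```python
import numpy as np


def dice_score(pred: np.ndarray, gt: np.ndarray) -> float:
    """Dice = 2|P ∩ G| / (|P| + |G|) for two binary masks of equal shape."""
    pred = pred.astype(bool)
    gt = gt.astype(bool)
    denom = pred.sum() + gt.sum()
    if denom == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return float(2.0 * np.logical_and(pred, gt).sum() / denom)


def surface_points(mask: np.ndarray, spacing) -> np.ndarray:
    """Physical coordinates (mm) of boundary voxels.

    A voxel counts as boundary if it is foreground and at least one of its
    face-neighbours (2 per axis) is background; this is an assumed convention.
    """
    mask = mask.astype(bool)
    padded = np.pad(mask, 1, constant_values=False)  # so edge voxels see background
    interior = padded.copy()
    for axis in range(mask.ndim):
        interior &= np.roll(padded, 1, axis=axis) & np.roll(padded, -1, axis=axis)
    boundary = (padded & ~interior)[(slice(1, -1),) * mask.ndim]  # drop the padding
    return np.argwhere(boundary) * np.asarray(spacing, dtype=float)


def assd(pred: np.ndarray, gt: np.ndarray, spacing) -> float:
    """Average symmetric surface distance between two binary masks, in mm."""
    sp = surface_points(pred, spacing)
    sg = surface_points(gt, spacing)
    if len(sp) == 0 or len(sg) == 0:
        raise ValueError("ASSD is undefined when either mask is empty")
    # Brute-force pairwise Euclidean distances: O(|sp| * |sg|) memory, small volumes only.
    d = np.linalg.norm(sp[:, None, :] - sg[None, :, :], axis=-1)
    # Each surface point's distance to the nearest point on the other surface,
    # averaged over both surfaces together.
    return float((d.min(axis=1).sum() + d.min(axis=0).sum()) / (len(sp) + len(sg)))
```

Because ASSD is measured in physical units, the voxel spacing matters: the same pair of masks evaluated at 2 mm isotropic spacing yields twice the ASSD obtained at 1 mm spacing, while the Dice score is unchanged.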
