Transformation Importance with Applications to Cosmology

Paper and Code

Mar 04, 2020

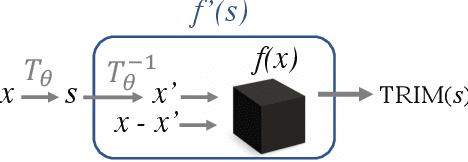

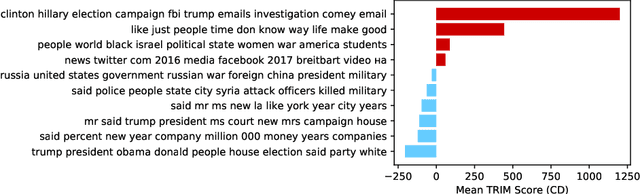

Machine learning lies at the heart of new possibilities for scientific discovery, knowledge generation, and artificial intelligence. Its potential benefits to these fields requires going beyond predictive accuracy and focusing on interpretability. In particular, many scientific problems require interpretations in a domain-specific interpretable feature space (e.g. the frequency domain) whereas attributions to the raw features (e.g. the pixel space) may be unintelligible or even misleading. To address this challenge, we propose TRIM (TRansformation IMportance), a novel approach which attributes importances to features in a transformed space and can be applied post-hoc to a fully trained model. TRIM is motivated by a cosmological parameter estimation problem using deep neural networks (DNNs) on simulated data, but it is generally applicable across domains/models and can be combined with any local interpretation method. In our cosmology example, combining TRIM with contextual decomposition shows promising results for identifying which frequencies a DNN uses, helping cosmologists to understand and validate that the model learns appropriate physical features rather than simulation artifacts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge