Towards Extremely Fast Bilevel Optimization with Self-governed Convergence Guarantees

May 20, 2022

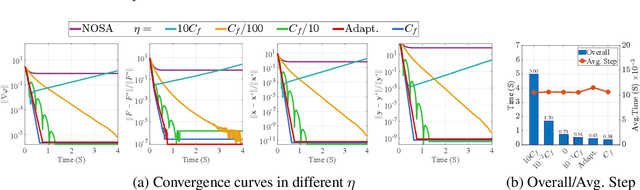

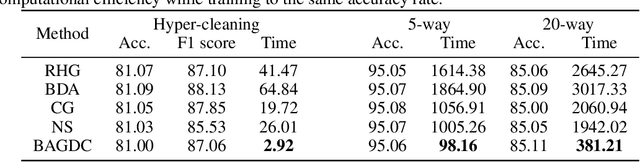

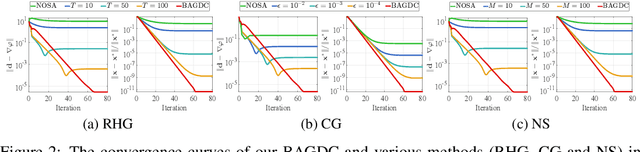

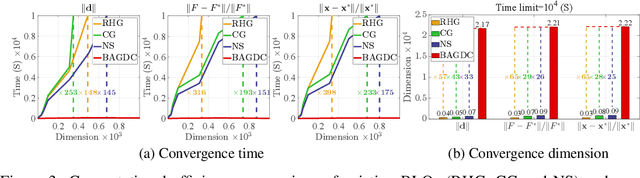

Gradient methods have become mainstream techniques for Bi-Level Optimization (BLO) in learning and vision. The validity of existing methods relies heavily on solving a series of approximation subproblems with extraordinarily high accuracy. Unfortunately, achieving this accuracy requires executing a large number of time-consuming iterations, which imposes a heavy computational burden. This paper is therefore devoted to addressing this critical computational issue. In particular, we propose a single-level formulation that provides a unified understanding of existing explicit and implicit Gradient-based BLOs (GBLOs). Together with a counter-example that we design, this formulation clearly illustrates the fundamental numerical and theoretical issues of GBLOs and their naive accelerations. By introducing dual multipliers as a new variable, we then establish Bilevel Alternating Gradient with Dual Correction (BAGDC), a general framework that significantly accelerates different categories of existing methods under specific settings. A striking feature of our convergence result is that, compared with the original unaccelerated GBLO versions, the fast BAGDC admits a unified non-asymptotic convergence theory towards stationarity. We also conduct a variety of numerical experiments to demonstrate the superiority of the proposed algorithmic framework.
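The dependence of GBLOs on how accurately the lower-level subproblem is solved can be seen on a toy problem. The sketch below is not BAGDC, whose update rules are not given in the abstract. It is a hypothetical scalar example that contrasts an explicit (unrolled) hypergradient computed from `K` inner gradient steps with the exact implicit hypergradient obtained from the implicit function theorem. The problem, the constant `A`, the step sizes, and all function names are assumptions chosen only for illustration.

```python
# Hypothetical toy bilevel problem (illustration only, not from the paper):
#   upper level: F(x, y) = 0.5 * x**2 + 0.5 * (y - 1)**2
#   lower level: y*(x) = argmin_y f(x, y),  f(x, y) = 0.5 * (y - A * x)**2
# The exact solution is y*(x) = A * x, so the true optimum of F(x, y*(x)) is
# x* = A / (1 + A**2).
A = 2.0


def unrolled_hypergradient(x: float, K: int, eta: float = 0.5) -> float:
    """Explicit GBLO sketch: run K inner gradient steps on f starting from y = 0
    and differentiate through them in forward mode (track dy/dx alongside y)."""
    y, dy_dx = 0.0, 0.0
    for _ in range(K):
        # Inner step y <- y - eta * df/dy, where df/dy = y - A * x.
        y = y - eta * (y - A * x)
        # Differentiate the same update with respect to x.
        dy_dx = (1.0 - eta) * dy_dx + eta * A
    # Total derivative: dF/dx = partial_x F + (dy/dx) * partial_y F.
    return x + dy_dx * (y - 1.0)


def implicit_hypergradient(x: float) -> float:
    """Implicit GBLO sketch: use the exact y*(x) = A * x and the implicit function
    theorem, dy*/dx = -(d2f/dy2)^{-1} * d2f/dydx = -(1)^{-1} * (-A) = A."""
    y_star = A * x
    return x + A * (y_star - 1.0)


def solve_upper_level(K: int, steps: int = 200, lr: float = 0.1) -> float:
    """Plain gradient descent on x using the K-step unrolled hypergradient."""
    x = 0.0
    for _ in range(steps):
        x -= lr * unrolled_hypergradient(x, K)
    return x


if __name__ == "__main__":
    exact = implicit_hypergradient(1.0)
    for K in (1, 5, 20):
        err = abs(unrolled_hypergradient(1.0, K) - exact)
        print(f"K={K:2d}  hypergradient error={err:.3e}  x_K={solve_upper_level(K):.6f}")
```

With few inner steps the unrolled hypergradient is biased, and outer gradient descent settles at a point away from x* = 0.4. Increasing `K` removes the bias but multiplies the per-iteration cost, which is the accuracy-versus-cost tension the abstract describes.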