Towards Annotation-free Instance Segmentation and Tracking with Adversarial Simulations

Jan 19, 2021

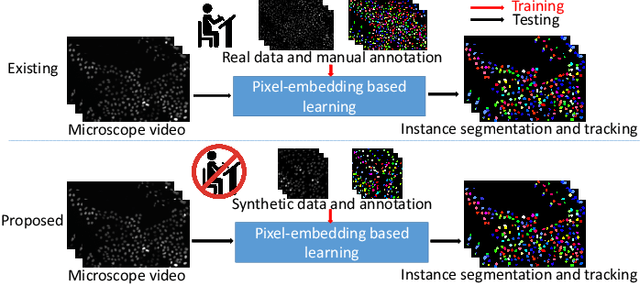

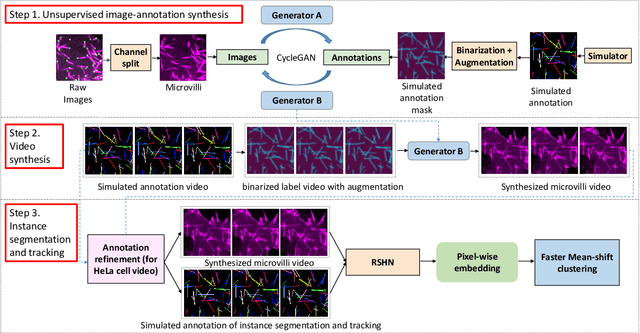

The quantitative analysis of microscope videos often requires instance segmentation and tracking of cellular and subcellular objects. The traditional method consists of two stages: (1) performing instance segmentation on each frame, and (2) associating objects frame by frame. Recently, pixel-embedding-based deep learning approaches have provided single-stage holistic solutions that tackle instance segmentation and tracking simultaneously. However, such deep learning methods require annotations that are consistent not only spatially (for segmentation) but also temporally (for tracking). In computer vision, producing annotated training data with consistent segmentation and tracking is resource intensive, and the cost is multiplied in microscopy imaging by (1) dense objects (e.g., overlapping or touching) and (2) high dynamics (e.g., irregular motion and mitosis). To alleviate the lack of such annotations in dynamic scenes, adversarial simulations have provided successful solutions in computer vision, such as using simulated environments (e.g., computer games) to train real-world self-driving systems. In this paper, we propose an annotation-free synthetic instance segmentation and tracking (ASIST) method with adversarial simulations and single-stage pixel-embedding-based learning. The contribution of this paper is three-fold: (1) the proposed method aggregates adversarial simulations and single-stage pixel-embedding-based deep learning; (2) the method is assessed on both cellular (i.e., HeLa cells) and subcellular (i.e., microvilli) objects; and (3) to the best of our knowledge, this is the first study to explore annotation-free instance segmentation and tracking for microscope videos. The ASIST method achieved an important step forward when compared with fully supervised approaches.
