The Value of Nullspace Tuning Using Partial Label Information

Paper and Code

Mar 17, 2020

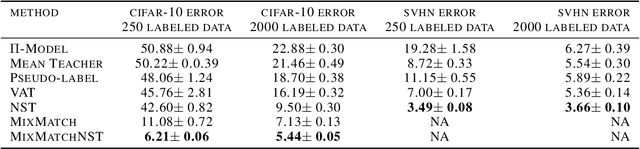

In semi-supervised learning, information from unlabeled examples is used to improve the model learned from labeled examples. But in some learning problems, partial label information can be inferred from otherwise unlabeled examples and used to further improve the model. In particular, partial label information exists when subsets of training examples are known to have the same label, even though the label itself is missing. By encouraging a model to give the same label to all such examples, we can potentially improve its performance. We call this encouragement \emph{Nullspace Tuning} because the difference vector between any pair of examples with the same label should lie in the nullspace of a linear model. In this paper, we investigate the benefit of using partial label information using a careful comparison framework over well-characterized public datasets. We show that the additional information provided by partial labels reduces test error over good semi-supervised methods usually by a factor of 2, up to a factor of 5.5 in the best case. We also show that adding Nullspace Tuning to the newer and state-of-the-art MixMatch method decreases its test error by up to a factor of 1.8.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge