Tensor Relational Algebra for Machine Learning System Design


Oct 01, 2020

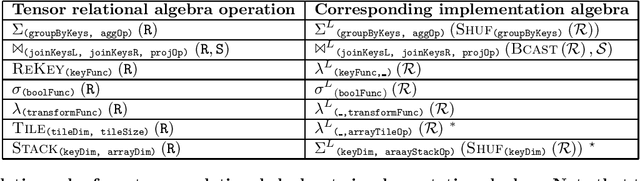

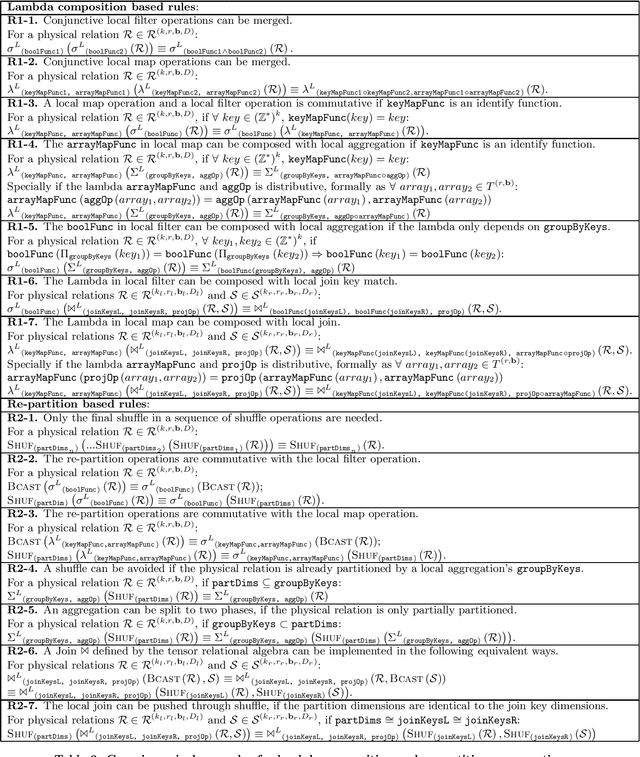

Machine learning (ML) systems have to support various tensor operations. However, such ML systems were largely developed without asking: what are the foundational abstractions necessary for building machine learning systems? We believe that proper computational and implementation abstractions will allow for the construction of self-configuring, declarative ML systems, especially when the goal is to execute tensor operations in a distributed environment, or partitioned across multiple AI accelerators (ASICs). To this end, we first introduce a tensor relational algebra (TRA), which is expressive enough to encode any tensor operation that can be written in Einstein notation. We consider how TRA expressions can be rewritten into an implementation algebra (IA) that enables effective implementation in a distributed environment, as well as how expressions in the IA can be optimized. Our empirical study shows that the optimized implementation provided by the IA can match or even outperform carefully engineered HPC or ML systems for large-scale tensor manipulations and ML workflows in distributed clusters.
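To make the relational view of an Einstein-notation operation more concrete, here is a minimal single-machine sketch. It assumes a simple blocked representation in which a "tensor relation" is a Python dict mapping a tuple of block indices to a dense NumPy chunk. Under that assumption, the product $C_{ik} = \sum_j A_{ij} B_{jk}$ (`einsum("ij,jk->ik")`) becomes a join on the shared index $j$, a local kernel applied to each matching pair of chunks, and a grouped sum over $(i, k)$. The helper names (`to_relation`, `join_aggregate_matmul`, `from_relation`) are hypothetical and not taken from the paper's code, and the sketch omits the distribution and optimization that the IA is meant to handle.

```python
import numpy as np
from collections import defaultdict

# Hypothetical blocked "tensor relation": {(block_row, block_col): dense chunk}.
# This representation is an illustrative assumption, not the paper's API.

def to_relation(matrix, block):
    """Split a 2-D array into a relation {(i, j): chunk} of block x block tiles."""
    rows, cols = matrix.shape
    assert rows % block == 0 and cols % block == 0
    return {
        (i // block, j // block): matrix[i:i + block, j:j + block]
        for i in range(0, rows, block)
        for j in range(0, cols, block)
    }

def join_aggregate_matmul(rel_a, rel_b):
    """Compute C[i,k] = sum_j A[i,j] B[j,k] as a join on j, then a sum grouped by (i, k)."""
    # Index B's tiles by their row-block key j so the join is a lookup.
    b_by_row = defaultdict(list)
    for (j, k), chunk in rel_b.items():
        b_by_row[j].append((k, chunk))
    out = {}
    for (i, j), a_chunk in rel_a.items():
        # Join: every A tile (i, j) meets every B tile (j, k) with the same j.
        for k, b_chunk in b_by_row.get(j, []):
            partial = a_chunk @ b_chunk  # local kernel on one pair of chunks
            # Aggregation: sum partial products that share the output key (i, k).
            out[(i, k)] = out[(i, k)] + partial if (i, k) in out else partial
    return out

def from_relation(rel, block):
    """Reassemble a relation of tiles into a dense 2-D array (assumes all tiles present)."""
    n_i = max(i for i, _ in rel) + 1
    n_k = max(k for _, k in rel) + 1
    result = np.zeros((n_i * block, n_k * block))
    for (i, k), chunk in rel.items():
        result[i * block:(i + 1) * block, k * block:(k + 1) * block] = chunk
    return result

# Usage: compare the relational result with NumPy's Einstein-notation product.
rng = np.random.default_rng(0)
A = rng.standard_normal((4, 6))
B = rng.standard_normal((6, 8))
C = from_relation(join_aggregate_matmul(to_relation(A, 2), to_relation(B, 2)), 2)
print(np.allclose(C, np.einsum("ij,jk->ik", A, B)))  # True
```

In a distributed setting, each tile could live on a different worker, so how the join and the aggregation are executed becomes a data-placement and data-movement decision; choices of that kind are what the abstract assigns to the implementation algebra.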
