Stochastic Item Descent Method for Large Scale Equal Circle Packing Problem

Paper and Code

Jan 22, 2020

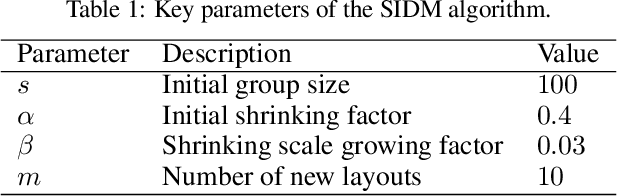

Stochastic gradient descent (SGD) is a powerful method for large-scale optimization problems in machine learning, especially for finite-sum formulations with numerous variables. In recent years, mini-batch SGD has achieved great success and has become a standard technique for training deep neural networks fed with large amounts of data. Inspired by its success in deep learning, we apply the idea of SGD with batch selection of samples to the decision version of a classic optimization problem. Given $n$ unit circles, the equal circle packing problem (ECPP) asks whether there exists a feasible packing that places all the circles inside a circular container without overlap. Specifically, we propose a stochastic item descent method (SIDM) for large-scale ECPP, which randomly divides the unit circles into batches and iteratively runs the Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm on the corresponding batch function to speed up the calculation. We also increase the batch size during the batch iterations to obtain higher-quality solutions. Compared with the current best packing algorithms, SIDM greatly speeds up the optimization process and guarantees solution quality for large-scale instances with up to 1500 circle items, while the baseline algorithms usually handle about 300 circle items. The results indicate the high efficiency of SIDM for this classic optimization problem at large scale, and show its potential for other large-scale classic optimization problems in which gradient descent is used.
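The batch idea described in the abstract can be illustrated with a minimal sketch. Everything specific here is an assumption rather than the authors' implementation: the quadratic overlap energy, the hypothetical function names (`batch_energy_grad`, `descend_batch`, `sidm_sketch`), the doubling of the batch size after each round, and above all the inner solver, where plain gradient descent with backtracking stands in for BFGS to keep the example dependency-free.

```python
import math
import random


def batch_energy_grad(pos, batch, R):
    """Assumed batch function: squared overlap depths of every term that
    involves a batch circle, with the gradient w.r.t. the batch circles only."""
    in_batch = set(batch)
    energy = 0.0
    grad = {i: [0.0, 0.0] for i in batch}
    for i in batch:
        xi, yi = pos[i]
        # Overlap between unit circle i and the container of radius R.
        r = math.hypot(xi, yi)
        d = r + 1.0 - R
        if d > 0.0:
            energy += d * d
            if r > 0.0:
                grad[i][0] += 2.0 * d * xi / r
                grad[i][1] += 2.0 * d * yi / r
        for j in range(len(pos)):
            # Skip self, and count each batch-batch pair only once.
            if j == i or (j in in_batch and j < i):
                continue
            dx, dy = xi - pos[j][0], yi - pos[j][1]
            dist = math.hypot(dx, dy)
            d = 2.0 - dist  # overlap depth of two unit circles
            if d > 0.0:
                energy += d * d
                if dist > 0.0:
                    gx = -2.0 * d * dx / dist
                    gy = -2.0 * d * dy / dist
                    grad[i][0] += gx
                    grad[i][1] += gy
                    if j in in_batch:
                        grad[j][0] -= gx
                        grad[j][1] -= gy
    return energy, grad


def descend_batch(pos, batch, R, steps=50):
    """Move only the batch circles; a step is accepted only if it lowers
    the batch energy (stand-in for BFGS, not BFGS itself)."""
    energy, grad = batch_energy_grad(pos, batch, R)
    for _ in range(steps):
        step = 0.25
        accepted = False
        while step > 1e-8 and not accepted:
            trial = list(pos)
            for i in batch:
                trial[i] = (pos[i][0] - step * grad[i][0],
                            pos[i][1] - step * grad[i][1])
            new_energy, new_grad = batch_energy_grad(trial, batch, R)
            if new_energy < energy:
                pos[:] = trial
                energy, grad = new_energy, new_grad
                accepted = True
            else:
                step *= 0.5
        if not accepted:
            break
    return energy


def sidm_sketch(pos, R, batch_size=10, rounds=4, seed=0):
    """Shuffle circles into random batches, descend on each batch,
    then enlarge the batch size (doubling is an assumed schedule)."""
    rng = random.Random(seed)
    n = len(pos)
    for _ in range(rounds):
        order = list(range(n))
        rng.shuffle(order)
        for start in range(0, n, batch_size):
            descend_batch(pos, order[start:start + batch_size], R)
        batch_size = min(n, 2 * batch_size)
    # Total energy: the batch function evaluated with every circle in the batch.
    return batch_energy_grad(pos, list(range(n)), R)[0]
```

Calling `sidm_sketch(pos, R)` on a list of `(x, y)` centers updates the list in place and returns the remaining total overlap energy; a value of zero would correspond to a feasible packing for that container radius. Because each batch step only touches terms that involve batch circles, the cost of one step scales with the batch size rather than with all pairs of circles.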
