Spatiotemporal Contrastive Video Representation Learning

Paper and Code

Aug 09, 2020

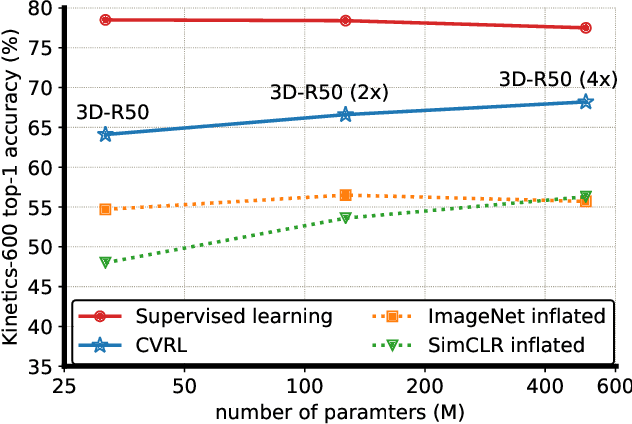

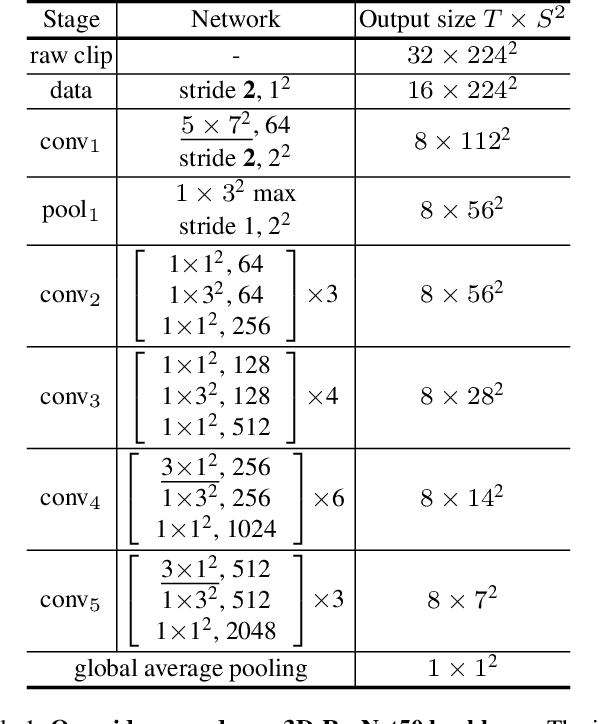

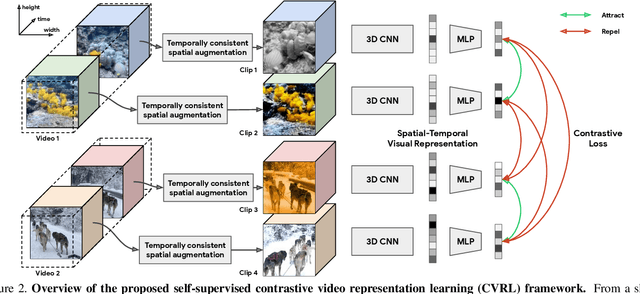

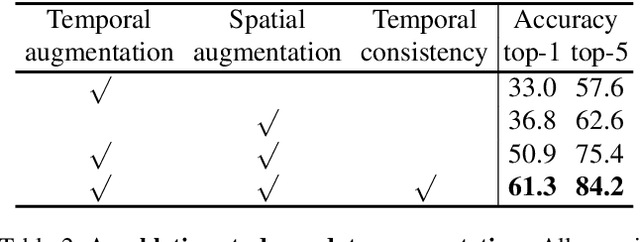

We present a self-supervised Contrastive Video Representation Learning (CVRL) method to learn spatiotemporal visual representations from unlabeled videos. Inspired by the recently proposed self-supervised contrastive learning framework, our representations are learned using a contrastive loss, where two clips from the same short video are pulled together in the embedding space, while clips from different videos are pushed apart. We study what makes for good data augmentation for video self-supervised learning and find that both spatial and temporal information are crucial. In particular, we propose a simple yet effective temporally consistent spatial augmentation method that imposes strong spatial augmentations on each frame of a video clip while maintaining temporal consistency across frames. For Kinetics-600 action recognition, a linear classifier trained on representations learned by CVRL achieves 64.1% top-1 accuracy with a 3D-ResNet50 backbone, outperforming ImageNet supervised pre-training by 9.4% and SimCLR unsupervised pre-training by 16.1% using the same inflated 3D-ResNet50. The performance of CVRL can be further improved to 68.2% with a larger 3D-ResNet50 (4$\times$) backbone, significantly closing the gap between unsupervised and supervised video representation learning.
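The two ideas in the abstract, temporally consistent spatial augmentation and a contrastive loss over clip pairs, can be illustrated with a minimal sketch. This is not the authors' implementation: the function names (`sample_crop_params`, `augment_clip`, `info_nce_loss`), the choice of crop plus horizontal flip as the spatial augmentation, the one-directional form of the loss, and the temperature value are all assumptions made for illustration. Frames are plain nested lists so the example runs with the Python standard library only.

```python
import math
import random


def sample_crop_params(height, width, crop_h, crop_w, rng):
    """Draw ONE set of spatial augmentation parameters for a whole clip."""
    top = rng.randint(0, height - crop_h)    # inclusive on both ends
    left = rng.randint(0, width - crop_w)
    flip = rng.random() < 0.5
    return top, left, flip


def augment_clip(clip, crop_h, crop_w, rng):
    """Apply the same random crop and flip to every frame of a clip.

    clip: list of frames; each frame is a list of rows (H x W) of pixel values.
    Sampling the parameters once per clip, rather than once per frame, is
    what keeps the augmentation temporally consistent.
    """
    height, width = len(clip[0]), len(clip[0][0])
    top, left, flip = sample_crop_params(height, width, crop_h, crop_w, rng)
    augmented = []
    for frame in clip:
        rows = [row[left:left + crop_w] for row in frame[top:top + crop_h]]
        if flip:
            rows = [row[::-1] for row in rows]
        augmented.append(rows)
    return augmented


def info_nce_loss(z_a, z_b, temperature=0.1):
    """Simplified one-directional InfoNCE loss (illustrative assumption).

    z_a[i] and z_b[i] are embeddings of two clips from video i (a positive
    pair); z_b[j] for j != i come from other videos and act as negatives.
    """
    def normalize(vec):
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        return [x / norm for x in vec]

    z_a = [normalize(v) for v in z_a]
    z_b = [normalize(v) for v in z_b]
    total = 0.0
    for i, anchor in enumerate(z_a):
        # Cosine similarity of the anchor to every candidate, scaled by temperature.
        logits = [sum(p * q for p, q in zip(anchor, other)) / temperature
                  for other in z_b]
        # Numerically stable log-sum-exp for the softmax denominator.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - logits[i]
    return total / len(z_a)
```

In this sketch, the loss is small when each clip's embedding is closest to the other clip from the same video and large when it is closer to a clip from a different video, which matches the "pulled together" and "pushed apart" behavior the abstract describes.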
