Robust Contextual Bandit via the Capped-$\ell_{2}$ norm


Aug 17, 2017

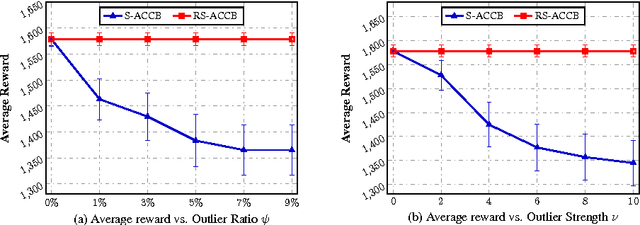

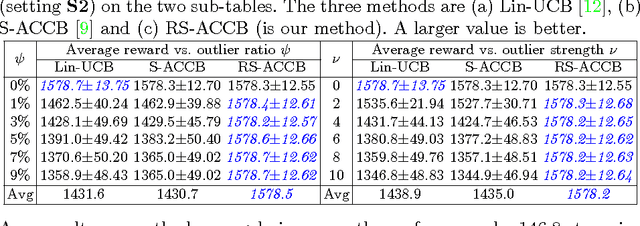

This paper considers the actor-critic contextual bandit for mobile health (mHealth) interventions. State-of-the-art decision-making methods in mHealth generally assume that the noise in the dynamic system follows a Gaussian distribution. These methods estimate the expected reward with least-squares-based algorithms, which are sensitive to outliers. To address this, we propose a novel robust actor-critic contextual bandit method for mHealth interventions. In the critic update, the capped-$\ell_{2}$ norm is used to measure the approximation error, which prevents outliers from dominating the objective. The critic update also yields a set of sample weights, which are used to form a weighted objective for the actor update. Samples that are badly corrupted by noise in the critic update receive zero weight in the actor update. As a result, the robustness of both the actor and the critic updates is enhanced. The capped-$\ell_{2}$ norm has one key parameter, the capping threshold. We provide a reliable method to set it properly, based on one of the most fundamental definitions of outliers in statistics. Extensive experimental results show that our method performs almost identically to state-of-the-art methods on the dataset without outliers and dramatically outperforms them on datasets corrupted by outliers.
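The idea of capping the error and deriving zero-one sample weights from it can be illustrated with a minimal sketch. The abstract does not give the exact formulas, so everything below is an assumption made for illustration: the per-sample loss is taken as $\min(r_i^2, \epsilon)$ on a scalar residual $r_i$, the critic is replaced by a plain ridge regression solved by iterative reweighting, and the threshold $\epsilon$ is set with the boxplot rule (third quartile plus 1.5 times the interquartile range), which is only one candidate for the "fundamental definition of outliers" mentioned above. All function names are hypothetical.

```python
import numpy as np


def capped_l2_loss(residuals, eps):
    """Per-sample capped squared error: min(r_i^2, eps) (assumed form)."""
    return np.minimum(residuals ** 2, eps)


def capped_weights(residuals, eps):
    """Weight 1 for samples below the cap, 0 for samples at or above it."""
    return (residuals ** 2 < eps).astype(float)


def boxplot_threshold(residuals):
    """Assumed threshold rule: Q3 + 1.5 * IQR of the squared residuals."""
    sq = residuals ** 2
    q1, q3 = np.percentile(sq, [25, 75])
    return q3 + 1.5 * (q3 - q1)


def robust_ridge(X, y, lam=1e-3, n_iter=10):
    """Iteratively reweighted ridge regression (a stand-in for the critic).

    Each iteration solves a weighted ridge problem, then recomputes the
    zero-one weights from the current residuals and threshold.
    """
    n, d = X.shape
    w = np.ones(n)  # start by trusting every sample
    for _ in range(n_iter):
        A = X.T @ (w[:, None] * X) + lam * np.eye(d)
        theta = np.linalg.solve(A, X.T @ (w * y))
        r = y - X @ theta
        eps = boxplot_threshold(r)
        w = capped_weights(r, eps)
    return theta, w


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(200, 3))
    theta_true = np.array([1.0, -2.0, 0.5])
    y = X @ theta_true + 0.01 * rng.normal(size=200)
    y[:5] += 50.0  # inject five gross outliers

    theta, w = robust_ridge(X, y)
    print("estimated theta:", theta)
    print("weights of injected outliers:", w[:5])
```

In this sketch the returned weights `w` play the role of the weights that the abstract says are passed on to the actor update: a sample with weight zero would simply drop out of a weighted actor objective. The boxplot rule is conservative, so some clean samples can also receive zero weight.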
