Rethinking and Recomputing the Value of ML Models


Sep 30, 2022

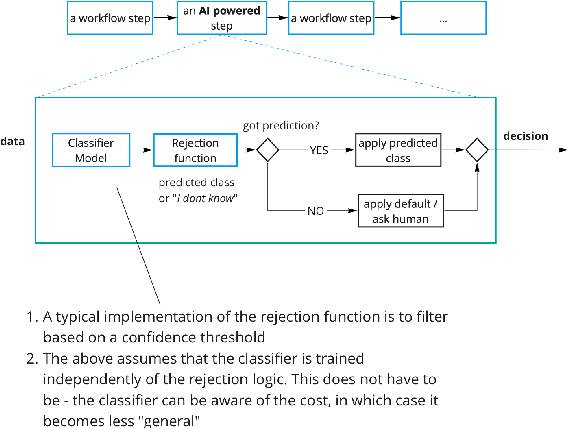

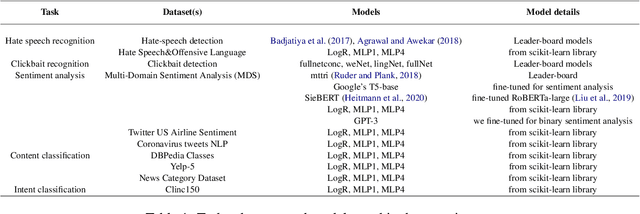

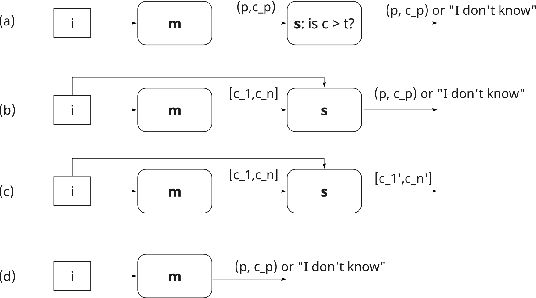

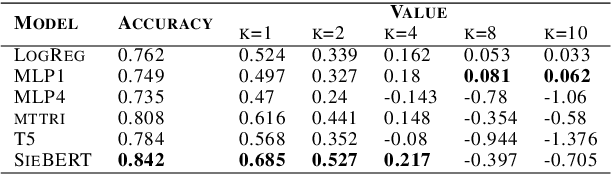

In this paper, we argue that the way we have been training and evaluating ML models has largely overlooked the fact that these models are applied in an organizational or societal context, where they provide value to people. We show that adopting this perspective fundamentally changes how we evaluate, select, and deploy ML models, and to some extent even what it means to learn. Specifically, we stress that the notion of value plays a central role in learning and evaluation, and that different models may require different learning practices and provide different value depending on the application context in which they are applied. We also show, on experimental datasets, that this concretely affects how we select models and embed them into human workflows. Nothing presented here is hard: to a large extent it is a series of fairly trivial observations with massive practical implications.
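The claim that a model's value depends on its application context, and can therefore change which model we select, can be illustrated with a small sketch. It is not the paper's method: it assumes a hypothetical cost model in which an accepted correct prediction earns `gain`, an accepted wrong prediction loses `error_cost`, and a prediction whose confidence falls below a threshold is deferred to a human at `reject_cost`. The functions `accuracy`, `value`, and `best_threshold_value`, as well as the two toy models, are illustrative names and made-up data.

```python
from typing import List, Sequence, Tuple

# Hypothetical representation: each prediction is (confidence, is_correct).
Prediction = Tuple[float, bool]


def accuracy(preds: Sequence[Prediction]) -> float:
    """Fraction of correct predictions, with every prediction accepted."""
    return sum(ok for _, ok in preds) / len(preds)


def value(preds: Sequence[Prediction], threshold: float = 0.0,
          gain: float = 1.0, error_cost: float = 10.0,
          reject_cost: float = 0.0) -> float:
    """Average value per item under an assumed (hypothetical) cost model.

    Predictions with confidence below `threshold` are deferred to a human and
    cost `reject_cost`; accepted predictions earn `gain` if correct and lose
    `error_cost` if wrong.
    """
    total = 0.0
    for conf, ok in preds:
        if conf < threshold:
            total -= reject_cost
        elif ok:
            total += gain
        else:
            total -= error_cost
    return total / len(preds)


def best_threshold_value(preds: Sequence[Prediction], **costs: float) -> float:
    """Best value reachable by tuning the rejection threshold on `preds`."""
    # 0.0 accepts everything, inf defers everything to the human.
    candidates = [0.0] + sorted({c for c, _ in preds}) + [float("inf")]
    return max(value(preds, threshold=t, **costs) for t in candidates)


# Toy data: model A is more accurate, but its error is highly confident;
# model B is less accurate, but its errors have low confidence.
model_a: List[Prediction] = [(0.9, True)] * 9 + [(0.95, False)]
model_b: List[Prediction] = [(0.9, True)] * 8 + [(0.2, False)] * 2

print(accuracy(model_a), accuracy(model_b))                          # 0.9 0.8
print(best_threshold_value(model_a), best_threshold_value(model_b))  # 0.0 0.8
```

Under these assumed costs, accuracy ranks model A first, while value ranks model B first, because B's errors can be routed to a human and A's cannot. Changing `error_cost` or `reject_cost` to reflect a different application can reverse the ranking again.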
