Probabilistic Deep Learning using Random Sum-Product Networks

Paper and Code

Jun 22, 2018

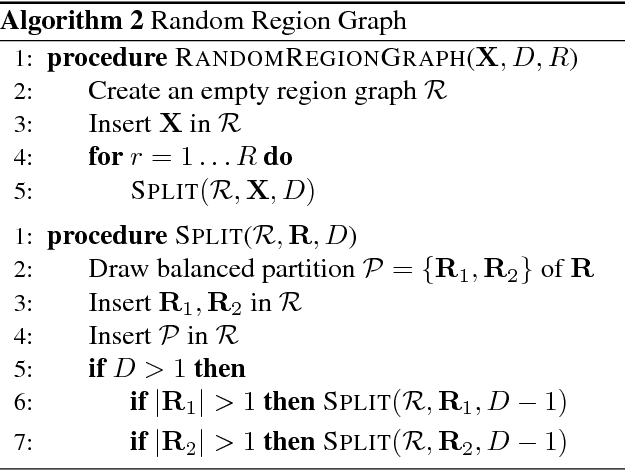

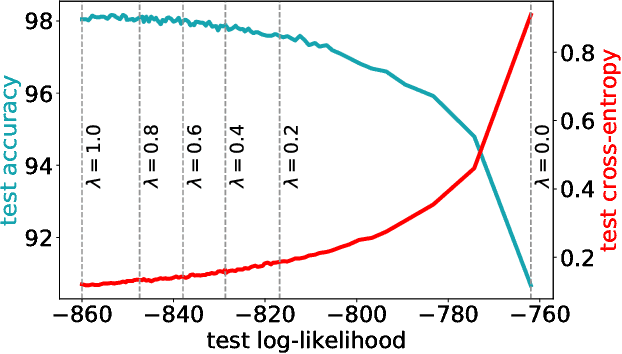

The need for consistent treatment of uncertainty has recently triggered increased interest in probabilistic deep learning methods. However, most current approaches have severe limitations when it comes to inference, since many of these models do not even permit to evaluate exact data likelihoods. Sum-product networks (SPNs), on the other hand, are an excellent architecture in that regard, as they allow to efficiently evaluate likelihoods, as well as arbitrary marginalization and conditioning tasks. Nevertheless, SPNs have not been fully explored as serious deep learning models, likely due to their special structural requirements, which complicate learning. In this paper, we make a drastic simplification and use random SPN structures which are trained in a "classical deep learning manner", i.e. employing automatic differentiation, SGD, and GPU support. The resulting models, called RAT-SPNs, yield prediction results comparable to deep neural networks, while still being interpretable as generative model and maintaining well-calibrated uncertainties. This property makes them highly robust under missing input features and enables them to naturally detect outliers and peculiar samples.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge