Proactively Control Privacy in Recommender Systems

Apr 01, 2022

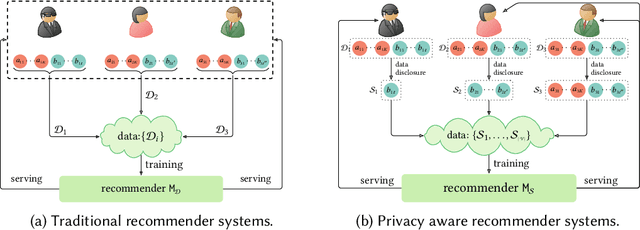

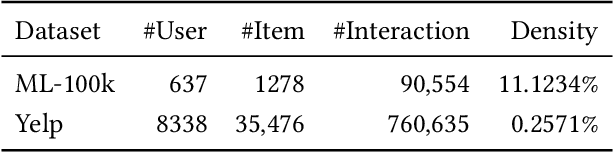

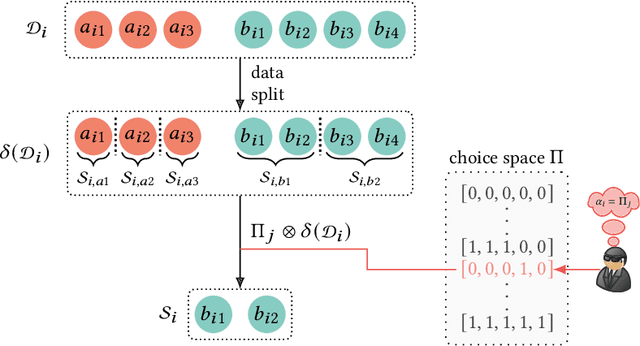

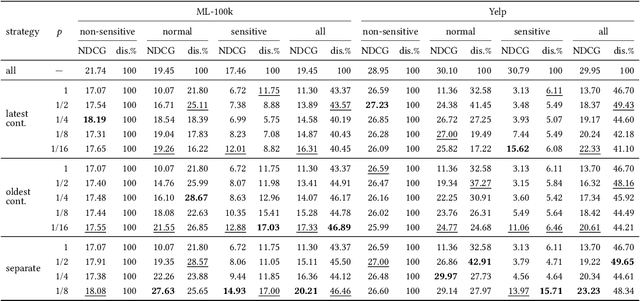

Privacy issues in web services that rely on users' personal data have recently attracted considerable attention. Unlike existing privacy-preserving technologies such as federated learning and differential privacy, we explore another way to mitigate users' privacy concerns: giving them control over their own data. To this end, we propose a privacy-aware recommendation framework that gives users fine-grained control over their personal data, including implicit behaviors such as clicks and watches. In this framework, users can proactively decide which data to disclose based on the trade-off between anticipated privacy risks and potential utilities. We then study users' privacy decision making under different data disclosure mechanisms and recommendation models, and how their disclosure decisions affect the recommender system's performance. To avoid the high cost of real-world experiments, we use simulations to study the effects of the proposed framework. Specifically, we propose a reinforcement learning algorithm that simulates users' decisions (with varying sensitivities) under three proposed platform mechanisms, on two datasets and with three representative recommendation models. The simulation results show that platform mechanisms with finer split granularity and less restricted disclosure strategies yield better results for both end users and platforms than the "all or nothing" binary mechanism adopted by most real-world applications. They also show that the proposed framework can effectively protect users' privacy, since users obtain comparable or even better results while disclosing much less data.
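To make the disclosure trade-off concrete, here is a minimal toy sketch of a simulated user who learns how much data to disclose. It is not the paper's algorithm: the epsilon-greedy bandit, the function name `simulate_user`, the square-root utility curve, the linear privacy cost, and all parameter values are assumptions chosen only for illustration.

```python
import random


def simulate_user(sensitivity, n_items=10, episodes=2000, epsilon=0.1, seed=0):
    """Toy epsilon-greedy bandit (hypothetical, not the paper's method).

    The simulated user chooses how many of their n_items behavior records
    to disclose (0..n_items) and learns the choice with the best average
    reward, where reward = utility - sensitivity * privacy cost.
    """
    rng = random.Random(seed)
    actions = list(range(n_items + 1))
    q = [0.0] * len(actions)     # running mean reward per action
    counts = [0] * len(actions)  # number of times each action was tried

    def reward(k):
        share = k / n_items
        # Assumed utility: diminishing returns in the share of disclosed data.
        utility = share ** 0.5
        # Assumed privacy cost: linear in the disclosed share.
        cost = sensitivity * share
        # Small Gaussian noise stands in for noisy recommendation quality.
        return utility - cost + rng.gauss(0, 0.05)

    for _ in range(episodes):
        if rng.random() < epsilon:
            a = rng.choice(actions)                   # explore
        else:
            a = max(actions, key=lambda i: q[i])      # exploit
        r = reward(a)
        counts[a] += 1
        q[a] += (r - q[a]) / counts[a]                # incremental mean update

    # Number of records the user ends up preferring to disclose.
    return max(actions, key=lambda i: q[i])
```

Under these assumed reward shapes, calling `simulate_user(0.2)` and `simulate_user(3.0)` contrasts a privacy-tolerant user with a privacy-sensitive one: the former should settle on disclosing most records and the latter on disclosing few or none. A binary "all or nothing" mechanism would correspond to restricting `actions` to `[0, n_items]`.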
