pFedMoE: Data-Level Personalization with Mixture of Experts for Model-Heterogeneous Personalized Federated Learning

Feb 11, 2024

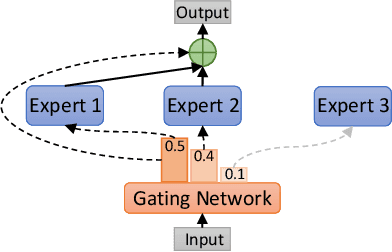

Federated learning (FL) has been widely adopted for collaborative training on decentralized data. However, it faces the challenges of data, system, and model heterogeneity, which have inspired model-heterogeneous personalized federated learning (MHPFL). Nevertheless, ensuring data and model privacy while achieving good model performance and keeping communication and computation costs low remains an open problem in MHPFL. To address it, we propose a model-heterogeneous personalized federated learning method with Mixture of Experts (pFedMoE). It assigns a shared homogeneous small feature extractor and a local gating network to each client's local heterogeneous large model. First, during local training, the local heterogeneous model's feature extractor acts as a local expert for personalized feature (representation) extraction, while the shared homogeneous small feature extractor serves as a global expert for generalized feature extraction. The local gating network produces personalized weights for the representations extracted by both experts on each data sample. Together, the two experts and the gating network form a local heterogeneous MoE. The weighted mixed representation fuses generalized and personalized features and is processed by the local heterogeneous large model's prediction header, which carries personalized prediction information. The MoE and the prediction header are updated simultaneously. Second, the trained local homogeneous small feature extractors are sent to the server for cross-client information fusion via aggregation. Overall, pFedMoE enhances local model personalization at a fine-grained data level while supporting model heterogeneity.
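The per-sample mixing and the server-side fusion described above can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes bias-free linear maps in place of real networks, a softmax gate over the two experts, both experts emitting representations of the same dimension, and sample-count-weighted averaging as the aggregation rule (the abstract only says "aggregation"). All names (`moe_forward`, `aggregate`, `rand_matrix`) are hypothetical.

```python
import math
import random

def linear(weights, x):
    """Bias-free linear map: one output per row of `weights`."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def softmax(z):
    """Numerically stable softmax over a list of floats."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def rand_matrix(rows, cols, rng):
    """Random weights standing in for a trained network (illustration only)."""
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def moe_forward(x, global_expert, local_expert, gate, header):
    """One forward pass of a client's local MoE on a single sample `x`."""
    rep_g = linear(global_expert, x)      # generalized features (shared small extractor)
    rep_l = linear(local_expert, x)       # personalized features (local large extractor)
    w_g, w_l = softmax(linear(gate, x))   # per-sample weights (softmax gate is an assumption)
    mixed = [w_g * g + w_l * l for g, l in zip(rep_g, rep_l)]
    return linear(header, mixed), (w_g, w_l)

def aggregate(extractors, sample_counts):
    """Server side: average the clients' small extractors.

    Weighting by sample count is an assumption, not taken from the abstract.
    """
    total = sum(sample_counts)
    rows, cols = len(extractors[0]), len(extractors[0][0])
    return [[sum(n * m[r][c] for m, n in zip(extractors, sample_counts)) / total
             for c in range(cols)] for r in range(rows)]

rng = random.Random(0)
IN_DIM, REP_DIM, N_CLASSES = 4, 3, 2
global_expert = rand_matrix(REP_DIM, IN_DIM, rng)   # homogeneous across clients
local_expert = rand_matrix(REP_DIM, IN_DIM, rng)    # may differ per client in practice
gate = rand_matrix(2, IN_DIM, rng)                  # one gate output per expert
header = rand_matrix(N_CLASSES, REP_DIM, rng)

x = [0.5, -1.0, 2.0, 0.1]
logits, weights = moe_forward(x, global_expert, local_expert, gate, header)
```

Because the gate takes the sample itself as input, the two weights change from one sample to the next, which is what the abstract calls personalization at the data level. Only the small shared extractor is passed to `aggregate`; the local expert, gate, and header stay on the client in this sketch.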
