Parallel Restarted SPIDER -- Communication Efficient Distributed Nonconvex Optimization with Optimal Computation Complexity

Paper and Code

Dec 12, 2019

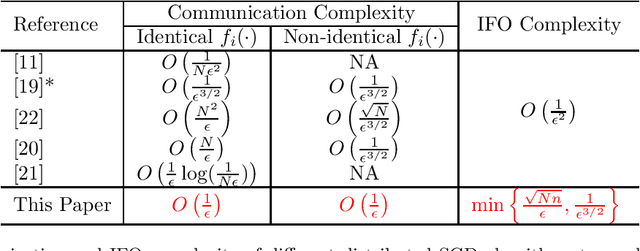

In this paper, we propose a distributed algorithm for stochastic smooth, non-convex optimization. We assume a worker-server architecture where $N$ nodes, each having $n$ (potentially infinite) number of samples, collaborate with the help of a central server to perform the optimization task. The global objective is to minimize the average of local cost functions available at individual nodes. The proposed approach is a non-trivial extension of the popular parallel-restarted SGD algorithm, incorporating the optimal variance-reduction based SPIDER gradient estimator into it. We prove convergence of our algorithm to a first-order stationary solution. The proposed approach achieves the best known communication complexity $O(\epsilon^{-1})$ along with the optimal computation complexity. For finite-sum problems (finite $n$), we achieve the optimal computation (IFO) complexity $O(\sqrt{Nn}\epsilon^{-1})$. For online problems ($n$ unknown or infinite), we achieve the optimal IFO complexity $O(\epsilon^{-3/2})$. In both the cases, we maintain the linear speedup achieved by existing methods. This is a massive improvement over the $O(\epsilon^{-2})$ IFO complexity of the existing approaches. Additionally, our algorithm is general enough to allow non-identical distributions of data across workers, as in the recently proposed federated learning paradigm.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge