On the Intrinsic and Extrinsic Fairness Evaluation Metrics for Contextualized Language Representations

Paper and Code

Mar 25, 2022

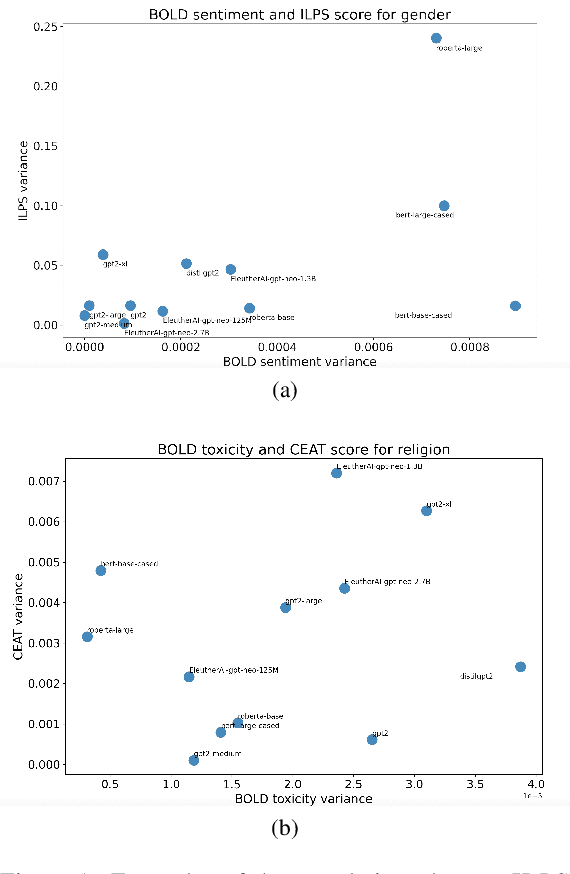

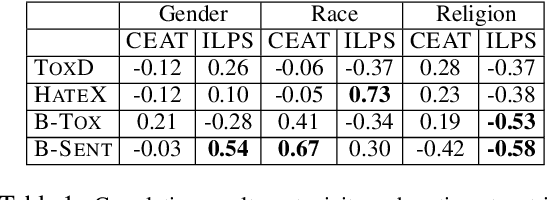

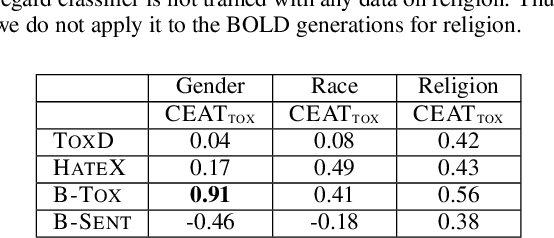

Multiple metrics have been introduced to measure fairness in various natural language processing tasks. These metrics can be roughly categorized into two categories: 1) \emph{extrinsic metrics} for evaluating fairness in downstream applications and 2) \emph{intrinsic metrics} for estimating fairness in upstream contextualized language representation models. In this paper, we conduct an extensive correlation study between intrinsic and extrinsic metrics across bias notions using 19 contextualized language models. We find that intrinsic and extrinsic metrics do not necessarily correlate in their original setting, even when correcting for metric misalignments, noise in evaluation datasets, and confounding factors such as experiment configuration for extrinsic metrics. %al

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge