NoiLIn: Do Noisy Labels Always Hurt Adversarial Training?


May 31, 2021

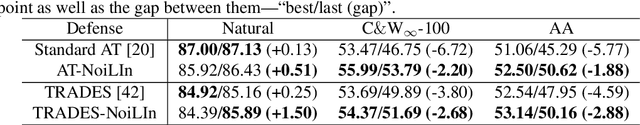

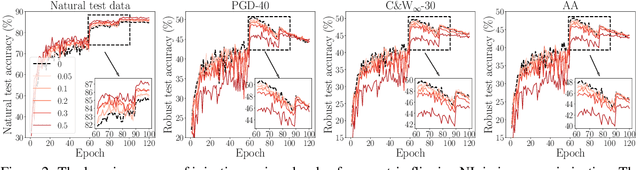

Adversarial training (AT) based on minimax optimization is a popular learning paradigm that enhances a model's adversarial robustness. Noisy labels (NL) commonly undermine learning and hurt a model's performance. Interestingly, the two research directions have rarely intersected. In this paper, we raise an intriguing question: does NL always hurt AT? First, we find that NL injection in the inner maximization, which generates the adversarial data, implicitly augments the natural data, and this benefits AT's generalization. Second, we find that NL injection in the outer minimization, which performs the learning, serves as regularization that alleviates robust overfitting, and this benefits AT's robustness. To enhance AT's adversarial robustness, we propose "NoiLIn" (Noisy Labels Injection), which gradually increases NL injection over the AT training process. Empirically, NoiLIn answers the question above in the negative: adversarial robustness can indeed be enhanced by NL injection. Philosophically, we provide a new perspective on learning with NL: NL should not always be deemed detrimental, and even when the training set contains no NL, we may consider injecting it deliberately.
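To make the idea of gradually increasing label-noise injection concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the linear ramp, the `start` and `end` rates, the symmetric (uniform) flipping scheme, and the function names `noise_rate_at` and `inject_label_noise` are all assumptions chosen for illustration, since the abstract only states that the injection increases over training.

```python
import random


def noise_rate_at(epoch, total_epochs, start=0.05, end=0.4):
    """Hypothetical linear ramp of the label-noise rate over training.

    The abstract only says the injection is increased gradually; the
    linear shape and the start/end values are assumptions.
    """
    if total_epochs <= 1:
        return end
    frac = epoch / (total_epochs - 1)
    return start + frac * (end - start)


def inject_label_noise(labels, noise_rate, num_classes, rng):
    """Return a copy of `labels` with a fraction flipped to another class.

    Symmetric noise (assumed): each selected label is replaced by a
    different class drawn uniformly at random.
    """
    noisy = list(labels)
    n_flip = round(noise_rate * len(noisy))
    for i in rng.sample(range(len(noisy)), n_flip):
        candidates = [c for c in range(num_classes) if c != noisy[i]]
        noisy[i] = rng.choice(candidates)
    return noisy


# Toy usage: 100 clean labels over 10 classes, re-noised every epoch.
rng = random.Random(0)
clean = [i % 10 for i in range(100)]
for epoch in range(5):
    rate = noise_rate_at(epoch, total_epochs=5)
    noisy = inject_label_noise(clean, rate, num_classes=10, rng=rng)
    flipped = sum(a != b for a, b in zip(clean, noisy))
    print(f"epoch={epoch} rate={rate:.3f} flipped={flipped}")
```

In an AT loop built on this sketch, the noisy labels for the current epoch would be passed both to the attack that generates adversarial examples (inner maximization) and to the training loss (outer minimization), matching the two injection points described in the abstract. The attack and the model update themselves are omitted here.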
