Multimodal Fake News Detection with Adaptive Unimodal Representation Aggregation

Paper and Code

Jun 12, 2022

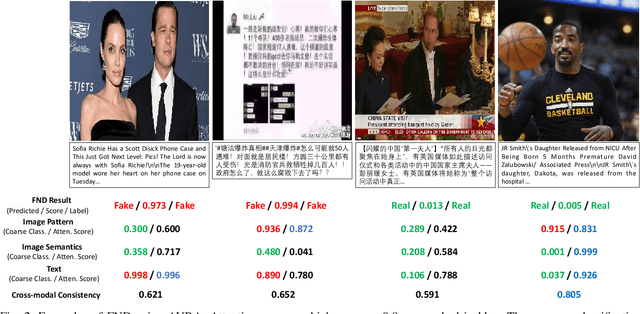

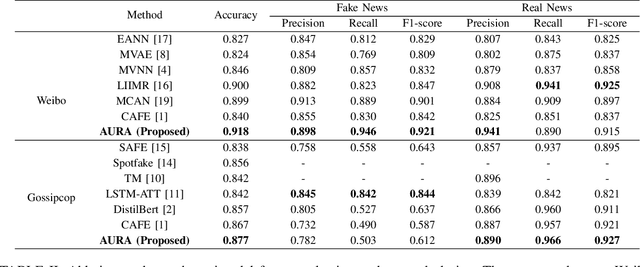

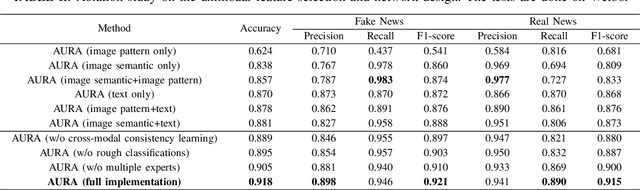

The development of Internet technology has continuously intensified the spread and destructive power of rumors and fake news. Previous research on multimedia fake news detection (FND) relies on a series of complex feature extraction and fusion networks to align features between images and text. However, what the multimodal features are composed of, and how features from different modalities affect the decision-making process, are still open questions. We present AURA, a multimodal fake news detection network with Adaptive Unimodal Representation Aggregation. We first extract separate representations from the image pattern, the image semantics, and the text, and generate multimodal representations by feeding the semantic and linguistic representations into an expert network. We then perform coarse-level fake news detection and cross-modal consistency learning on the unimodal and multimodal representations. The classification and consistency scores are mapped into modality-aware attention scores that readjust the features. Finally, we aggregate and classify the weighted features for refined fake news detection. Comprehensive experiments on Weibo and Gossipcop show that AURA outperforms several state-of-the-art FND schemes, steadily improving both the overall prediction accuracy and the recall of fake news.
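The pipeline described in the abstract can be sketched roughly as follows. This is a minimal NumPy illustration of the data flow only, not the authors' implementation: the abstract does not specify the encoders, the form of the expert network, the consistency measure, or how scores become attention weights. Here the three unimodal representations are assumed to be pre-extracted vectors, the expert network is assumed to be a small softmax-gated mixture of experts, consistency is assumed to be cosine similarity between image-semantic and text features, and attention is assumed to be a softmax over a linear map of the scores. All names (`aura_like_forward`, `init_params`, `D`) and the randomly initialised weights are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
D = 16  # hypothetical feature width shared by all representations


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def cosine(a, b, eps=1e-8):
    num = (a * b).sum(axis=-1)
    den = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1) + eps
    return num / den


def init_params(n_experts=3):
    # Random weights stand in for trained parameters (assumption).
    return {
        "experts": rng.normal(0, 0.1, (n_experts, 2 * D, D)),
        "gate": rng.normal(0, 0.1, (2 * D, n_experts)),
        "coarse": rng.normal(0, 0.1, (4, D)),         # one linear head per representation
        "score_to_attn": rng.normal(0, 0.1, (5, 4)),  # 4 coarse scores + 1 consistency score
        "final": rng.normal(0, 0.1, (4 * D,)),
    }


def aura_like_forward(img_pattern, img_semantic, text, p):
    # 1. Multimodal representation from semantic + linguistic features
    #    via a gated mixture of experts (assumed form of the expert network).
    joint = np.concatenate([img_semantic, text], axis=-1)                 # (B, 2D)
    gate = softmax(joint @ p["gate"])                                     # (B, E)
    expert_out = np.tanh(np.einsum("bi,eio->beo", joint, p["experts"]))   # (B, E, D)
    multimodal = (gate[..., None] * expert_out).sum(axis=1)               # (B, D)

    # 2. Coarse per-representation classification and cross-modal consistency.
    feats = np.stack([img_pattern, img_semantic, text, multimodal], axis=1)  # (B, 4, D)
    coarse = sigmoid((feats * p["coarse"]).sum(axis=-1))                     # (B, 4)
    consistency = cosine(img_semantic, text)[:, None]                        # (B, 1)

    # 3. Map the scores to modality-aware attention and reweight the features.
    scores = np.concatenate([coarse, consistency], axis=-1)                  # (B, 5)
    attn = softmax(scores @ p["score_to_attn"])                              # (B, 4)
    weighted = feats * attn[..., None]

    # 4. Aggregate the weighted features and run the refined classifier.
    fused = weighted.reshape(len(feats), -1)                                 # (B, 4D)
    refined = sigmoid(fused @ p["final"])                                    # (B,)
    return {"coarse": coarse, "consistency": consistency[:, 0],
            "attention": attn, "refined": refined}


# Usage with random stand-in features for a batch of two news items.
params = init_params()
batch = [rng.normal(size=(2, D)) for _ in range(3)]
out = aura_like_forward(*batch, params)
print(out["attention"].shape, out["refined"].shape)
```

In this sketch the attention weights sum to one across the four representations, so a modality with a low weight contributes proportionally less to the refined decision. Whether AURA normalises its attention scores this way is not stated in the abstract.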
