Multi-Expert Adversarial Attack Detection in Person Re-identification Using Context Inconsistency

Paper and Code

Aug 23, 2021

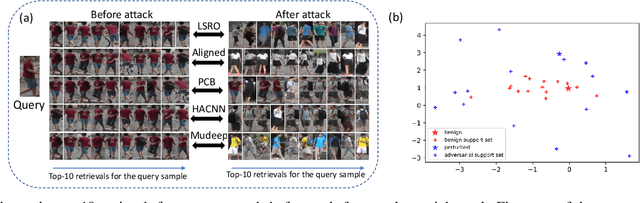

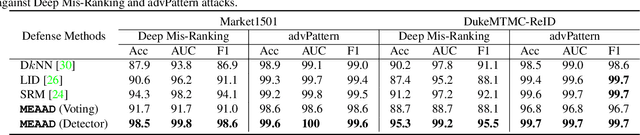

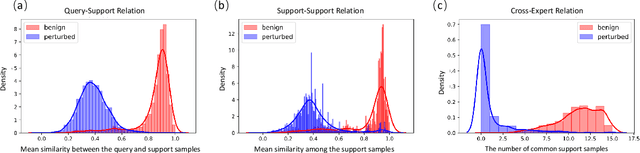

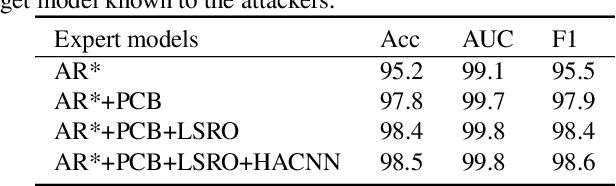

The success of deep neural networks (DNNs) has promoted the widespread application of person re-identification (ReID). However, ReID systems inherit the vulnerability of DNNs to malicious attacks of visually inconspicuous adversarial perturbations. Detection of adversarial attacks is, therefore, a fundamental requirement for robust ReID systems. In this work, we propose a Multi-Expert Adversarial Attack Detection (MEAAD) approach to achieve this goal by checking context inconsistency, which is suitable for any DNN-based ReID system. Specifically, three kinds of context inconsistencies caused by adversarial attacks are employed to learn a detector for distinguishing the perturbed examples, i.e., a) the embedding distances between a perturbed query person image and its top-K retrievals are generally larger than those between a benign query image and its top-K retrievals, b) the embedding distances among the top-K retrievals of a perturbed query image are larger than those of a benign query image, c) the top-K retrievals of a benign query image obtained with multiple expert ReID models tend to be consistent, which is not preserved when attacks are present. Extensive experiments on the Market1501 and DukeMTMC-ReID datasets show that, as the first adversarial attack detection approach for ReID, MEAAD effectively detects various adversarial attacks and achieves high ROC-AUC (over 97.5%).
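The three context-inconsistency cues in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names, the use of Euclidean distance, the reduction of distances to their means, and the overlap ratio as a measure of cross-expert consistency are all assumptions made for illustration. The abstract states only that these cues are used to learn a detector, not how they are encoded.

```python
import itertools
import numpy as np


def top_k(query_emb, gallery_embs, k):
    """Indices of the k gallery embeddings closest to the query (Euclidean, assumed)."""
    dists = np.linalg.norm(gallery_embs - query_emb, axis=1)
    return np.argsort(dists)[:k]


def query_support_distances(query_emb, gallery_embs, idx):
    """Cue (a): distances between the query and each of its top-K retrievals."""
    return np.linalg.norm(gallery_embs[idx] - query_emb, axis=1)


def support_support_distances(gallery_embs, idx):
    """Cue (b): pairwise distances among the top-K retrievals (needs K >= 2)."""
    sup = gallery_embs[idx]
    pairwise = np.linalg.norm(sup[:, None, :] - sup[None, :, :], axis=-1)
    upper = np.triu_indices(len(idx), k=1)  # each unordered pair once
    return pairwise[upper]


def cross_expert_overlap(idx_a, idx_b):
    """Cue (c): fraction of top-K retrievals shared by two expert models."""
    shared = set(idx_a.tolist()) & set(idx_b.tolist())
    return len(shared) / len(idx_a)


def context_features(query_embs, gallery_embs_per_expert, k=10):
    """Hypothetical feature vector for a downstream detector.

    query_embs[i] and gallery_embs_per_expert[i] come from expert model i.
    Layout (an assumption): [mean (a), mean (b)] per expert, followed by
    the overlap for every pair of experts.
    """
    retrievals, feats = [], []
    for q, g in zip(query_embs, gallery_embs_per_expert):
        idx = top_k(q, g, k)
        retrievals.append(idx)
        feats.append(query_support_distances(q, g, idx).mean())
        feats.append(support_support_distances(g, idx).mean())
    for a, b in itertools.combinations(retrievals, 2):
        feats.append(cross_expert_overlap(a, b))
    return np.array(feats)
```

Under these assumptions, a perturbed query would be expected to produce larger values for the first two kinds of feature and a smaller overlap than a benign query. A binary classifier trained on such vectors would then play the role of the detector.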
