Mixture of Decoupled Message Passing Experts with Entropy Constraint for General Node Classification

Paper and Code

Feb 12, 2025

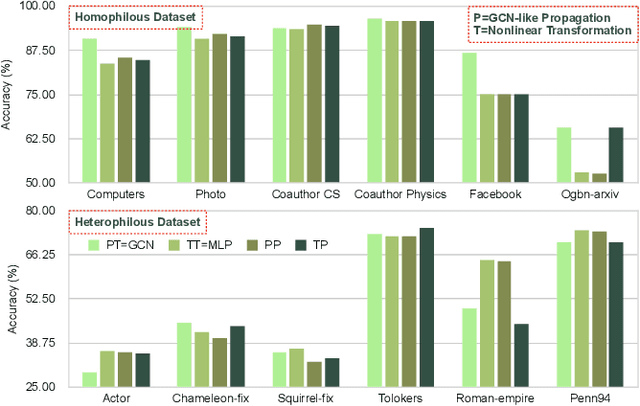

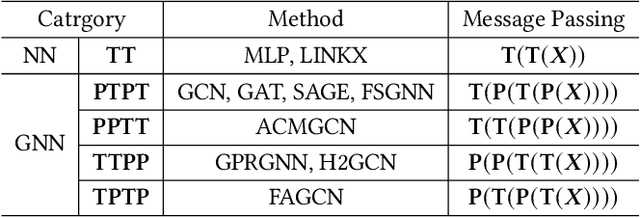

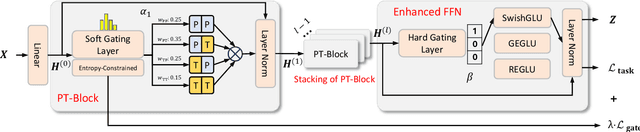

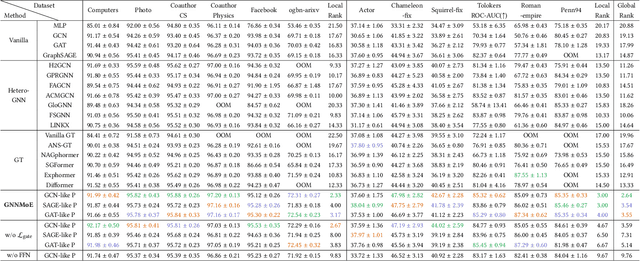

The varying degrees of homophily and heterophily in real-world graphs persistently constrain the universality of graph neural networks (GNNs) for node classification. Adopting a data-centric perspective, this work reveals an inherent preference of different graphs towards distinct message encoding schemes: homophilous graphs favor local propagation, while heterophilous graphs exhibit preference for flexible combinations of propagation and transformation. To address this, we propose GNNMoE, a universal node classification framework based on the Mixture-of-Experts (MoE) mechanism. The framework first constructs diverse message-passing experts through recombination of fine-grained encoding operators, then designs soft and hard gating layers to allocate the most suitable expert networks for each node's representation learning, thereby enhancing both model expressiveness and adaptability to diverse graphs. Furthermore, considering that soft gating might introduce encoding noise in homophilous scenarios, we introduce an entropy constraint to guide sharpening of soft gates, achieving organic integration of weighted combination and Top-K selection. Extensive experiments demonstrate that GNNMoE significantly outperforms mainstream GNNs, heterophilous GNNs, and graph transformers in both node classification performance and universality across diverse graph datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge