Mixed-Precision Quantization and Parallel Implementation of Multispectral Riemannian Classification for Brain--Machine Interfaces

Paper and Code

Feb 22, 2021

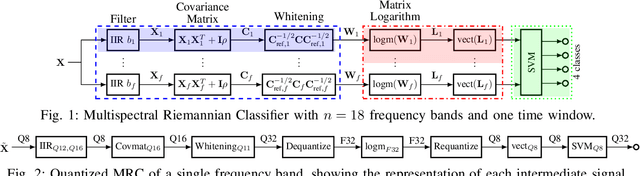

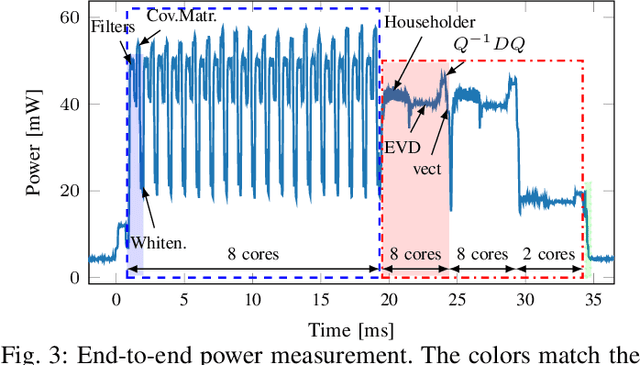

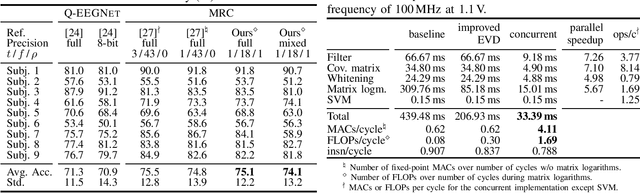

With Motor-Imagery (MI) Brain--Machine Interfaces (BMIs) we may control machines by merely thinking of performing a motor action. Practical use cases require a wearable solution where the classification of the brain signals is done locally near the sensor using machine learning models embedded on energy-efficient microcontroller units (MCUs), for assured privacy, user comfort, and long-term usage. In this work, we provide practical insights on the accuracy-cost tradeoff for embedded BMI solutions. Our proposed Multispectral Riemannian Classifier reaches 75.1% accuracy on 4-class MI task. We further scale down the model by quantizing it to mixed-precision representations with a minimal accuracy loss of 1%, which is still 3.2% more accurate than the state-of-the-art embedded convolutional neural network. We implement the model on a low-power MCU with parallel processing units taking only 33.39ms and consuming 1.304mJ per classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge