Low-Rank Representations Meets Deep Unfolding: A Generalized and Interpretable Network for Hyperspectral Anomaly Detection

Paper and Code

Feb 23, 2024

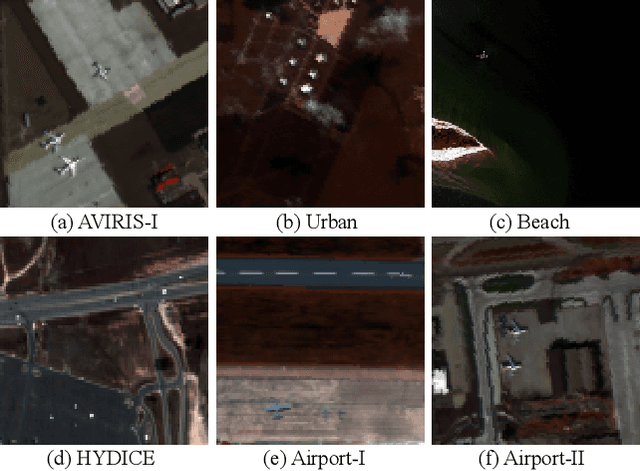

Current hyperspectral anomaly detection (HAD) benchmark datasets suffer from low resolution, simple background, and small size of the detection data. These factors also limit the performance of the well-known low-rank representation (LRR) models in terms of robustness on the separation of background and target features and the reliance on manual parameter selection. To this end, we build a new set of HAD benchmark datasets for improving the robustness of the HAD algorithm in complex scenarios, AIR-HAD for short. Accordingly, we propose a generalized and interpretable HAD network by deeply unfolding a dictionary-learnable LLR model, named LRR-Net$^+$, which is capable of spectrally decoupling the background structure and object properties in a more generalized fashion and eliminating the bias introduced by vital interference targets concurrently. In addition, LRR-Net$^+$ integrates the solution process of the Alternating Direction Method of Multipliers (ADMM) optimizer with the deep network, guiding its search process and imparting a level of interpretability to parameter optimization. Additionally, the integration of physical models with DL techniques eliminates the need for manual parameter tuning. The manually tuned parameters are seamlessly transformed into trainable parameters for deep neural networks, facilitating a more efficient and automated optimization process. Extensive experiments conducted on the AIR-HAD dataset show the superiority of our LRR-Net$^+$ in terms of detection performance and generalization ability, compared to top-performing rivals. Furthermore, the compilable codes and our AIR-HAD benchmark datasets in this paper will be made available freely and openly at \url{https://sites.google.com/view/danfeng-hong}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge