Light Pose Calibration for Camera-light Vision Systems

Paper and Code

Jun 27, 2020

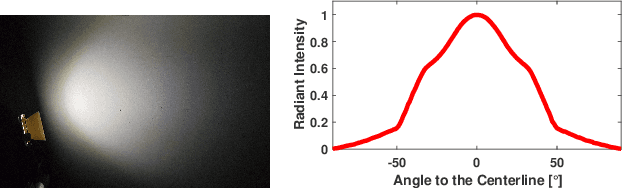

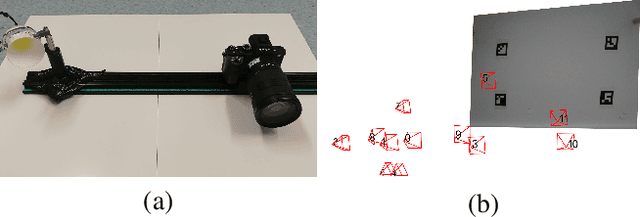

Illuminating a scene with artificial light is a prerequisite for seeing in dark environments. However, nonuniform and dynamic illumination can degrade or even break computer vision approaches, for instance when operating a robot with headlights in the dark. This paper presents a novel light calibration approach that takes multi-view, multi-distance images of a reference plane in order to provide the computer vision system with pose information for the employed light sources. Following a physical light propagation model that accounts for energy preservation, the light poses are estimated by minimizing the differences between real and rendered pixel intensities. In the evaluation, we show the robustness and consistency of this method by statistically analyzing the light pose estimation results across different setups. Although the results are demonstrated using a rotationally symmetric, non-isotropic light, the method is also suited to non-symmetric lights.
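The core idea of comparing real and rendered pixel intensities can be illustrated with a minimal sketch. It is not the paper's implementation: the abstract does not specify the light model, so the sketch assumes a point light with inverse-square falloff, a rotationally symmetric `cos^n` radiation pattern around the light axis, and a Lambertian reference plane. The names `render_intensity` and `photometric_cost` and the parameters `power`, `exponent`, and `albedo` are hypothetical.

```python
import math

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def _unit(v):
    n = math.sqrt(_dot(v, v))
    return [x / n for x in v]

def render_intensity(point, normal, light_pos, light_dir,
                     power=1.0, exponent=2.0, albedo=1.0):
    """Predicted intensity at a plane point (all vectors in the camera frame).

    Assumed model: point light, cos^n pattern, Lambertian surface.
    """
    ray = [p - l for p, l in zip(point, light_pos)]   # light -> surface point
    dist_sq = _dot(ray, ray)
    ray_hat = _unit(ray)
    # Angular falloff relative to the light's axis (rotationally symmetric).
    cos_axis = max(0.0, _dot(ray_hat, _unit(light_dir)))
    # Lambertian foreshortening at the surface.
    cos_surface = max(0.0, -_dot(ray_hat, _unit(normal)))
    # Inverse-square falloff with distance from the light.
    return albedo * power * cos_axis ** exponent * cos_surface / dist_sq

def photometric_cost(observations, light_pos, light_dir, **model):
    """Sum of squared differences between measured and rendered intensities.

    observations: iterable of (point, normal, measured_intensity) tuples
    gathered from several views and distances of the reference plane.
    """
    total = 0.0
    for point, normal, measured in observations:
        rendered = render_intensity(point, normal, light_pos, light_dir, **model)
        total += (measured - rendered) ** 2
    return total

# Synthetic data: plane facing the camera at three distances (hypothetical setup).
true_pos, true_dir = [0.1, 0.0, 0.0], [0.0, 0.0, 1.0]
plane_normal = [0.0, 0.0, -1.0]
observations = []
for depth in (0.5, 1.0, 1.5):
    for x in (-0.4, 0.0, 0.4):
        for y in (-0.4, 0.0, 0.4):
            p = [x, y, depth]
            observations.append(
                (p, plane_normal,
                 render_intensity(p, plane_normal, true_pos, true_dir)))

cost_true = photometric_cost(observations, true_pos, true_dir)
cost_off = photometric_cost(observations, [0.2, 0.0, 0.0], true_dir)
```

In this synthetic setup the cost vanishes at the true light pose and grows when the light position is perturbed. A calibration would hand such a cost to a nonlinear least-squares solver over the light position and orientation; the choice of solver is left open here.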
