Learning Transferable Domain Priors for Safe Exploration in Reinforcement Learning


Sep 11, 2019

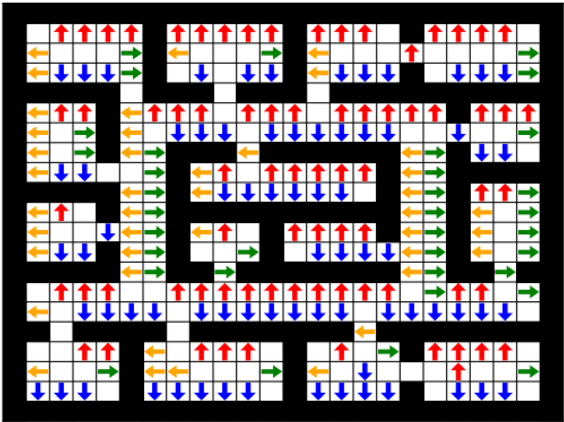

Prior access to domain knowledge could significantly improve the performance of a reinforcement learning agent. In particular, it could help agents avoid potentially catastrophic exploratory actions that would otherwise have to be experienced during learning. In this work, we identify actions that are consistently undesirable across a set of previously learned tasks, and use the pseudo-rewards associated with them to learn a prior policy. In addition to enabling safe exploratory behavior in subsequent tasks in the domain, these priors are transferable to similar environments, and can be learned off-policy and in parallel with the learning of other tasks in the domain. We compare our approach to established, state-of-the-art algorithms in a grid-world navigation environment, and demonstrate that it is better at avoiding unsafe actions while learning to perform arbitrary tasks in the domain. We also present theoretical analysis to support these results, and discuss the implications of this approach along with some alternative formulations that could also accelerate learning in certain scenarios.
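To make the idea of "consistently undesirable actions" concrete, the following is a minimal tabular sketch, not the paper's algorithm. It assumes that each previously learned task is summarized by a Q-table of shape (states, actions), that an action counts as undesirable when its value trails the task's greedy action by more than a chosen margin in every task, and that the resulting mask is used directly to restrict exploration. The names `undesirable_mask`, `pseudo_rewards`, `safe_epsilon_greedy`, and the parameters `margin` and `penalty` are all hypothetical. The paper learns a prior policy from the pseudo-rewards; the sketch skips that learning step and uses the mask itself as a stand-in.

```python
import numpy as np


def undesirable_mask(q_tables, margin=0.5):
    """Flag (state, action) pairs that look bad in every previously learned task.

    Assumption: "bad" means the action's value is more than `margin` below
    the greedy action's value in that state, for all tasks.
    """
    q = np.stack(q_tables)                    # shape: (tasks, states, actions)
    adv = q - q.max(axis=2, keepdims=True)    # gap to the greedy action, <= 0
    return (adv < -margin).all(axis=0)        # shape: (states, actions), bool


def pseudo_rewards(mask, penalty=-1.0):
    """Assign a fixed negative pseudo-reward to flagged pairs, zero elsewhere."""
    return np.where(mask, penalty, 0.0)


def safe_epsilon_greedy(q_task, mask, state, epsilon, rng):
    """Epsilon-greedy action selection restricted to actions not flagged as unsafe."""
    allowed = np.flatnonzero(~mask[state])
    if allowed.size == 0:                     # fall back if every action is flagged
        allowed = np.arange(q_task.shape[1])
    if rng.random() < epsilon:
        return int(rng.choice(allowed))       # explore among allowed actions only
    return int(allowed[np.argmax(q_task[state, allowed])])


# Two toy tasks over 2 states and 3 actions; action 2 is poor in both.
q_task_a = np.array([[1.0, 0.9, -2.0], [0.0, 1.0, -3.0]])
q_task_b = np.array([[0.8, 1.0, -2.0], [1.0, 0.0, -3.0]])

mask = undesirable_mask([q_task_a, q_task_b])
prior_rewards = pseudo_rewards(mask)
rng = np.random.default_rng(0)
action = safe_epsilon_greedy(q_task_a, mask, state=0, epsilon=1.0, rng=rng)
```

In this toy setup only action 2 is flagged, since it is the only action that trails the greedy choice by more than the margin in both tasks. Actions that are poor in one task but optimal in another stay available, which mirrors the abstract's emphasis on actions that are undesirable consistently across tasks.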
