Learning compositionally through attentive guidance

Paper and Code

Sep 10, 2018

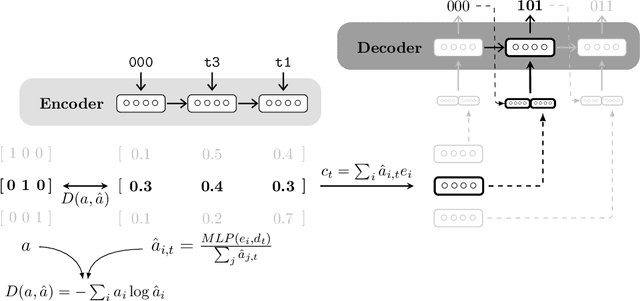

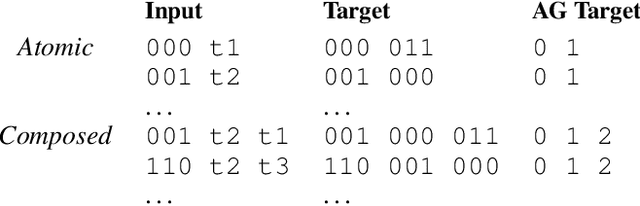

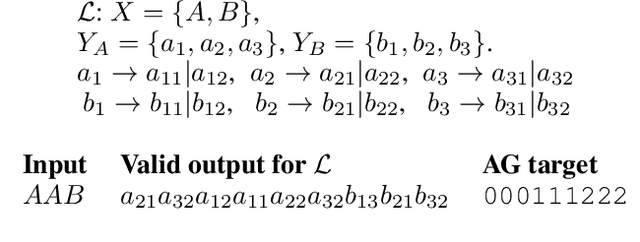

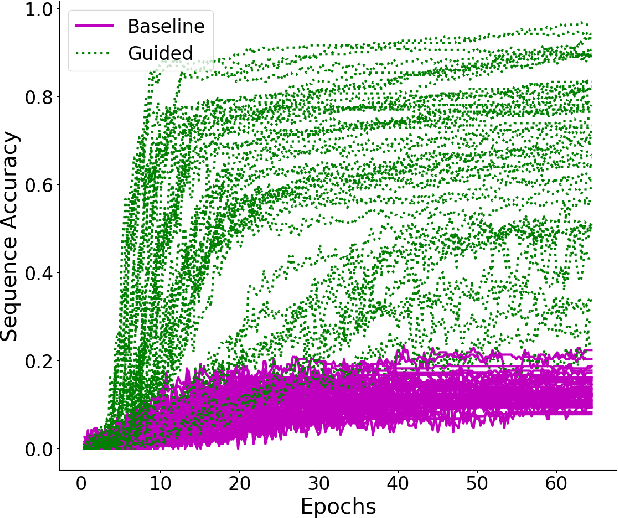

While neural network models have been successfully applied to domains that require substantial generalisation skills, recent studies have implied that they struggle when solving the task they are trained on requires inferring its underlying compositional structure. In this paper, we introduce Attentive Guidance, a mechanism to direct a sequence to sequence model equipped with attention to find more compositional solutions. We test it on two tasks, devised precisely to assess the compositional capabilities of neural models, and we show that vanilla sequence to sequence models with attention overfit the training distribution, while the guided versions come up with compositional solutions that fit the training and testing distributions almost equally well. Moreover, the learned solutions generalise even in cases where the training and testing distributions strongly diverge. In this way, we demonstrate that sequence to sequence models are capable of finding compositional solutions without requiring extra components. These results helps to disentangle the causes for the lack of systematic compositionality in neural networks, which can in turn fuel future work.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge